moftransformer

Universal Transfer Learning in Porous Materials, including MOFs.

Science Score: 49.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ○ CITATION.cff file

- ✓ codemeta.json file: found codemeta.json file

- ✓ .zenodo.json file: found .zenodo.json file

- ✓ DOI references: found 6 DOI reference(s) in README

- ✓ Academic publication links: links to nature.com

- ○ Committers with academic emails

- ○ Institutional organization owner

- ○ JOSS paper metadata

- ○ Scientific vocabulary similarity: low similarity (14.0%) to scientific vocabulary

Repository


Basic Info

- Host: GitHub

- Owner: hspark1212

- Language: Python

- Default Branch: master

- Homepage: https://hspark1212.github.io/MOFTransformer/

- Size: 1010 MB

Statistics

- Stars: 108

- Watchers: 4

- Forks: 16

- Open Issues: 2

- Releases: 18

Metadata Files

README.md

PMTransformer (MOFTransformer)

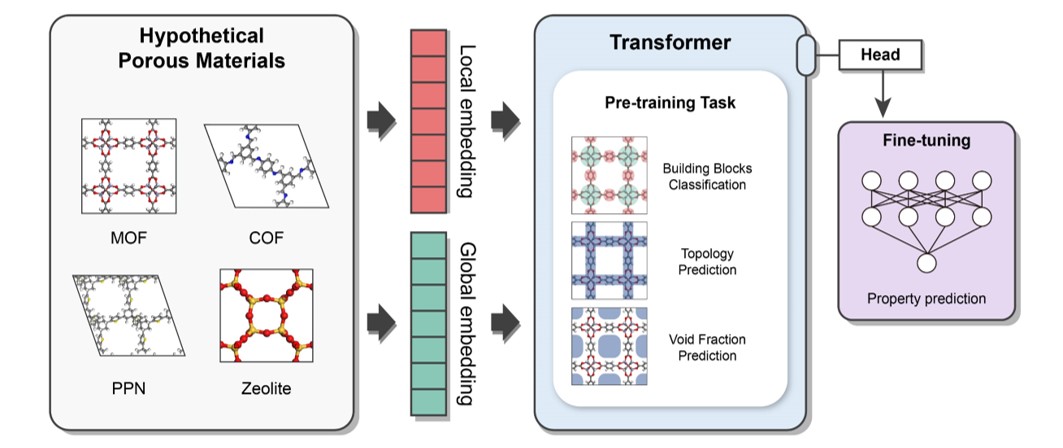

This package provides a universal transfer learning model, PMTransformer (Porous Materials Transformer), which achieves state-of-the-art performance in predicting various properties of porous materials. PMTransformer was pre-trained with 1.9 million hypothetical porous materials, including Metal-Organic Frameworks (MOFs), Covalent-Organic Frameworks (COFs), Porous Polymer Networks (PPNs), and zeolites. By fine-tuning the pre-trained PMTransformer, you can easily obtain machine learning models that accurately predict various properties of porous materials.

NOTE: From version 2.0.0, the default pre-training model has been changed from MOFTransformer to PMTransformer, which was pre-trained with a larger dataset, containing other porous materials as well as MOFs. The PMTransformer outperforms the MOFTransformer in predicting various properties of porous materials.

Release Note

Version: 2.2.0

MOFTransformer now supports multi-task learning (see multi-task learning).

Install

Dependencies

NOTE: This package is primarily tested on Linux. We strongly recommend using Linux for the installation.

python>=3.8

Because MOFTransformer is based on PyTorch, please install PyTorch (>= 1.12.0) in a build that matches your environment.
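Before installing, it can help to confirm that the interpreter and any existing PyTorch build meet the minimum versions listed above. The following is a minimal sketch and not part of the package: `meets_minimum` is a hypothetical helper, and its parsing only handles plain dotted versions (local build suffixes such as `+cu118` are dropped).

```python
import importlib.util
import re
import sys


def meets_minimum(version, minimum):
    """Return True if a dotted version string is >= the minimum tuple."""
    # Drop local build suffixes such as "+cu118" before parsing.
    core = str(version).split("+")[0]
    parts = []
    for token in core.split(".")[:len(minimum)]:
        # Keep only the leading digits, so "0rc1" is read as 0.
        match = re.match(r"\d+", token)
        parts.append(int(match.group()) if match else 0)
    # Pad short versions such as "2" up to the length of the minimum.
    parts += [0] * (len(minimum) - len(parts))
    return tuple(parts) >= tuple(minimum)


print(f"Python >= 3.8: {sys.version_info >= (3, 8)}")

# Only import torch if it is actually installed.
if importlib.util.find_spec("torch") is not None:
    import torch
    print(f"PyTorch >= 1.12.0: {meets_minimum(torch.__version__, (1, 12, 0))}")
else:
    print("PyTorch is not installed yet.")
```

If the PyTorch check prints `False`, upgrade PyTorch first and then install the package.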

Installation using PIP

$ pip install moftransformer

Download the pretrained models (ckpt file)

- You can download the pretrained models (`PMTransformer.ckpt` and `MOFTransformer.ckpt`) via figshare,

or you can download with a command line:

$ moftransformer download pretrain_model

(Optional) Download pre-embeddings for CoREMOF, QMOF

- We provide the pre-embeddings (i.e., atom-based graph embeddings and energy-grid embeddings), which are the inputs of PMTransformer, for the CoREMOF and QMOF databases.

$ moftransformer download coremof

$ moftransformer download qmof

Getting Started

- Install GRIDAY to calculate energy grids from CIF files.

$ moftransformer install-griday

- Run `prepare_data`.

```python
from moftransformer.examples import example_path
from moftransformer.utils import prepare_data

# Get example path
root_cifs = example_path['root_cif']
root_dataset = example_path['root_dataset']
downstream = example_path['downstream']

train_fraction = 0.8  # default value
test_fraction = 0.1  # default value

# Run prepare data
prepare_data(root_cifs, root_dataset, downstream=downstream,
             train_fraction=train_fraction, test_fraction=test_fraction)
```
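To illustrate what the two fractions imply, here is a minimal sketch of a fraction-based split. It assumes that whatever is left after the train and test fractions (0.1 with the defaults) is used as a validation set; that is an assumption, and the package's own splitting logic may differ. `split_names` and the structure names are hypothetical.

```python
import random


def split_names(names, train_fraction=0.8, test_fraction=0.1, seed=42):
    """Shuffle names and split them into train/validation/test lists."""
    if not 0 <= train_fraction + test_fraction <= 1:
        raise ValueError("train_fraction + test_fraction must be in [0, 1]")
    shuffled = list(names)
    random.Random(seed).shuffle(shuffled)  # reproducible shuffle
    n_train = int(len(shuffled) * train_fraction)
    n_test = int(len(shuffled) * test_fraction)
    train = shuffled[:n_train]
    test = shuffled[n_train:n_train + n_test]
    val = shuffled[n_train + n_test:]  # remainder (assumed validation set)
    return train, val, test


# Hypothetical structure names, for illustration only.
names = [f"mof_{i}" for i in range(100)]
train, val, test = split_names(names)
print(len(train), len(val), len(test))
```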

- Fine-tune the pretrained MOFTransformer.

```python
import moftransformer
from moftransformer.examples import example_path

# data root and downstream from example
root_dataset = example_path['root_dataset']
downstream = example_path['downstream']
log_dir = './logs/'
# load_path = "pmtransformer" (default)

# kwargs (optional)
max_epochs = 10
batch_size = 8
mean = 0
std = 1

moftransformer.run(root_dataset, downstream, log_dir=log_dir,
                   max_epochs=max_epochs, batch_size=batch_size,
                   mean=mean, std=std)
```
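The fine-tuning example passes `mean = 0` and `std = 1`. Assuming these act as normalization constants for the regression target (an assumption about the package that this README does not confirm), one might compute them from the training targets. The sketch below uses only the standard library; `target_stats` and the target values are hypothetical.

```python
import statistics


def target_stats(targets):
    """Return (mean, std) of the target values in a name -> value mapping."""
    values = list(targets.values())
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)  # population standard deviation
    return mean, std


# Hypothetical training targets keyed by CIF name (values are made up).
train_targets = {"mof_a": 1.0, "mof_b": 2.0, "mof_c": 3.0, "mof_d": 4.0}
mean, std = target_stats(train_targets)
print(f"mean={mean:.3f}, std={std:.3f}")
```

If the constants are used this way, the same `mean` and `std` would need to be passed again when testing and predicting, so that outputs are mapped back to the original scale consistently.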

- Test the fine-tuned model.

```python
from pathlib import Path
import moftransformer
from moftransformer.examples import example_path

root_dataset = example_path['root_dataset']
downstream = example_path['downstream']

# Get ckpt file
log_dir = './logs/'  # same directory used for training
seed = 0  # default seed
version = 0  # version for model. It increases with the number of training runs

# For version > 2.1.1, best.ckpt exists
checkpoint = 'best'  # Epoch where the model is stored.
save_dir = 'result/'

# optional keyword
mean = 0
std = 1

load_path = Path(log_dir) / f'pretrained_mof_seed{seed}_from_pmtransformer/version_{version}/checkpoints/{checkpoint}.ckpt'

if not load_path.exists():
    raise ValueError(f'load_path does not exist. Check path for .ckpt file : {load_path}')

moftransformer.test(root_dataset, load_path, downstream=downstream,
                    save_dir=save_dir, mean=mean, std=std)
```

- Predict from the fine-tuned model.

```python
from pathlib import Path
import moftransformer
from moftransformer.examples import example_path

root_dataset = example_path['root_dataset']
downstream = example_path['downstream']

# Get ckpt file
log_dir = './logs/'  # same directory used for training
seed = 0  # default seed
version = 0  # version for model. It increases with the number of training runs
checkpoint = 'best'  # Epoch where the model is stored.
mean = 0
std = 1

load_path = Path(log_dir) / f'pretrained_mof_seed{seed}_from_pmtransformer/version_{version}/checkpoints/{checkpoint}.ckpt'

if not load_path.exists():
    raise ValueError(f'load_path does not exist. Check path for .ckpt file : {load_path}')

moftransformer.predict(
    root_dataset, load_path=load_path, downstream=downstream,
    split='all', mean=mean, std=std
)
```
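Both the test and predict steps build the same checkpoint path by hand. A small helper can keep that logic in one place. This is a sketch that assumes the layout `pretrained_mof_seed{seed}_from_pmtransformer/version_{version}/checkpoints/{checkpoint}.ckpt` used in the examples; `checkpoint_path` and `latest_version` are hypothetical names, not part of the package.

```python
from pathlib import Path


def run_directory(log_dir, seed=0):
    """Directory holding all versions for one seed (layout assumed from the examples)."""
    return Path(log_dir) / f"pretrained_mof_seed{seed}_from_pmtransformer"


def checkpoint_path(log_dir, seed=0, version=0, checkpoint="best"):
    """Build the path to a checkpoint file for a given seed and version."""
    return (run_directory(log_dir, seed) / f"version_{version}"
            / "checkpoints" / f"{checkpoint}.ckpt")


def latest_version(log_dir, seed=0):
    """Return the highest version number found on disk, or None if there is none."""
    versions = []
    for path in run_directory(log_dir, seed).glob("version_*"):
        suffix = path.name.split("_", 1)[1]
        if path.is_dir() and suffix.isdigit():
            versions.append(int(suffix))
    return max(versions, default=None)


print(checkpoint_path("./logs/", seed=0, version=0))
```

Since the version number increases with each training run, `latest_version` can be used to pick the most recent run instead of hard-coding `version = 0`.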

- Visualize the feature importance analysis for the fine-tuned model. (You should download or train a fine-tuned model before visualization.)

```python
from moftransformer.visualize import PatchVisualizer
from moftransformer.examples import visualize_example_path

model_path = "examples/finetuned_bandgap.ckpt"  # or 'examples/finetuned_h2_uptake.ckpt'
data_path = visualize_example_path
cifname = 'MIBQAR01_FSR'

vis = PatchVisualizer.from_cifname(cifname, model_path, data_path)
vis.draw_graph()
```

Architecture

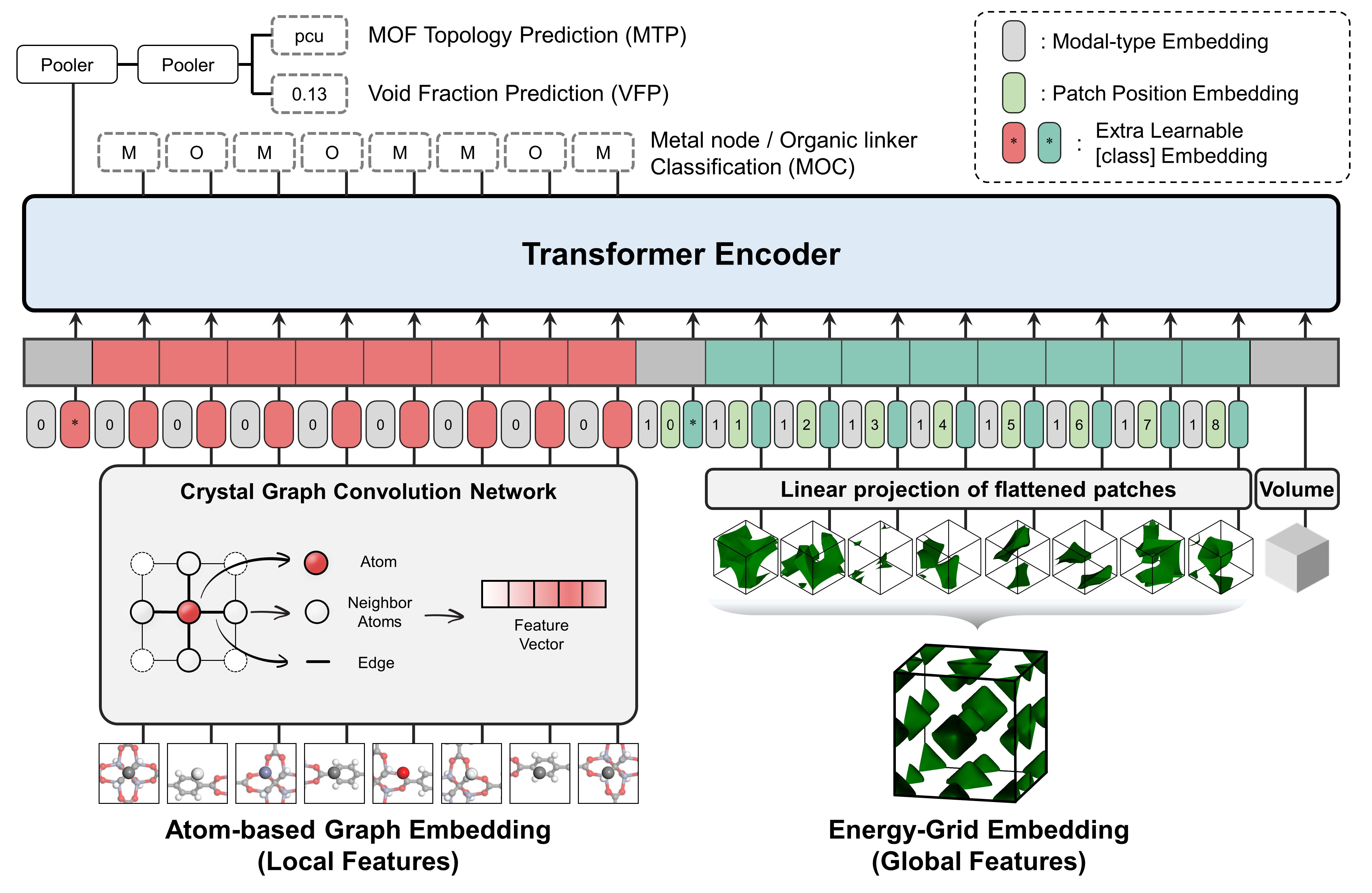

It is a multi-modal pre-training Transformer encoder designed to capture both local and global features of porous materials.

The pre-training tasks are as follows:

1. Topology Prediction
2. Void Fraction Prediction
3. Building Block Classification

It takes two different representations as input:

- Atom-based Graph Embedding: CGCNN without pooling layer -> local features
- Energy-grid Embedding: 1D flattened patches of 3D energy grid -> global features
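To make "1D flattened patches of a 3D energy grid" concrete, the following sketch cuts a cubic grid into non-overlapping cubic patches and flattens each one into a vector. It uses NumPy only; `patchify_3d`, the grid size, and the patch size are illustrative assumptions and are not taken from the package's implementation.

```python
import numpy as np


def patchify_3d(grid, patch_size):
    """Split a cubic 3D grid into flattened, non-overlapping cubic patches.

    Returns an array of shape (n_patches, patch_size ** 3).
    """
    if grid.ndim != 3 or len(set(grid.shape)) != 1:
        raise ValueError("grid must be a cubic 3D array")
    side = grid.shape[0]
    if side % patch_size != 0:
        raise ValueError("grid side must be divisible by patch_size")
    n = side // patch_size
    # Split every axis into (patch index, offset within the patch).
    blocks = grid.reshape(n, patch_size, n, patch_size, n, patch_size)
    # Bring the three patch indices to the front, the three offsets to the back.
    blocks = blocks.transpose(0, 2, 4, 1, 3, 5)
    return blocks.reshape(n ** 3, patch_size ** 3)


# Toy 6 x 6 x 6 grid with patch size 3 (sizes chosen only for illustration).
grid = np.arange(6 * 6 * 6, dtype=float).reshape(6, 6, 6)
patches = patchify_3d(grid, patch_size=3)
print(patches.shape)
```

Each row of `patches` then plays the role of one token in the Transformer input sequence, analogous to image patches in a vision Transformer.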

Feature Importance Analysis

You can easily visualize the feature importance analysis of atom-based graph embeddings and energy-grid embeddings.

```python
# Jupyter magic for interactive figures; run this in a notebook
%matplotlib widget
from moftransformer.visualize import PatchVisualizer

model_path = "examples/finetuned_bandgap.ckpt"  # or 'examples/finetuned_h2_uptake.ckpt'
data_path = 'examples/visualize/dataset/'
cifname = 'MIBQAR01_FSR'

vis = PatchVisualizer.from_cifname(cifname, model_path, data_path)
vis.draw_graph()
```

```python
vis = PatchVisualizer.from_cifname(cifname, model_path, data_path)
vis.draw_grid()
```

Universal Transfer Learning

Comparison of mean absolute error (MAE) values for various baseline models, a model trained from scratch, MOFTransformer, and PMTransformer on different properties of MOFs, COFs, PPNs, and zeolites. The bold values indicate the lowest MAE value for each property. Further details can be found in the PMTransformer paper.

| Material | Property | Dataset size | Energy histogram | Descriptor-based ML | CGCNN | Scratch | MOFTransformer | PMTransformer |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| MOF | H2 uptake (100 bar) | 20,000 | 9.183 | 9.456 | 32.864 | 7.018 | 6.377 | **5.963** |
| MOF | H2 diffusivity (dilute) | 20,000 | 0.644 | 0.398 | 0.6600 | 0.391 | 0.367 | **0.366** |
| MOF | Band-gap | 20,373 | 0.913 | 0.590 | 0.290 | 0.271 | 0.224 | **0.216** |
| MOF | N2 uptake (1 bar) | 5,286 | 0.178 | 0.115 | 0.108 | 0.102 | 0.071 | **0.069** |
| MOF | O2 uptake (1 bar) | 5,286 | 0.162 | 0.076 | 0.083 | 0.071 | **0.051** | 0.053 |
| MOF | N2 diffusivity (1 bar) | 5,286 | 7.82e-5 | 5.22e-5 | 7.19e-5 | 5.82e-05 | **4.52e-05** | 4.53e-05 |
| MOF | O2 diffusivity (1 bar) | 5,286 | 7.14e-5 | 4.59e-5 | 6.56e-5 | 5.00e-05 | 4.04e-05 | **3.99e-05** |
| MOF | CO2 Henry coefficient | 8,183 | 0.737 | 0.468 | 0.426 | 0.362 | 0.295 | **0.288** |
| MOF | Thermal stability | 3,098 | 68.74 | 49.27 | 52.38 | 52.557 | 45.875 | **45.766** |
| COF | CH4 uptake (65 bar) | 39,304 | 5.588 | 4.630 | 15.31 | 2.883 | 2.268 | **2.126** |
| COF | CH4 uptake (5.8 bar) | 39,304 | 3.444 | 1.853 | 5.620 | 1.255 | **0.999** | 1.009 |
| COF | CO2 heat of adsorption | 39,304 | 2.101 | 1.341 | 1.846 | 1.058 | 0.874 | **0.842** |
| COF | CO2 log KH | 39,304 | 0.242 | 0.169 | 0.238 | 0.134 | 0.108 | **0.103** |
| PPN | CH4 uptake (65 bar) | 17,870 | 6.260 | 4.233 | 9.731 | 3.748 | 3.187 | **2.995** |
| PPN | CH4 uptake (1 bar) | 17,870 | 1.356 | 0.563 | 1.525 | 0.602 | 0.493 | **0.461** |
| Zeolite | CH4 KH (unitless) | 99,204 | 8.032 | 6.268 | 6.334 | 4.286 | 4.103 | **3.998** |
| Zeolite | CH4 heat of adsorption | 99,204 | 1.612 | 1.033 | 1.603 | 0.670 | 0.647 | **0.639** |
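For readers who want to relate these numbers to each other, the sketch below shows how an MAE is computed and how a relative reduction between two columns can be derived. The helper names are hypothetical; the two MAE values are copied from the H2 uptake (100 bar) row of the table.

```python
def mean_absolute_error(y_true, y_pred):
    """Average of |true - predicted| over all samples."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def relative_reduction(baseline_mae, model_mae):
    """Fraction by which model_mae is lower than baseline_mae."""
    return (baseline_mae - model_mae) / baseline_mae


# MAE values from the H2 uptake (100 bar) row: Scratch vs. PMTransformer.
scratch_mae, pmtransformer_mae = 7.018, 5.963
print(f"{relative_reduction(scratch_mae, pmtransformer_mae):.1%}")
```

For this row the reduction relative to training from scratch is about 15%. The same calculation applies to any other pair of columns.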

Citation

If you want to cite PMTransformer or MOFTransformer, please refer to the following papers:

1. A multi-modal pre-training transformer for universal transfer learning in metal-organic frameworks, Nature Machine Intelligence, 5, 2023.

2. Enhancing Structure-Property Relationships in Porous Materials through Transfer Learning and Cross-Material Few-Shot Learning, ACS Appl. Mater. Interfaces 2023, 15, 48, 56375-56385.

Contributing

Contributions are welcome! If you have any suggestions or find any issues, please open an issue or a pull request.

License

This project is licensed under the MIT License. See the LICENSE file for more information.

Owner

- Name: Hyunsoo Park

- Login: hspark1212

- Kind: user

- Website: https://hspark1212.github.io/

- Twitter: hspark1212

- Repositories: 4

- Profile: https://github.com/hspark1212

Materials.AI | Ph.D. Candidate at KAIST

GitHub Events

Total

- Issues event: 15

- Watch event: 21

- Issue comment event: 23

- Fork event: 5

Last Year

- Issues event: 15

- Watch event: 21

- Issue comment event: 23

- Fork event: 5

Committers

Last synced: about 3 years ago

All Time

- Total Commits: 229

- Total Committers: 4

- Avg Commits per committer: 57.25

- Development Distribution Score (DDS): 0.332

Top Committers

| Name | Email | Commits |
| --- | --- | --- |
| Hyunsoo Park | p****8@g****m | 153 |
| Yeonghun | d****5@k****r | 59 |
| hspark92 | 6****2@u****m | 12 |
| Yeonghun1675 | 6****5@u****m | 5 |


Issues and Pull Requests

Last synced: 7 months ago

All Time

- Total issues: 45

- Total pull requests: 112

- Average time to close issues: 11 days

- Average time to close pull requests: about 9 hours

- Total issue authors: 24

- Total pull request authors: 3

- Average comments per issue: 2.89

- Average comments per pull request: 0.06

- Merged pull requests: 104

- Bot issues: 0

- Bot pull requests: 0

Past Year

- Issues: 9

- Pull requests: 0

- Average time to close issues: 7 days

- Average time to close pull requests: N/A

- Issue authors: 5

- Pull request authors: 0

- Average comments per issue: 2.22

- Average comments per pull request: 0

- Merged pull requests: 0

- Bot issues: 0

- Bot pull requests: 0

Top Authors

Issue Authors

- gianmarco-terrones (6)

- chenyuaner (6)

- MINGUUUS (3)

- kaljamal (2)

- adosar (2)

- paliwalpiyush151 (2)

- FMcil (2)

- jiali1025 (1)

- 872280516zyF (1)

- hrxia (1)

- shanniruo-tytx (1)

- tyvanzou (1)

- iawad (1)

- Thomaswbt (1)

- arosen93 (1)

Pull Request Authors

- hspark1212 (59)

- Yeonghun1675 (53)

- Mirual (1)


Dependencies

- ase >=3.22.1

- einops >=0.4.1

- ipympl >=0.9.2

- livereload *

- matplotlib >=3.5.0

- myst-parser *

- pandas *

- pymatgen >=2022.0.16

- pytorch-lightning ==1.6.0

- sacred >=0.8.2

- seaborn >=0.12.0

- sphinx *

- timm >=0.4.12

- torchmetrics >=0.6.0

- tqdm *

- transformers >=4.12.5

- actions/checkout v2 composite

- actions/setup-python v2 composite

- peaceiris/actions-gh-pages v3 composite