chgnet

Pretrained universal neural network potential for charge-informed atomistic modeling https://chgnet.lbl.gov

Science Score: 75.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ✓ CITATION.cff file: Found CITATION.cff file
- ✓ codemeta.json file: Found codemeta.json file
- ✓ .zenodo.json file: Found .zenodo.json file
- ✓ DOI references: Found 1 DOI reference(s) in README
- ✓ Academic publication links: Links to arxiv.org, nature.com
- ○ Academic email domains
- ✓ Institutional organization owner: Organization cedergrouphub has institutional domain (ceder.berkeley.edu)
- ○ JOSS paper metadata
- ○ Scientific vocabulary similarity: Low similarity (8.6%) to scientific vocabulary


Repository


Basic Info

- Host: GitHub

- Owner: CederGroupHub

- License: other

- Language: Python

- Default Branch: main

- Homepage: https://doi.org/10.1038/s42256-023-00716-3

- Size: 13.1 MB

Statistics

- Stars: 322

- Watchers: 7

- Forks: 85

- Open Issues: 4

- Releases: 19


Metadata Files

README.md

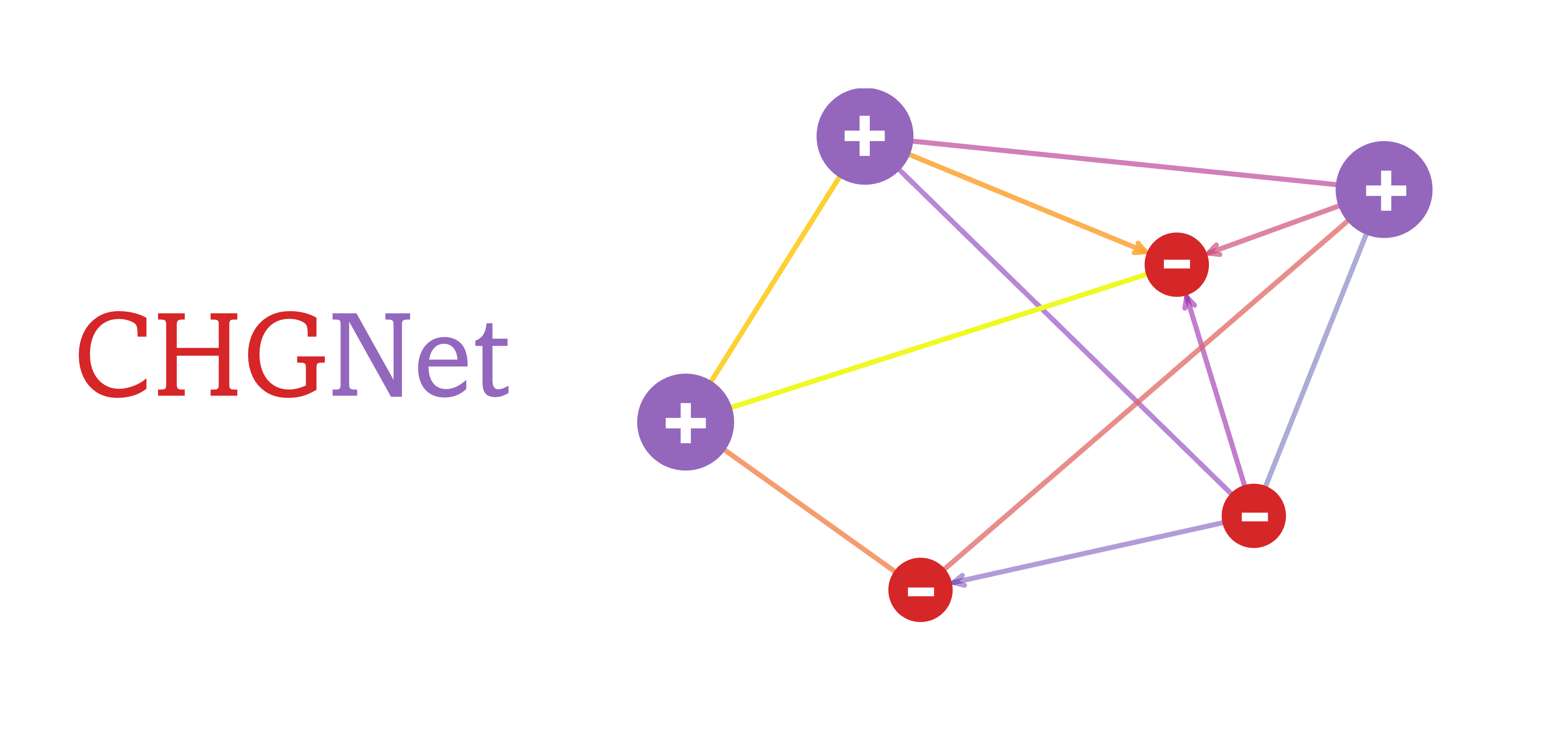

CHGNet


A pretrained universal neural network potential for charge-informed atomistic modeling (see publication)

The Crystal Hamiltonian Graph neural Network (CHGNet) is pretrained on the GGA/GGA+U static and relaxation trajectories from Materials Project, a comprehensive dataset consisting of more than 1.5 million structures from 146k compounds spanning the whole periodic table.


CHGNet's distinguishing feature is its ability to model electron interactions and charge distribution in atomistic simulations with near-DFT accuracy. Charge inference is realized by regularizing the atom features with DFT magnetic moments, which carry rich information about both local ionic environments and charge distribution.

Pretrained CHGNet achieves excellent performance on materials stability prediction from unrelaxed structures according to Matbench Discovery [repo].

Example notebooks

| Notebooks | Google Colab | Descriptions |
| --- | --- | --- |
| CHGNet Basics | | Examples for loading pre-trained CHGNet, predicting energy, force, stress, magmom as well as running structure optimization and MD. |
| Tuning CHGNet | | Examples of fine tuning the pretrained CHGNet to your system of interest. |
| Visualize Relaxation | | Crystal Toolkit app that visualizes convergence of atom positions, energies and forces of a structure during CHGNet relaxation. |
| Phonon DOS + Bands | | Use CHGNet with the atomate2 phonon workflow based on finite displacements as implemented in Phonopy to calculate phonon density of states and band structure for Si (mp-149). |
| Elastic tensor + bulk/shear modulus | | Use CHGNet with the atomate2 elastic workflow based on a stress-strain approach to calculate elastic tensor and derived bulk and shear modulus for Si (mp-149). |

Installation

```sh
pip install chgnet
```

If PyPI installation fails or you need the latest main branch commits, you can install from source:

```sh
pip install git+https://github.com/CederGroupHub/chgnet
```

Tutorials and Docs

See the sciML webinar tutorial on 2023-11-02 and API docs.

Usage

Direct Inference (Static Calculation)

Pretrained CHGNet can predict the energy (eV/atom), force (eV/Å), stress (GPa) and magmom ($\mu_B$) of a given structure.

```python
from chgnet.model.model import CHGNet
from pymatgen.core import Structure

chgnet = CHGNet.load()
structure = Structure.from_file("examples/mp-18767-LiMnO2.cif")
prediction = chgnet.predict_structure(structure)

# prediction[key[0]] looks up each property by the first letter of its name
for key, unit in [
    ("energy", "eV/atom"),
    ("forces", "eV/A"),
    ("stress", "GPa"),
    ("magmom", "mu_B"),
]:
    print(f"CHGNet-predicted {key} ({unit}):\n{prediction[key[0]]}\n")
```
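The loop above indexes the result with `key[0]`, which suggests the prediction dictionary is keyed by single letters (`e`, `f`, `s`, `m`). The sketch below illustrates that lookup pattern with a hand-written stand-in dictionary so it runs without CHGNet installed. The zero values are placeholders, not model output, and `describe` is a hypothetical helper rather than part of the CHGNet API.

```python
# Stand-in for the dictionary returned by predict_structure.
# All values are placeholders for illustration, not CHGNet predictions.
mock_prediction = {
    "e": 0.0,                            # energy
    "f": [[0.0, 0.0, 0.0]],              # forces, one row per atom
    "s": [[0.0] * 3 for _ in range(3)],  # 3x3 stress tensor
    "m": [0.0],                          # magnetic moment per atom
}

# Units as listed in the README text.
UNITS = {"energy": "eV/atom", "forces": "eV/A", "stress": "GPa", "magmom": "mu_B"}


def describe(prediction: dict) -> dict:
    """Map long property names to (value, unit) via the first-letter key."""
    return {name: (prediction[name[0]], unit) for name, unit in UNITS.items()}


summary = describe(mock_prediction)
for name, (value, unit) in summary.items():
    print(f"{name} ({unit}): {value}")
```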

Molecular Dynamics

Charge-informed molecular dynamics can be simulated with pretrained CHGNet through the ASE Python interface (see below) or through LAMMPS.

```python
import warnings

from chgnet.model.dynamics import MolecularDynamics
from chgnet.model.model import CHGNet
from pymatgen.core import Structure

warnings.filterwarnings("ignore", module="pymatgen")
warnings.filterwarnings("ignore", module="ase")

structure = Structure.from_file("examples/mp-18767-LiMnO2.cif")
chgnet = CHGNet.load()

md = MolecularDynamics(
    atoms=structure,
    model=chgnet,
    ensemble="nvt",
    temperature=1000,  # in K
    timestep=2,  # in femto-seconds
    trajectory="mdout.traj",
    logfile="mdout.log",
    loginterval=100,
)
md.run(50)  # run a 0.1 ps MD simulation
```
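The comment on `md.run(50)` follows from simple arithmetic: 50 steps at a 2 fs timestep cover 100 fs, i.e. 0.1 ps. The helpers below are a minimal sketch for converting between step counts and simulated time when planning a run; `md_duration_ps` and `md_steps` are hypothetical names, not CHGNet functions.

```python
def md_duration_ps(n_steps: int, timestep_fs: float) -> float:
    """Simulated time in picoseconds for n_steps at timestep_fs (1 ps = 1000 fs)."""
    return n_steps * timestep_fs / 1000.0


def md_steps(duration_ps: float, timestep_fs: float) -> int:
    """Number of steps needed to cover duration_ps, rounded to the nearest step."""
    if timestep_fs <= 0:
        raise ValueError("timestep_fs must be positive")
    return round(duration_ps * 1000.0 / timestep_fs)


print(md_duration_ps(50, 2))  # the README example: 50 steps of 2 fs
print(md_steps(1.0, 2))       # steps needed for 1 ps at the same timestep
```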

MD runs on CUDA by default if it is available. To set the device manually to cpu or mps, pass `use_device`, for example `MolecularDynamics(use_device='cpu')`.

MD outputs are saved to the ASE trajectory file. To visualize the MD trajectory and magnetic moments after the MD run:

```python
from ase.io.trajectory import Trajectory
from pymatgen.io.ase import AseAtomsAdaptor

from chgnet.utils import solve_charge_by_mag

traj = Trajectory("mdout.traj")
mag = traj[-1].get_magnetic_moments()

# get the non-charge-decorated structure
structure = AseAtomsAdaptor.get_structure(traj[-1])
print(structure)

# get the charge-decorated structure
struct_with_chg = solve_charge_by_mag(structure)
print(struct_with_chg)
```

To manipulate the MD trajectory, convert it to other data formats, calculate the mean square displacement, etc., refer to the ASE trajectory documentation.
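As an illustration of the mean square displacement mentioned above, here is a minimal pure-Python sketch. It assumes the positions have already been extracted from the trajectory into nested lists (with ASE, for instance, via `get_positions()` on each frame) and that they are unwrapped, meaning atoms do not jump across periodic boundaries. `mean_square_displacement` is a hypothetical helper and the toy coordinates are made up.

```python
def mean_square_displacement(frames):
    """MSD of every frame relative to the first frame.

    frames: list of frames, each a list of (x, y, z) positions, one per atom.
    Positions are assumed to be unwrapped (no periodic-boundary jumps).
    """
    reference = frames[0]
    n_atoms = len(reference)
    msd = []
    for frame in frames:
        total = 0.0
        for (x, y, z), (x0, y0, z0) in zip(frame, reference):
            total += (x - x0) ** 2 + (y - y0) ** 2 + (z - z0) ** 2
        msd.append(total / n_atoms)
    return msd


# Toy two-atom trajectory with made-up coordinates.
toy_frames = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(1.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(2.0, 0.0, 0.0), (1.0, 2.0, 0.0)],
]
print(mean_square_displacement(toy_frames))  # prints [0.0, 0.5, 4.0]
```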

Structure Optimization

CHGNet can perform fast structure optimization and provide site-wise magnetic moments. This makes it ideal for pre-relaxation and MAGMOM initialization in spin-polarized DFT.

```python
from chgnet.model import StructOptimizer

relaxer = StructOptimizer()
# `structure` is a pymatgen Structure, e.g. one loaded with Structure.from_file
result = relaxer.relax(structure)
print("CHGNet relaxed structure", result["final_structure"])
print("relaxed total energy in eV:", result["trajectory"].energies[-1])
```
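For the MAGMOM initialization use case, the predicted site-wise moments eventually need to be written as a VASP `MAGMOM` line, which lists one value per atom separated by spaces. The sketch below assumes the moments have already been pulled out into a plain list of floats in site order; how they are stored on the relaxed structure is not shown here. `format_magmom_tag` is a hypothetical helper and the example moments are made up.

```python
def format_magmom_tag(magmoms, precision=3):
    """Format per-site magnetic moments as a VASP INCAR MAGMOM line."""
    values = " ".join(f"{m:.{precision}f}" for m in magmoms)
    return f"MAGMOM = {values}"


# Made-up moments for illustration only (not CHGNet output).
example_magmoms = [3.9, 0.0, 0.05]
print(format_magmom_tag(example_magmoms, precision=2))
# prints: MAGMOM = 3.90 0.00 0.05
```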

Available Weights

CHGNet 0.3.0 is released with new pretrained weights! (release date: 10/22/23)

`CHGNet.load()` now loads 0.3.0 by default; the previous 0.2.0 version can be loaded with `CHGNet.load('0.2.0')`.

Model Training / Fine-tune

Fine-tuning will help achieve better accuracy if a high-precision study is desired. To train or tune a CHGNet, you need to define your data in a PyTorch Dataset object. Example dataset classes are provided in `data/dataset.py`.

```python
from chgnet.data.dataset import StructureData, get_train_val_test_loader
from chgnet.trainer import Trainer

dataset = StructureData(
    structures=list_of_structures,
    energies=list_of_energies,
    forces=list_of_forces,
    stresses=list_of_stresses,
    magmoms=list_of_magmoms,
)
train_loader, val_loader, test_loader = get_train_val_test_loader(
    dataset, batch_size=32, train_ratio=0.9, val_ratio=0.05
)
trainer = Trainer(
    model=chgnet,
    targets="efsm",
    optimizer="Adam",
    criterion="MSE",
    learning_rate=1e-2,
    epochs=50,
    use_device="cuda",
)

trainer.train(train_loader, val_loader, test_loader)
```
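With `train_ratio=0.9` and `val_ratio=0.05` as in the example above, the remaining 5% of the data would be left for the test loader. The sketch below shows how such ratios translate into sample counts. The truncating rounding and the rule that the test set takes the remainder are assumptions made for illustration, not necessarily what the loader function does internally, and `split_sizes` is a hypothetical helper.

```python
def split_sizes(n_samples: int, train_ratio: float, val_ratio: float):
    """Return (n_train, n_val, n_test); the test set takes whatever remains."""
    if train_ratio < 0 or val_ratio < 0 or train_ratio + val_ratio > 1:
        raise ValueError("ratios must be non-negative and sum to at most 1")
    n_train = int(n_samples * train_ratio)  # assumption: truncate toward zero
    n_val = int(n_samples * val_ratio)
    n_test = n_samples - n_train - n_val
    return n_train, n_val, n_test


print(split_sizes(1000, 0.9, 0.05))  # prints (900, 50, 50)
```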

Notes for Training

Check the fine-tuning example notebook.

- The target quantity used for training should be energy/atom (not total energy) if you're fine-tuning the pretrained `CHGNet`.
- The pretrained dataset of `CHGNet` comes from GGA+U DFT with `MaterialsProject2020Compatibility` corrections applied. The parameter for VASP is described in `MPRelaxSet`. If you're fine-tuning with `MPRelaxSet`, it is recommended to apply the `MP2020` compatibility to your energy labels so that they're consistent with the pretrained dataset.
- If you're fine-tuning to functionals other than GGA, we recommend you refit the `AtomRef`.
- `CHGNet` stress is in units of GPa, and the unit conversion has already been included in `dataset.py`, so `VASP` stress can be directly fed to `StructureData`.
- To save time from the graph conversion step for each training, we recommend you use `GraphData` defined in `dataset.py`, which reads graphs directly from a saved directory. To create saved graphs, see `examples/make_graphs.py`.
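The first note is easy to get wrong when labels come straight from DFT outputs that report total energies. A minimal sketch of the conversion to energy per atom follows; `energies_per_atom` is a hypothetical helper and the numbers in the example are made up.

```python
def energies_per_atom(total_energies, atom_counts):
    """Convert total energies (eV) to energy per atom (eV/atom)."""
    if len(total_energies) != len(atom_counts):
        raise ValueError("need exactly one atom count per energy")
    return [energy / n_atoms for energy, n_atoms in zip(total_energies, atom_counts)]


# Made-up totals for a 2-atom and a 5-atom cell.
print(energies_per_atom([-10.0, -30.0], [2, 5]))  # prints [-5.0, -6.0]
```

When the structures are pymatgen `Structure` objects, `len(structure)` gives the number of sites and can serve as the atom count.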

MPtrj Dataset

The Materials Project trajectory (MPtrj) dataset used to pretrain CHGNet is available at figshare.

The MPtrj dataset consists of all the GGA/GGA+U DFT calculations from the September 2022 Materials Project. By using the MPtrj dataset, users agree to abide by the Materials Project terms of use.

Reference

If you use CHGNet or the MPtrj dataset, please cite this paper:

```bib
@article{deng_2023_chgnet,
    title={CHGNet as a pretrained universal neural network potential for charge-informed atomistic modelling},
    DOI={10.1038/s42256-023-00716-3},
    journal={Nature Machine Intelligence},
    author={Deng, Bowen and Zhong, Peichen and Jun, KyuJung and Riebesell, Janosh and Han, Kevin and Bartel, Christopher J. and Ceder, Gerbrand},
    year={2023},
    pages={1–11}
}
```

Development & Bugs

CHGNet is under active development. If you encounter any bugs in installation or usage, please open an issue. We appreciate your contributions!

Owner

- Name: Ceder Group

- Login: CederGroupHub

- Kind: organization

- Website: http://ceder.berkeley.edu/

- Repositories: 19

- Profile: https://github.com/CederGroupHub

Citation (citation.cff)

```yaml
cff-version: 1.2.0
message: If you use this software, please cite it as below.
title: CHGNet as a pretrained universal neural network potential for charge-informed atomistic modelling
authors:
  - family-names: Deng
    given-names: Bowen
  - family-names: Zhong
    given-names: Peichen
  - family-names: Jun
    given-names: KyuJung
  - family-names: Riebesell
    given-names: Janosh
  - family-names: Han
    given-names: Kevin
  - family-names: Bartel
    given-names: Christopher J.
  - family-names: Ceder
    given-names: Gerbrand
date-released: 2023-02-24
repository-code: https://github.com/CederGroupHub/chgnet
arxiv: https://arxiv.org/abs/2302.14231
doi: 10.1038/s42256-023-00716-3
url: https://chgnet.lbl.gov
type: software
keywords:
  [machine learning, neural network, potential, charge, molecular dynamics]
version: 0.2.0 # replace with the version you use
journal: Nature Machine Intelligence
```

GitHub Events

Total

- Create event: 1

- Commit comment event: 2

- Issues event: 24

- Watch event: 82

- Delete event: 1

- Issue comment event: 25

- Push event: 15

- Pull request review event: 1

- Pull request event: 5

- Fork event: 24

Last Year

- Create event: 1

- Commit comment event: 2

- Issues event: 24

- Watch event: 82

- Delete event: 1

- Issue comment event: 25

- Push event: 15

- Pull request review event: 1

- Pull request event: 5

- Fork event: 24

Issues and Pull Requests

Last synced: 9 months ago

All Time

- Total issues: 68

- Total pull requests: 96

- Average time to close issues: 8 days

- Average time to close pull requests: 2 days

- Total issue authors: 51

- Total pull request authors: 9

- Average comments per issue: 2.9

- Average comments per pull request: 0.98

- Merged pull requests: 91

- Bot issues: 0

- Bot pull requests: 0

Past Year

- Issues: 17

- Pull requests: 7

- Average time to close issues: 14 days

- Average time to close pull requests: 4 days

- Issue authors: 15

- Pull request authors: 4

- Average comments per issue: 1.24

- Average comments per pull request: 2.57

- Merged pull requests: 5

- Bot issues: 0

- Bot pull requests: 0

Top Authors

Issue Authors

- M9JS (3)

- Atefeh-Yadegarifard (3)

- Yong-Q (3)

- janosh (3)

- bkmi (3)

- ajhoffman1229 (2)

- Turningl (2)

- mwolinska (2)

- msehabibur (2)

- chiang-yuan (2)

- shuix007 (1)

- ElliottKasoar (1)

- RylieWeaver (1)

- panmianzhi (1)

- zhiminzhang0830 (1)

Pull Request Authors

- janosh (89)

- DanielYang59 (8)

- lbluque (6)

- tsihyoung (6)

- AegisIK (5)

- Andrew-S-Rosen (2)

- BassemSboui (2)

- millet-brew (2)

- zhongpc (1)

- ajhoffman1229 (1)

- BowenD-UCB (1)


Packages

- Total packages: 3
- Total downloads: pypi 17,228 last-month
- Total dependent packages: 8 (may contain duplicates)
- Total dependent repositories: 2 (may contain duplicates)
- Total versions: 41
- Total maintainers: 1

proxy.golang.org: github.com/CederGroupHub/chgnet

- Documentation: https://pkg.go.dev/github.com/CederGroupHub/chgnet#section-documentation

- License: other

- Latest release: v0.4.0 (published over 1 year ago)

proxy.golang.org: github.com/cedergrouphub/chgnet

- Documentation: https://pkg.go.dev/github.com/cedergrouphub/chgnet#section-documentation

- License: other

- Latest release: v0.4.0 (published over 1 year ago)

pypi.org: chgnet

Pretrained Universal Neural Network Potential for Charge-informed Atomistic Modeling

- Documentation: https://chgnet.readthedocs.io/

- License: Modified BSD

- Latest release: 0.4.0 (published over 1 year ago)

Maintainers (1)

Dependencies

- actions/checkout v4 composite

- actions/setup-python v4 composite

- actions/checkout v4 composite

- actions/download-artifact v3 composite

- actions/setup-python v4 composite

- actions/upload-artifact v3 composite

- codacy/codacy-coverage-reporter-action v1 composite

- pypa/cibuildwheel v2.15.0 composite

- pypa/gh-action-pypi-publish release/v1 composite

- @sveltejs/adapter-static ^2.0.3 development

- @sveltejs/kit ^1.25.0 development

- @sveltejs/vite-plugin-svelte ^2.4.6 development

- @typescript-eslint/eslint-plugin ^6.7.2 development

- @typescript-eslint/parser ^6.7.2 development

- eslint ^8.49.0 development

- eslint-plugin-svelte ^2.33.1 development

- hastscript ^8.0.0 development

- mdsvex ^0.11.0 development

- prettier ^3.0.3 development

- prettier-plugin-svelte ^3.0.3 development

- rehype-autolink-headings ^7.0.0 development

- rehype-slug ^6.0.0 development

- svelte ^4.2.0 development

- svelte-check ^3.5.1 development

- svelte-multiselect ^10.1.0 development

- svelte-preprocess ^5.0.4 development

- svelte-toc ^0.5.6 development

- svelte-zoo ^0.4.9 development

- svelte2tsx ^0.6.21 development

- tslib ^2.6.2 development

- typescript ^5.2.2 development

- vite ^4.4.9 development

- ase >=3.22.0

- cython >=0.29.26

- numpy >=1.21.6

- nvidia-ml-py3 >=7.352.0

- pymatgen >=2023.5.31

- torch >=1.11.0