https://github.com/beefathima-git/cloud-computing-for-real-time-surveillance-applications-

This project utilizes fog and cloud computing to enable low-latency, real-time surveillance by processing video and sensor data closer to the source. It improves responsiveness, reduces bandwidth usage, and enhances system scalability for smart surveillance applications.

Science Score: 49.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ○ CITATION.cff file

- ✓ codemeta.json file: Found codemeta.json file

- ✓ .zenodo.json file: Found .zenodo.json file

- ✓ DOI references: Found 2 DOI reference(s) in README

- ✓ Academic publication links: Links to sciencedirect.com

- ○ Academic email domains

- ○ Institutional organization owner

- ○ JOSS paper metadata

- ○ Scientific vocabulary similarity: Low similarity (10.1%) to scientific vocabulary

Repository

Basic Info

- Host: GitHub

- Owner: beefathima-git

- Default Branch: main

- Size: 4.88 KB

Statistics

- Stars: 0

- Watchers: 0

- Forks: 0

- Open Issues: 0

- Releases: 0

Metadata Files

README (1).md

Fog-Based-Surveillance-Framework

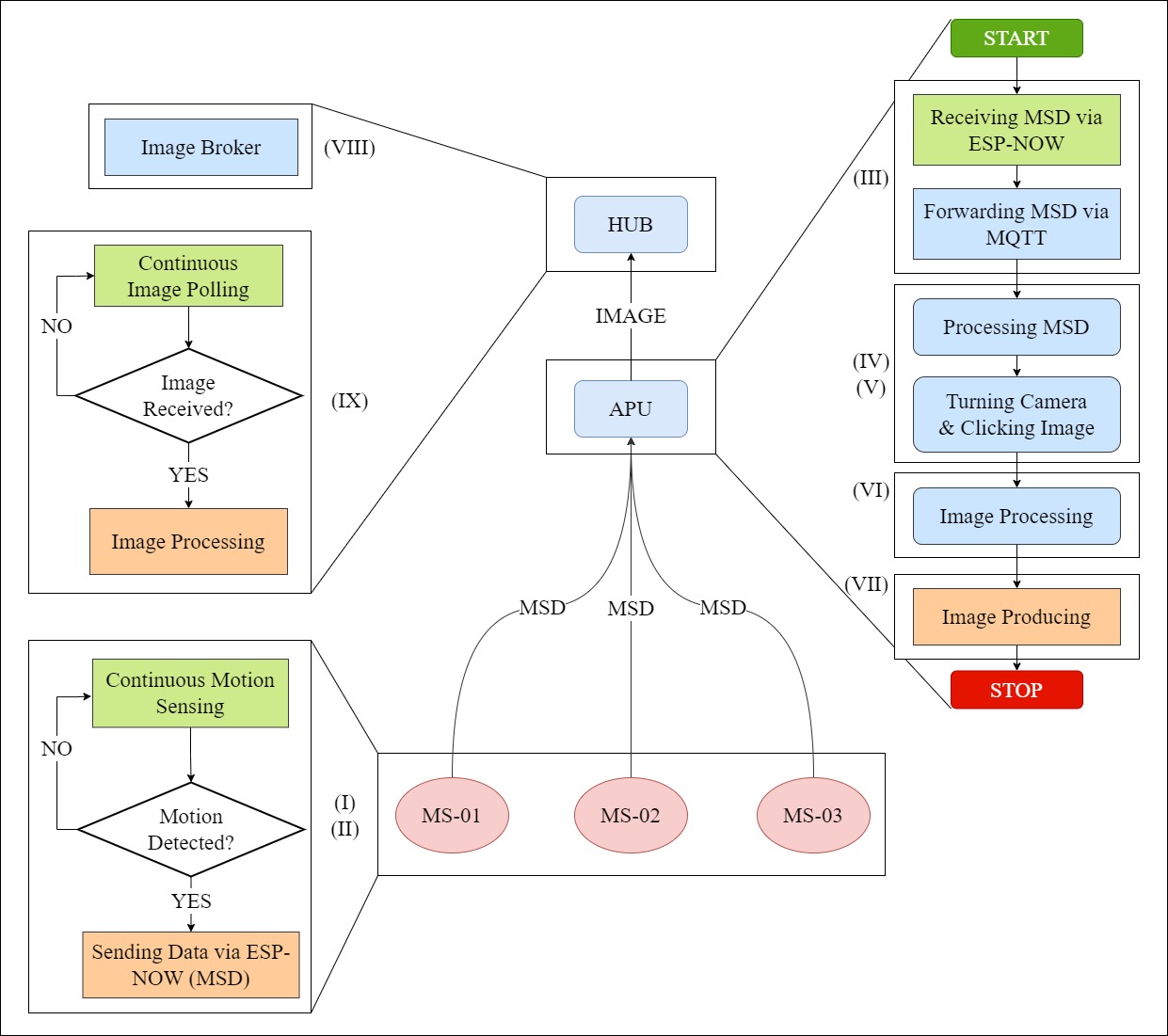

This IoT architecture uses energy-efficient, low-latency devices to monitor a specific area. It combines ESP-NOW, MQTT, and Apache Kafka for communication to enable activity detection and monitoring, and it demonstrates a practical application of fog-based IoT. It comprises multiple modules that work together, as shown in the flow diagram below.

Framework

Prerequisites

- Get familiar with Apache Kafka, ESP-NOW, and MQTT.

- The architecture requires the Soft Access Point and the MAC addresses of the devices that use the ESP-NOW protocol. We used an ESP8266 as the device for creating that network.

- Using the simulation, you can identify the most suitable devices for your architecture based on your specific requirements such as latency, energy consumption, and cost.
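
The device selection mentioned in the last prerequisite can be pictured as a weighted ranking over latency, energy consumption, and cost. The sketch below is only an illustration of that idea, not the simulation used by the framework: the `Candidate` class, the `rank` function, the weights, and every numeric value are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Dict, List

# Metrics to minimise; names and units are assumptions made for this sketch.
METRICS = ("latency_ms", "energy_mj", "cost_usd")


@dataclass(frozen=True)
class Candidate:
    name: str
    latency_ms: float
    energy_mj: float
    cost_usd: float


def rank(candidates: List[Candidate], weights: Dict[str, float]) -> List[Candidate]:
    """Sort candidates by a weighted sum of min-max normalised metrics.

    Lower scores are better; metrics missing from `weights` count as weight 0.
    """

    def normalised(metric: str, value: float) -> float:
        values = [getattr(c, metric) for c in candidates]
        low, high = min(values), max(values)
        return 0.0 if high == low else (value - low) / (high - low)

    def score(c: Candidate) -> float:
        return sum(
            weights.get(m, 0.0) * normalised(m, getattr(c, m)) for m in METRICS
        )

    return sorted(candidates, key=score)


# Placeholder figures, invented for the example (not measurements).
CANDIDATES = [
    Candidate("device_a", latency_ms=100.0, energy_mj=50.0, cost_usd=10.0),
    Candidate("device_b", latency_ms=40.0, energy_mj=80.0, cost_usd=35.0),
    Candidate("device_c", latency_ms=70.0, energy_mj=30.0, cost_usd=20.0),
]

if __name__ == "__main__":
    latency_first = {"latency_ms": 0.6, "energy_mj": 0.3, "cost_usd": 0.1}
    for candidate in rank(CANDIDATES, latency_first):
        print(candidate.name)
```

Changing the weights shifts the ranking toward whichever requirement matters most for a given deployment; the figures themselves would come from your own simulation runs.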

Modules

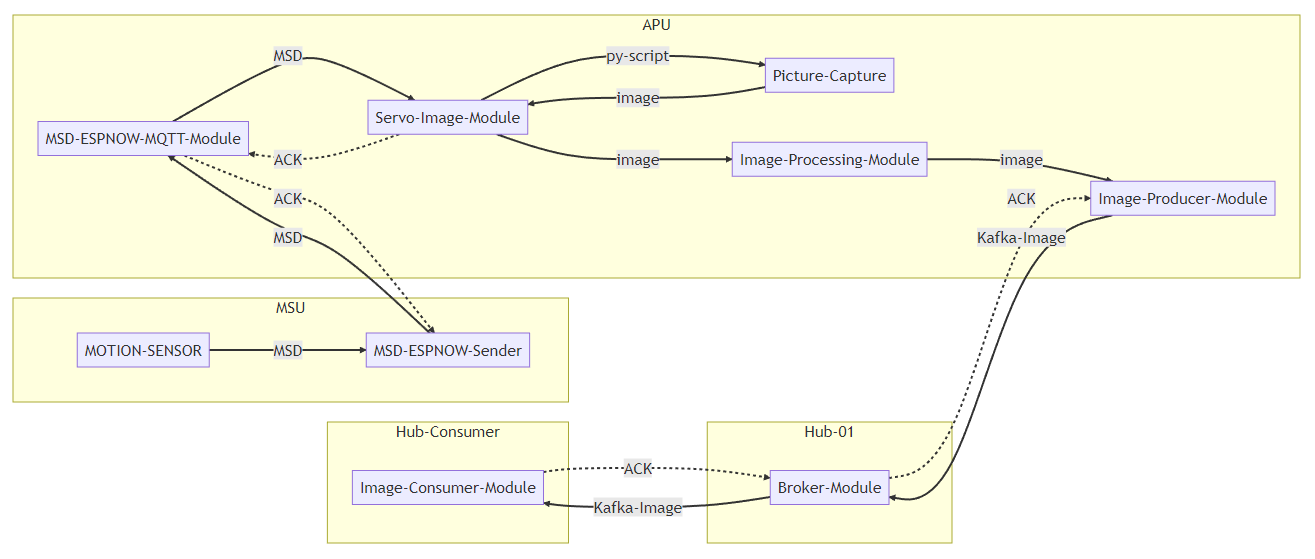

(Module Mapping; Rahul Siyanwal)

| Number | Module | Short Description |
| ------ | ------ | ----------------- |
| I | Motion-Capture | Capturing a movement (MSD) |
| II | MSD-ESP-Sender | Sending the MSD via ESP-NOW |
| III | MSD-ESPNOW-MQTT-Forwarder | Forwarding MSD to a device for processing via MQTT |
| IV | Servo-Image-Module | Adjusting the angle of servo motor in the direction where MSD is detected |
| V | Picture-Capture | Clicking an image in the direction of MSD |
| VI | Image-Processing | Preprocessing the Image / Run your own model |
| VII | Image-Producer | Producing the Image via Kafka |
| VIII | Image-Broker | Kafka Broker |
| IX | Image-Consumer | Consuming the Image via End-User |

The architecture consists of nine modules: Motion-Capture, MSD-ESP-Sender, MSD-ESPNOW-MQTT-Forwarder, Servo-Image-Module, Picture-Capture, Image-Processing, Image-Producer, Image-Broker, and Image-Consumer. The Motion-Capture module uses PIR sensors to detect motion, and the MSD-ESP-Sender module sends the Motion Sensor Data (MSD) via ESP-NOW to another NodeMCU connected to the Actuating and Processing Unit (APU). Because the APU's microprocessor does not support ESP-NOW, the MSD-ESPNOW-MQTT-Forwarder module forwards the MSD packets to it over MQTT. The Servo-Image-Module then rotates the camera toward the Motion Sensing Unit (MSU) that sent the MSD, and the Picture-Capture module captures the image. After the image is stored and processed, the Image-Producer module sends it to the broker using Apache Kafka. The Image-Broker module runs the Kafka broker, and the Image-Consumer module receives the image on the subscribed topic on the consumer device.
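
The forwarding and camera-aiming steps described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the repository's code: the 11-byte packet layout (`MSD_FORMAT`), the topic name `surveillance/msd`, the `SERVO_ANGLES` table, and all function names are hypothetical. It shows only the data transformation; actually publishing the message would need an MQTT client library, which is left out here.

```python
import json
import struct
from typing import Dict, Tuple

# Assumed MSD packet layout (not from the source), little-endian, no padding:
# 6-byte sender MAC, uint32 millisecond counter, uint8 motion flag.
MSD_FORMAT = "<6sIB"
MSD_SIZE = struct.calcsize(MSD_FORMAT)

# Hypothetical mapping from MSU MAC address to the servo angle facing it.
SERVO_ANGLES: Dict[str, int] = {
    "A1:B2:C3:D4:E5:F6": 45,
    "A1:B2:C3:D4:E5:F7": 135,
}


def pack_msd(mac: bytes, millis: int, motion: bool) -> bytes:
    """Build the packet an MSU might send over ESP-NOW."""
    if len(mac) != 6:
        raise ValueError("MAC address must be exactly 6 bytes")
    return struct.pack(MSD_FORMAT, mac, millis, int(motion))


def format_mac(mac: bytes) -> str:
    """Render raw MAC bytes as colon-separated upper-case hex."""
    return ":".join(f"{b:02X}" for b in mac)


def to_mqtt(packet: bytes, base_topic: str = "surveillance/msd") -> Tuple[str, str]:
    """Translate a raw MSD packet into an MQTT topic and JSON payload."""
    if len(packet) != MSD_SIZE:
        raise ValueError(f"expected {MSD_SIZE} bytes, got {len(packet)}")
    mac, millis, motion = struct.unpack(MSD_FORMAT, packet)
    topic = f"{base_topic}/{mac.hex().upper()}"
    payload = json.dumps(
        {"mac": format_mac(mac), "millis": millis, "motion": bool(motion)}
    )
    return topic, payload


def servo_angle_for(payload: str, angles: Dict[str, int]) -> int:
    """Look up the servo angle for the MSU named in an MQTT payload."""
    return angles[json.loads(payload)["mac"]]


if __name__ == "__main__":
    packet = pack_msd(bytes.fromhex("A1B2C3D4E5F6"), 1234, True)
    topic, payload = to_mqtt(packet)
    print(topic, payload, servo_angle_for(payload, SERVO_ANGLES))
```

Keying the topic and the angle table on the sender's MAC address fits the prerequisite that the MAC addresses of the ESP-NOW devices are known in advance.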

Cite this work:

@article{SIYANWAL2025101070,
  title = {An energy efficient fog-based internet of things framework to combat wildlife poaching},
  journal = {Sustainable Computing: Informatics and Systems},
  volume = {45},
  pages = {101070},
  year = {2025},
  issn = {2210-5379},
  doi = {https://doi.org/10.1016/j.suscom.2024.101070},
  url = {https://www.sciencedirect.com/science/article/pii/S221053792400115X},
  author = {Rahul Siyanwal and Arun Agarwal and Satish Narayana Srirama},
  keywords = {Energy efficient wildlife monitoring, Internet of things, Fog computing, Sustainability, Distributed edge analytics, Simulation},
  abstract = {Wildlife trafficking, a significant global issue driven by unsubstantiated medical claims and predatory lifestyle that can lead to zoonotic diseases, involves the illegal trade of endangered and protected species. While IoT-based solutions exist to make wildlife monitoring more widespread and precise, they come with trade-offs. For instance, UAVs cover large areas but cannot detect poaching in real-time once their power is drained. Similarly, using RFID collars on all wildlife is impractical. The wildlife monitoring system should be expeditious, vigilant, and efficient. Therefore, we propose a scalable, motion-sensitive IoT-based wildlife monitoring framework that leverages distributed edge analytics and fog computing, requiring no animal contact. The framework includes 1. Motion Sensing Units (MSUs), 2. Actuating and Processing Units (APUs) containing a camera, a processing unit (such as a single-board computer), and a servo motor, and 3. Hub containing a processing unit. For communication across these components, ESP-NOW, Apache Kafka, and MQTT were employed. Tailored applications (e.g. rare species detection utilizing ML) can then be deployed on these components. This paper details the framework’s implementation, validated through tests in semi-forest and dense forest environments. The system achieved real-time monitoring, defined as a procedure of detecting motion, turning the camera, capturing an image, and transmitting it to the Hub. We also provide a detailed model for implementing the framework, supported by 2800 simulated architectures. These simulations optimize device selection for wildlife monitoring based on latency, cost, and energy consumption, contributing to conservation efforts.}
}

Owner

- Login: beefathima-git

- Kind: user

- Repositories: 1

- Profile: https://github.com/beefathima-git

GitHub Events

Total

- Create event: 2

Last Year

- Create event: 2