https://github.com/cptanalatriste/luke-the-reacher

A deep reinforcement-learning agent for a double-jointed robotic arm.

Science Score: 23.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ○ CITATION.cff file

- ✓ codemeta.json file: Found codemeta.json file

- ○ .zenodo.json file

- ○ DOI references

- ✓ Academic publication links: Links to arxiv.org

- ○ Committers with academic emails

- ○ Institutional organization owner

- ○ JOSS paper metadata

- ○ Scientific vocabulary similarity: Low similarity (10.5%) to scientific vocabulary

Repository

A deep reinforcement-learning agent for a double-jointed robotic arm.

Basic Info

- Host: GitHub

- Owner: cptanalatriste

- Language: Jupyter Notebook

- Default Branch: master

- Size: 2.17 MB

Statistics

- Stars: 1

- Watchers: 2

- Forks: 0

- Open Issues: 0

- Releases: 0

Metadata Files

README.md

luke-the-reacher

A deep reinforcement-learning agent for a double-jointed robotic arm, trained using the Deep Deterministic Policy Gradient (DDPG) algorithm.

Project Details:

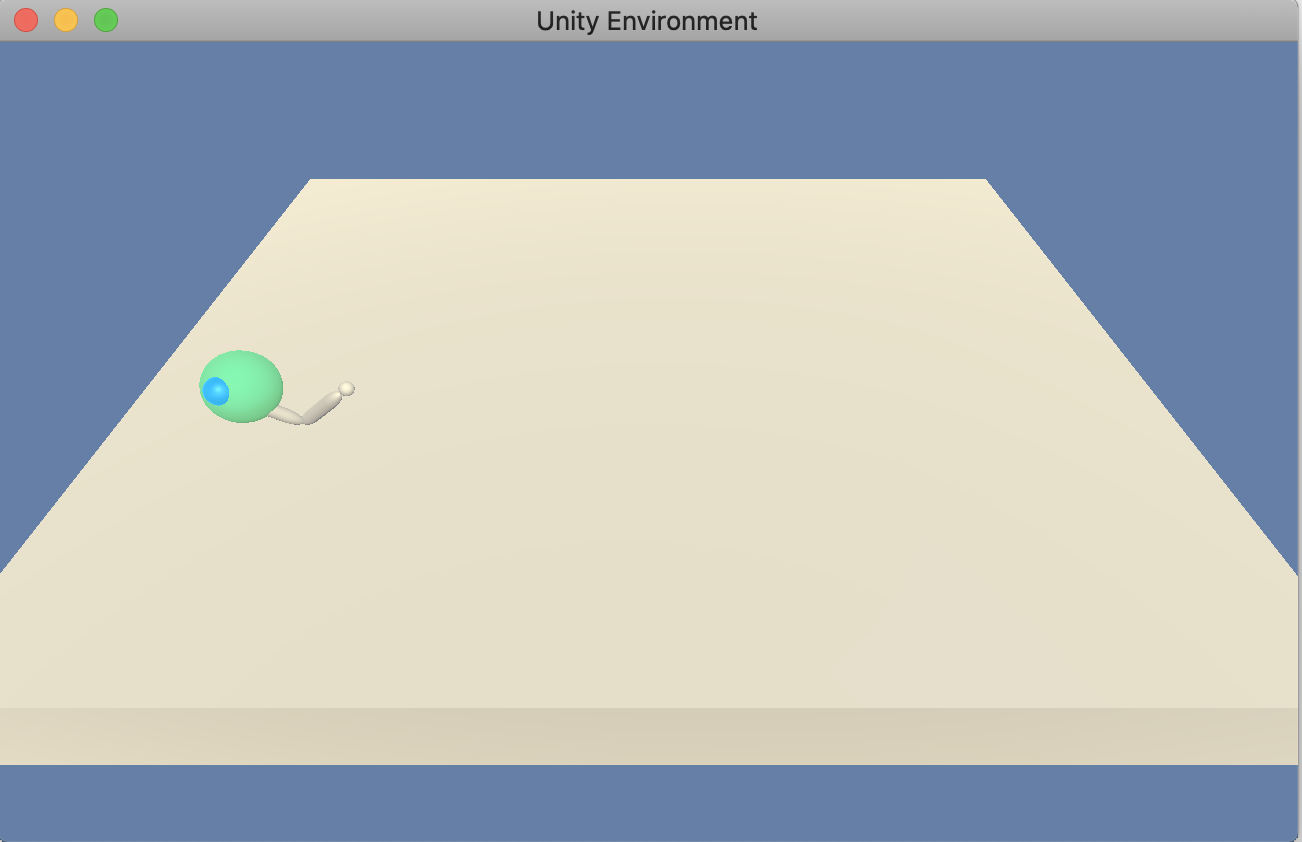

Luke-the-reacher is a deep reinforcement-learning agent designed for the Reacher environment from the Unity ML-Agents Toolkit.

The state is a vector of 33 elements, corresponding to the position, rotation, velocity, and angular velocity of the double-jointed arm. Each action is a vector of 4 real-valued elements between -1 and 1, representing the torques to apply to the arm's two joints.
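As a minimal sketch of the action format described above, the snippet below clamps a raw 4-element action to the valid range and samples a random valid action. The helper names `clip_action` and `random_action` are hypothetical and are not taken from the repository; only the sizes (33 and 4) and the range [-1, 1] come from the description.

```python
import random

STATE_SIZE = 33   # length of the state vector, per the description
ACTION_SIZE = 4   # length of the action vector, per the description


def clip_action(raw_action, low=-1.0, high=1.0):
    """Clamp every component of an action vector to [low, high].

    Hypothetical helper: useful when exploration noise pushes a
    policy output outside the range the environment accepts.
    """
    if len(raw_action) != ACTION_SIZE:
        raise ValueError(f"expected {ACTION_SIZE} values, got {len(raw_action)}")
    return [max(low, min(high, value)) for value in raw_action]


def random_action(rng=random):
    """Sample a uniformly random valid action (a simple baseline)."""
    return [rng.uniform(-1.0, 1.0) for _ in range(ACTION_SIZE)]
```

For example, `clip_action([2.0, -3.0, 0.5, -1.0])` leaves the in-range components untouched and clamps the other two to the bounds.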

The agent receives a reward of +0.1 for every time step in which the arm is in contact with the target. We consider the task mastered when the agent reaches an average score of 30 over 100 episodes.
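The mastery criterion can be sketched as a moving average over episode scores. This is an illustration only: the class name `SolvedTracker` is hypothetical, and reading "over 100 episodes" as "the most recent 100 episodes" is an assumption rather than something the repository states.

```python
from collections import deque

TARGET_SCORE = 30.0  # average score required, per the description
WINDOW = 100         # number of episodes averaged, per the description


class SolvedTracker:
    """Track episode scores and report when the target average is met.

    Assumption: the average is taken over the most recent WINDOW episodes.
    """

    def __init__(self, target=TARGET_SCORE, window=WINDOW):
        self.target = target
        self.scores = deque(maxlen=window)  # old scores drop out automatically

    def add(self, episode_score):
        """Record one episode score and return whether the task is solved."""
        self.scores.append(episode_score)
        return self.is_solved()

    def average(self):
        """Mean of the stored scores, or 0.0 before any episode is recorded."""
        return sum(self.scores) / len(self.scores) if self.scores else 0.0

    def is_solved(self):
        """True only once a full window has been seen and its mean meets the target."""
        window_full = len(self.scores) == self.scores.maxlen
        return window_full and self.average() >= self.target
```

In a training loop, calling `tracker.add(score)` after each episode gives a natural stopping condition. At +0.1 per step, an episode score of 30 corresponds to 300 time steps in contact with the target.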

Getting Started

Before running the agent, complete the following steps:

1. Clone this repository.

2. Download the Reacher environment appropriate to your operating system (available here). Be sure to select the file corresponding to Version 1: One (1) Agent.

3. Place the environment file in the cloned repository folder.

4. Set up an appropriate Python environment. Instructions available here.

Instructions

You can start running and training the agent by exploring Navigation.ipynb. Also available in the repository:

- luke_reacher.py contains the agent code.

- reacher_manager.py has the code for training the agent.

Owner

- Name: Carlos Gavidia-Calderon

- Login: cptanalatriste

- Kind: user

- Location: London, United Kingdom

- Company: @alan-turing-institute

- Website: https://carlos.gavidia.me/

- Twitter: cptan_alatriste

- Repositories: 74

- Profile: https://github.com/cptanalatriste

Systems engineer by training, software developer by trade. Research Software Engineer at @alan-turing-institute.

Committers

Last synced: 7 months ago

Top Committers

| Name | Email | Commits |
|---|---|---|
| Carlos G. Gavidia | c****c@g****m | 21 |

Issues and Pull Requests

Last synced: 7 months ago

All Time

- Total issues: 0

- Total pull requests: 0

- Average time to close issues: N/A

- Average time to close pull requests: N/A

- Total issue authors: 0

- Total pull request authors: 0

- Average comments per issue: 0

- Average comments per pull request: 0

- Merged pull requests: 0

- Bot issues: 0

- Bot pull requests: 0

Past Year

- Issues: 0

- Pull requests: 0

- Average time to close issues: N/A

- Average time to close pull requests: N/A

- Issue authors: 0

- Pull request authors: 0

- Average comments per issue: 0

- Average comments per pull request: 0

- Merged pull requests: 0

- Bot issues: 0

- Bot pull requests: 0