summertime

An open-source text summarization toolkit for non-experts. EMNLP'2021 Demo

Science Score: 54.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ✓ CITATION.cff file: Found CITATION.cff file

- ✓ codemeta.json file: Found codemeta.json file

- ✓ .zenodo.json file: Found .zenodo.json file

- ○ DOI references

- ○ Academic publication links

- ✓ Committers with academic emails: 4 of 13 committers (30.8%) from academic institutions

- ○ Institutional organization owner

- ○ JOSS paper metadata

- ○ Scientific vocabulary similarity: Low similarity (16.2%) to scientific vocabulary


Repository


Basic Info

- Host: GitHub

- Owner: Yale-LILY

- License: apache-2.0

- Language: Python

- Default Branch: main

- Homepage: https://arxiv.org/abs/2108.12738

- Size: 9.59 MB

Statistics

- Stars: 279

- Watchers: 12

- Forks: 33

- Open Issues: 19

- Releases: 2


Metadata Files

README.md

SummerTime - Text Summarization Toolkit for Non-experts

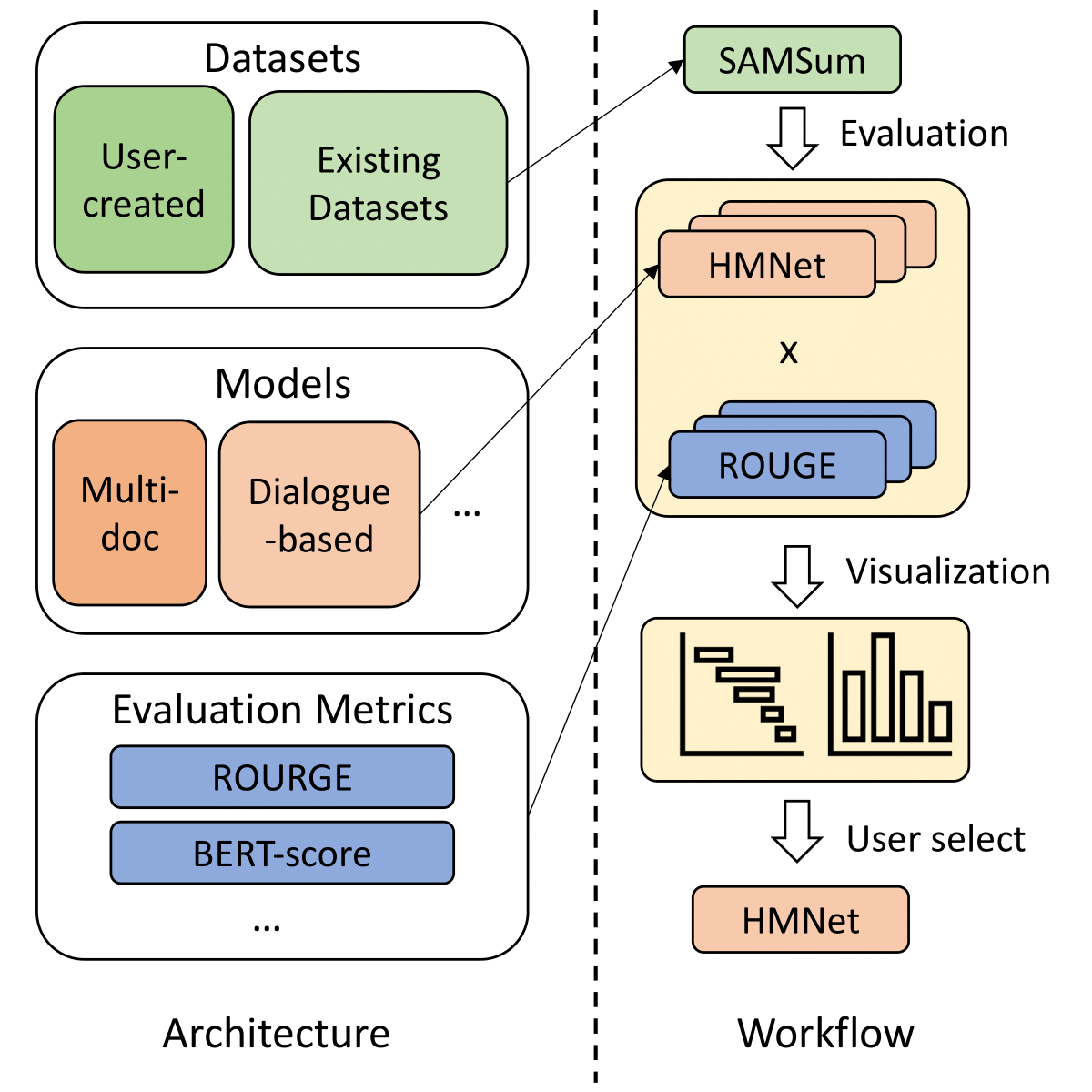

A library to help users choose appropriate summarization tools based on their specific tasks or needs. Includes models, evaluation metrics, and datasets.

The library architecture is as follows:

NOTE: SummerTime is in active development. Helpful comments are highly encouraged; please open an issue or reach out to any of the team members.

Installation and setup

Install from PyPI (recommended)

```bash
# install extra dependencies first
pip install pyrouge@git+https://github.com/bheinzerling/pyrouge.git
pip install en_core_web_sm@https://github.com/explosion/spacy-models/releases/download/en_core_web_sm-3.0.0/en_core_web_sm-3.0.0-py3-none-any.whl

# install summertime from PyPI
pip install summertime
```

Local pip installation

Alternatively, to use the most recent features, you can install from source:

```bash
git clone git@github.com:Yale-LILY/SummerTime
# run the editable install from inside the cloned repository
cd SummerTime
pip install -e .
```

Setup ROUGE (when using evaluation)

```bash
export ROUGE_HOME=/usr/local/lib/python3.7/dist-packages/summ_eval/ROUGE-1.5.5/
```
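The path above is specific to one environment (Python 3.7 with a `dist-packages` layout). As a rough sketch, assuming the `summ_eval` package keeps its `ROUGE-1.5.5` directory inside the installed package (as the path above suggests), the location can be looked up rather than hard-coded. The helper name `guess_rouge_home` is hypothetical and not part of SummerTime.

```python
import importlib.util
import os
from pathlib import Path
from typing import Optional


def guess_rouge_home(package: str = "summ_eval", subdir: str = "ROUGE-1.5.5") -> Optional[str]:
    """Return '<package dir>/<subdir>/' if that directory exists, else None.

    Hypothetical helper: it assumes the ROUGE files live inside the package.
    """
    spec = importlib.util.find_spec(package)
    if spec is None or not spec.submodule_search_locations:
        return None  # package not installed, or not a regular package
    candidate = Path(next(iter(spec.submodule_search_locations))) / subdir
    return f"{candidate}{os.sep}" if candidate.is_dir() else None


rouge_home = guess_rouge_home()
if rouge_home is not None:
    # Only affects the current Python process and its child processes.
    os.environ["ROUGE_HOME"] = rouge_home
print(rouge_home)
```

If the helper prints `None`, the directory was not found and `ROUGE_HOME` still has to be exported by hand.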

Quick Start

Imports model, initializes default model, and summarizes sample documents.

```python
from summertime import model

sample_model = model.summarizer()
documents = [
    """ PG&E stated it scheduled the blackouts in response to forecasts for high winds amid dry conditions. The aim is to reduce the risk of wildfires. Nearly 800 thousand customers were scheduled to be affected by the shutoffs which were expected to last through at least midday tomorrow."""
]
sample_model.summarize(documents)

# ["California's largest electricity provider has turned off power to hundreds of thousands of customers."]
```

Also, please run our colab notebook for a more hands-on demo and more examples.

Models

Supported Models

SummerTime supports different models (e.g., TextRank, BART, Longformer) as well as model wrappers for more complex summarization tasks (e.g., JointModel for multi-doc summarization, BM25 retrieval for query-based summarization). Several multilingual models are also supported (mT5 and mBART).

| Models | Single-doc | Multi-doc | Dialogue-based | Query-based | Multilingual |
| --------- | :------------------: | :------------------: | :------------------: | :------------------: | :------------------: |
| BartModel | :heavy_check_mark: | | | | |
| BM25SummModel | | | | :heavy_check_mark: | |
| HMNetModel | | | :heavy_check_mark: | | |
| LexRankModel | :heavy_check_mark: | | | | |
| LongformerModel | :heavy_check_mark: | | | | |
| MBartModel | :heavy_check_mark: | | | | 50 languages (full list here) |
| MT5Model | :heavy_check_mark: | | | | 101 languages (full list here) |
| TranslationPipelineModel | :heavy_check_mark: | | | | ~70 languages |
| MultiDocJointModel | | :heavy_check_mark: | | | |
| MultiDocSeparateModel | | :heavy_check_mark: | | | |
| PegasusModel | :heavy_check_mark: | | | | |
| TextRankModel | :heavy_check_mark: | | | | |
| TFIDFSummModel | | | | :heavy_check_mark: | |

To see all supported models, run:

```python
from summertime.model import SUPPORTED_SUMM_MODELS

print(SUPPORTED_SUMM_MODELS)
```

Import and initialization:

```python
from summertime import model

# To use a default model
default_model = model.summarizer()

# Or a specific model
bart_model = model.BartModel()
pegasus_model = model.PegasusModel()
lexrank_model = model.LexRankModel()
textrank_model = model.TextRankModel()
```

Users can easily access documentation to assist with model selection:

```python
default_model.show_capability()
pegasus_model.show_capability()
textrank_model.show_capability()
```

To use a model for summarization, simply run:

```python
documents = [
    """ PG&E stated it scheduled the blackouts in response to forecasts for high winds amid dry conditions. The aim is to reduce the risk of wildfires. Nearly 800 thousand customers were scheduled to be affected by the shutoffs which were expected to last through at least midday tomorrow."""
]

default_model.summarize(documents)
# or
pegasus_model.summarize(documents)
```

All models can be initialized with the following optional arguments:

```python
def __init__(self,
             trained_domain: str=None,
             max_input_length: int=None,
             max_output_length: int=None,
             ):
    ...
```

All models will implement the following methods:

```python
def summarize(self,
              corpus: Union[List[str], List[List[str]]],
              queries: List[str]=None) -> List[str]:
    ...

def show_capability(cls) -> None:
    ...
```
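To make the interface above concrete, here is a minimal, self-contained sketch of a class with the same constructor arguments and the same two methods. `LeadSentenceModel` is a hypothetical toy that does not inherit from any SummerTime class, and it treats the length limits as character counts, which is an assumption made only for this illustration.

```python
import re
from typing import List, Optional, Union


class LeadSentenceModel:
    """Toy extractive baseline: keeps the first sentence of each document."""

    model_name = "LeadSentence (toy)"

    def __init__(self,
                 trained_domain: Optional[str] = None,
                 max_input_length: Optional[int] = None,
                 max_output_length: Optional[int] = None):
        self.trained_domain = trained_domain
        # Assumption for this sketch: lengths are measured in characters.
        self.max_input_length = max_input_length
        self.max_output_length = max_output_length

    def summarize(self,
                  corpus: Union[List[str], List[List[str]]],
                  queries: Optional[List[str]] = None) -> List[str]:
        summaries = []
        for document in corpus:
            # List-valued documents are joined into a single string first.
            text = " ".join(document) if isinstance(document, list) else document
            text = text.strip()
            if self.max_input_length is not None:
                text = text[: self.max_input_length]
            # Split once at the first whitespace that follows ., ! or ?
            first_sentence = re.split(r"(?<=[.!?])\s+", text, maxsplit=1)[0]
            if self.max_output_length is not None:
                first_sentence = first_sentence[: self.max_output_length]
            summaries.append(first_sentence)
        return summaries

    @classmethod
    def show_capability(cls) -> None:
        print(f"{cls.model_name}: single-document, extractive, no training required.")


toy_model = LeadSentenceModel()
toy_model.show_capability()
print(toy_model.summarize(["PG&E scheduled the blackouts. The aim is to reduce wildfire risk."]))
```

The point of the sketch is the shape of the interface: `summarize` takes a list of documents and returns one summary string per document, and `show_capability` only prints a description.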

Datasets

Datasets supported

SummerTime supports different summarization datasets across different domains (e.g., CNNDM dataset - news article corpus, Samsum - dialogue corpus, QM-Sum - query-based dialogue corpus, MultiNews - multi-document corpus, ML-sum - multi-lingual corpus, PubMedQa - Medical domain, Arxiv - Science papers domain, among others).

| Dataset | Domain | # Examples | Src. length | Tgt. length | Query | Multi-doc | Dialogue | Multi-lingual |
|-----------------|---------------------|-------------|-------------|-------------|--------------------|--------------------|--------------------|-------------------------------------------|
| ArXiv | Scientific articles | 215k | 4.9k | 220 | | | | |
| CNN/DM(3.0.0) | News | 300k | 781 | 56 | | | | |
| MlsumDataset | Multi-lingual News | 1.5M+ | 632 | 34 | | :heavy_check_mark: | | German, Spanish, French, Russian, Turkish |
| Multi-News | News | 56k | 2.1k | 263.8 | | :heavy_check_mark: | | |
| SAMSum | Open-domain | 16k | 94 | 20 | | | :heavy_check_mark: | |
| Pubmedqa | Medical | 272k | 244 | 32 | :heavy_check_mark: | | | |
| QMSum | Meetings | 1k | 9.0k | 69.6 | :heavy_check_mark: | | :heavy_check_mark: | |
| ScisummNet | Scientific articles | 1k | 4.7k | 150 | | | | |
| SummScreen | TV shows | 26.9k | 6.6k | 337.4 | | | :heavy_check_mark: | |
| XSum | News | 226k | 431 | 23.3 | | | | |
| XLSum | News | 1.35m | ??? | ??? | | | | 45 languages (see documentation) |
| MassiveSumm | News | 12m+ | ??? | ??? | | | | 78 languages (see Multilingual Summarization section of README for details) |

To see all supported datasets, run:

```python
from summertime import dataset

print(dataset.list_all_dataset())
```

Dataset Initialization

```python
from summertime import dataset

cnn_dataset = dataset.CnndmDataset()
# or
xsum_dataset = dataset.XsumDataset()
# ..etc
```

Dataset Object

All datasets are implementations of the SummDataset class. Their data splits can be accessed as follows:

```python
# use a distinct variable name so the `dataset` module is not shadowed
cnn_dataset = dataset.CnndmDataset()

train_data = cnn_dataset.train_set
dev_data = cnn_dataset.dev_set
test_data = cnn_dataset.test_set
```

To see the details of a dataset, run:

```python
cnn_dataset = dataset.CnndmDataset()

cnn_dataset.show_description()
```

Data instance

The data in all datasets is contained in a SummInstance class object, which has the following properties:

```python
data_instance.source = source    # either `List[str]` or `str`, depending on the dataset itself; string joining may be needed to fit into specific models
data_instance.summary = summary  # a string summary that serves as ground truth
data_instance.query = query      # Optional, applies when a string query is present

print(data_instance)             # to print the data instance in its entirety
```
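The note about string joining can be illustrated with a small stand-in. The sketch below uses a hypothetical `ToyInstance` dataclass with the three fields described above (it is not SummerTime's `SummInstance`) and a hypothetical helper `source_as_text` that turns a list-valued source into a single string.

```python
from dataclasses import dataclass
from typing import List, Optional, Union


@dataclass
class ToyInstance:
    """Stand-in with the three fields described above; not SummerTime's SummInstance."""
    source: Union[str, List[str]]
    summary: str
    query: Optional[str] = None


def source_as_text(instance: ToyInstance, separator: str = " ") -> str:
    """Return the source as one string, joining list-valued sources."""
    if isinstance(instance.source, str):
        return instance.source
    return separator.join(instance.source)


dialogue = ToyInstance(
    source=["A: Are you coming?", "B: Yes, at noon."],
    summary="B will come at noon.",
)
print(source_as_text(dialogue))
```

Which separator is appropriate (a space, a newline, or a model-specific token) depends on the model that consumes the text.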

Loading and using data instances

Data is loaded using a generator to save on space and time.

To get a single instance:

```python
data_instance = next(cnn_dataset.train_set)
print(data_instance)
```

To get a slice of the dataset

```python
import itertools

# Get a slice from the train set generator - first 5 instances
train_set = itertools.islice(cnn_dataset.train_set, 5)

corpus = [instance.source for instance in train_set]
print(corpus)
```
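Because the splits are generators, each instance can be consumed only once. The following sketch uses a hypothetical stand-in generator, `toy_split`, instead of a real SummerTime split, to show how `itertools.islice` advances the underlying generator.

```python
import itertools


def toy_split():
    """Stand-in for a dataset split: yields instances lazily."""
    for index in range(10):
        yield f"instance-{index}"


train_set = toy_split()
first_five = list(itertools.islice(train_set, 5))  # consumes items 0-4
next_item = next(train_set)                        # continues at item 5

print(first_five)
print(next_item)
```

To iterate over the same slice more than once, keep the materialized list (`first_five` here) rather than the generator.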

Loading a custom dataset

You can load your own data with the CustomDataset class, which wraps it in the SummerTime dataset class.

```python
from summertime.dataset import CustomDataset

''' The train_set, test_set and validation_set have the following format:
    List[Dict], list of dictionaries that contain a data instance.
    The dictionary is in the form:
        {"source": "source_data", "summary": "summary_data", "query": "query_data"}
        * source_data is either of type List[str] or str
        * summary_data is of type str
        * query_data is of type str
    The list of dictionaries looks as follows:
        [dictionary_instance_1, dictionary_instance_2, ...]
'''

# Create sample data
train_set = [
    {
        "source": "source1",
        "summary": "summary1",
        "query": "query1",      # only included, if query is present
    }
]
validation_set = [
    {
        "source": "source2",
        "summary": "summary2",
        "query": "query2",
    }
]
test_set = [
    {
        "source": "source3",
        "summary": "summary3",
        "query": "query3",
    }
]

# Depending on the dataset properties, you can specify the type of dataset
#   i.e multi_doc, query_based, dialogue_based. If not specified, they default to false
custom_dataset = CustomDataset(
    train_set=train_set,
    validation_set=validation_set,
    test_set=test_set,
    query_based=True,
    multi_doc=True,
    dialogue_based=False,
)
```
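Before handing data to CustomDataset, it can help to check that every dictionary follows the format described in the docstring. The function below is a hypothetical helper written for this illustration and is not part of SummerTime; it only checks the types named above.

```python
from typing import Any, Dict, List


def validate_instances(instances: List[Dict[str, Any]], query_based: bool = False) -> None:
    """Raise ValueError if a dictionary deviates from the expected format."""
    for index, item in enumerate(instances):
        source = item.get("source")
        is_str_list = isinstance(source, list) and all(isinstance(part, str) for part in source)
        if not (isinstance(source, str) or is_str_list):
            raise ValueError(f"instance {index}: 'source' must be str or List[str]")
        if not isinstance(item.get("summary"), str):
            raise ValueError(f"instance {index}: 'summary' must be str")
        # A query is only required when the dataset is declared query-based.
        if query_based and not isinstance(item.get("query"), str):
            raise ValueError(f"instance {index}: 'query' must be str for query-based data")


sample = [{"source": "source1", "summary": "summary1", "query": "query1"}]
validate_instances(sample, query_based=True)
print("sample data is well-formed")
```

Running the check on each of the three splits catches format mistakes early, before any model is loaded.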

Using the datasets with the models - Examples

```python
import itertools
from summertime import dataset, model

cnn_dataset = dataset.CnndmDataset()

# Get a slice of the train set - first 5 instances
train_set = itertools.islice(cnn_dataset.train_set, 5)

corpus = [instance.source for instance in train_set]

# Example 1 - traditional non-neural model
# LexRank model
lexrank = model.LexRankModel(corpus)
lexrank.show_capability()

lexrank_summary = lexrank.summarize(corpus)
print(lexrank_summary)

# Example 2 - A spaCy pipeline for TextRank (another non-neural extractive summarization model)
# TextRank model
textrank = model.TextRankModel()
textrank.show_capability()

textrank_summary = textrank.summarize(corpus)
print(textrank_summary)

# Example 3 - A neural model to handle large texts
# Longformer model
longformer = model.LongformerModel()
longformer.show_capability()

longformer_summary = longformer.summarize(corpus)
print(longformer_summary)
```

Multilingual summarization

The summarize() method of multilingual models automatically checks for input document language.

Single-doc multilingual models can be initialized and used in the same way as monolingual models. They return an error if a language not supported by the model is input.

```python
import itertools
from summertime import dataset, model

mbart_model = model.MBartModel()
mt5_model = model.MT5Model()

# load Spanish portion of MLSum dataset
mlsum = dataset.MlsumDataset(["es"])

train_set = itertools.islice(mlsum.train_set, 5)
corpus = [instance.source for instance in train_set]

# mt5 model will automatically detect Spanish as the language and indicate that this is supported!
mt5_model.summarize(corpus)
```

The following languages are currently supported in our implementation of the MassiveSumm dataset: Afrikaans, Amharic, Arabic, Assamese, Aymara, Azerbaijani, Bambara, Bengali, Tibetan, Bosnian, Bulgarian, Catalan, Czech, Welsh, Danish, German, Greek, English, Esperanto, Persian, Filipino, French, Fulah, Irish, Gujarati, Haitian, Hausa, Hebrew, Hindi, Croatian, Hungarian, Armenian, Igbo, Indonesian, Icelandic, Italian, Japanese, Kannada, Georgian, Khmer, Kinyarwanda, Kyrgyz, Korean, Kurdish, Lao, Latvian, Lingala, Lithuanian, Malayalam, Marathi, Macedonian, Malagasy, Mongolian, Burmese, South Ndebele, Nepali, Dutch, Oriya, Oromo, Punjabi, Polish, Portuguese, Dari, Pashto, Romanian, Rundi, Russian, Sinhala, Slovak, Slovenian, Shona, Somali, Spanish, Albanian, Serbian, Swahili, Swedish, Tamil, Telugu, Tetum, Tajik, Thai, Tigrinya, Turkish, Ukrainian, Urdu, Uzbek, Vietnamese, Xhosa, Yoruba, Yue Chinese, Chinese, Bislama, and Gaelic.

Evaluation

SummerTime supports different evaluation metrics, including BertScore, Bleu, Meteor, Rouge, and RougeWe.

To print all supported metrics:

```python
from summertime.evaluation import SUPPORTED_EVALUATION_METRICS

print(SUPPORTED_EVALUATION_METRICS)
```

Import and initialization:

```python
import summertime.evaluation as st_eval

# metric classes, named as in the list of supported metrics above
bert_eval = st_eval.BertScore()
bleu_eval = st_eval.Bleu()
meteor_eval = st_eval.Meteor()
rouge_eval = st_eval.Rouge()
rougewe_eval = st_eval.RougeWe()
```

Evaluation Class

All evaluation metrics can be initialized with the following optional arguments:

```python
def __init__(self, metric_name):
    ...
```

All evaluation metric objects implement the following methods:

```python
def evaluate(self, model, data):
    ...

def get_dict(self, keys):
    ...
```

Using evaluation metrics

Get sample summary data:

```python
from summertime.evaluation.base_metric import SummMetric
from summertime.evaluation import Rouge, RougeWe, BertScore

import itertools

# Evaluates model on subset of cnn_dailymail
# Get a slice of the train set - first 5 instances
train_set = itertools.islice(cnn_dataset.train_set, 5)

corpus = [instance for instance in train_set]
print(corpus)

articles = [instance.source for instance in corpus]

# sample_model is the default summarizer created in the Quick Start section
summaries = sample_model.summarize(articles)
targets = [instance.summary for instance in corpus]
```

Evaluate the data on different metrics:

```python
from summertime.evaluation import BertScore, Rouge, RougeWe

# Calculate BertScore
bert_metric = BertScore()
bert_score = bert_metric.evaluate(summaries, targets)
print(bert_score)

# Calculate Rouge
rouge_metric = Rouge()
rouge_score = rouge_metric.evaluate(summaries, targets)
print(rouge_score)

# Calculate RougeWe
rougewe_metric = RougeWe()
rougewe_score = rougewe_metric.evaluate(summaries, targets)
print(rougewe_score)
```
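The calls above all follow the same pattern: `evaluate(summaries, targets)` compares generated summaries with reference summaries. As a self-contained illustration of that pattern, here is a hypothetical toy metric, `UnigramF1`, which is not one of SummerTime's metrics. It assumes that a metric returns a dictionary mapping a score name to a value.

```python
from collections import Counter
from typing import Dict, List


class UnigramF1:
    """Toy metric: mean unigram-overlap F1 between summaries and targets."""

    metric_name = "unigram_f1"

    def evaluate(self, summaries: List[str], targets: List[str]) -> Dict[str, float]:
        if len(summaries) != len(targets):
            raise ValueError("summaries and targets must have the same length")
        scores = [self._f1(summary, target) for summary, target in zip(summaries, targets)]
        mean_score = sum(scores) / len(scores) if scores else 0.0
        return {self.metric_name: mean_score}

    @staticmethod
    def _f1(summary: str, target: str) -> float:
        predicted = Counter(summary.lower().split())
        reference = Counter(target.lower().split())
        # Multiset intersection counts each shared token at most min(count) times.
        overlap = sum((predicted & reference).values())
        if overlap == 0:
            return 0.0
        precision = overlap / sum(predicted.values())
        recall = overlap / sum(reference.values())
        return 2 * precision * recall / (precision + recall)


toy_metric = UnigramF1()
print(toy_metric.evaluate(["the cat sat"], ["the cat sat down"]))
```

In this example precision is 3/3 and recall is 3/4, so the F1 score is 6/7. Real metrics such as ROUGE or BERTScore are far more involved, but they are called the same way.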

Using automatic pipeline assembly

Given a SummerTime dataset, you may use the pipeline.assemble_model_pipeline function to retrieve a list of initialized SummerTime models that are compatible with the dataset provided.

```python
from summertime.pipeline import assemble_model_pipeline
from summertime.dataset import CnndmDataset, QMsumDataset

single_doc_models = assemble_model_pipeline(CnndmDataset)
# [
#   (<model object>, 'BART'),
#   (<model object>, 'LexRank'),
#   (<model object>, 'Longformer'),
#   (<model object>, 'Pegasus'),
#   (<model object>, 'TextRank')
# ]

query_based_multi_doc_models = assemble_model_pipeline(QMsumDataset)
# [
#   (<model object>, 'TF-IDF (HMNET)'),
#   (<model object>, 'BM25 (HMNET)')
# ]
```
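The idea of matching models to a dataset can be sketched as a lookup over capability flags. The code below is an illustration only, not SummerTime's implementation: `Capability`, `MODEL_CAPABILITIES`, and `compatible_models` are hypothetical names, the flags are copied from the Supported Models table, and the sketch does not build combined wrappers such as 'TF-IDF (HMNET)'.

```python
from typing import Dict, List, NamedTuple


class Capability(NamedTuple):
    multi_doc: bool = False
    dialogue_based: bool = False
    query_based: bool = False
    multilingual: bool = False


# Flags copied from the Supported Models table; no flag set means
# a monolingual single-document model.
MODEL_CAPABILITIES: Dict[str, Capability] = {
    "BartModel": Capability(),
    "BM25SummModel": Capability(query_based=True),
    "HMNetModel": Capability(dialogue_based=True),
    "LexRankModel": Capability(),
    "LongformerModel": Capability(),
    "MBartModel": Capability(multilingual=True),
    "MT5Model": Capability(multilingual=True),
    "TranslationPipelineModel": Capability(multilingual=True),
    "MultiDocJointModel": Capability(multi_doc=True),
    "MultiDocSeparateModel": Capability(multi_doc=True),
    "PegasusModel": Capability(),
    "TextRankModel": Capability(),
    "TFIDFSummModel": Capability(query_based=True),
}


def compatible_models(dataset_flags: Capability) -> List[str]:
    """Return the names of models whose flags equal the dataset's flags."""
    return sorted(name for name, flags in MODEL_CAPABILITIES.items() if flags == dataset_flags)


# A plain news dataset such as CNN/DM sets none of the flags.
print(compatible_models(Capability()))
print(compatible_models(Capability(multi_doc=True)))
```

For a dataset with no flags set, this simplified lookup selects the same five model families as the first output shown above. Datasets that combine several properties, such as QMSum, need wrapped models, which this sketch leaves out.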


Visualizing performance of different models on your dataset

Given a list of SummerTime models, a data generator, and a list of evaluation metrics, you may use ModelSelector to evaluate every model on the data and visualize the results.

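```python
# Get test data
import itertools
from summertime.dataset import XsumDataset
from summertime.evaluation import SUPPORTED_EVALUATION_METRICS
from summertime.model import BartModel, PegasusModel

# Get a slice of the train set - first 100 instances
sample_dataset = XsumDataset()
# Materialize the slice so that each iterator below starts from the first instance
sample_data = list(itertools.islice(sample_dataset.train_set, 100))
generator1 = iter(sample_data)
generator2 = iter(sample_data)

bart_model = BartModel()
pegasus_model = PegasusModel()
models = [bart_model, pegasus_model]
metrics = [metric() for metric in SUPPORTED_EVALUATION_METRICS]
```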

Create a radar plot

```python
from summertime.evaluation.model_selector import ModelSelector

selector = ModelSelector(models, generator1, metrics)
table = selector.run()
print(table)
visualization = selector.visualize(table)
```

```python
from summertime.evaluation.model_selector import ModelSelector

new_selector = ModelSelector(models, generator2, metrics)
smart_table = new_selector.run_halving(min_instances=2, factor=2)
print(smart_table)
visualization_smart = new_selector.visualize(smart_table)
```
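The name run_halving and its min_instances and factor arguments suggest a successive-halving strategy, although the source does not describe the internals. The following is a generic sketch of successive halving under that assumption, not SummerTime's implementation; `successive_halving`, the candidate names, and the scoring function are all hypothetical.

```python
from typing import Callable, List, Sequence


def successive_halving(
    candidates: Sequence[str],
    score: Callable[[str, Sequence[str]], float],
    instances: Sequence[str],
    min_instances: int = 2,
    factor: int = 2,
) -> str:
    """Score all survivors on a small budget, keep the best 1/factor of them,
    multiply the budget by factor, and repeat until one candidate remains."""
    if not candidates:
        raise ValueError("at least one candidate is required")
    if factor < 2:
        raise ValueError("factor must be at least 2")
    survivors: List[str] = list(candidates)
    budget = min_instances
    while len(survivors) > 1:
        batch = instances[: min(budget, len(instances))]
        ranked = sorted(survivors, key=lambda name: score(name, batch), reverse=True)
        survivors = ranked[: max(1, len(ranked) // factor)]
        budget *= factor
    return survivors[0]


# Toy setup: each candidate has a fixed quality, so the winner is known in advance.
quality = {"model_a": 0.2, "model_b": 0.5, "model_c": 0.9, "model_d": 0.4}
data = [f"doc-{i}" for i in range(16)]
best = successive_halving(list(quality), lambda name, batch: quality[name] * len(batch), data)
print(best)
```

The appeal of the technique is cost: weak candidates are discarded after seeing only a few instances, and the full evaluation budget is spent on the strongest ones.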

Create a scatter plot

```python
from summertime.evaluation.model_selector import ModelSelector
from summertime.evaluation.error_viz import scatter

keys = ("bert_score_f1", "bleu", "rouge_1_f_score", "rouge_2_f_score", "rouge_l_f_score", "rouge_we_3_f", "meteor")

scatter(models, sample_data, metrics[1:3], keys=keys[1:3], max_instances=5)
```

To contribute

Pull requests

Create a pull request and name it [your_gh_username]/[your_branch_name]. If needed, resolve your own branch's merge conflicts with main. Do not push directly to main.

Code formatting

If you haven't already, install black and flake8:

```bash
pip install black
pip install flake8
```

Before pushing commits or merging branches, run the following commands from the project root. Note that black will write to files, and that you should add and commit changes made by black before pushing:

```bash
black .
flake8 .
```

Or if you would like to lint specific files:

```bash
black path/to/specific/file.py
flake8 path/to/specific/file.py
```

Ensure that black does not reformat any files and that flake8 does not print any errors. If you would like to override or ignore any of the preferences or practices enforced by black or flake8, please leave a comment in your PR for any lines of code that generate warning or error logs. Do not directly edit config files such as setup.cfg.

See the black docs and flake8 docs for documentation on installation, ignoring files/lines, and advanced usage. In addition, the following may be useful:

- `black [file.py] --diff` to preview changes as diffs instead of directly making changes
- `black [file.py] --check` to preview changes with status codes instead of directly making changes
- `git diff -u | flake8 --diff` to only run flake8 on working branch changes

Note that our CI test suite will include invoking black --check . and flake8 --count . on all non-unittest and non-setup Python files, and zero error-level output is required for all tests to pass.

Tests

Our continuous integration system is provided through GitHub Actions. When any pull request is created or updated, or whenever main is updated, the repository's unit tests will be run as build jobs on tangra for that pull request. Build jobs will either pass or fail within a few minutes, and build statuses and logs are visible under Actions. Please ensure that the most recent commit in pull requests passes all checks (i.e. all steps in all jobs run to completion) before merging, or request a review. To skip a build on any particular commit, append [skip ci] to the commit message. Note that PRs with the substring /no-ci/ anywhere in the branch name will not be included in CI.

Citation

This repository is built by the LILY Lab at Yale University, led by Prof. Dragomir Radev. The main contributors are Ansong Ni, Zhangir Azerbayev, Troy Feng, Murori Mutuma, Hailey Schoelkopf, and Yusen Zhang (Penn State).

If you use SummerTime in your work, consider citing:

```
@article{ni2021summertime,
  title={SummerTime: Text Summarization Toolkit for Non-experts},
  author={Ansong Ni and Zhangir Azerbayev and Mutethia Mutuma and Troy Feng and Yusen Zhang and Tao Yu and Ahmed Hassan Awadallah and Dragomir Radev},
  journal={arXiv preprint arXiv:2108.12738},
  year={2021}
}
```

For comments and questions, please open an issue.

Owner

- Name: Yale-LILY

- Login: Yale-LILY

- Kind: organization

- Website: https://yale-lily.github.io/

- Repositories: 45

- Profile: https://github.com/Yale-LILY

Language, Information, and Learning at Yale

Citation (CITATION.cff)

# YAML 1.2

---

authors:

-

affiliation: "Yale University"

family-names: Ni

given-names: Ansong

-

affiliation: "Yale University"

family-names: Azerbayev

given-names: Zhangir

-

affiliation: "Yale University"

family-names: Mutuma

given-names: Mutethia

-

affiliation: "Yale University"

family-names: Feng

given-names: Troy

-

affiliation: "Penn State University"

family-names: Zhang

given-names: Yusen

-

affiliation: "Yale University"

family-names: Yu

given-names: Tao

-

affiliation: "Yale University"

family-names: Awadallah

given-names: Ahmed H.

-

affiliation: "Yale University"

family-names: Radev

given-names: Dragomir

cff-version: "1.1.0"

license: "Apache-2.0"

message: "If you use this software, please cite it using this metadata."

repository-code: "https://github.com/Yale-LILY/SummerTime"

title: "SummerTime - Text Summarization Toolkit for Non-experts"

version: "0.1"

# doi: "10.18653/v1/W18-2501"

...

GitHub Events

Total

- Watch event: 13

- Fork event: 3

Last Year

- Watch event: 13

- Fork event: 3

Committers

Last synced: about 3 years ago

All Time

- Total Commits: 582

- Total Committers: 13

- Avg Commits per committer: 44.769

- Development Distribution Score (DDS): 0.759

Top Committers

| Name | Email | Commits |
|---|---|---|
| Troy Feng | t****g@y****u | 140 |
| Murori | d****i@g****m | 133 |
| zhangir-azerbayev | z****v@g****m | 89 |
| NickSchoelkopf | n****f@y****u | 78 |
| niansong1996 | n****6@g****m | 59 |
| chatc | z****9@1****m | 29 |
| niansong1996 | n****6@1****m | 18 |
| Mutuma Murori | m****4@z****u | 16 |
| Murori Mutuma | m****4@t****a | 9 |
| Ansong Ni | n****6@u****m | 7 |
| Ansong Ni | a****9@t****a | 2 |
| Tao Yu | t****6@c****u | 1 |
| Arjun Nair | a****r@h****m | 1 |
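The All Time figures can be reproduced from the commit counts in the table above. The sketch below assumes the common definition of the Development Distribution Score, DDS = 1 - (commits by the top committer / total commits); the source does not state the formula, but this definition reproduces the reported value.

```python
# Commit counts taken from the Top Committers table, one entry per row.
commits = [140, 133, 89, 78, 59, 29, 18, 16, 9, 7, 2, 1, 1]

total_commits = sum(commits)
average_per_committer = total_commits / len(commits)
# Assumed definition: DDS = 1 - (top committer's commits / total commits).
dds = 1 - max(commits) / total_commits

print(total_commits)                     # 582
print(round(average_per_committer, 3))   # 44.769
print(round(dds, 3))                     # 0.759
```

Note that the table counts email identities, so contributors who committed under several addresses appear in more than one row.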


Issues and Pull Requests

Last synced: 9 months ago

All Time

- Total issues: 48

- Total pull requests: 56

- Average time to close issues: about 1 month

- Average time to close pull requests: 17 days

- Total issue authors: 13

- Total pull request authors: 10

- Average comments per issue: 1.52

- Average comments per pull request: 3.23

- Merged pull requests: 45

- Bot issues: 0

- Bot pull requests: 0

Past Year

- Issues: 0

- Pull requests: 0

- Average time to close issues: N/A

- Average time to close pull requests: N/A

- Issue authors: 0

- Pull request authors: 0

- Average comments per issue: 0

- Average comments per pull request: 0

- Merged pull requests: 0

- Bot issues: 0

- Bot pull requests: 0

Top Authors

Issue Authors

- niansong1996 (17)

- MuroriM (16)

- epsilon-deltta (3)

- ismu (2)

- haileyschoelkopf (2)

- chatc (1)

- JingrongFeng (1)

- johnhutx (1)

- fabioperez (1)

- yungsinatra0 (1)

- AK391 (1)

- mterrestre01 (1)

- lewismc (1)

Pull Request Authors

- MuroriM (15)

- troyfeng116 (14)

- niansong1996 (7)

- haileyschoelkopf (6)

- zhangir-azerbayev (5)

- chatc (4)

- StanLebowski (2)

- AK391 (1)

- arjunvnair (1)

- 6wj (1)


Packages

- Total packages: 1

- Total downloads: 33 last month (pypi)

- Total dependent packages: 0

- Total dependent repositories: 1

- Total versions: 2

- Total maintainers: 1

pypi.org: summertime

Text summarization toolkit for non-experts

- Homepage: https://github.com/Yale-LILY/SummerTime

- Documentation: https://summertime.readthedocs.io/

- License: Apache Software License

- Latest release: 1.2.1 (published over 4 years ago)


Dependencies

- beautifulsoup4 *

- black *

- click ==7.1.2

- datasets *

- easynmt ==2.0.1

- fasttext ==0.9.2

- flake8 *

- gdown *

- gensim ==3.8.3

- jupyter *

- lexrank *

- mpi4py ==3.0.3

- nltk *

- numpy *

- orjson *

- prettytable ==2.2.1

- progressbar *

- py7zr ==0.16.1

- pytextrank *

- readability-lxml *

- sentencepiece *

- sklearn *

- spacy ==3.0.6

- summ_eval ==0.70

- tensorboard ==2.4.1

- torch *

- tqdm ==4.49.0

- transformers *

- beautifulsoup4 *

- black *

- click ==7.1.2

- cython *

- datasets *

- easynmt *

- fasttext *

- flake8 *

- gdown *

- gensim *

- jupyter *

- lexrank *

- nltk ==3.6.2

- numpy *

- orjson *

- prettytable *

- progressbar *

- py7zr *

- pytextrank *

- readability-lxml *

- sentencepiece *

- sklearn *

- spacy ==3.0.6

- summ_eval ==0.70

- tensorboard *

- torch *

- tqdm *

- transformers *