yolov5-with-se

Science Score: 44.0%

This score indicates how likely this project is to be science-related based on various indicators:

✓CITATION.cff file

Found CITATION.cff file

✓codemeta.json file

Found codemeta.json file

✓.zenodo.json file

Found .zenodo.json file

○DOI references

○Academic publication links

○Academic email domains

○Institutional organization owner

○JOSS paper metadata

○Scientific vocabulary similarity

Low similarity (7.5%) to scientific vocabulary

Repository

Basic Info

- Host: GitHub

- Owner: yiminwangcsudh

- License: gpl-3.0

- Language: Python

- Default Branch: master

- Size: 975 KB

Statistics

- Stars: 0

- Watchers: 1

- Forks: 0

- Open Issues: 2

- Releases: 0

Metadata Files

README.md

yolov5-with-SE

The attention mechanism stems from the study of human vision. In cognitive science, because of bottlenecks in information processing, humans selectively focus on a portion of the available information while ignoring other visible information. This ability arises because different parts of the human retina have different information processing capabilities (different acuities), and the fovea has the highest acuity. To make rational use of limited visual processing resources, humans select a specific part of the visual field and focus on it. For example, when people watch a movie on a computer screen, they focus on and process what is inside the screen, while what lies outside it, such as the keyboard and the background, is ignored.

There are many ways to introduce attention mechanisms into neural networks. Taking convolutional neural networks as an example, attention can be added in the spatial dimension or in the channel dimension, and there are also mixed approaches that add attention in both the spatial and channel dimensions at the same time. The SE (Squeeze-and-Excitation) block described below is a channel attention mechanism.

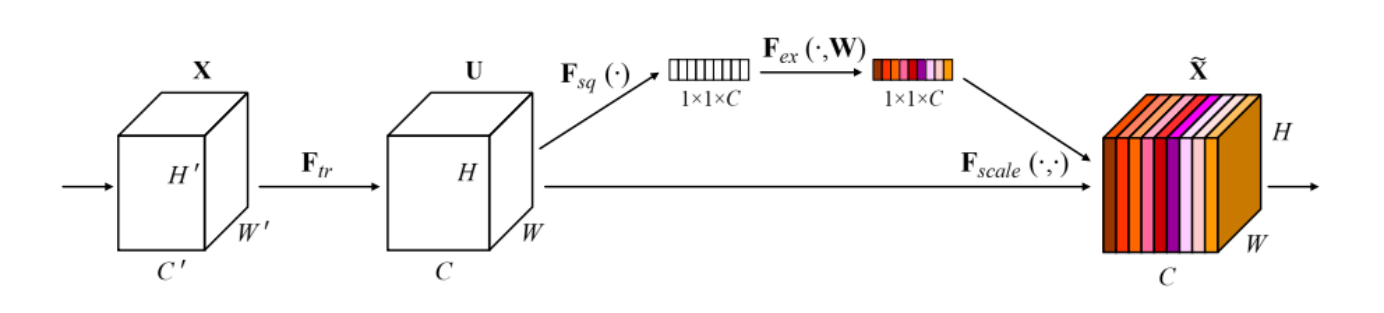

The structure of the SE building block

Squeeze: Encodes the spatial information of each channel into a global feature. Global average pooling compresses the two-dimensional (H×W) feature map of each channel into a single real number, so a C×H×W input becomes a vector of C values.
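As a rough illustration of the squeeze step, the sketch below applies global average pooling to a single feature map of shape (C, H, W) using NumPy. The function name `squeeze` and the toy input values are hypothetical and chosen only for the example; a YOLOv5 model would operate on batched PyTorch tensors instead.

```python
import numpy as np

def squeeze(x: np.ndarray) -> np.ndarray:
    """Global average pooling over the spatial dimensions.

    x has shape (C, H, W); the result has shape (C,),
    i.e. one real number per channel.
    """
    return x.mean(axis=(1, 2))

# Hypothetical input: 2 channels, each a 2x2 feature map
x = np.array([[[1.0, 2.0], [3.0, 4.0]],
              [[0.0, 0.0], [0.0, 8.0]]])
z = squeeze(x)  # -> [2.5, 2.0]
```

Each output value is simply the mean of its channel, so the result no longer depends on H and W.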

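Excitation: Dynamically generates a weight for each feature channel. Two fully connected layers form a bottleneck structure: the first reduces the channel dimension by a reduction ratio r and is followed by a ReLU, and the second restores the original dimension and is followed by a sigmoid. This models the correlations between channels and outputs as many weights as there are input channels.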
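The bottleneck described above can be sketched in NumPy as follows. This is a minimal illustration, not this repository's code: the function name `excitation`, the channel count `C = 8`, the ratio `r = 4`, and the random weights are all hypothetical stand-ins for parameters that a real network would learn.

```python
import numpy as np

def excitation(z, w1, b1, w2, b2):
    """Bottleneck of two fully connected layers: C -> C/r -> C.

    z:  (C,) squeezed channel descriptor
    w1: (C//r, C), w2: (C, C//r)
    Returns one weight in (0, 1) per channel.
    """
    hidden = np.maximum(0.0, w1 @ z + b1)  # FC + ReLU, reduces to C/r units
    logits = w2 @ hidden + b2              # FC, expands back to C units
    return 1.0 / (1.0 + np.exp(-logits))   # sigmoid gate

rng = np.random.default_rng(0)
C, r = 8, 4  # hypothetical channel count and reduction ratio
w1, b1 = rng.standard_normal((C // r, C)), np.zeros(C // r)
w2, b2 = rng.standard_normal((C, C // r)), np.zeros(C)
z = rng.standard_normal(C)
s = excitation(z, w1, b1, w2, b2)  # shape (8,)
```

The reduction to C/r units keeps the parameter count small, while the sigmoid lets several channels be emphasized at once rather than forcing a single winner as a softmax would.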

Scale: Each channel of the original feature map is multiplied by the corresponding normalized weight learned in the excitation step.
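Putting squeeze, excitation, and scale together, a complete SE block might look like the PyTorch module below. This is a sketch under stated assumptions: the class name `SEBlock`, the use of `bias=False` in the linear layers, and the default `reduction=16` (the ratio used in the original SE-Net paper) are choices made for this example, and this repository's actual module and the way it is wired into the YOLOv5 model definition may differ.

```python
import torch
import torch.nn as nn

class SEBlock(nn.Module):
    """Hypothetical Squeeze-and-Excitation block: squeeze -> excitation -> scale."""

    def __init__(self, channels: int, reduction: int = 16):
        super().__init__()
        hidden = max(1, channels // reduction)
        # Squeeze: (N, C, H, W) -> (N, C, 1, 1) via global average pooling
        self.pool = nn.AdaptiveAvgPool2d(1)
        # Excitation: bottleneck C -> C/r -> C with ReLU, then sigmoid
        self.fc = nn.Sequential(
            nn.Linear(channels, hidden, bias=False),
            nn.ReLU(inplace=True),
            nn.Linear(hidden, channels, bias=False),
            nn.Sigmoid(),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        n, c, _, _ = x.shape
        w = self.pool(x).view(n, c)      # one number per channel
        w = self.fc(w).view(n, c, 1, 1)  # one weight in (0, 1) per channel
        return x * w                     # scale: reweight each channel

se = SEBlock(64, reduction=16)
x = torch.randn(2, 64, 20, 20)
y = se(x)  # same shape as x: (2, 64, 20, 20)
```

Because the block preserves the input shape, it can in principle be inserted after any convolutional stage without changing the layers around it; only the channel count passed to the constructor has to match.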

Citation (CITATION.cff)

cff-version: 1.2.0
preferred-citation:
  type: software
  message: If you use YOLOv5, please cite it as below.
  authors:
  - family-names: Jocher
    given-names: Glenn
    orcid: "https://orcid.org/0000-0001-5950-6979"
  title: "YOLOv5 by Ultralytics"
  version: 7.0
  doi: 10.5281/zenodo.3908559
  date-released: 2020-5-29
  license: GPL-3.0
  url: "https://github.com/ultralytics/yolov5"

Dependencies

- Pillow >=7.1.2

- PyYAML >=5.3.1

- gitpython *

- ipython *

- matplotlib >=3.2.2

- numpy >=1.18.5

- opencv-python >=4.1.1

- pandas >=1.1.4

- psutil *

- requests >=2.23.0

- scipy >=1.4.1

- seaborn >=0.11.0

- tensorboard >=2.4.1

- thop >=0.1.1

- torchvision >=0.8.1

- tqdm >=4.64.0

- actions/cache v3 composite

- actions/checkout v3 composite

- actions/setup-python v4 composite

- actions/checkout v3 composite

- github/codeql-action/analyze v2 composite

- github/codeql-action/autobuild v2 composite

- github/codeql-action/init v2 composite

- actions/checkout v3 composite

- docker/build-push-action v3 composite

- docker/login-action v2 composite

- docker/setup-buildx-action v2 composite

- docker/setup-qemu-action v2 composite

- actions/first-interaction v1 composite

- actions/stale v6 composite

- actions/checkout v3 composite

- actions/setup-node v3 composite

- dephraiim/translate-readme main composite

- nvcr.io/nvidia/pytorch 22.11-py3 build

- gcr.io/google-appengine/python latest build

- Flask ==1.0.2

- gunicorn ==19.9.0

- pip ==21.1