llms-from-scratch

Science Score: 44.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ✓ CITATION.cff file: found

- ✓ codemeta.json file: found

- ✓ .zenodo.json file: found

- ○ DOI references

- ○ Academic publication links

- ○ Academic email domains

- ○ Institutional organization owner

- ○ JOSS paper metadata

- ○ Scientific vocabulary similarity: low similarity (13.1%) to scientific vocabulary

Repository

Basic Info

- Host: GitHub

- Owner: ua-datalab

- License: other

- Language: Jupyter Notebook

- Default Branch: main

- Size: 13.2 MB

Statistics

- Stars: 1

- Watchers: 3

- Forks: 0

- Open Issues: 0

- Releases: 0

Metadata Files

README.md

A clone of Sebastian Raschka's repository of the same name (cloned 12/08/2024).

Build a Large Language Model (From Scratch)

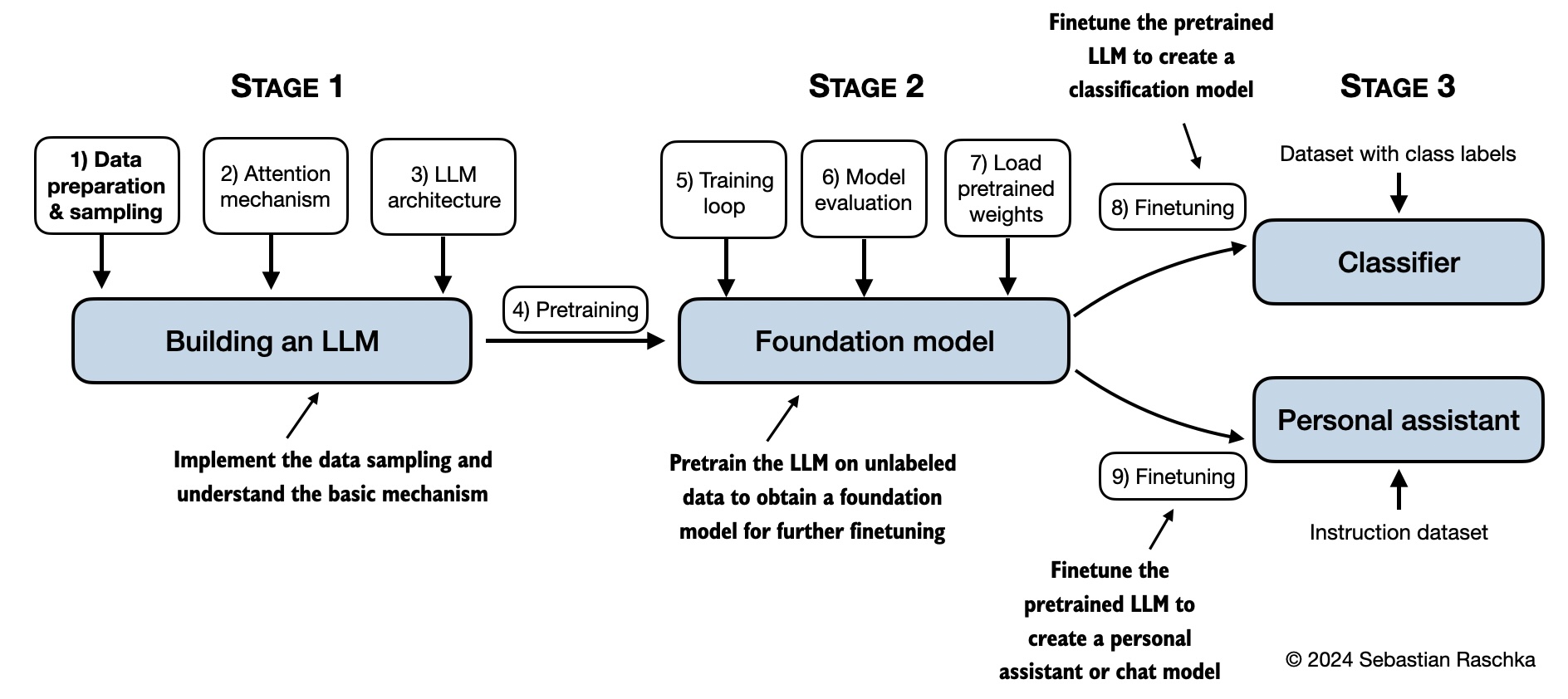

In Build a Large Language Model (From Scratch), you'll learn and understand how large language models (LLMs) work from the inside out by coding them from the ground up, step by step. In this book, I'll guide you through creating your own LLM, explaining each stage with clear text, diagrams, and examples.

The method described in this book for training and developing your own small-but-functional model for educational purposes mirrors the approach used in creating large-scale foundational models such as those behind ChatGPT. In addition, this book includes code for loading the weights of larger pretrained models for finetuning.

- Link to the official source code repository

- Link to the book at Manning (the publisher's website)

- Link to the book page on Amazon.com

- ISBN 9781633437166

Table of Contents

Please note that this README.md file is a Markdown (.md) file. If you have downloaded this code bundle from the Manning website and are viewing it on your local computer, I recommend using a Markdown editor or previewer for proper viewing. If you haven't installed a Markdown editor yet, MarkText is a good free option.

You can alternatively view this and other files on GitHub at https://github.com/rasbt/LLMs-from-scratch in your browser, which renders Markdown automatically.

> [!TIP]
> If you're seeking guidance on installing Python and Python packages and setting up your code environment, I suggest reading the README.md file located in the setup directory.

| Chapter Title | Main Code (for Quick Access) | All Code + Supplementary |
|---|---|---|
| Setup recommendations | - | - |
| Ch 1: Understanding Large Language Models | No code | - |
| Ch 2: Working with Text Data | - ch02.ipynb<br>- dataloader.ipynb (summary)<br>- exercise-solutions.ipynb | ./ch02 |
| Ch 3: Coding Attention Mechanisms | - ch03.ipynb<br>- multihead-attention.ipynb (summary)<br>- exercise-solutions.ipynb | ./ch03 |
| Ch 4: Implementing a GPT Model from Scratch | - ch04.ipynb<br>- gpt.py (summary)<br>- exercise-solutions.ipynb | ./ch04 |
| Ch 5: Pretraining on Unlabeled Data | - ch05.ipynb<br>- gpt_train.py (summary)<br>- gpt_generate.py (summary)<br>- exercise-solutions.ipynb | ./ch05 |
| Ch 6: Finetuning for Text Classification | - ch06.ipynb<br>- gpt_class_finetune.py<br>- exercise-solutions.ipynb | ./ch06 |
| Ch 7: Finetuning to Follow Instructions | - ch07.ipynb<br>- gpt_instruction_finetuning.py (summary)<br>- ollama_evaluate.py (summary)<br>- exercise-solutions.ipynb | ./ch07 |
| Appendix A: Introduction to PyTorch | - code-part1.ipynb<br>- code-part2.ipynb<br>- DDP-script.py<br>- exercise-solutions.ipynb | ./appendix-A |
| Appendix B: References and Further Reading | No code | - |
| Appendix C: Exercise Solutions | No code | - |
| Appendix D: Adding Bells and Whistles to the Training Loop | - appendix-D.ipynb | ./appendix-D |
| Appendix E: Parameter-efficient Finetuning with LoRA | - appendix-E.ipynb | ./appendix-E |

The mental model below summarizes the contents covered in this book.

Hardware Requirements

The code in the main chapters of this book is designed to run on conventional laptops within a reasonable timeframe and does not require specialized hardware. This approach ensures that a wide audience can engage with the material. Additionally, the code automatically utilizes GPUs if they are available. (Please see the setup doc for additional recommendations.)
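As a rough illustration of what "automatically uses a GPU if available" can look like in PyTorch, here is a minimal sketch. The helper name `pick_device` is hypothetical and not taken from the book's code, and the Apple-silicon (`mps`) branch is an assumption added for completeness; the chapter notebooks may select the device differently.

```python
def pick_device() -> str:
    """Return "cuda", "mps", or "cpu" depending on what PyTorch reports."""
    try:
        import torch  # imported lazily so the sketch still runs without PyTorch
    except ImportError:
        return "cpu"
    if torch.cuda.is_available():
        return "cuda"
    # Apple-silicon backend; guarded because older PyTorch builds lack it
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"
    return "cpu"


device = pick_device()
print(f"Using device: {device}")
```

With PyTorch installed, the returned string can be passed to `model.to(device)` and `tensor.to(device)` so that the same script runs unchanged on a laptop CPU or a GPU machine.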

Bonus Material

Several folders contain optional materials as a bonus for interested readers:

- Setup

- Chapter 2: Working with text data

- Chapter 3: Coding attention mechanisms

- Chapter 4: Implementing a GPT model from scratch

- Chapter 5: Pretraining on unlabeled data

  - Alternative Weight Loading from Hugging Face Model Hub using Transformers

  - Pretraining GPT on the Project Gutenberg Dataset

  - Adding Bells and Whistles to the Training Loop

  - Optimizing Hyperparameters for Pretraining

  - Building a User Interface to Interact With the Pretrained LLM

  - Converting GPT to Llama

  - Llama 3.2 From Scratch

  - Memory-efficient Model Weight Loading

- Chapter 6: Finetuning for classification

- Chapter 7: Finetuning to follow instructions

  - Dataset Utilities for Finding Near Duplicates and Creating Passive Voice Entries

  - Evaluating Instruction Responses Using the OpenAI API and Ollama

  - Generating a Dataset for Instruction Finetuning

  - Improving a Dataset for Instruction Finetuning

  - Generating a Preference Dataset with Llama 3.1 70B and Ollama

  - Direct Preference Optimization (DPO) for LLM Alignment

  - Building a User Interface to Interact With the Instruction Finetuned GPT Model

Questions, Feedback, and Contributing to This Repository

I welcome all sorts of feedback, best shared via the Manning Forum or GitHub Discussions. Likewise, if you have any questions or just want to bounce ideas off others, please don't hesitate to post these in the forum as well.

Please note that since this repository contains the code corresponding to a print book, I currently cannot accept contributions that would extend the contents of the main chapter code, as it would introduce deviations from the physical book. Keeping it consistent helps ensure a smooth experience for everyone.

Citation

If you find this book or code useful for your research, please consider citing it.

Chicago-style citation:

Raschka, Sebastian. Build A Large Language Model (From Scratch). Manning, 2024. ISBN: 978-1633437166.

BibTeX entry:

@book{build-llms-from-scratch-book,
  author    = {Sebastian Raschka},
  title     = {Build A Large Language Model (From Scratch)},
  publisher = {Manning},
  year      = {2024},
  isbn      = {978-1633437166},
  url       = {https://www.manning.com/books/build-a-large-language-model-from-scratch},
  github    = {https://github.com/rasbt/LLMs-from-scratch}
}

Owner

- Name: University of Arizona Data Lab

- Login: ua-datalab

- Kind: organization

- Location: United States of America

- Website: https://github.com/clizarraga-UAD7/DataScienceLab/wiki

- Repositories: 1

- Profile: https://github.com/ua-datalab

The University of Arizona Data Science Lab @ Data Science Institute

Citation (CITATION.cff)

cff-version: 1.2.0
message: "If you use this book or its accompanying code, please cite it as follows."
title: "Build A Large Language Model (From Scratch), Published by Manning, ISBN 978-1633437166"
abstract: "This book provides a comprehensive, step-by-step guide to implementing a ChatGPT-like large language model from scratch in PyTorch."
date-released: 2024-09-12
authors:
  - family-names: "Raschka"
    given-names: "Sebastian"
license: "Apache-2.0"
url: "https://www.manning.com/books/build-a-large-language-model-from-scratch"
repository-code: "https://github.com/rasbt/LLMs-from-scratch"
keywords:
  - large language models
  - natural language processing
  - artificial intelligence
  - PyTorch
  - machine learning
  - deep learning

GitHub Events

Total

- Watch event: 1

- Member event: 3

- Public event: 1

- Push event: 2

- Gollum event: 16

- Create event: 1

Last Year

- Watch event: 1

- Member event: 3

- Public event: 1

- Push event: 2

- Gollum event: 16

- Create event: 1

Dependencies

- actions/checkout v4 composite

- actions/setup-python v5 composite

- pytorch/pytorch 2.5.0-cuda12.4-cudnn9-runtime build

- requests *

- tqdm *

- transformers >=4.33.2

- thop *

- chainlit >=1.2.0

- blobfile >=3.0.0

- huggingface_hub >=0.24.7

- ipywidgets >=8.1.2

- safetensors >=0.4.4

- sentencepiece >=0.1.99

- pytest >=8.1.1 test

- transformers >=4.44.2 test

- scikit-learn >=1.3.0

- openai >=1.30.3

- scikit-learn >=1.3.1

- tqdm >=4.65.0

- jupyterlab >=4.0

- matplotlib >=3.7.1

- numpy >=1.25,<2.0

- pandas >=2.2.1

- psutil >=5.9.5

- tensorflow >=2.15.0

- tiktoken >=0.5.1

- torch >=2.0.1

- tqdm >=4.66.1
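To compare a local environment against the minimum versions listed above, a small standard-library check along the following lines may help. This is only a sketch: `MINIMUMS` copies a subset of the list for illustration, the helper names (`parse_version`, `meets_minimum`, `check`) are hypothetical, and upper bounds such as numpy's `<2.0` are deliberately ignored.

```python
import re
from importlib.metadata import PackageNotFoundError, version

# Minimum versions copied from the dependency list above (subset, for illustration)
MINIMUMS = {
    "torch": "2.0.1",
    "tiktoken": "0.5.1",
    "numpy": "1.25",
    "pandas": "2.2.1",
    "matplotlib": "3.7.1",
}


def parse_version(text: str) -> tuple:
    """Keep only the leading numeric components, e.g. "2.5.0+cu124" -> (2, 5, 0)."""
    parts = []
    for piece in text.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(installed: str, minimum: str) -> bool:
    """Compare numeric components, padding the shorter version with zeros."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check(minimums=MINIMUMS, lookup=version):
    """Return {package: status string} for each requirement."""
    report = {}
    for name, minimum in minimums.items():
        try:
            installed = lookup(name)
        except PackageNotFoundError:
            report[name] = "not installed"
            continue
        ok = meets_minimum(installed, minimum)
        report[name] = f"{installed} ({'ok' if ok else 'older than ' + minimum})"
    return report


for name, status in check().items():
    print(f"{name:12s} {status}")
```

The version parsing here is intentionally simple and treats pre-release tags as their base version; for stricter comparisons, the third-party `packaging` library's `Version` class is a more robust choice.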