ml-project-object-detection-for-autonomous-vehicles

https://github.com/dheerajreddy258/ml-project-object-detection-for-autonomous-vehicles

Science Score: 54.0%

This score indicates how likely this project is to be science-related based on various indicators:

-

✓CITATION.cff file

Found CITATION.cff file -

✓codemeta.json file

Found codemeta.json file -

✓.zenodo.json file

Found .zenodo.json file -

○DOI references

-

✓Academic publication links

Links to: zenodo.org -

○Academic email domains

-

○Institutional organization owner

-

○JOSS paper metadata

-

○Scientific vocabulary similarity

Low similarity (6.0%) to scientific vocabulary

Repository

Basic Info

- Host: GitHub

- Owner: dheerajreddy258

- License: gpl-3.0

- Language: Python

- Default Branch: master

- Size: 6.46 MB

Statistics

- Stars: 0

- Watchers: 1

- Forks: 0

- Open Issues: 0

- Releases: 0

Metadata Files

.github/README_cn.md

YOLOv5🚀是一个在COCO数据集上预训练的物体检测架构和模型系列,它代表了Ultralytics对未来视觉AI方法的公开研究,其中包含了在数千小时的研究和开发中所获得的经验和最佳实践。

文件

请参阅YOLOv5 Docs,了解有关训练、测试和部署的完整文件。

快速开始案例

安装

在[**Python>=3.7.0**](https://www.python.org/) 的环境中克隆版本仓并安装 [requirements.txt](https://github.com/ultralytics/yolov5/blob/master/requirements.txt),包括[**PyTorch>=1.7**](https://pytorch.org/get-started/locally/)。 ```bash git clone https://github.com/ultralytics/yolov5 # 克隆 cd yolov5 pip install -r requirements.txt # 安装 ```推理

YOLOv5 [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) 推理. [模型](https://github.com/ultralytics/yolov5/tree/master/models) 自动从最新YOLOv5 [版本](https://github.com/ultralytics/yolov5/releases)下载。 ```python import torch # 模型 model = torch.hub.load('ultralytics/yolov5', 'yolov5s') # or yolov5n - yolov5x6, custom # 图像 img = 'https://ultralytics.com/images/zidane.jpg' # or file, Path, PIL, OpenCV, numpy, list # 推理 results = model(img) # 结果 results.print() # or .show(), .save(), .crop(), .pandas(), etc. ```用 detect.py 进行推理

`detect.py` 在各种数据源上运行推理, 其会从最新的 YOLOv5 [版本](https://github.com/ultralytics/yolov5/releases) 中自动下载 [模型](https://github.com/ultralytics/yolov5/tree/master/models) 并将检测结果保存到 `runs/detect` 目录。 ```bash python detect.py --source 0 # 网络摄像头 img.jpg # 图像 vid.mp4 # 视频 path/ # 文件夹 'path/*.jpg' # glob 'https://youtu.be/Zgi9g1ksQHc' # YouTube 'rtsp://example.com/media.mp4' # RTSP, RTMP, HTTP 流 ```训练

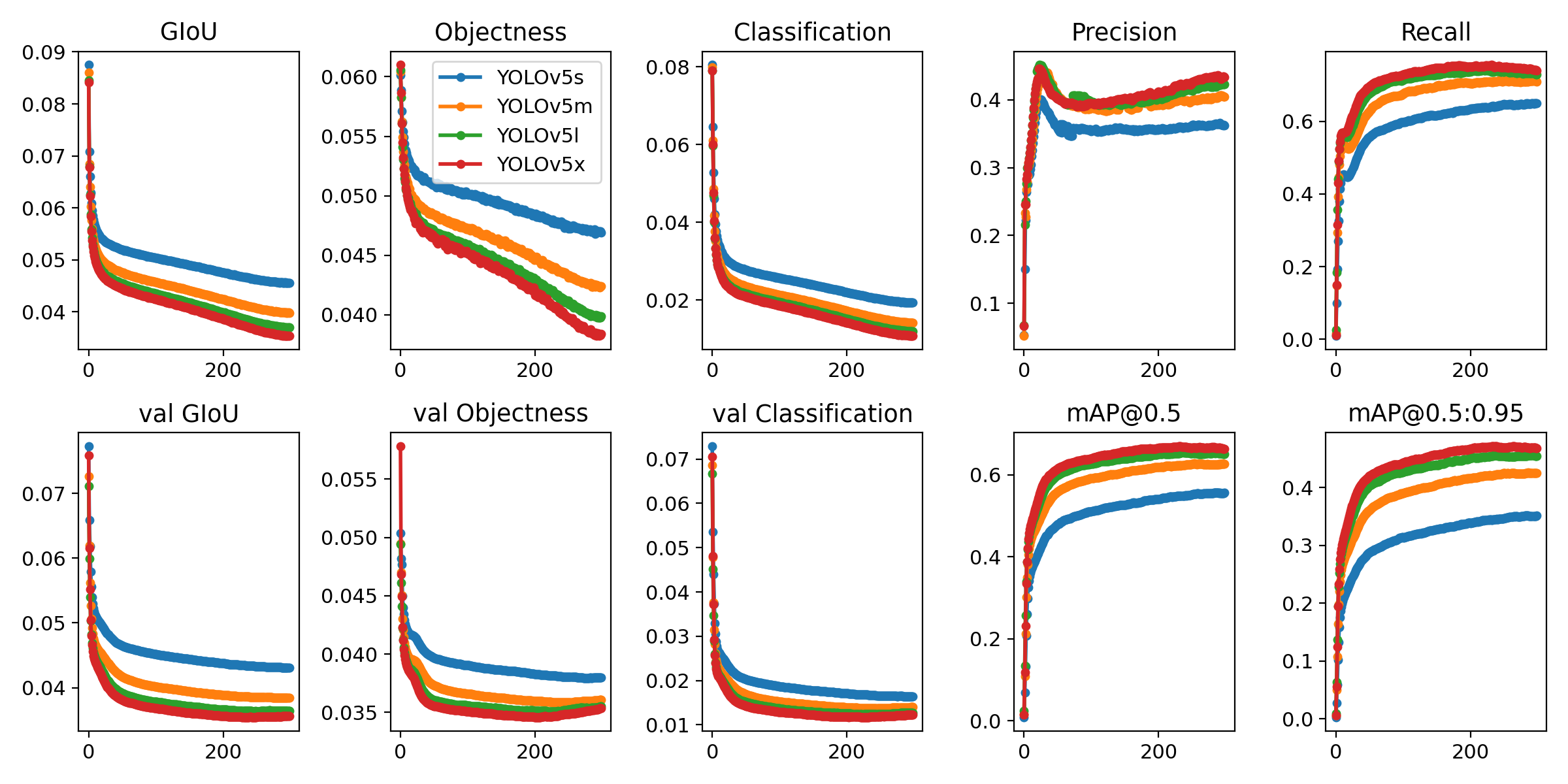

以下指令再现了 YOLOv5 [COCO](https://github.com/ultralytics/yolov5/blob/master/data/scripts/get_coco.sh) 数据集结果. [模型](https://github.com/ultralytics/yolov5/tree/master/models) 和 [数据集](https://github.com/ultralytics/yolov5/tree/master/data) 自动从最新的YOLOv5 [版本](https://github.com/ultralytics/yolov5/releases) 中下载。YOLOv5n/s/m/l/x的训练时间在V100 GPU上是 1/2/4/6/8天(多GPU倍速). 尽可能使用最大的 `--batch-size`, 或通过 `--batch-size -1` 来实现 YOLOv5 [自动批处理](https://github.com/ultralytics/yolov5/pull/5092). 批量大小显示为 V100-16GB。 ```bash python train.py --data coco.yaml --epochs 300 --weights '' --cfg yolov5n.yaml --batch-size 128 yolov5s 64 yolov5m 40 yolov5l 24 yolov5x 16 ```

教程

- [训练自定义数据集](https://github.com/ultralytics/yolov5/wiki/Train-Custom-Data) 🚀 推荐 - [获得最佳训练效果的技巧](https://github.com/ultralytics/yolov5/wiki/Tips-for-Best-Training-Results) ☘️ 推荐 - [多GPU训练](https://github.com/ultralytics/yolov5/issues/475) - [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) 🌟 新 - [TFLite, ONNX, CoreML, TensorRT 输出](https://github.com/ultralytics/yolov5/issues/251) 🚀 - [测试时数据增强 (TTA)](https://github.com/ultralytics/yolov5/issues/303) - [模型集成](https://github.com/ultralytics/yolov5/issues/318) - [模型剪枝/稀疏性](https://github.com/ultralytics/yolov5/issues/304) - [超参数进化](https://github.com/ultralytics/yolov5/issues/607) - [带有冻结层的迁移学习](https://github.com/ultralytics/yolov5/issues/1314) - [架构概要](https://github.com/ultralytics/yolov5/issues/6998) 🌟 新 - [使用Weights & Biases 记录实验](https://github.com/ultralytics/yolov5/issues/1289) - [Roboflow:数据集,标签和主动学习](https://github.com/ultralytics/yolov5/issues/4975) 🌟 新 - [使用ClearML 记录实验](https://github.com/ultralytics/yolov5/tree/master/utils/loggers/clearml) 🌟 新 - [Deci 平台](https://github.com/ultralytics/yolov5/wiki/Deci-Platform) 🌟 新Integrations

|Roboflow|ClearML ⭐ NEW|Comet ⭐ NEW|Deci ⭐ NEW| |:-:|:-:|:-:|:-:| |Label and export your custom datasets directly to YOLOv5 for training with Roboflow|Automatically track, visualize and even remotely train YOLOv5 using ClearML (open-source!)|Free forever, Comet lets you save YOLOv5 models, resume training, and interactively visualise and debug predictions|Automatically compile and quantize YOLOv5 for better inference performance in one click at Deci|

Ultralytics HUB

Ultralytics HUB is our ⭐ NEW no-code solution to visualize datasets, train YOLOv5 🚀 models, and deploy to the real world in a seamless experience. Get started for Free now!

为什么选择 YOLOv5

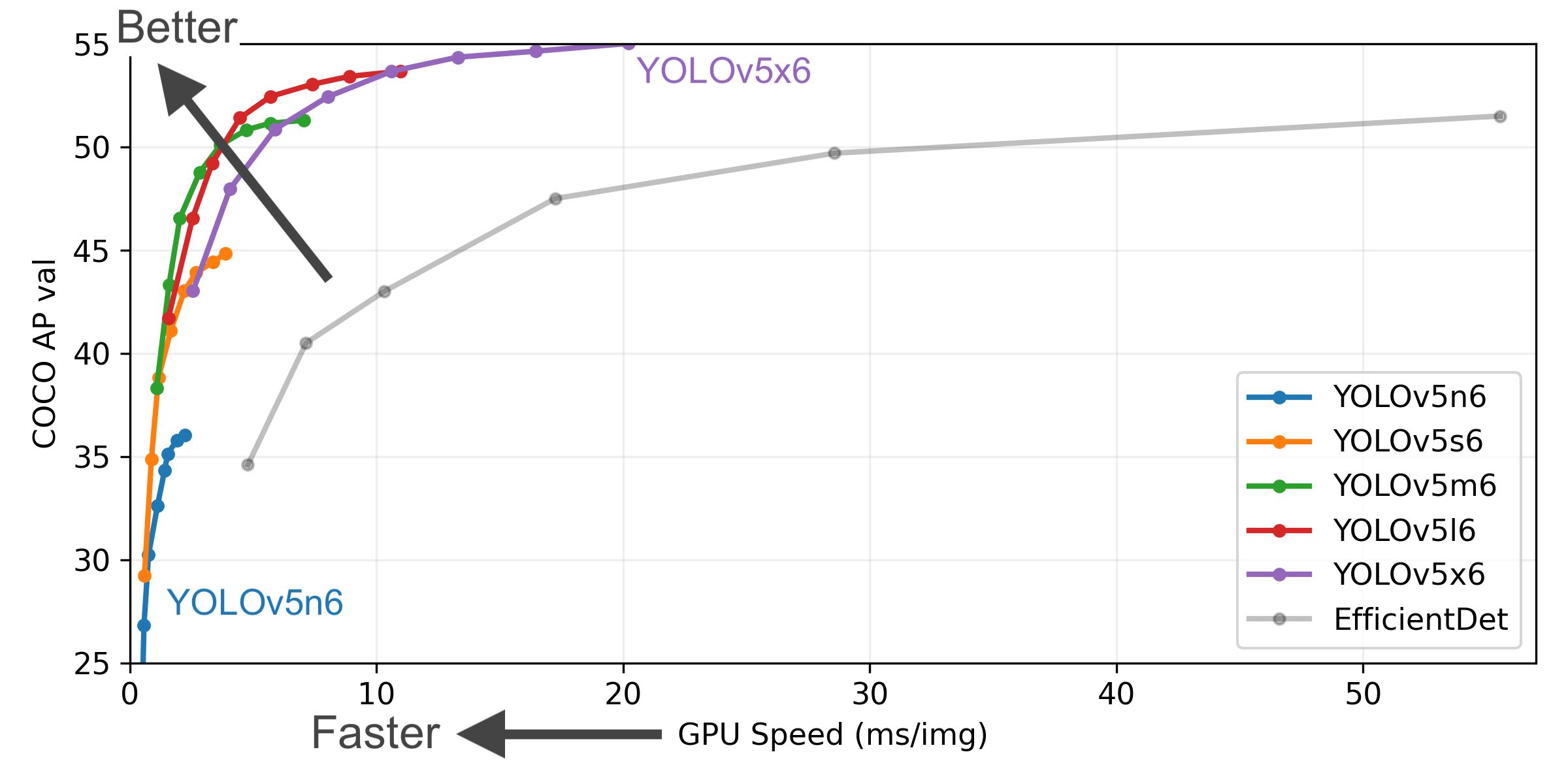

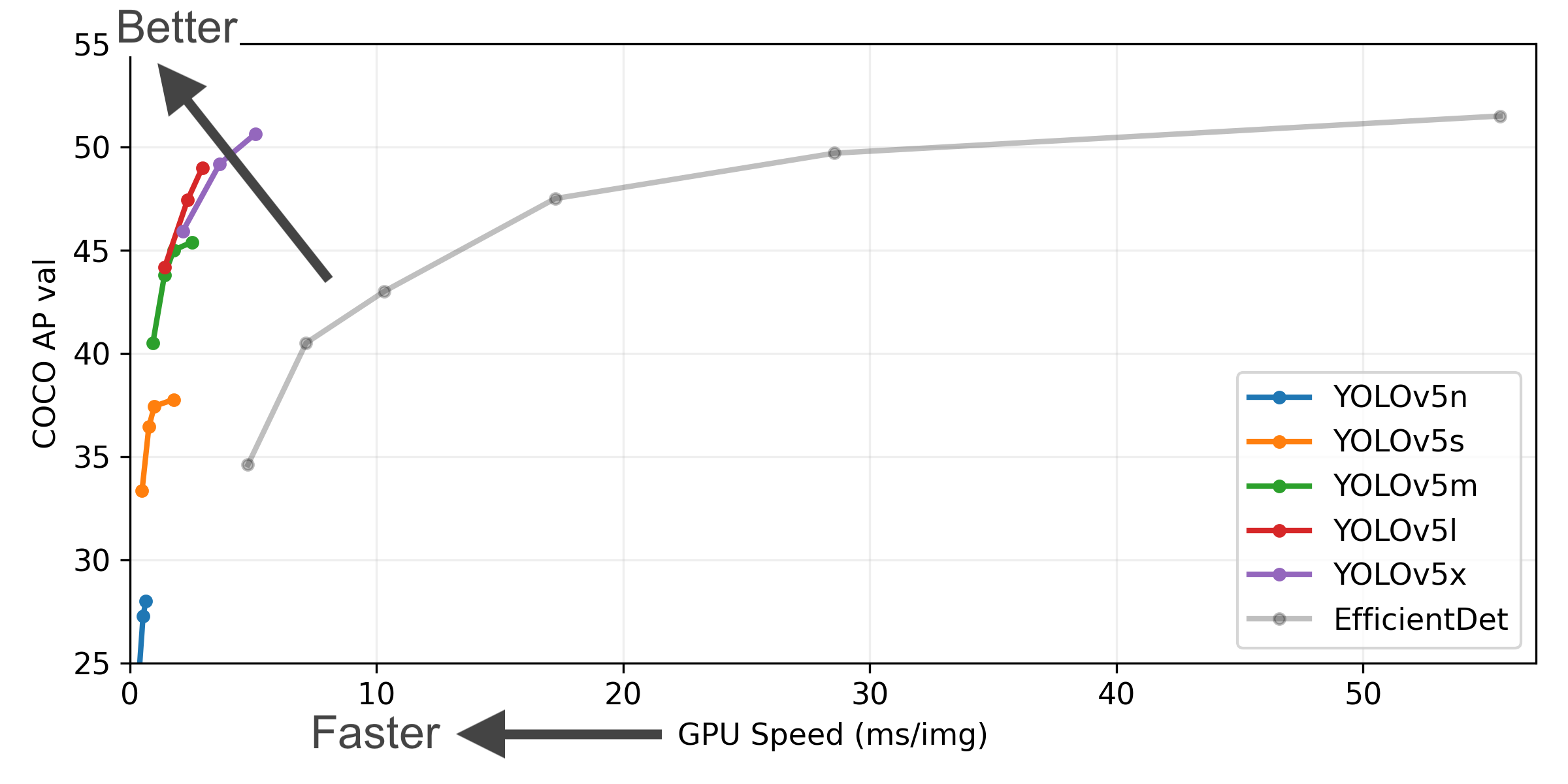

YOLOv5-P5 640 图像 (点击扩展)

图片注释 (点击扩展)

- **COCO AP val** 表示 mAP@0.5:0.95 在5000张图像的[COCO val2017](http://cocodataset.org)数据集上,在256到1536的不同推理大小上测量的指标。 - **GPU Speed** 衡量的是在 [COCO val2017](http://cocodataset.org) 数据集上使用 [AWS p3.2xlarge](https://aws.amazon.com/ec2/instance-types/p3/) V100实例在批量大小为32时每张图像的平均推理时间。 - **EfficientDet** 数据来自 [google/automl](https://github.com/google/automl) ,批量大小设置为 8。 - 复现 mAP 方法: `python val.py --task study --data coco.yaml --iou 0.7 --weights yolov5n6.pt yolov5s6.pt yolov5m6.pt yolov5l6.pt yolov5x6.pt`预训练检查点

| 模型 | 规模

(像素) | mAP验证

0.5:0.95 | mAP验证

0.5 | 速度

CPU b1

(ms) | 速度

V100 b1

(ms) | 速度

V100 b32

(ms) | 参数

(M) | 浮点运算

@640 (B) |

|------------------------------------------------------------------------------------------------------|-----------------------|-------------------------|--------------------|------------------------------|-------------------------------|--------------------------------|--------------------|------------------------|

| YOLOv5n | 640 | 28.0 | 45.7 | 45 | 6.3 | 0.6 | 1.9 | 4.5 |

| YOLOv5s | 640 | 37.4 | 56.8 | 98 | 6.4 | 0.9 | 7.2 | 16.5 |

| YOLOv5m | 640 | 45.4 | 64.1 | 224 | 8.2 | 1.7 | 21.2 | 49.0 |

| YOLOv5l | 640 | 49.0 | 67.3 | 430 | 10.1 | 2.7 | 46.5 | 109.1 |

| YOLOv5x | 640 | 50.7 | 68.9 | 766 | 12.1 | 4.8 | 86.7 | 205.7 |

| | | | | | | | | |

| YOLOv5n6 | 1280 | 36.0 | 54.4 | 153 | 8.1 | 2.1 | 3.2 | 4.6 |

| YOLOv5s6 | 1280 | 44.8 | 63.7 | 385 | 8.2 | 3.6 | 12.6 | 16.8 |

| YOLOv5m6 | 1280 | 51.3 | 69.3 | 887 | 11.1 | 6.8 | 35.7 | 50.0 |

| YOLOv5l6 | 1280 | 53.7 | 71.3 | 1784 | 15.8 | 10.5 | 76.8 | 111.4 |

| YOLOv5x6

+ TTA | 1280

1536 | 55.0

55.8 | 72.7

72.7 | 3136

- | 26.2

- | 19.4

- | 140.7

- | 209.8

- |

表格注释 (点击扩展)

- 所有检查点都以默认设置训练到300个时期. Nano和Small模型用 [hyp.scratch-low.yaml](https://github.com/ultralytics/yolov5/blob/master/data/hyps/hyp.scratch-low.yaml) hyps, 其他模型使用 [hyp.scratch-high.yaml](https://github.com/ultralytics/yolov5/blob/master/data/hyps/hyp.scratch-high.yaml). - **mAPval** 值是 [COCO val2017](http://cocodataset.org) 数据集上的单模型单尺度的值。复现方法: `python val.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65` - 使用 [AWS p3.2xlarge](https://aws.amazon.com/ec2/instance-types/p3/) 实例对COCO val图像的平均速度。不包括NMS时间(~1 ms/img)

复现方法: `python val.py --data coco.yaml --img 640 --task speed --batch 1` - **TTA** [测试时数据增强](https://github.com/ultralytics/yolov5/issues/303) 包括反射和比例增强.

复现方法: `python val.py --data coco.yaml --img 1536 --iou 0.7 --augment`

分类 ⭐ 新

YOLOv5发布的v6.2版本 支持训练,验证,预测和输出分类模型!这使得训练分类器模型非常简单。点击下面开始尝试!

分类检查点 (点击展开)

我们在ImageNet上使用了4xA100的实例训练YOLOv5-cls分类模型90个epochs,并以相同的默认设置同时训练了ResNet和EfficientNet模型来进行比较。我们将所有的模型导出到ONNX FP32进行CPU速度测试,又导出到TensorRT FP16进行GPU速度测试。最后,为了方便重现,我们在[Google Colab Pro](https://colab.research.google.com/signup)上进行了所有的速度测试。 | 模型 | 规模

(像素) | 准确度

第一 | 准确度

前五 | 训练

90 epochs

4xA100 (小时) | 速度

ONNX CPU

(ms) | 速度

TensorRT V100

(ms) | 参数

(M) | 浮点运算

@224 (B) | |----------------------------------------------------------------------------------------------------|-----------------------|------------------|------------------|----------------------------------------------|--------------------------------|-------------------------------------|--------------------|------------------------| | [YOLOv5n-cls](https://github.com/ultralytics/yolov5/releases/download/v6.2/yolov5n-cls.pt) | 224 | 64.6 | 85.4 | 7:59 | **3.3** | **0.5** | **2.5** | **0.5** | | [YOLOv5s-cls](https://github.com/ultralytics/yolov5/releases/download/v6.2/yolov5s-cls.pt) | 224 | 71.5 | 90.2 | 8:09 | 6.6 | 0.6 | 5.4 | 1.4 | | [YOLOv5m-cls](https://github.com/ultralytics/yolov5/releases/download/v6.2/yolov5m-cls.pt) | 224 | 75.9 | 92.9 | 10:06 | 15.5 | 0.9 | 12.9 | 3.9 | | [YOLOv5l-cls](https://github.com/ultralytics/yolov5/releases/download/v6.2/yolov5l-cls.pt) | 224 | 78.0 | 94.0 | 11:56 | 26.9 | 1.4 | 26.5 | 8.5 | | [YOLOv5x-cls](https://github.com/ultralytics/yolov5/releases/download/v6.2/yolov5x-cls.pt) | 224 | **79.0** | **94.4** | 15:04 | 54.3 | 1.8 | 48.1 | 15.9 | | | | [ResNet18](https://github.com/ultralytics/yolov5/releases/download/v6.2/resnet18.pt) | 224 | 70.3 | 89.5 | **6:47** | 11.2 | 0.5 | 11.7 | 3.7 | | [ResNet34](https://github.com/ultralytics/yolov5/releases/download/v6.2/resnet34.pt) | 224 | 73.9 | 91.8 | 8:33 | 20.6 | 0.9 | 21.8 | 7.4 | | [ResNet50](https://github.com/ultralytics/yolov5/releases/download/v6.2/resnet50.pt) | 224 | 76.8 | 93.4 | 11:10 | 23.4 | 1.0 | 25.6 | 8.5 | | [ResNet101](https://github.com/ultralytics/yolov5/releases/download/v6.2/resnet101.pt) | 224 | 78.5 | 94.3 | 17:10 | 42.1 | 1.9 | 44.5 | 15.9 | | | | [EfficientNet_b0](https://github.com/ultralytics/yolov5/releases/download/v6.2/efficientnet_b0.pt) | 224 | 75.1 | 92.4 | 13:03 | 12.5 | 1.3 | 5.3 | 1.0 | | [EfficientNet_b1](https://github.com/ultralytics/yolov5/releases/download/v6.2/efficientnet_b1.pt) | 224 | 76.4 | 93.2 | 17:04 | 14.9 | 1.6 | 7.8 | 1.5 | | [EfficientNet_b2](https://github.com/ultralytics/yolov5/releases/download/v6.2/efficientnet_b2.pt) | 224 | 76.6 | 93.4 | 17:10 | 15.9 | 1.6 | 9.1 | 1.7 | | [EfficientNet_b3](https://github.com/ultralytics/yolov5/releases/download/v6.2/efficientnet_b3.pt) | 224 | 77.7 | 94.0 | 19:19 | 18.9 | 1.9 | 12.2 | 2.4 |

表格注释 (点击扩展)

- 所有检查点都被SGD优化器训练到90 epochs, `lr0=0.001` 和 `weight_decay=5e-5`, 图像大小为224,全为默认设置。运行数据记录于 https://wandb.ai/glenn-jocher/YOLOv5-Classifier-v6-2。 - **准确度** 值为[ImageNet-1k](https://www.image-net.org/index.php)数据集上的单模型单尺度。

通过`python classify/val.py --data ../datasets/imagenet --img 224`进行复制。 - 使用Google [Colab Pro](https://colab.research.google.com/signup) V100 High-RAM实例得出的100张推理图像的平均**速度**。

通过 `python classify/val.py --data ../datasets/imagenet --img 224 --batch 1`进行复制。 - 用`export.py`**导出**到FP32的ONNX和FP16的TensorRT。

通过 `python export.py --weights yolov5s-cls.pt --include engine onnx --imgsz 224`进行复制。

分类使用实例 (点击展开)

### 训练 YOLOv5分类训练支持自动下载MNIST, Fashion-MNIST, CIFAR10, CIFAR100, Imagenette, Imagewoof和ImageNet数据集,并使用`--data` 参数. 打个比方,在MNIST上使用`--data mnist`开始训练。 ```bash # 单GPU python classify/train.py --model yolov5s-cls.pt --data cifar100 --epochs 5 --img 224 --batch 128 # 多-GPU DDP python -m torch.distributed.run --nproc_per_node 4 --master_port 1 classify/train.py --model yolov5s-cls.pt --data imagenet --epochs 5 --img 224 --device 0,1,2,3 ``` ### 验证 在ImageNet-1k数据集上验证YOLOv5m-cl的准确性: ```bash bash data/scripts/get_imagenet.sh --val # download ImageNet val split (6.3G, 50000 images) python classify/val.py --weights yolov5m-cls.pt --data ../datasets/imagenet --img 224 # validate ``` ### 预测 用提前训练好的YOLOv5s-cls.pt去预测bus.jpg: ```bash python classify/predict.py --weights yolov5s-cls.pt --data data/images/bus.jpg ``` ```python model = torch.hub.load('ultralytics/yolov5', 'custom', 'yolov5s-cls.pt') # load from PyTorch Hub ``` ### 导出 导出一组训练好的YOLOv5s-cls, ResNet和EfficientNet模型到ONNX和TensorRT: ```bash python export.py --weights yolov5s-cls.pt resnet50.pt efficientnet_b0.pt --include onnx engine --img 224 ```贡献

我们重视您的意见! 我们希望给大家提供尽可能的简单和透明的方式对 YOLOv5 做出贡献。开始之前请先点击并查看我们的 贡献指南,填写YOLOv5调查问卷 来向我们发送您的经验反馈。真诚感谢我们所有的贡献者!

联系

关于YOLOv5的漏洞和功能问题,请访问 GitHub Issues。商业咨询或技术支持服务请访问https://ultralytics.com/contact。

Owner

- Name: Pullela Dheeraj Reddy

- Login: dheerajreddy258

- Kind: user

- Repositories: 1

- Profile: https://github.com/dheerajreddy258

Citation (CITATION.cff)

cff-version: 1.2.0

preferred-citation:

type: software

message: If you use YOLOv5, please cite it as below.

authors:

- family-names: Jocher

given-names: Glenn

orcid: "https://orcid.org/0000-0001-5950-6979"

title: "YOLOv5 by Ultralytics"

version: 7.0

doi: 10.5281/zenodo.3908559

date-released: 2020-5-29

license: GPL-3.0

url: "https://github.com/ultralytics/yolov5"

GitHub Events

Total

Last Year

Dependencies

- actions/cache v3 composite

- actions/checkout v3 composite

- actions/setup-python v4 composite

- actions/checkout v3 composite

- github/codeql-action/analyze v2 composite

- github/codeql-action/autobuild v2 composite

- github/codeql-action/init v2 composite

- actions/checkout v3 composite

- docker/build-push-action v3 composite

- docker/login-action v2 composite

- docker/setup-buildx-action v2 composite

- docker/setup-qemu-action v2 composite

- actions/first-interaction v1 composite

- actions/stale v6 composite

- nvcr.io/nvidia/pytorch 22.11-py3 build

- gcr.io/google-appengine/python latest build

- Pillow >=7.1.2

- PyYAML >=5.3.1

- gitpython *

- ipython *

- matplotlib >=3.2.2

- numpy >=1.18.5

- opencv-python >=4.1.1

- pandas >=1.1.4

- psutil *

- requests >=2.23.0

- scipy >=1.4.1

- seaborn >=0.11.0

- tensorboard >=2.4.1

- thop >=0.1.1

- torchvision >=0.8.1

- tqdm >=4.64.0

- Flask ==1.0.2

- gunicorn ==19.9.0

- pip ==21.1