Recent Releases of openspg-kag

openspg-kag - Version 0.7.1

Version 0.7.1 (2025-04-25)

Dear Open Source Community,

We are excited to officially announce the release of version 0.7.1 for OpenSPG/KAG! This release represents the collaborative efforts of both our core technical team and contributors from the global open source community. The update focuses on improving user experience, optimizing system performance, and addressing key issues based on your feedback. Below are the highlights of this release:

🛠️ Fixes & Optimization Highlights:

- Resolved source code compilation errors: Fixed `NoSuchBeanDefinitionException` issues during `openspg` compilation to ensure a smooth development experience.

- Enhanced error diagnostics: Resolved the issue where timeout errors for `vectorizer` calls couldn't be traced, enabling quicker troubleshooting.

- Improved task performance: Optimized small task construction times by adjusting the scheduling interval, allowing asynchronous tasks to trigger immediately without waiting.

- Enhanced container stability: Fixed the issue where container restarts caused scheduling states to hang or crash.

- Reasoning Q&A Stream output optimization: Improved the responsiveness and fluidity of streaming outputs for Reasoning Q&A scenarios.

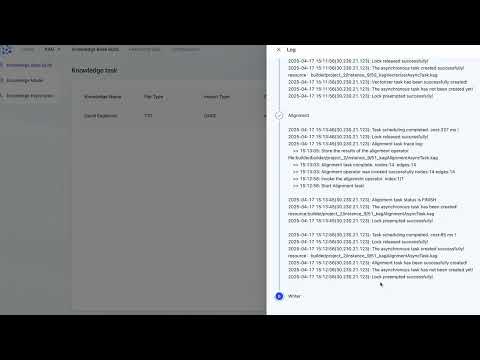

- Task details page upgrades: Added auto-refresh capabilities for logs and time cost statistics, allowing users to monitor system and task performance in real time.

- Simplified task splitting configuration: Disabled the semantic segmentation option to avoid excessive token consumption caused by accidental use.

- Website updates: Added a new partner showcase section to the homepage and included a redirect to the `OpenKG` official website to foster collaborative opportunities.

- Bug fixes and product optimization: Implemented multiple fixes and refinements to address reported issues, enhancing product stability.

🌟 Join Our Community

The OpenSPG community thrives on collective wisdom and the shared mission of advancing open-source technology. We warmly invite you to contribute, share your insights, and collaborate on exciting projects. For more details about this release and user guides, please visit our official website.

Thank you for your continued support and enthusiasm for OpenSPG! If you have questions or suggestions, feel free to reach out through our community channels or via email.

Version 0.7.1 (2025-04-25)

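Dear open source community partners, we are very pleased to announce the release of OpenSPG/KAG version 0.7.1! This version is the result of joint work by our technical team and community developers. It aims to improve the user experience, optimize performance, and resolve a number of issues reported by users. The key changes in this release are summarized below:

🛠️ Fixes & Optimizations

- Fixed source compilation: Resolved the `NoSuchBeanDefinitionException` error that could occur when compiling `openspg`, so developers can build the project smoothly.

- Diagnostics: Resolved the issue where timeout exceptions from `vectorizer` calls were not surfaced, helping users locate the cause of failures quickly.

- Task performance: Reduced the build time of small tasks by adjusting the task scheduling interval to a more efficient frequency, and ensured asynchronous tasks trigger immediately without waiting.

- Image stability: Resolved the issue where the scheduling state hung when the image restarted, making the runtime environment more stable.

- Streaming output for reasoning Q&A: Significantly reduced the slowness and stuttering of streaming output in reasoning Q&A scenarios, giving a smoother user experience.

- Task details page: Added automatic log refresh and task time-cost statistics to help users monitor system status and task performance in real time.

- Build task splitting: Took the semantic splitting option offline to avoid heavy token consumption caused by accidental use.

- Website updates: Added a partner information section to the homepage and a link to the `OpenKG` website to promote resource sharing.

- Bug fixes and product optimization: Made multiple fixes and detail refinements for issues reported by the community, improving overall product stability.

🌟 Join Our Community

Our open source community brings together knowledge from many contributors and drives open source technology forward. We sincerely invite you to help build the project and explore better solutions together. For more information about this update and usage guides, please visit the official website.

Thank you for your interest in and support of OpenSPG! If you have any questions or suggestions, feel free to contact us through the community or by email.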

- Java

Published by andylau-55 about 1 year ago

openspg-kag - Version 0.7

Version 0.7 (2025-04-17)

1、Overview

We are very pleased to announce the release of KAG 0.7. This update continues our commitment to increasing the consistency, rigor, and precision of large language models leveraging external knowledge bases, while introducing several important new features.

Firstly, we have completely refactored the framework. The update adds support for both static and iterative task planning modes, along with a more rigorous hierarchical knowledge mechanism during the reasoning phase. Additionally, the new multi-executor extension mechanism and MCP protocol integration enable horizontal scaling of various symbolic solvers (such as math-executor and cypher-executor). These improvements not only help users quickly build knowledge-augmented applications to validate innovative ideas or domain-specific solutions, but also support continuous optimization of KAG Solver's capabilities, thereby further enhancing reasoning rigor in vertical applications.

Secondly, we have comprehensively optimized the product experience: during the reasoning phase, we introduced two modes, "Simple Mode" and "Deep Reasoning", and added support for streaming reasoning output, significantly reducing user wait times. Particularly noteworthy is the new "Lightweight Construction" mode, which is intended to support large-scale business use of KAG and to address the community's most pressing concern, the high cost of knowledge construction. As shown in the KAG-V0.7LC column of Figure 1, we tested a hybrid approach in which a 7B model handles knowledge construction and a 72B model handles knowledge-based question answering. Results on the two_wiki, hotpotqa, and musique benchmarks declined only slightly, by 1.20%, 1.90%, and 3.11%, respectively, while the token cost (based on Aliyun Bailian pricing) of constructing a 100,000-character document fell from ¥4.63 to ¥0.479, an 89% reduction that saves users substantial time and money. Additionally, we will release a KAG-specific extraction model and a distributed offline batch construction version, continuously compressing model size and improving construction throughput to achieve daily construction capabilities for millions or even tens of millions of documents in a single scenario.
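The cost figures above can be checked with a few lines of arithmetic. This is only a sketch: the helper name `cost_reduction` is made up for illustration, and the two prices are the ones quoted in the paragraph.

```python
def cost_reduction(before: float, after: float) -> float:
    """Return the fractional reduction when a cost drops from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Prices quoted in the release notes, in yuan per 100,000-character document.
full_cost = 4.63
lightweight_cost = 0.479

reduction = cost_reduction(full_cost, lightweight_cost)
print(f"Token cost reduction: {reduction:.1%}")
```

The computed value is a little under 90%, which matches the roughly 89% reduction quoted in the notes.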

Finally, to better promote business applications, technological advancement, and community exchange for knowledge-augmented LLMs, we have added an open_benchmark directory at the root level of the KAG repository. This directory includes reproduction methods for various datasets to help users replicate and improve KAG's performance across different tasks. Moving forward, we will continue to expand with more vertical scenario task datasets to provide users with richer resources.

Beyond these framework and product optimizations, we've fixed several bugs in both reasoning and construction phases. This update uses Qwen2.5-72B as the base model, completing effect alignment across various RAG frameworks and partial KG datasets. For overall benchmark results, please refer to Figures 1 and 2, with detailed rankings available in the open_benchmark section.

Figure 1. Performance of KAG V0.7 and baselines on Multi-hop QA benchmarks

Figure 2. Performance of KAG V0.7 and baselines (from OpenKG OneEval) on _Knowledge based QA benchmarks_

2、Framework Enhancements

2.1、Hybrid Static-Dynamic Task Planning

This release optimizes the implementation of the KAG-Solver framework, providing more flexible architectural support for "retrieval during reasoning" workflows, multi-scenario algorithm experimentation, and integration of LLMs with symbolic engines (via the MCP protocol).

The framework's Static/Iterative Planner transforms complex problems into directed acyclic graphs (DAGs) of interconnected Executors, enabling step-by-step resolution based on dependency relationships. We've implemented built-in Pipeline support for both Static and Iterative Planners, including a predefined NaiveRAG Pipeline, so developers can flexibly customize their own solver chains.
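As a rough illustration of resolving a plan step by step along its dependencies, the sketch below runs a small DAG of executors in topological order using the Python standard library. It is not KAG's planner: `run_plan`, the executor names, and the placeholder outputs are all hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List

# Hypothetical executor type: receives the results of its dependencies
# and returns its own result.
Executor = Callable[[Dict[str, str]], str]


def run_plan(executors: Dict[str, Executor],
             depends_on: Dict[str, List[str]]) -> Dict[str, str]:
    """Run every executor after all of the executors it depends on."""
    graph = {name: depends_on.get(name, []) for name in executors}
    results: Dict[str, str] = {}
    for name in TopologicalSorter(graph).static_order():
        inputs = {dep: results[dep] for dep in graph[name]}
        results[name] = executors[name](inputs)
    return results


# A three-step plan: each step consumes the output of the previous one.
executors = {
    "find_entity": lambda inputs: "entity",
    "find_attribute": lambda inputs: f"attribute of {inputs['find_entity']}",
    "generate_answer": lambda inputs: f"answer from {inputs['find_attribute']}",
}
depends_on = {
    "find_attribute": ["find_entity"],
    "generate_answer": ["find_attribute"],
}
results = run_plan(executors, depends_on)
print(results["generate_answer"])
```

A static plan fixes the whole graph up front, as here; an iterative planner would instead decide the next step after seeing earlier results.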

2.2、Extensible Symbolic Solvers

Leveraging the FunctionCall capability of LLMs, we have optimized the design of symbolic solvers (Executors) so that solvers are matched more sensibly during complex problem planning. This release includes built-in solvers such as kag_hybrid_executor, math_executor, and cypher_executor, while providing a flexible extension mechanism that allows developers to define custom solvers for personalized requirements.
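One common way to build such an extension mechanism is a name-based registry that routes a function-call style request to the matching solver. The sketch below shows the idea only and is not KAG's API: `register_executor`, `dispatch`, the toy `math_executor` body, and `unit_converter` are hypothetical.

```python
from typing import Callable, Dict

# Hypothetical registry mapping a solver name to a callable.
EXECUTORS: Dict[str, Callable[[str], str]] = {}


def register_executor(name: str):
    """Decorator that registers a solver under the given name."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        EXECUTORS[name] = fn
        return fn
    return decorator


@register_executor("math_executor")
def math_executor(expression: str) -> str:
    # Toy stand-in: only handles "a + b".
    left, right = expression.split("+")
    return str(float(left) + float(right))


@register_executor("unit_converter")  # a custom solver added by a developer
def unit_converter(kilometres: str) -> str:
    return f"{float(kilometres) * 1000:.0f} m"


def dispatch(call: dict) -> str:
    """Route a request shaped like {"name": ..., "arguments": ...}."""
    name = call["name"]
    if name not in EXECUTORS:
        raise ValueError(f"no executor registered under {name!r}")
    return EXECUTORS[name](call["arguments"])


print(dispatch({"name": "math_executor", "arguments": "2 + 3"}))
print(dispatch({"name": "unit_converter", "arguments": "1.5"}))
```

In a real system the `call` dictionary would come from the LLM's function-call output rather than being written by hand.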

2.3、Optimized Retrieval/Reasoning Strategies

Using the enhanced KAG-Solver framework, we have rewritten the logic of kag_hybrid_executor to implement a more rigorous knowledge layering mechanism during reasoning. Based on business requirements for knowledge precision and following KAG's knowledge hierarchy definition, the system now retrieves three knowledge layers in order: a schema-constrained layer, a schema-free layer, and a raw-context layer. It then reasons over the retrieved knowledge to generate answers.
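The layered lookup can be pictured as querying the most precise layer first and moving to looser layers only as needed. The sketch below makes that assumption explicit: the early-stop rule (`min_hits`), the function `layered_retrieve`, and the stub retrievers are hypothetical, and the actual executor may combine layers differently.

```python
from typing import Callable, List, Tuple

# Hypothetical retriever type: maps a query to a list of evidence strings.
Retriever = Callable[[str], List[str]]


def layered_retrieve(query: str,
                     layers: List[Tuple[str, Retriever]],
                     min_hits: int = 1) -> List[Tuple[str, str]]:
    """Query layers in priority order and tag each hit with its layer name."""
    evidence: List[Tuple[str, str]] = []
    for layer_name, retrieve in layers:
        evidence.extend((layer_name, hit) for hit in retrieve(query))
        # Assumption: stop once enough evidence has been collected,
        # so more precise layers take priority over looser ones.
        if len(evidence) >= min_hits:
            break
    return evidence


# Stub retrievers standing in for the three layers described above.
layers = [
    ("schema_constrained", lambda q: []),
    ("schema_free", lambda q: [f"fact about {q}"]),
    ("raw_context", lambda q: [f"text chunk mentioning {q}"]),
]
print(layered_retrieve("example question", layers))
```

Tagging each hit with its layer lets the later reasoning step weigh evidence by how strictly it was structured.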

2.4、MCP Protocol Integration

This KAG release is compatible with the MCP protocol, enabling external data sources and symbolic solvers to be incorporated into the KAG framework via MCP. We have included a baidu_map_mcp example in the example directory for developers' reference.

3、OpenBenchmark

To better facilitate academic exchange and accelerate the adoption and technological advancement of large language models with external knowledge bases in enterprise settings, KAG has released more detailed benchmark reproduction steps in this version, along with open-sourcing all code and data. This will enable developers and researchers to easily reproduce and align results across various datasets.

To quantify reasoning performance more accurately, we have adopted multiple evaluation metrics, including EM (Exact Match), F1, and LLM Accuracy. In addition to existing datasets such as TwoWiki, Musique, and HotpotQA, this update introduces the OpenKG OneEval knowledge graph QA datasets (including AffairQA and PRQA) to evaluate the capabilities of both the **cypher_executor** and KAG's default framework.
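For readers unfamiliar with EM and F1, the sketch below uses the answer normalization and token-overlap definitions commonly applied to extractive QA benchmarks. It is an assumption that the open_benchmark scripts follow the same conventions; the function names are illustrative.

```python
import re
import string
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, gold: str) -> float:
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(gold))


def f1_score(prediction: str, gold: str) -> float:
    """Harmonic mean of token-level precision and recall."""
    pred_tokens = normalize(prediction).split()
    gold_tokens = normalize(gold).split()
    overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)


print(exact_match("The Eiffel Tower", "eiffel tower"))
print(f1_score("eiffel tower in paris", "eiffel tower"))
```

EM rewards only exact answers, while F1 gives partial credit for overlapping tokens, which is why the F1 column in the tables below is consistently higher than the EM column.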

Building benchmarks is a time-consuming and complex endeavor. In future work, we will continue to expand benchmark datasets and provide domain-specific solutions to further enhance the accuracy, rigor, and consistency of large models in leveraging external knowledge. We warmly invite community members to collaborate with us in advancing the KAG framework's capabilities and real-world applications across diverse tasks.

3.1、Multi-hop QA Dataset

3.1.1、Benchmark

- musique

| Method | em | f1 | llm_accuracy |
| --- | --- | --- | --- |
| Naive Gen | 0.033 | 0.074 | 0.083 |
| Naive RAG | 0.248 | 0.357 | 0.384 |
| HippoRAGV2 | 0.289 | 0.404 | 0.452 |
| PIKE-RAG | 0.383 | 0.498 | 0.565 |
| KAG-V0.6.1 | 0.363 | 0.481 | 0.547 |
| KAG-V0.7LC | 0.379 | 0.513 | 0.560 |
| KAG-V0.7 | 0.385 | 0.520 | 0.579 |

- hotpotqa

| Method | em | f1 | llm_accuracy |
| --- | --- | --- | --- |
| Naive Gen | 0.223 | 0.313 | 0.342 |
| Naive RAG | 0.566 | 0.704 | 0.762 |
| HippoRAGV2 | 0.557 | 0.694 | 0.807 |
| PIKE-RAG | 0.558 | 0.686 | 0.787 |
| KAG-V0.6.1 | 0.599 | 0.745 | 0.841 |
| KAG-V0.7LC | 0.600 | 0.744 | 0.828 |
| KAG-V0.7 | 0.603 | 0.748 | 0.844 |

- twowiki

| Method | em | f1 | llm_accuracy |
| --- | --- | --- | --- |
| Naive Gen | 0.199 | 0.310 | 0.382 |
| Naive RAG | 0.448 | 0.512 | 0.573 |
| HippoRAGV2 | 0.542 | 0.618 | 0.684 |
| PIKE-RAG | 0.63 | 0.72 | 0.81 |
| KAG-V0.6.1 | 0.666 | 0.755 | 0.811 |
| KAG-V0.7LC | 0.683 | 0.769 | 0.826 |
| KAG-V0.7 | 0.684 | 0.770 | 0.836 |

3.1.2、Parameters for each method

| Method | dataset | LLM (Build/Reason) | embed | param |
| --- | --- | --- | --- | --- |
| Naive Gen | 10k docs and 1k questions provided by HippoRAG | qwen2.5-72B | bge-m3 | none |
| Naive RAG | same as above | qwen2.5-72B | bge-m3 | numdocs: 10 |
| HippoRAGV2 | same as above | qwen2.5-72B | bge-m3 | retrievaltopk=200; linkingtopk=5; maxqasteps=3; qatopk=5; graphtype=factsandsimpassagenodeunidirectional; embeddingbatchsize=8 |
| PIKE-RAG | same as above | qwen2.5-72B | bge-m3 | taggingllmtemperature: 0.7; qallmtemperature: 0.0; chunkretrievek: 8; chunkretrievescorethreshold: 0.5; atomretrievek: 16; atomicretrievescorethreshold: 0.2; maxnumquestion: 5; numparallel: 5 |
| KAG-V0.6.1 | same as above | qwen2.5-72B | bge-m3 | refer to the kag_config.yaml files in each subdirectory under https://github.com/OpenSPG/KAG/tree/v0.6/kag/examples |
| KAG-V0.7LC | same as above | Build: qwen2.5-7B; Reason: qwen2.5-72B | bge-m3 | refer to the kag_config.yaml files in each subdirectory under https://github.com/OpenSPG/KAG/tree/master/kag/open_benchmark |
| KAG-V0.7 | same as above | qwen2.5-72B | bge-m3 | refer to the kag_config.yaml files in each subdirectory under https://github.com/OpenSPG/KAG/tree/master/kag/open_benchmark |

3.2、Structured Datasets

PeopleRelQA (Person Relationship QA) and AffairQA (Government Affairs QA) are datasets provided by Alibaba Tianchi Competition and Zhejiang University respectively on the OpenKG OneEval benchmark. KAG delivers a streamlined implementation paradigm for vertical domain applications through its "semantic modeling + structured graph construction + NL2Cypher retrieval" approach. Moving forward, we will continue optimizing structured data QA performance by enhancing the integration between large language models and knowledge engines.

The OpenKG OneEval Benchmark primarily evaluates large language models' (LLMs) capabilities in comprehending and utilizing diverse knowledge domains. As documented in OpenKG's official description, the benchmark employs relatively simple retrieval strategies that may introduce noise in recalled results, while simultaneously assessing LLMs' robustness when processing imperfect or redundant knowledge. KAG's performance improvements in these scenarios stem from its effective retrieval strategies that ensure strong relevance between retrieved content and query intent.

In this update, KAG has validated its retrieval and reasoning capabilities on traditional knowledge graph tasks using the AffairQA and PRQA datasets. Future developments will focus on advancing schema standardization and reasoning framework alignment, along with releasing additional evaluation metrics to support broader application scenarios.

- PeopleRelQA

| Method | em | f1 | llm_accuracy | Methodology | Metric Sources |
| --- | --- | --- | --- | --- | --- |
| deepseek-v3(OpenKG oneEval) | - | 2.60% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| qwen2.5-72B(OpenKG oneEval) | - | 2.50% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| GPT-4o(OpenKG oneEval) | - | 3.20% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| QWQ-32B(OpenKG oneEval) | - | 3.00% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| Grok 3(OpenKG oneEval) | - | 4.70% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| KAG-V0.7 | 45.5% | 86.6% | 84.8% | Custom PRQA Pipeline with Cypher Solver Based on KAG Framework | Ant Group KAG Team |

- AffairQA

| Method | em | f1 | llm_accuracy | Methodology | Metric Sources |
| --- | --- | --- | --- | --- | --- |
| deepseek-v3 | - | 42.50% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| qwen2.5-72B | - | 45.00% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| GPT-4o | - | 41.00% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| QWQ-32B | - | 45.00% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| Grok 3 | - | 45.50% | - | Dense Retrieval + LLM Generation | OpenKG WeChat |
| KAG-V0.7 | 77.5% | 83.1% | 88.2% | Custom PRQA Pipeline with Cypher Solver Based on KAG Framework | Ant Group KAG Team |

4、Product and platform optimization

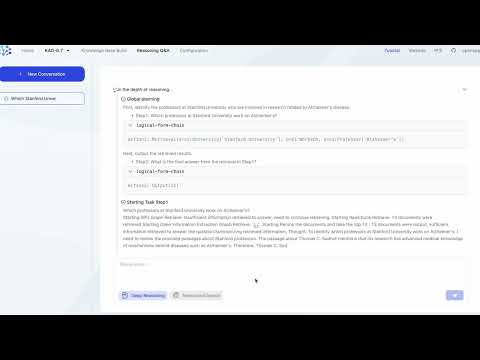

This update enhances the knowledge Q&A product experience. Users can refer to the KAG User Manual and access our demo files under the Quick Start -> Product Mode section to reproduce the results shown in the following video.

4.1、Enhanced Q&A Experience

By optimizing the planning, execution, and generation capabilities of the KAG-Solver framework—leveraging Qwen2.5-72B and DeepSeek-V3 models—the system now achieves deep reasoning performance comparable to DeepSeek-R1. Three key features have been introduced:

- Streaming output for dynamic delivery of reasoning results

- Auto-rendering of Markdown-formatted graph indices

- Intelligent citation linking between generated content and source references

4.2、Dual-Mode Retrieval

The new Deep Reasoning Toggle allows users to balance answer accuracy against computational costs by enabling/disabling advanced reasoning as needed. (Note: Web-augmented search is currently in testing—stay tuned for updates in future KAG releases.)

4.3、Indexing Infrastructure Upgrades

- Data Import

  - Expanded structured data support for CSV/ODPS/SLS sources

  - Optimized ingestion pipelines for improved usability

- Hybrid Processing

  - Unified handling of structured and unstructured data

  - Enhanced task management via job scheduling, execution logging, and data sampling for diagnostics

5、Roadmap

In upcoming iterations, we remain committed to enhancing the ability of large models to use external knowledge bases, and to achieving bidirectional enhancement and organic integration between large models and symbolic knowledge. This effort aims to steadily improve the factual accuracy, rigor, and coherence of reasoning and question answering in specialized scenarios. We will also continue to release updates that raise the upper limits of these capabilities and advance their adoption in vertical domains.

6、Acknowledgments

This release addresses several issues in the hierarchical retrieval module, and we extend our sincere gratitude to the community developers who reported these problems.

The framework upgrade has received tremendous support from the following experts and colleagues, to whom we are deeply grateful:

- Tongji University: Prof. Haofen Wang, Prof. Meng Wang

- Institute of Computing Technology, CAS: Dr. Long Bai

- Hunan KeChuang Information: R&D Expert Ling Liu

- Open Source Community: Senior Developer Yunpeng Li

- Bank of Communications: R&D Engineer Chenxing Gao

Version 0.7 (2025-04-17)

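1、Overview

We are officially releasing KAG 0.7. This update continues to improve the consistency, rigor, and precision with which large models use knowledge bases for reasoning and question answering, and introduces several important features.

First, we have completely refactored the framework. It adds support for two task planning modes, static and iterative, and implements a more rigorous knowledge layering mechanism for the reasoning phase. In addition, the new multi-executor extension mechanism and MCP protocol integration let users scale out across multiple symbolic solvers (such as math-executor and cypher-executor). These improvements help users quickly build applications backed by external knowledge bases to validate new ideas or domain solutions, and also let users keep optimizing KAG Solver, further improving reasoning rigor in vertical-domain applications.

Second, we have comprehensively optimized the product experience. The reasoning phase adds two modes, "Simple Mode" and "Deep Reasoning", and supports streaming reasoning output, which significantly shortens user wait times. Notably, to better support large-scale business use of KAG and to respond to the community's biggest concern, the high cost of knowledge construction, this release provides a "Lightweight Construction" mode. As shown in the KAG-V0.7LC column of Figure 1, we tested a hybrid setup in which a 7B model performs knowledge construction and a 72B model performs question answering. Results on the two_wiki, hotpotqa, and musique leaderboards dropped only slightly, by 1.20%, 1.90%, and 3.11%, while the token cost of constructing a 100,000-character document (based on Aliyun Bailian pricing) fell from ¥4.63 to ¥0.479, an 89% reduction that saves users substantial time and money. We will also release a KAG-specific extraction model and a distributed offline batch construction version, continuing to shrink model size and raise construction throughput, with the goal of daily construction capacity of millions or even tens of millions of documents in a single scenario.

Finally, to better promote business applications, technical progress, and community exchange around large models with external knowledge bases, we have added an open_benchmark directory at the top level of the KAG repository. The directory contains reproduction methods for each dataset, helping users reproduce and improve KAG's results on various tasks. We will keep adding task datasets for more vertical scenarios to give users richer resources.

Beyond the framework and product optimizations above, we have fixed a number of bugs in the reasoning and construction phases. This update uses Qwen2.5-72B as the base model and aligns results across the various RAG frameworks and some KG datasets. For the overall leaderboard results, see Figures 1 and 2; details are in the open_benchmark section.

Figure 1. Performance of KAG V0.7 and baselines on Multi-hop QA benchmarks

Figure 2. Performance of KAG V0.7 and baselines (from OpenKG OneEval) on _Knowledge based QA benchmarks_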

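2、Framework Optimization

2.1、Task Planning Combining Static and Dynamic Modes

This release optimizes the implementation of the KAG-Solver framework, providing more flexible framework support for "retrieval during reasoning", "multi-scenario algorithm experimentation", and "combining large models with symbolic engines (based on the MCP protocol)".

With the Static/Iterative Planner, a complex problem can be converted into a directed acyclic graph (DAG) of multiple Executors and solved step by step according to their dependencies. The framework includes built-in Pipeline implementations for the Static/Iterative Planner and a predefined NaiveRAG Pipeline, making it easy for developers to customize their own solving chains.

2.2、Support for Extensible Symbolic Solvers

Building on LLM support for FunctionCall, we have optimized the design of symbolic solvers (Executors) so that the appropriate solver is matched more sensibly when planning complex problems. This update includes built-in solvers such as kag_hybrid_executor, math_executor, and cypher_executor, and provides a flexible extension mechanism that lets developers define new solvers for their own needs.

2.3、Explicit Knowledge Layering with Optimized Layered Retrieval and Reasoning Strategies

Based on the optimized KAG-Solver framework, we have rewritten the logic of kag_hybrid_executor to implement a more rigorous knowledge layering mechanism for the reasoning phase. Depending on how much knowledge precision the business scenario requires, and following KAG's definition of knowledge layers, the system retrieves three layers of knowledge in order: a schema-constraint based layer, a schema-free based layer, and the raw context. It then reasons over them to generate the answer.

2.4、Embracing the MCP Protocol

This KAG release is compatible with the MCP protocol and supports bringing external data sources and external symbolic solvers into the KAG framework through MCP. The example directory includes a built-in baidu_map_mcp example for developers' reference.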

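3、OpenBenchmark

To better promote academic exchange and to accelerate enterprise adoption and technical progress of large models with external knowledge bases, this KAG release publishes more detailed benchmark reproduction steps and open-sources all code and data. This makes it easier for developers and researchers to reproduce and align results on each dataset. To quantify reasoning performance more accurately, we use several evaluation metrics, including EM (Exact Match), F1, and LLM Accuracy. In addition to the existing TwoWiki, Musique, and HotpotQA datasets, this update adds the OpenKG OneEval knowledge graph QA datasets (such as AffairQA and PRQA), which are used to validate the **cypher_executor** and KAG's default framework respectively.

Building benchmarks is time-consuming and complex work. Going forward, we will keep adding benchmark datasets and provide solutions for different domains, further improving the accuracy, rigor, and consistency with which large models use external knowledge. We also sincerely invite community members to take part and work with us to improve the KAG framework's capabilities and real-world adoption across tasks.

3.1、Multi-hop Factual QA Datasets

3.1.1、Benchmark

- musique

| Method | em | f1 | llm_accuracy |
| --- | --- | --- | --- |
| Naive Gen | 0.033 | 0.074 | 0.083 |
| Naive RAG | 0.248 | 0.357 | 0.384 |
| HippoRAGV2 | 0.289 | 0.404 | 0.452 |
| PIKE-RAG | 0.383 | 0.498 | 0.565 |
| KAG-V0.6.1 | 0.363 | 0.481 | 0.547 |
| KAG-V0.7LC | 0.379 | 0.513 | 0.560 |
| KAG-V0.7 | 0.385 | 0.520 | 0.579 |

- hotpotqa

| Method | em | f1 | llm_accuracy |
| --- | --- | --- | --- |
| Naive Gen | 0.223 | 0.313 | 0.342 |
| Naive RAG | 0.566 | 0.704 | 0.762 |
| HippoRAGV2 | 0.557 | 0.694 | 0.807 |
| PIKE-RAG | 0.558 | 0.686 | 0.787 |
| KAG-V0.6.1 | 0.599 | 0.745 | 0.841 |
| KAG-V0.7LC | 0.600 | 0.744 | 0.828 |
| KAG-V0.7 | 0.603 | 0.748 | 0.844 |

- twowiki

| Method | em | f1 | llm_accuracy |
| --- | --- | --- | --- |
| Naive Gen | 0.199 | 0.310 | 0.382 |
| Naive RAG | 0.448 | 0.512 | 0.573 |
| HippoRAGV2 | 0.542 | 0.618 | 0.684 |
| PIKE-RAG | 0.63 | 0.72 | 0.81 |
| KAG-V0.6.1 | 0.666 | 0.755 | 0.811 |
| KAG-V0.7LC | 0.683 | 0.769 | 0.826 |
| KAG-V0.7 | 0.684 | 0.770 | 0.836 |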

3.1.2、Parameter configuration for each method

| Method | Dataset | Base model (build/reason) | Embedding model | Parameters |
| --- | --- | --- | --- | --- |
| Naive Gen | 10k docs and 1k questions provided by the HippoRAG paper | qwen2.5-72B | bge-m3 | none |
| Naive RAG | same as above | qwen2.5-72B | bge-m3 | numdocs: 10 |
| HippoRAGV2 | same as above | qwen2.5-72B | bge-m3 | retrievaltopk=200; linkingtopk=5; maxqasteps=3; qatopk=5; graphtype=factsandsimpassagenodeunidirectional; embeddingbatchsize=8 |
| PIKE-RAG | same as above | qwen2.5-72B | bge-m3 | taggingllmtemperature: 0.7; qallmtemperature: 0.0; chunkretrievek: 8; chunkretrievescorethreshold: 0.5; atomretrievek: 16; atomicretrievescorethreshold: 0.2; maxnumquestion: 5; numparallel: 5 |
| KAG-V0.6.1 | same as above | qwen2.5-72B | bge-m3 | see kag_config.yaml in each subdirectory of examples at https://github.com/OpenSPG/KAG/tree/v0.6 |
| KAG-V0.7LC | same as above | Build: qwen2.5-7B; QA: qwen2.5-72B | bge-m3 | see kag_config.yaml in each subdirectory of open_benchmark at https://github.com/OpenSPG/KAG |
| KAG-V0.7 | same as above | qwen2.5-72B | bge-m3 | see kag_config.yaml in each subdirectory of open_benchmark at https://github.com/OpenSPG/KAG |

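3.2、Structured Datasets

PeopleRelQA (person relationship QA) and AffairQA (government affairs QA) are datasets on the OpenKG OneEval leaderboard provided by the Alibaba Cloud Tianchi competition and Zhejiang University respectively. Through "semantic modeling + structured graph construction + NL2Cypher retrieval", KAG offers a concise adoption pattern for vertical-domain applications. Going forward, we will keep optimizing structured data QA by focusing on the combination of large models and knowledge engines.

The OpenKG OneEval leaderboard focuses on evaluating how well large language models (LLMs) understand and use various kinds of knowledge. According to OpenKG's official description, the leaderboard uses a relatively simple strategy for knowledge retrieval, which may introduce noise into the recalled results, and it also evaluates the robustness of LLMs when faced with imperfect or redundant knowledge. KAG's metric improvements in these scenarios come from effective retrieval strategies that keep the retrieved results relevant to the question.

In this update, KAG validated its retrieval and reasoning capabilities for traditional knowledge graph tasks on the AffairQA and PRQA datasets. In the future, KAG will further advance schema standardization and alignment of the reasoning framework, and will publish more test metrics to support a wider range of application scenarios.

- PeopleRelQA (person relationship QA)

| Method | em | f1 | llm_accuracy | Methodology | Metric Sources |
| --- | --- | --- | --- | --- | --- |
| deepseek-v3(OpenKG oneEval) | - | 2.60% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| qwen2.5-72B(OpenKG oneEval) | - | 2.50% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| GPT-4o(OpenKG oneEval) | - | 3.20% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| QWQ-32B(OpenKG oneEval) | - | 3.00% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| Grok 3(OpenKG oneEval) | - | 4.70% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| KAG-V0.7 | 45.5% | 86.6% | 84.8% | Custom AffairQA pipeline + cypher_solver based on the KAG framework | Ant Group KAG Team |

- AffairQA (government affairs information QA)

| Method | em | f1 | llm_accuracy | Methodology | Metric Provider |
| --- | --- | --- | --- | --- | --- |
| deepseek-v3 | - | 42.50% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| qwen2.5-72B | - | 45.00% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| GPT-4o | - | 41.00% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| QWQ-32B | - | 45.00% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| Grok 3 | - | 45.50% | - | Dense Retrieval + LLM Generation | OpenKG WeChat official account |
| KAG-V0.7 | 77.5% | 83.1% | 88.2% | Custom AffairQA pipeline based on the KAG framework | Ant Group KAG Team |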

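4、Product and Platform Optimization

This update improves the knowledge Q&A product experience. Users can visit the KAG User Manual and, in the Quick Start -> Product Mode section, obtain our corpus files to reproduce the results shown in the following video.

4.1、Improved Q&A Experience

By optimizing the planning, execution, and generation functions of the KAG-Solver framework, applications based on the Qwen2.5-72B and DeepSeek-V3 models can achieve deep reasoning results comparable to DeepSeek-R1. On top of this, the product adds three capabilities: streaming output of reasoning results, automatic rendering of graph indices in Markdown format, and intelligent linking between generated content and citations of the original sources.

4.2、Support for Deep Reasoning and Ordinary Retrieval

A new deep reasoning switch lets users turn deep reasoning on or off as needed to balance answer accuracy against compute consumption. Web search is currently being tested; please watch for updates in later versions of the KAG framework.

4.3、Improved Index Construction

This update improves structured data import, supporting import from multiple sources such as CSV, ODPS, and SLS, and streamlines the data loading flow for a better user experience. "Structured" and "unstructured" data can be processed together to meet diverse needs. Task management for knowledge construction has also been strengthened, with job scheduling, execution logs, and data sampling to make issues easier to trace and analyze.

5、Roadmap

In upcoming iterations, we remain committed to improving the ability of large models to use external knowledge bases, achieving bidirectional enhancement and organic integration between large models and symbolic knowledge, and steadily improving the factuality, rigor, and consistency of reasoning and question answering in specialized scenarios. We will also keep releasing updates that raise the upper limit of these capabilities and advance adoption in vertical domains.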

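6、Acknowledgments

This release fixes a number of issues in the hierarchical retrieval module, and we especially thank the community developers who reported them.

This framework upgrade received strong support from the following experts and colleagues, to whom we are deeply grateful:

- Tongji University: Prof. Haofen Wang, Prof. Meng Wang

- Institute of Computing Technology, CAS: Dr. Long Bai

- Hunan KeChuang Information: R&D Expert Ling Liu

- Open Source Community: Senior Developer Yunpeng Li

- Bank of Communications: R&D Engineer Chenxing Gao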

- Java

Published by andylau-55 about 1 year ago

openspg-kag - Version 0.6

Version 0.6 (2025-01-07)

On January 7, 2025, OpenSPG officially released version 0.6, bringing updates across multiple areas, including domain knowledge mounting, vertical domain schema management, visual knowledge exploration, and support for summary generation tasks. In terms of user experience, it offers a mechanism for resuming knowledge base tasks from breakpoints, introduces a user login and permission system, and optimizes task scheduling for building processes. In developer mode, it supports configuring different models for different stages and enables schema-constraint mode for extraction, significantly enhancing the system's flexibility, usability, performance, and security. This release provides users with a more powerful knowledge management platform that adapts to diverse application scenarios.

🌟 New Features

Support for Summary Generation Tasks

- Native support for abstractive summarization tasks without sacrificing multi-hop factual reasoning accuracy. On the CSQA dataset, while comprehensiveness, diversity, and empowerment metrics are slightly lower than LightRAG (-1.2/10), the factual accuracy metric is better than LightRAG (+0.1/10). On multi-hop question answering datasets such as HotpotQA, TwoWiki, and MuSiQue, since LightRAG and GraphRAG do not provide a factual QA evaluation entry, the EM metric using the default entry is close to 0. For quantitative evaluation results, please refer to `examples/csqa/README.md` in the KAG code repository and follow the steps to reproduce.

Domain Schema Management

- The product provides SPG schema management capabilities, allowing users to optimize knowledge base construction and inference Q&A performance by customizing schemas.

Knowledge Exploration

- Added a knowledge exploration feature to enable visual query and analysis of knowledge base data, and provided an HTTP API for integration with other systems.

Support for Mounting Domain Knowledge in KAG-Builder (Developer Mode)

- In developer mode, the system supports injecting domain knowledge (domain vocabulary, relationships between terms) into the knowledge base, which can significantly improve knowledge base construction and inference Q&A performance (with a 10%+ improvement in the medical domain).

Adding Knowledge Alignment Component to the KAG-Builder Pipeline

- KAG-Builder provides a default knowledge alignment component that includes features such as filtering out invalid data and linking similar entities. This optimizes the structure and data quality of the graph.

⚙️ User Experience Optimizations

Resumable Tasks

- Provide resumable capabilities for knowledge base construction tasks at the file level and chunk level in both product mode and developer mode, to reduce the time and token consumption caused by full re-runs after task failures.
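A simple way to picture chunk-level resumption is a checkpoint that records which chunks have already been processed, so a rerun skips them. The sketch below assumes a JSON file as the checkpoint store; the function name and file layout are hypothetical and say nothing about how KAG persists task state.

```python
import json
from pathlib import Path
from typing import Callable, Iterable


def process_with_checkpoint(chunk_ids: Iterable[str],
                            handle: Callable[[str], None],
                            checkpoint: Path) -> int:
    """Process only chunks not yet recorded in the checkpoint file.

    Returns the number of chunks handled in this run.
    """
    done = set(json.loads(checkpoint.read_text())) if checkpoint.exists() else set()
    processed = 0
    for chunk_id in chunk_ids:
        if chunk_id in done:
            continue  # finished in an earlier run, skip the model call
        handle(chunk_id)
        done.add(chunk_id)
        # Persist after every chunk so a crash loses at most one chunk of work.
        checkpoint.write_text(json.dumps(sorted(done)))
        processed += 1
    return processed
```

Calling the function a second time with the same checkpoint path only handles chunks that were added or that failed before being recorded, which is where the time and token savings come from.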

User Login & Permission System

- Implement a user login and permission system to prevent unauthorized access and operations on the knowledge base data.

Optimized Knowledge Base Construction Task Scheduling

- Provide database-based knowledge base construction task scheduling to avoid task anomalies or interruptions after container restarts.

Support for Configuring Different Models at Different Stages (Developer Mode)

- The system provides a component management mechanism based on a registry, allowing users to instantiate component objects via configuration files. This supports users in developing and embedding custom components into the KAG-Builder and KAG-Solver workflows. Additionally, it enables the configuration of different-sized models at different stages of the workflow, thereby enhancing the overall reasoning and question-answering performance.

Optimization of Layout Analysis for Markdown, PDF, and Word Files

- For Markdown, PDF, and Word files, the system prioritizes dividing the content into chunks based on the file's sections. This ensures that the content within each chunk is more cohesive.
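For Markdown, section-based chunking can be approximated by splitting at heading lines. This is a minimal sketch under that assumption; `split_by_sections` is a hypothetical helper, it ignores headings inside fenced code blocks, and it does not cover PDF or Word layout analysis.

```python
import re
from typing import List, Tuple

HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def split_by_sections(markdown: str) -> List[Tuple[str, str]]:
    """Split Markdown text into (section title, section body) chunks."""
    chunks: List[Tuple[str, str]] = []
    title, body = "", []
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            # Close the previous section before starting a new one.
            if title or any(part.strip() for part in body):
                chunks.append((title, "\n".join(body).strip()))
            title, body = match.group(2).strip(), []
        else:
            body.append(line)
    if title or any(part.strip() for part in body):
        chunks.append((title, "\n".join(body).strip()))
    return chunks


doc = "# Intro\nhello\n\n## Setup\nstep one\nstep two\n"
for section_title, section_body in split_by_sections(doc):
    print(section_title, "->", repr(section_body))
```

Keeping each section together means a chunk rarely mixes unrelated topics, which is the cohesion benefit described above.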

Global Configuration and Knowledge Base Configuration

- Provide global configuration for the knowledge base, allowing unified settings for storage engines, generation models, and representation model access information.

Support for Schema-Constrained Extraction and Linking (Developer Mode)

- Provide a schema-constraint mode that strictly adheres to schema definitions during the knowledge base construction phase, enabling finer-grained and more complex knowledge extraction.

Version 0.6 (2025-01-07)

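On January 7, 2025, OpenSPG officially released v0.6. This release brings updates in several areas, including domain knowledge mounting, vertical-domain schema management, visual knowledge exploration, and support for summary generation tasks. For user experience, it provides resume-from-breakpoint for knowledge base tasks, adds a user login and permission system, and optimizes build task scheduling. In developer mode, it supports configuring different models for different stages and extraction in schema-constraint mode. Together these greatly improve the system's flexibility, usability, performance, and security, giving users a more powerful knowledge management platform that adapts to diverse application scenarios.

🌟 New Features

Summary Generation Task Support

- Native support for summary generation tasks without sacrificing multi-hop factual reasoning accuracy. On the CSQA dataset, comprehensiveness, diversity, and empowerment metrics are weaker than LightRAG (-1.2/10), while the factuality metric is better than LightRAG (+0.1/10). On multi-hop QA datasets such as hotpotqa, twowiki, and musique, LightRAG and GraphRAG provide no evaluation entry for factual QA, and the EM metric measured through the default entry is close to 0. To reproduce KAG's quantitative evaluation results, follow the steps in examples/csqa/README.md in the KAG code repository.

Domain Schema Management

- The product provides SPG schema management, letting users customize schemas to improve knowledge base construction and reasoning Q&A results.

Knowledge Exploration

- Added a knowledge exploration feature for visual query and analysis of knowledge base data, with an HTTP API for integration with other systems.

Mounting Domain Knowledge During Knowledge Base Construction (Developer Mode)

- In developer mode, domain knowledge (domain vocabulary and relationships between terms) can be injected into the knowledge base, which can significantly improve knowledge base construction and reasoning Q&A results (a 10%+ improvement in medical scenarios).

Knowledge Alignment Component Added to the Construction Pipeline

- KAG-Builder provides a default knowledge alignment component with built-in invalid data filtering and similar entity linking, to improve the structure and data quality of the graph.

⚙️ User Experience Optimizations

Resumable Tasks

- Product mode and developer mode provide resumable knowledge base construction tasks at the file level and chunk level respectively, reducing the time and token consumption of a full rerun after a task fails.

User Login & Permission System

- Provides a user login and permission system to prevent unauthorized access to and operations on knowledge base data.

Optimized Knowledge Base Construction Task Scheduling

- Provides database-based scheduling of knowledge base construction tasks to avoid task anomalies or interruptions after a container restart.

Support for Configuring Different Models at Different Stages (Developer Mode)

- Provides a registry-based component management mechanism that lets users instantiate component objects through configuration files and develop and embed custom components in the KAG-Builder and KAG-Solver workflows. Large models of different sizes can also be configured for different workflow stages to improve overall reasoning Q&A performance.

Layout Analysis Optimization for Markdown, PDF, and Word Files

- Markdown, PDF, and Word files are split into chunks by file section first, so that the content within each chunk is more cohesive.

Project Global Configuration and Knowledge Base Configuration

- Provides global configuration for knowledge bases, with unified settings for the access information of the storage engine, generation model, and representation model.

Support for Schema-Constraint Extraction and Linking (Developer Mode)

- Provides a schema-constraint mode that strictly follows the schema definition during knowledge base construction, enabling finer-grained and more complex knowledge extraction.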

- Java

Published by andylau-55 over 1 year ago

openspg-kag - Version 0.5.1

Version 0.5.1 (2024-11-21)

OpenSPG released version v0.5.1 on November 21, 2024. This version focuses on addressing user feedback and introduces a series of new features and user experience optimizations.

🌟 New Features

Support for Word Documents

- Users can now directly upload `.doc` or `.docx` files to streamline the knowledge base construction process.

New Project Deletion API

- Quickly clear and delete projects and related data through an API, compatible with the latest Neo4j image version.


Model Call Concurrency Setting

- Added the `builder.model.execute.num` parameter, with a default concurrency of 5, to improve efficiency in large-scale knowledge base construction.
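The effect of a concurrency setting like this can be sketched with a bounded thread pool. The code below is illustrative only: `extract_all` and `call_model` are hypothetical names, and the only detail taken from the notes is the default of 5 concurrent calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Mirrors the documented default of builder.model.execute.num.
DEFAULT_CONCURRENCY = 5


def extract_all(chunks: List[str],
                call_model: Callable[[str], str],
                concurrency: int = DEFAULT_CONCURRENCY) -> List[str]:
    """Run `call_model` over all chunks with at most `concurrency` calls in flight.

    Results are returned in the same order as the input chunks.
    """
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(call_model, chunks))


# Stand-in for a real model call, which would be network-bound.
print(extract_all(["alpha", "beta", "gamma"], str.upper))
```

Raising the limit speeds up large builds only as long as the model service can absorb the extra parallel requests.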

Improved Logging

- Added a startup success marker in the logs to help users quickly verify if the service is running correctly.

⚙️ User Experience Optimizations

- Neo4j Memory Overflow Issues

- Addressed memory overflow problems in Neo4j during large-scale data processing, ensuring stable operation for extensive datasets.

- Concurrent Neo4j Query Execution Issues

- Optimized execution strategies to resolve Graph Data Science (GDS) library conflicts or failures in high-concurrency scenarios.

- Schema Preview Prefix Issue

- Fixed issues where extracted schema preview entities lacked necessary prefixes, ensuring consistency between extracted entities and predefined schemas.

- Default Neo4j Password for Project Creation/Modification

- Automatically fills a secure default password if none is specified during project creation or modification, simplifying the configuration process.

- Frontend Bug Fixes

- Resolved issues with JS dependencies relying on external addresses and embedded all frontend files into the image. Improved the knowledge base management interface for a smoother user experience.

- Empty Node/Edge Type in Neo4j Writes

- Enhanced writing logic to handle empty node or edge types during knowledge graph construction, preventing errors or data loss in such scenarios.

Version 0.5.1 (2024-11-21)

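OpenSPG released v0.5.1 on November 21, 2024. This release focuses on resolving issues reported by users and brings a series of new features and user experience optimizations.

🌟 New Features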

Support for Building from Word Documents

- Users can now upload .doc or .docx files directly on the knowledge base management page to start knowledge base construction. This makes importing knowledge content more convenient and efficient.

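Project Deletion API

- To help users manage projects more efficiently, we added a project deletion API. Users can quickly clear and delete a project by calling http://127.0.0.1:8887/project/api/delete?projectId=xx. The API also cleans up all schemas, knowledge base tasks, knowledge base Q&A tasks, and the associated Neo4j database under the project. Tip: before using this feature, make sure the openspg-neo4j image has been updated to the latest version.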
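
A call to the deletion endpoint might look like the sketch below. The host, port, path, and `projectId` parameter come from the URL in the note above; everything else is an assumption. In particular, the note does not state the HTTP method or the response format, so the plain GET and raw-text return value here are guesses, and `build_delete_url` and `delete_project` are hypothetical helper names.

```python
from urllib.parse import urlencode
from urllib.request import urlopen


def build_delete_url(project_id, base_url="http://127.0.0.1:8887"):
    """Build the project deletion URL quoted in the release note."""
    query = urlencode({"projectId": project_id})
    return f"{base_url}/project/api/delete?{query}"


def delete_project(project_id, base_url="http://127.0.0.1:8887", timeout=30):
    """Call the deletion endpoint and return the raw response body.

    Assumption: a plain GET is accepted; the note does not state the method.
    """
    url = build_delete_url(project_id, base_url)
    with urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")
```

Because the call removes schemas, tasks, and the associated Neo4j database, it is worth checking the built URL against the intended project ID before sending it.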

Model Call Concurrency Setting

- To improve efficiency in large-scale knowledge base construction, we introduced concurrency control for model calls. Users can adjust the number of concurrent calls with the builder.model.execute.num parameter; the default is 5. This helps avoid task failures or system stalls caused by model service performance bottlenecks.
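
The effect of a concurrency cap can be illustrated with a bounded thread pool. This is a generic sketch, not how OpenSPG applies builder.model.execute.num internally: `run_with_limit`, `call_model`, and `max_concurrency` are hypothetical names, with `max_concurrency` standing in for the configured value and defaulting to 5 as in the note.

```python
from concurrent.futures import ThreadPoolExecutor


def run_with_limit(tasks, call_model, max_concurrency=5):
    """Apply `call_model` to every task with at most `max_concurrency` in flight.

    Results are returned in the same order as `tasks`.
    """
    with ThreadPoolExecutor(max_workers=max_concurrency) as pool:
        return list(pool.map(call_model, tasks))
```

A lower cap protects a slow model service from overload, while a higher one shortens construction time when the service has spare capacity.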

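Startup Success Marker in Logs

- To make it easier to tell whether the OpenSPG service has started successfully, we added an explicit startup success marker to the log output. It is printed once openspg-server has started successfully.

⚙️ User Experience Optimizations

- Resolved Neo4j memory limit errors during large-scale data construction

- We analyzed the Neo4j memory overflow that occurred when processing large datasets and implemented a fix. Neo4j now runs stably on large datasets, preventing service interruptions caused by insufficient memory.

- Resolved GDS loading problems caused by concurrent Neo4j queries

- When Neo4j queries ran concurrently, loading the Graph Data Science (GDS) library could conflict or fail. We optimized the query execution strategy to ensure query performance and stability under high concurrency.

- Fixed missing prefixes on entities in the extraction result Schema preview

- In earlier versions, some users reported that entity names in the Schema preview of extraction results lacked the required prefix, making the extracted entities inconsistent with the predefined Schema. This update fixes the problem so that all entity names are complete and accurate.

- Default value filled in when no Neo4j password is given during project creation or modification

- If no Neo4j password is specified when creating or modifying a project, the system automatically fills in a secure default value, which simplifies configuration and reduces user input.

- Frontend bug fixes

- Fixed JS dependencies that relied on external addresses; all frontend files are now built into the image. The knowledge base management page also received several improvements for a smoother experience.

- Fixed Neo4j write failures caused by empty node or edge types

- Optimized the write logic for cases where a node or relation type is empty during knowledge graph construction, so that data is still written successfully and no data loss or errors result from the missing type.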

- Java

Published by andylau-55 over 1 year ago

openspg-kag - Version 0.5

Version 0.5 (2024-10-25)

Retrieval-Augmented Generation (RAG) promotes the integration of domain applications with large models. However, RAG suffers from a large gap between vector similarity and the relevance needed for knowledge reasoning, and from insensitivity to knowledge logic (such as numerical values, temporal relations, and expert rules), which hinders the deployment of professional knowledge services. On October 25, OpenSPG released version V0.5, officially launching Knowledge Augmented Generation (KAG), a knowledge service framework for professional domains.

Highlights of the Release Version:

1. KAG: Knowledge Augmented Generation

KAG aims to make full use of the advantages of knowledge graphs and vector retrieval, and bidirectionally enhances large language models and knowledge graphs in four ways to address RAG's challenges: (1) LLM-friendly semantic knowledge management; (2) mutual indexing between the knowledge graph and the original text chunks; (3) a hybrid reasoning engine guided by logical symbols; (4) knowledge alignment based on semantic reasoning. KAG significantly outperforms NaiveRAG, HippoRAG, and other methods on multi-hop question answering tasks: the F1 score improves by a relative 19.6% on hotpotQA and by a relative 33.5% on 2wiki.

2. Knowledge base management

OpenSPG also provides a user-friendly product interface for KAG. After local deployment, users can upload and manage documents, preview extraction results, and ask questions through a visual interface. During knowledge Q&A, the system shows not only the final answer but also the reasoning process, which makes the whole Q&A flow more transparent and interpretable. Through this interface, users can get started with KAG more intuitively and easily.

3. Continuous Optimization and Bug Fixes

- feat(schema): support maintenance of simplified DSL in https://github.com/OpenSPG/openspg/pull/335

- feat(reasoner): support thinker in knext in https://github.com/OpenSPG/openspg/pull/344

- feat(reasoner): support ProntoQA and ProofWriter. in https://github.com/OpenSPG/openspg/pull/352

- feat(reasoner): thinker support deduction expression in https://github.com/OpenSPG/openspg/pull/369

- feat(openspg): support kag in https://github.com/OpenSPG/openspg/pull/372

- feat(reasoner): add udf split_part in https://github.com/OpenSPG/openspg/pull/378

- fix(reasoner): support triple in thinker context in https://github.com/OpenSPG/openspg/pull/341

- fix(reasoner): bugfix in graph store. in https://github.com/OpenSPG/openspg/pull/346

- fix(reasoner): fix pattern schema extra in https://github.com/OpenSPG/openspg/pull/351

- fix(knext): add remote client addr in https://github.com/OpenSPG/openspg/pull/376

- fix(knext): reasoner command add default cfg config in https://github.com/OpenSPG/openspg/pull/377

Version 0.5 (2024-10-25)

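Retrieval-Augmented Generation (RAG) promotes the integration of domain applications with large models. However, RAG suffers from a large gap between vector similarity and the relevance needed for knowledge reasoning, and from insensitivity to knowledge logic (such as numerical values, temporal relations, and expert rules), all of which hinder the deployment of professional knowledge services. On October 25, OpenSPG released version V0.5, officially launching Knowledge Augmented Generation (KAG), a knowledge service framework for professional domains.

Release Highlights

1. KAG: Knowledge Service Framework for Professional Domains

KAG aims to make full use of the advantages of knowledge graphs and vector retrieval, and bidirectionally enhances large language models and knowledge graphs in four ways to address RAG's challenges: (1) LLM-friendly semantic knowledge management; (2) mutual indexing between the knowledge graph and the original text chunks; (3) a hybrid reasoning engine guided by logical symbols; (4) knowledge alignment based on semantic reasoning. KAG significantly outperforms NaiveRAG, HippoRAG, and other methods on multi-hop question answering tasks: the F1 score improves by a relative 19.6% on hotpotQA and by a relative 33.5% on 2wiki.

2. Knowledge Base Management

OpenSPG also provides a user-friendly product interface for KAG. After local deployment, users can upload and manage documents, preview extraction results, and run knowledge Q&A through a visual interface. During knowledge Q&A, the system shows not only the final answer but also the reasoning process, which makes the whole Q&A flow more transparent and interpretable. Through this interface, users can get started with KAG more intuitively and easily.

3. Continuous Optimization and Bug Fixes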

- feat(schema): support maintenance of simplified DSL in https://github.com/OpenSPG/openspg/pull/335

- feat(reasoner): support thinker in knext in https://github.com/OpenSPG/openspg/pull/344

- feat(reasoner): support ProntoQA and ProofWriter. in https://github.com/OpenSPG/openspg/pull/352

- feat(reasoner): thinker support deduction expression in https://github.com/OpenSPG/openspg/pull/369

- feat(openspg): support kag in https://github.com/OpenSPG/openspg/pull/372

- feat(reasoner): add udf split_part in https://github.com/OpenSPG/openspg/pull/378

- fix(reasoner): support triple in thinker context in https://github.com/OpenSPG/openspg/pull/341

- fix(reasoner): bugfix in graph store. in https://github.com/OpenSPG/openspg/pull/346

- fix(reasoner): fix pattern schema extra in https://github.com/OpenSPG/openspg/pull/351

- fix(knext): add remote client addr in https://github.com/OpenSPG/openspg/pull/376

- fix(knext): reasoner command add default cfg config in https://github.com/OpenSPG/openspg/pull/377

- Java

Published by andylau-55 over 1 year ago

openspg-kag - Version 0.0.3

Version 0.0.3 (2024-08-15)

Knowledge graphs have become a crucial bridge between LLMs and AI agents. The OpenSPG project has officially released its first stable version. This release not only inherits all the powerful features of the previous beta version but also brings comprehensive improvements in stability, compatibility, and user experience, aiming to provide a more mature and reliable knowledge construction solution for enterprises and developers.

Highlights of the Release Version:

1. Unified Knowledge Extraction with LLMs

The first stable version of OpenSPG inherits and optimizes the unified knowledge extraction feature from the beta version. This feature is based on OneKE, a Chinese-English bilingual knowledge extraction large model jointly released by Ant Group and Zhejiang University. Through techniques such as hard negative sampling and schema-rotation-based instruction construction, it improves the generalization of structured information extraction.

2. Product Visualization Interface

The release further strengthens the visualization interface, offering users a more intuitive data exploration and analysis experience. You can now visually inspect modeling results on the page and conduct interactive analysis and reasoning queries.

3. Continuous Optimization and Bug Fixes

- Bugfix 1: Initialization exception in knext builder (issue #236 #246)

- Bugfix 2: Fixed error in reasoner transform ListOpExpr (#328)

- Bugfix 3: Front-end canvas display issue in analysis reasoning (Issue #269)

The release version of OpenSPG is applicable to multiple domains, including but not limited to financial risk control, healthcare, enterprise knowledge management, and intelligent customer service. By constructing high-quality knowledge graphs, it empowers various application scenarios such as decision analysis, recommendation systems, and natural language understanding.

Version 0.0.3 (2024-08-15)

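Knowledge graphs have become an important bridge between large models and agents. The OpenSPG project has officially published its first Release version. It inherits all the powerful features of the earlier beta version and brings comprehensive improvements in stability, compatibility, and user experience, aiming to give enterprises and developers a more mature and reliable knowledge construction solution.

Release Highlights

1. Unified Knowledge Extraction with Large Models

The first Release version of OpenSPG inherits and optimizes the beta version's unified knowledge extraction with large models. The feature is built on OneKE, a large model jointly released by Ant Group and Zhejiang University that focuses on schema-generalizable information extraction. Through hard negative sampling and schema-rotation-based instruction construction, it improves the generalization of structured information extraction.

2. Product Visualization Interface

The Release version further strengthens the visualization interface, giving users a more intuitive data exploration and analysis experience. Users can now inspect modeling results directly on the page and run interactive analysis and reasoning queries.

3. Continuous Optimization and Bug Fixes

- Bugfix 1: Initialization exception in knext builder (issue #236 #246)

- Bugfix 2: Fixed error in reasoner transform ListOpExpr (#328)

- Bugfix 3: Front-end canvas display issue in analysis reasoning (Issue #269)

The Release version of OpenSPG applies to many domains, including but not limited to financial risk control, healthcare, enterprise knowledge management, and intelligent customer service. By building high-quality knowledge graphs, it empowers application scenarios such as decision analysis, recommendation systems, and natural language understanding.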

- Java

Published by andylau-55 almost 2 years ago