Recent Releases of evalplus

evalplus - EvalPlus v0.3.1

For the past 6+ months, we have been actively maintaining and improving the EvalPlus repository. Now we are thrilled to announce a new release!

🔥 EvalPerf for Code Efficiency Evaluation

Based on our COLM'24 paper, we integrated the EvalPerf dataset into the EvalPlus repository. EvalPerf is a dataset curated using the Differential Performance Evaluation methodology proposed by the paper, which argues that effective code efficiency evaluation requires:

- Performance-exercising tasks -- our tasks are verified to be challenging for code efficiency!

- Performance-exercising inputs -- For each task, we generate performance-challenging test input!

- Compound metric: Differential Performance Score (DPS) -- inspired by LeetCode's efficiency ranking of submissions, it yields conclusions like "your submission can outperform 80% of LLM solutions..."

The EvalPerf dataset initially has 118 coding tasks^ (a subset of the latest HumanEval+ and MBPP+) -- running EvalPerf is as simple as running the following commands:

```bash
pip install "evalplus[perf,vllm]" --upgrade
# Or: pip install "evalplus[perf,vllm] @ git+https://github.com/evalplus/evalplus" --upgrade

sudo sh -c 'echo 0 > /proc/sys/kernel/perf_event_paranoid' # Enable perf
evalplus.evalperf --model "ise-uiuc/Magicoder-S-DS-6.7B" --backend vllm
```

At evaluation time we by default perform the following steps:

- Correctness sampling: We sample LLMs for 100 solutions (`n_samples`) and perform correctness checking

- Efficiency evaluation: For tasks with 10+ passing solutions, we evaluate the code efficiency of (at most 20) passing solutions:

  - Primitive metric: # CPU instructions

  - We profile the # CPU instructions over (i) new LLM solutions; and (ii) representative performance reference solutions; running through the performance-challenging test input

  - We match the profiled new solution to the reference solution with comparable code efficiency to calculate $DPS$ and $DPS_{norm}$

  - e.g., given 10 reference samples in 4 clusters: [3, 2, 3, 2], matching the 3rd cluster leads to $DPS = \frac{sample\ rank}{total\ samples} = \frac{3+2+3}{10}=80$% and $DPS_{norm} = \frac{cluster\ rank}{total\ clusters} = \frac{1+1+1}{4}=75$%
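The arithmetic in the example above can be reproduced with a few lines of Python. This is only a sketch of the two ratios as stated in the example, not the EvalPerf implementation; the function name `dps_scores` and the 0-based `matched_cluster` index are hypothetical, and clusters are assumed to be ordered so that the matched cluster and all clusters before it count toward the rank.

```python
from typing import List, Tuple


def dps_scores(cluster_sizes: List[int], matched_cluster: int) -> Tuple[float, float]:
    """Return (DPS, DPS_norm) in percent for a 0-based matched cluster index.

    Hypothetical helper: mirrors the worked example in the release note,
    where the matched cluster itself is included in the rank.
    """
    if not 0 <= matched_cluster < len(cluster_sizes):
        raise ValueError("matched_cluster is out of range")
    # Sample rank: reference samples in the matched cluster and all earlier ones.
    sample_rank = sum(cluster_sizes[: matched_cluster + 1])
    dps = 100.0 * sample_rank / sum(cluster_sizes)
    # Cluster rank: number of clusters up to and including the matched one.
    dps_norm = 100.0 * (matched_cluster + 1) / len(cluster_sizes)
    return dps, dps_norm


# 10 reference samples in 4 clusters, matching the 3rd cluster (index 2).
dps, dps_norm = dps_scores([3, 2, 3, 2], matched_cluster=2)
print(f"DPS = {dps:.0f}%, DPS_norm = {dps_norm:.0f}%")  # DPS = 80%, DPS_norm = 75%
```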

Collaborated work with @soryxie @FatPigeorz !

🔥 Command-line Interface (CLI) Simplification

We largely simplified the evaluation pipelines:

- Previously: run `evalplus.codegen`, and then `evalplus.sanitize`, and then `evalplus.evaluate` with different parameters

```bash
evalplus.codegen --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                 --dataset [humaneval|mbpp] \
                 --backend vllm \
                 --greedy

evalplus.sanitize --samples [path/to/samples]

evalplus.evaluate --samples [path/to/samples]
```

- Now:

```bash
evalplus.evaluate --model "ise-uiuc/Magicoder-S-DS-6.7B" \
                  --dataset [humaneval|mbpp] \
                  --backend vllm \
                  --greedy
```

Other notable updates

- Sanitizer improvements (#189, #190) -- thanks to @Co1lin

- Fixing edge case of `is_float` (#196)

- HumanEval+ maintenance: `v0.1.10` by improving contracts & oracle (#186, #201) -- thanks to @Kristoff-starling @Co1lin

- MBPP+ maintenance: `v0.2.1` by improving contracts & oracle (#211, #212)

- Default behavior change: code generation results saved as `.jsonl` rather than massive individual files and folders

- Using the official tree-sitter package and Python binary

- Prompt: adding a newline after stripping the prompt as some models are more familiar with `"""\n` over `"""`

- Configurable maximum evaluation process memory via environmental variable `EVALPLUS_MAX_MEMORY_BYTES`

- When sampling size per task > 1, batch size is automatically set as `min(n_samples, 32)` if `--bs` is not set

- Sanitizer behavior: when the code is too broken to be sanitized, return the broken code rather than an empty string for debuggability.

- vLLM: automatic prefix caching is enabled to accelerate sampling (hopefully)

- Setting `top_p = 0.95` for OpenAI, Google, and Anthropic backends

- New arguments: `--trust-remote-code`
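The batch-size default in the list above amounts to a small fallback rule. A rough sketch, not the actual EvalPlus code: the function name `resolve_batch_size` and the constant name `DEFAULT_MAX_BATCH` are hypothetical, and only the `min(n_samples, 32)` rule itself comes from the release note.

```python
from typing import Optional

DEFAULT_MAX_BATCH = 32  # cap stated in the release note


def resolve_batch_size(n_samples: int, bs: Optional[int] = None) -> int:
    """Pick the batch size: an explicit --bs wins, otherwise min(n_samples, 32)."""
    if bs is not None:
        return bs
    return min(n_samples, DEFAULT_MAX_BATCH)


print(resolve_batch_size(100))        # no --bs given, capped at 32
print(resolve_batch_size(10))         # fewer samples than the cap
print(resolve_batch_size(100, bs=8))  # explicit --bs is respected
```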

PyPI: https://pypi.org/project/evalplus/0.3.1/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.3.1/images/sha256-26b118098bef281fe8dfe999bf05f1d5b45374b4e6c00161ec0f30592aef4740

^In our COLM paper, we presented 121 tasks based on the February version of MBPP+ (v0.1.0), which had 399 tasks at the time -- in MBPP+ (v0.2.0) we removed some broken tasks (399 -> 378), leading to a slight cut in the number of EvalPerf tasks as well.

^We skipped/yanked the release of v0.3.0 and directly released v0.3.1 due to broken dependency in v0.3.0.

- Python

Published by ganler over 1 year ago

evalplus - EvalPlus v0.2.1

Main updates

- HumanEval+ and MBPP+ datasets are on the hub now:

- HumanEval+: https://huggingface.co/datasets/evalplus/humanevalplus

- MBPP+: https://huggingface.co/datasets/evalplus/mbppplus

- HumanEval+ is ported to original HumanEval format. Release files have a new home now:

- HumanEval+: https://github.com/evalplus/humanevalplus_release

- MBPP+: https://github.com/evalplus/mbppplus_release

- You can use EvalPlus through bigcode-evaluation-harness now

- Docker image now uses Python 3.10 since some models might generate Python code using the latest syntax, leading to false positives when using an older Python

- Sanitizer is now merged into the package

- Several improvements and bug fixes to the sanitizer

- Test suite reduction now moved to `tools`

- Fixes the `CACHE_DIR` nonexistence issue

- Simplified the format of `eval_results.json` for readability

- Use `EVALPLUS_TIMEOUT_PER_TASK` env var to set the maximum testing time for each task

- Timeout per test is set to 0.5s by default

- Fixes argument validity for `inputgen.py`

Dataset maintenance

- `HumanEval/32`: fixes the oracle

Supported codegen models

- Now EvalPlus leaderboard lists 82 models

- WizardCoders

- Stable Code

- OpenCodeInterpreter

- Anthropic API

- Mistral API

- CodeLlama instruct

- Phi-2

- Solar

- Dolphin

- OpenChat

- CodeMillenials

- Speechless

- xdan-l1-chat

- etc.

PyPI: https://pypi.org/project/evalplus/0.2.1/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.2.1/images/sha256-2bb315e40ea502b4f47ebf1f93561ef88280d251bdc6f394578c63d90e1825d7

- Python

Published by ganler about 2 years ago

evalplus - EvalPlus v0.2.0

🔥 Announcing MBPP+

MBPP is a dataset curated by Google. Its full set includes around 1000 crowd-sourced Python programming problems. However, a certain number of problems can be noisy (e.g., prompts make no sense or tests are broken). Consequently, a subset (~427 problems) of the data has been hand-verified by the original authors -- MBPP-sanitized.

MBPP+ improves MBPP based on its sanitized version (MBPP-sanitized):

- We further hand-verify the problems to trim ill-formed problems to keep 399 problems

- We also fix the problems whose implementation is wrong (more details can be found here)

- We perform test augmentation to increase the number of tests by 35x (on avg from 3.1 to 108.5)

- We maintain the scripting compatibility against HumanEval+ where one simply needs to toggle the switch by `--dataset mbpp` for `evalplus.evaluate`, `codegen/generate.py`, `tools/checker.py` as well as `tools/sanitize.py`

- Initial leaderboard is made available on https://evalplus.github.io/leaderboard.html and we will keep updating

A typical workflow to use MBPP+:

```python
# Step 1: Generate MBPP solutions
from evalplus.data import get_mbpp_plus, write_jsonl


def GEN_SOLUTION(prompt: str) -> str:
    # LLM produce the whole solution based on prompt
    raise NotImplementedError("plug in your LLM call here")


samples = [
    dict(task_id=task_id, solution=GEN_SOLUTION(problem["prompt"]))
    for task_id, problem in get_mbpp_plus().items()
]
write_jsonl("samples.jsonl", samples)

# May perform some post-processing to sanitize LLM produced code
# e.g., https://github.com/evalplus/evalplus/blob/master/tools/sanitize.py
```

```bash
# Step 2: Evaluation on MBPP+
docker run -v $(pwd):/app ganler/evalplus:latest --dataset mbpp --samples samples.jsonl
# STDOUT will display the scores for "base" (with MBPP tests) and "base + plus" (with additional MBPP+ tests)
```
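For readers who want to inspect or hand-craft a sample file without importing the package, the shape of `samples.jsonl` can be sketched with the standard library alone. This is an assumption-laden illustration: it takes the format to be one JSON object per line with `task_id` and `solution` keys, as in the workflow above; the helper names `dump_jsonl` and `load_jsonl` and the task IDs shown are hypothetical.

```python
import json
import os
import tempfile


def dump_jsonl(path: str, records: list) -> None:
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record) + "\n")


def load_jsonl(path: str) -> list:
    """Read back a JSONL file, skipping blank lines."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


# Illustrative records only; real task IDs come from the dataset.
samples = [
    {"task_id": "Mbpp/2", "solution": "def add(a, b):\n    return a + b\n"},
    {"task_id": "Mbpp/3", "solution": "def is_even(n):\n    return n % 2 == 0\n"},
]

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "samples.jsonl")
    dump_jsonl(path, samples)
    loaded = load_jsonl(path)

print(len(loaded), "samples round-tripped")
```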

🔥 HumanEval+ Maintenance

- Leaderboard updates (now 38 models!): https://evalplus.github.io/leaderboard.html

- DeepSeek Coder series

- Phind-CodeLlama

- Mistral and Zephyr series

- Smaller StarCoders

- HumanEval+ now upgrades to `v0.1.9` from `v0.1.6`

  - Test-case fixes: 0, 3, 9, 148

  - Prompt fixes: 114

  - Contract fixes: 1, 2, 99, 35, 28, 32, 160

PyPI: https://pypi.org/project/evalplus/0.2.0/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.2.0/images/sha256-6f1b9bd13930abfb651a99d4c6a55273271f73e5b44c12dcd959a00828782dd6

- Python

Published by ganler over 2 years ago

evalplus - EvalPlus v0.1.7

- EvalPlus leaderboard: https://evalplus.github.io/leaderboard.html

- Evaluated CodeLlama, CodeT5+ and WizardCoder

- Fixed contract (HumanEval+): 116, 126, 006

- Removed extreme inputs (HumanEval+): 32

- Established `HUMANEVAL_OVERRIDE_PATH`, which allows overriding the original dataset with a customized dataset

PyPI: https://pypi.org/project/evalplus/0.1.7/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.1.7/images/sha256-69fe87df89b8c1545ff7e3b20232ac6c4841b43c20f22f4a276ba03f1b0d79ae

- Python

Published by ganler over 2 years ago

evalplus - EvalPlus v0.1.6

- Supporting configurable timeouts $T=\max(T_{base}, T_{gt}\times k)$, where:

  - $T_{base}$ is the minimal timeout (configurable by `--min-time-limit`; default to 0.2s);

  - $T_{gt}$ is the runtime of the ground-truth solutions (achieved via profiling);

  - $k$ is a configurable factor `--gt-time-limit-factor` (default to 4);

- Using a more conservative timeout setting to mitigate test-beds with weak performance ($T_{base}: 0.05s \to 0.2s$ and $k: 2\to 4$).

- `HumanEval+` dataset bug fixes:

  - Medium contract fixes: P129 (#4), P148 (self-identified)

  - Minor contract fixes: P75 (#4), P53 (#8), P0 (self-identified), P3 (self-identified), P9 (self-identified)

  - Minor GT fixes: P140 (#3)
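The timeout rule at the top of these notes is simple enough to sketch directly. The snippet below is an illustration of the formula only, not the EvalPlus source; the function name `time_limit` is hypothetical, while the parameter names mirror the CLI flags and the defaults are the ones quoted above.

```python
def time_limit(
    gt_runtime: float,
    min_time_limit: float = 0.2,
    gt_time_limit_factor: float = 4.0,
) -> float:
    """T = max(T_base, T_gt * k), all values in seconds."""
    return max(min_time_limit, gt_runtime * gt_time_limit_factor)


# A fast ground truth (10 ms) falls back to the 0.2 s floor.
print(time_limit(0.01))
# A slow ground truth (0.5 s) is scaled by k = 4.
print(time_limit(0.5))
# Same slow ground truth under the previous, tighter setting (0.05 s floor, k = 2).
print(time_limit(0.5, min_time_limit=0.05, gt_time_limit_factor=2.0))
```

With the new defaults, a solution gets at least 0.2 s per test and up to four times the ground-truth runtime, which is what makes the setting more forgiving on slow machines.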

PyPI: https://pypi.org/project/evalplus/0.1.6/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.1.6/images/sha256-5913b95172962ad61e01a5d5cf63b60e1140dd547f5acc40370af892275e777c

- Python

Published by ganler almost 3 years ago

evalplus - EvalPlus v0.1.5

🚀 HumanEval+[mini] -- 47x smaller while equivalently effective as HumanEval+

- Add `--mini` to `evalplus.evaluate ...` to use a minimal and best-quality set of extra tests to accelerate evaluation!

- `HumanEval+[mini]` (avg 16.5 tests) is smaller than `HumanEval+` (avg 774.8 tests) by 47x.

- This is achieved via test-suite reduction -- we run a set covering algorithm to preserve the same coverage (coverage analysis), mutant-killings (mutation analysis) and sample-killings (pass-fail status of each sample-test pair).
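To make the set-covering idea concrete, here is a minimal greedy sketch. The release note does not say which set-cover algorithm is used, so the greedy heuristic is an assumption, and the names (`greedy_set_cover`, the test IDs, the requirement labels) are hypothetical. Each test maps to the set of "requirements" it satisfies, where a requirement could be a covered branch, a killed mutant, or a killed sample.

```python
from typing import Dict, Hashable, List, Set


def greedy_set_cover(covers: Dict[str, Set[Hashable]]) -> List[str]:
    """Pick tests until every requirement covered by the full suite is covered.

    Greedy heuristic: repeatedly take the test covering the most
    still-uncovered requirements (ties broken by test name).
    """
    remaining: Set[Hashable] = set().union(*covers.values())
    chosen: List[str] = []
    while remaining:
        best = max(sorted(covers), key=lambda t: len(covers[t] & remaining))
        chosen.append(best)
        remaining -= covers[best]
    return chosen


# Hypothetical requirements: branches covered, mutants killed, samples killed.
suite = {
    "t1": {"branch_a", "mutant_1"},
    "t2": {"branch_a", "branch_b", "mutant_1", "sample_x"},
    "t3": {"mutant_2"},
    "t4": {"branch_b"},
}
reduced = greedy_set_cover(suite)
print(reduced)  # ['t2', 't3']
```

The reduced suite satisfies exactly the same requirements as the full one, which is the property the release note describes; greedy selection does not guarantee the smallest possible suite.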

PyPI: https://pypi.org/project/evalplus/0.1.5/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.1.5/images/sha256-01ef3275ab02776e94edd4a436a3cd33babfaaf7a81e7ae44f895c2794f4c104

- Python

Published by ganler almost 3 years ago

evalplus - EvalPlus v0.1.4

- Performance:

- Lazy loading of the cache of evaluation results

- Use `ProcessPoolExecutor` over `ThreadPoolExecutor`

- Caching ground-truth outputs

- Observability:

- Concurrent logger when a task gets stuck for 10s

- New models:

- CodeGen2 (infill)

- StarCoder (infill)

- Fixes:

- Deterministic hashing of input problem
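The note above does not say how the hashing was made deterministic. As background, Python's built-in `hash()` for strings is salted per process unless `PYTHONHASHSEED` is fixed, so it is unsuitable as a cache key across runs. One common alternative, sketched here purely as an assumption (the function name `stable_problem_hash` and the example fields are hypothetical), is to hash a canonical JSON serialization:

```python
import hashlib
import json


def stable_problem_hash(problem: dict) -> str:
    """Hash a problem dict identically across processes and key orderings."""
    # sort_keys gives a canonical serialization independent of insertion order.
    payload = json.dumps(problem, sort_keys=True, ensure_ascii=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


a = {"task_id": "HumanEval/0", "prompt": "def f(x):\n    ..."}
b = {"prompt": "def f(x):\n    ...", "task_id": "HumanEval/0"}  # same content, different order
print(stable_problem_hash(a) == stable_problem_hash(b))  # True
```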

PyPI: https://pypi.org/project/evalplus/0.1.4/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.1.4/images/sha256-a0ea8279c71afa9418808326412b1e5cd11f44b3b59470477ecf4ba999d4b73a

- Python

Published by ganler about 3 years ago

evalplus - EvalPlus v0.1.3

- Fixes evaluation when input sample format is `.jsonl`

PyPI: https://pypi.org/project/evalplus/0.1.3/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.1.3/images/sha256-fd13ab6ee2aa313eb160fc29debe8c761804cb6af7309280b4e200b6549bd75a

- Python

Published by ganler about 3 years ago

evalplus - EvalPlus v0.1.2

- Fix the bug induced by using `--base-only`

- Build docker image locally instead of simply doing a `pip install`

PyPI: https://pypi.org/project/evalplus/0.1.2/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.1.2/images/sha256-747ae02f0bfbd300c0205298113006203d984373e6ab6b8fb3048626f41dbe08

- Python

Published by ganler about 3 years ago

evalplus - EvalPlus v0.1.1

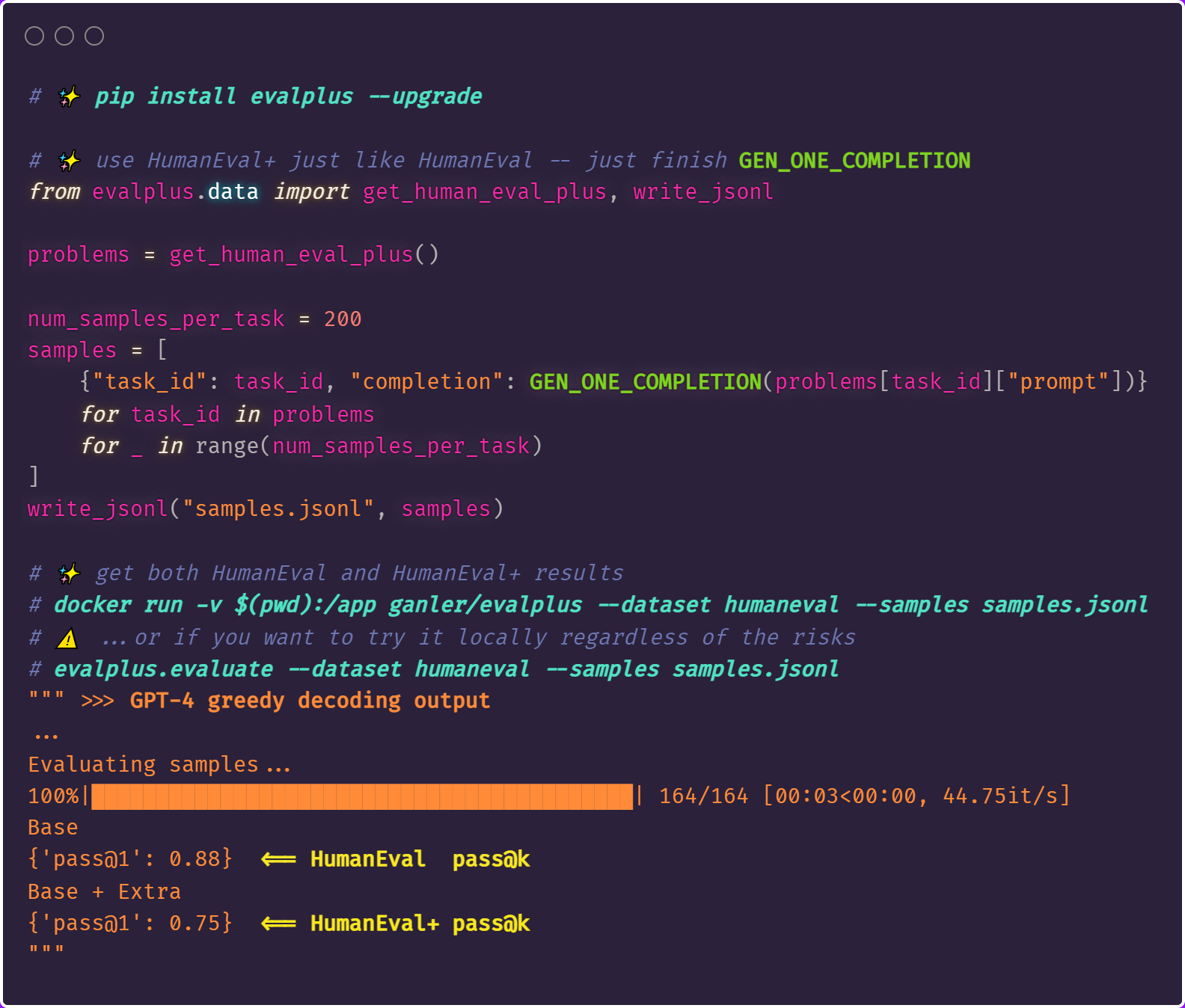

In this version, efforts are mainly made to sanitize and standardize code in evalplus. Most importantly, evalplus strictly follows the dataset usage style of HumanEval. As a result, users can use evalplus in this way:

For more details, the main changes are (tracked in #1):

- Package build and pypi setup

- (HumanEval Compatibility) Support sample files as .jsonl

- (HumanEval Compatibility) get_human_eval_plus() returns a dict instead of list

- (HumanEval Compatibility) Use HumanEval task ID splitter "/" over "_"

- Optimize the evaluation parallelism scheme to the sample-level granularity (original: task level)

- Optimize IPC via shared memory

- Remove groundtruth solutions to avoid data leakage

- Use Docker as the sandboxing mechanism

- Support Codegen2 in generation

- Split dependency into multiple categories

PyPI: https://pypi.org/project/evalplus/0.1.1/ Docker Hub: https://hub.docker.com/layers/ganler/evalplus/v0.1.1/images/sha256-4993a0dc0ec13d6fe88eb39f94dd0a927e1f26864543c8c13e2e8c5d5c347af0

- Python

Published by ganler about 3 years ago

evalplus - EvalPlus v0.1.0 and Pre-Generated LLM Code Samples for HumanEval+

What is this?

In addition to the initial version of EvalPlus source code, we release the pre-generated code of LLMs on HumanEval+ (also applicable for base HumanEval) and regularized ground-truth solutions. With these we hope to accelerate future research, where researchers can reuse our pre-generated code instead of generating it from scratch.

- `${MODEL_NAME}_temp_${TEMPERATURE}.zip`: LLM-produced program samples

- `HumanEvalPlusGT.zip`: The re-implemented ground-truth solutions

Data sources

The configuration of the pre-generated code follows our pre-print paper: https://arxiv.org/abs/2305.01210

- We evaluated it over:

  - x 14 models (10 model types)

  - x 5 temperature settings including zero temperature (for greedy decoding) as well as `{0.2, 0.4, 0.6, 0.8}`

  - x 200 code samples for random sampling (i.e., non-greedy decoding settings)

- We use nucleus sampling with top p = 0.95 for all Hugging Face based models

- Codegen6B and Codegen16B are accelerated by FauxPilot (thanks!)
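For readers unfamiliar with nucleus (top-p) sampling, the idea can be sketched in plain Python over a toy next-token distribution. This is a conceptual illustration, not how any of the evaluated models or backends implement it; the function names and the toy vocabulary are hypothetical.

```python
import random
from typing import Dict, Optional


def top_p_filter(probs: Dict[str, float], top_p: float = 0.95) -> Dict[str, float]:
    """Keep the smallest set of most-likely tokens whose mass reaches top_p, renormalized."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept = []
    cumulative = 0.0
    for token, p in ranked:
        kept.append((token, p))
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(p for _, p in kept)
    return {token: p / total for token, p in kept}


def sample_top_p(
    probs: Dict[str, float], top_p: float = 0.95, rng: Optional[random.Random] = None
) -> str:
    """Draw one token from the truncated, renormalized distribution."""
    rng = rng or random.Random()
    filtered = top_p_filter(probs, top_p)
    return rng.choices(list(filtered), weights=list(filtered.values()), k=1)[0]


# Toy distribution: the low-probability tail ("d") is cut off at top_p = 0.95.
toy = {"a": 0.5, "b": 0.3, "c": 0.16, "d": 0.04}
nucleus = top_p_filter(toy, top_p=0.95)
print(sorted(nucleus))  # ['a', 'b', 'c']
print(sample_top_p(toy, rng=random.Random(0)))
```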

Evaluated results

We draw the results from the samples and test-cases from the base HumanEval and our enhanced HumanEval+:

Call for contribution

We also encourage open-source developers to contribute to LLM4Code research by: (i) reproducing and validating our results; (ii) uploading LLM-generated samples and reproducing the results of new models; and of course (iii) trying out our enhanced dataset to get more accurate and trustworthy results!

- Python

Published by ganler about 3 years ago