Science Score: 77.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ✓ CITATION.cff file: Found CITATION.cff file
- ✓ codemeta.json file: Found codemeta.json file
- ✓ .zenodo.json file: Found .zenodo.json file
- ✓ DOI references: Found 2 DOI reference(s) in README
- ✓ Academic publication links: Links to zenodo.org
- ✓ Committers with academic emails: 1 of 1 committers (100.0%) from academic institutions
- ○ Institutional organization owner
- ○ JOSS paper metadata
- ○ Scientific vocabulary similarity: Low similarity (16.6%) to scientific vocabulary

Repository

Gaussian Mixture Models in Jax

Basic Info

Statistics

- Stars: 22

- Watchers: 2

- Forks: 3

- Open Issues: 3

- Releases: 7

Metadata Files

README.md

GMMX: Gaussian Mixture Models in Jax

A minimal implementation of Gaussian Mixture Models in Jax

- GitHub repository: https://github.com/adonath/gmmx/

- Documentation: https://adonath.github.io/gmmx/

Installation

gmmx can be installed via pip:

```bash
pip install gmmx
```

Or alternatively you can use conda/mamba:

```bash
conda install gmmx
```

Usage

```python
from gmmx import GaussianMixtureModelJax, EMFitter

# Create a Gaussian Mixture Model with 16 components and 32 features
gmm = GaussianMixtureModelJax.create(n_components=16, n_features=32)

# Draw samples from the model
n_samples = 10_000
x = gmm.sample(n_samples)

# Fit the model to the data
em_fitter = EMFitter(tol=1e-3, max_iter=100)
gmm_fitted = em_fitter.fit(x=x, gmm=gmm)
```

If you use the code in a scientific publication, please cite the Zenodo DOI from the badge above.

Why Gaussian Mixture models?

What are Gaussian Mixture Models (GMMs) useful for in the age of deep learning? GMMs might have gone out of fashion for classification tasks, but they still have a few properties that make them useful in certain scenarios:

- They are universal approximators, meaning that given enough components they can approximate any smooth density arbitrarily well.

- Their likelihood can be evaluated in closed form, which makes them useful for generative modeling.

- They are rather fast to train and evaluate.
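The closed-form likelihood mentioned in the list above can be sketched in a few lines of JAX. This is a minimal illustration for a full-covariance mixture, not the gmmx implementation; the function name `gmm_log_likelihood` and its argument layout are assumptions made for this example.

```python
import jax
import jax.numpy as jnp
from jax.scipy.special import logsumexp
from jax.scipy.stats import multivariate_normal


def gmm_log_likelihood(x, weights, means, covariances):
    """Per-sample log-likelihood of a full-covariance GMM (hypothetical helper).

    x: (n_samples, n_features)
    weights: (n_components,), assumed to sum to one
    means: (n_components, n_features)
    covariances: (n_components, n_features, n_features)
    """
    # Evaluate every component on all samples: shape (n_samples, n_components)
    log_prob = jax.vmap(
        lambda mean, cov: multivariate_normal.logpdf(x, mean, cov),
        out_axes=1,
    )(means, covariances)
    # log p(x) = logsumexp_k [ log w_k + log N(x | mu_k, Sigma_k) ]
    return logsumexp(log_prob + jnp.log(weights), axis=1)
```

Working in log space with `logsumexp` avoids underflow when individual component densities are very small, which is common in high-dimensional feature spaces.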

I strongly recommend reading "In Depth: Gaussian Mixture Models" from the Python Data Science Handbook for a more in-depth introduction to GMMs and their application as density estimators.

One of these applications in my research is image reconstruction, where GMMs can be used to model the distribution and pixel correlations of local (patch-based) image features. This can be useful for tasks like image denoising or inpainting. One method I have used them for is Jolideco. Speeding up training on O(10^6) patches was the main motivation for gmmx.
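To illustrate why training on many patches maps well onto JAX, here is a minimal sketch of a single expectation-maximization (EM) iteration for a full-covariance GMM, compiled with `jax.jit`. It is not the gmmx fitter; the function name `em_step` and the `reg_covar` regularization term are assumptions made for this example.

```python
import jax
import jax.numpy as jnp
from jax.scipy.special import logsumexp
from jax.scipy.stats import multivariate_normal


@jax.jit
def em_step(x, weights, means, covariances, reg_covar=1e-6):
    """One EM iteration for a full-covariance GMM (hypothetical helper)."""
    # E-step: responsibilities of each component, shape (n_samples, n_components)
    log_prob = jax.vmap(
        lambda mean, cov: multivariate_normal.logpdf(x, mean, cov),
        out_axes=1,
    )(means, covariances)
    log_joint = log_prob + jnp.log(weights)
    resp = jnp.exp(log_joint - logsumexp(log_joint, axis=1, keepdims=True))

    # M-step: re-estimate parameters from responsibility-weighted statistics
    nk = resp.sum(axis=0)                          # (n_components,)
    new_weights = nk / x.shape[0]
    new_means = (resp.T @ x) / nk[:, None]         # (n_components, n_features)
    diff = x[None, :, :] - new_means[:, None, :]   # (n_components, n_samples, n_features)
    new_covs = jnp.einsum("nk,kni,knj->kij", resp, diff, diff) / nk[:, None, None]
    # Small diagonal term keeps the covariances positive definite
    new_covs = new_covs + reg_covar * jnp.eye(x.shape[1])
    return new_weights, new_means, new_covs
```

Every operation in the step is a batched array operation over samples and components, so the same code runs on a CPU or GPU without modification.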

Benchmarks

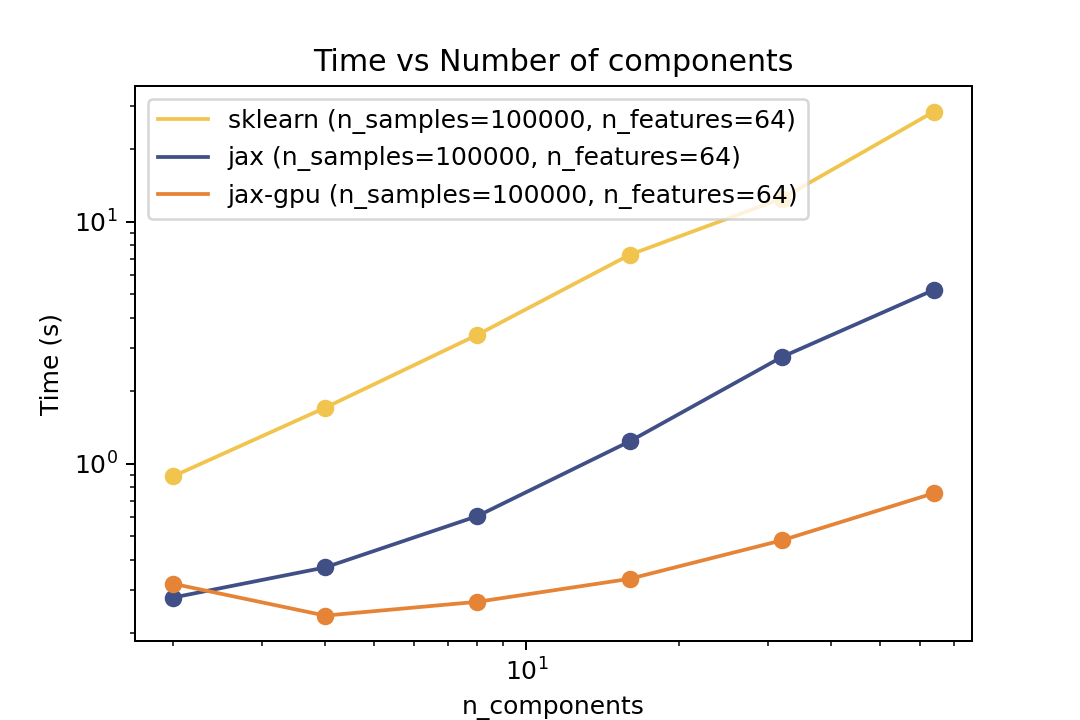

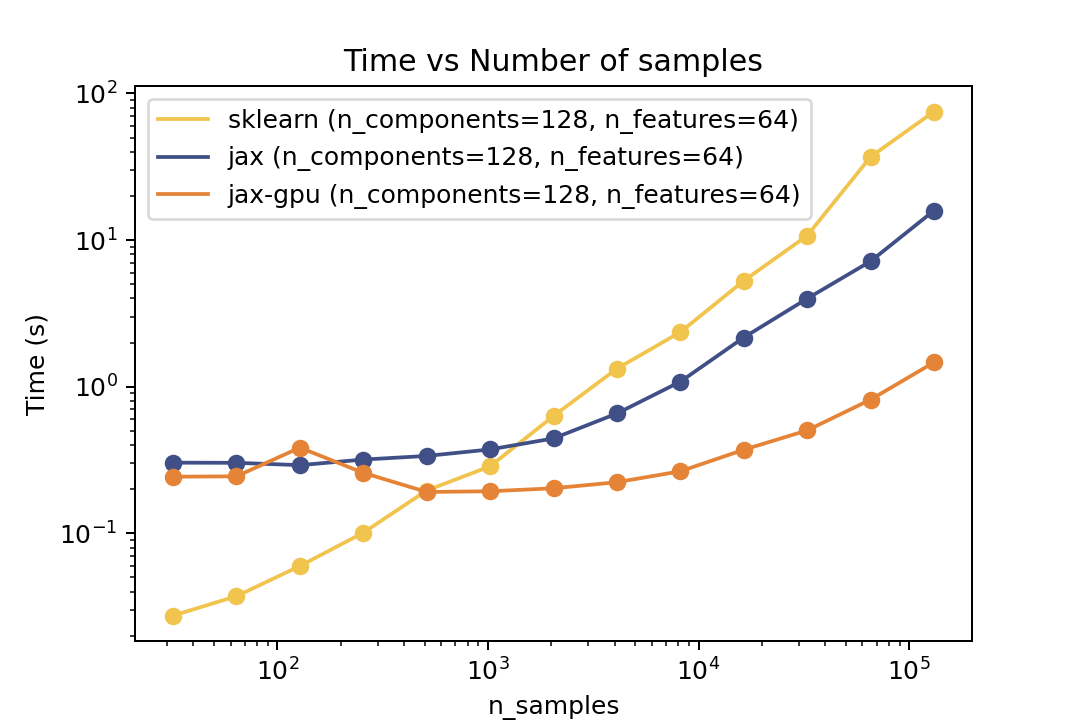

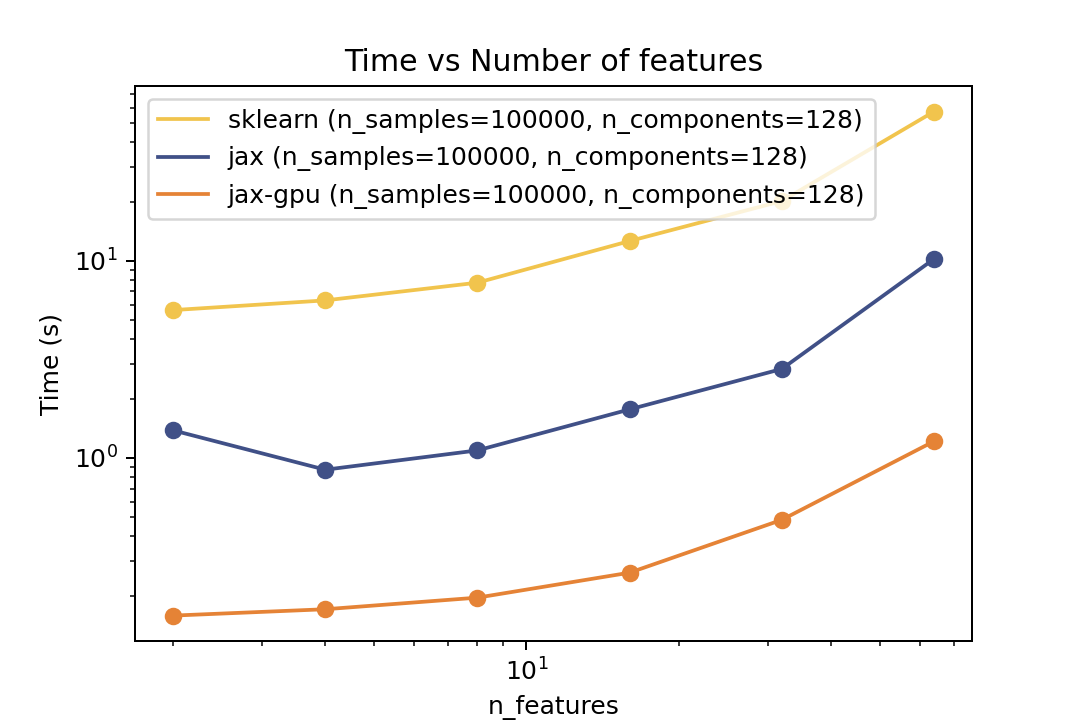

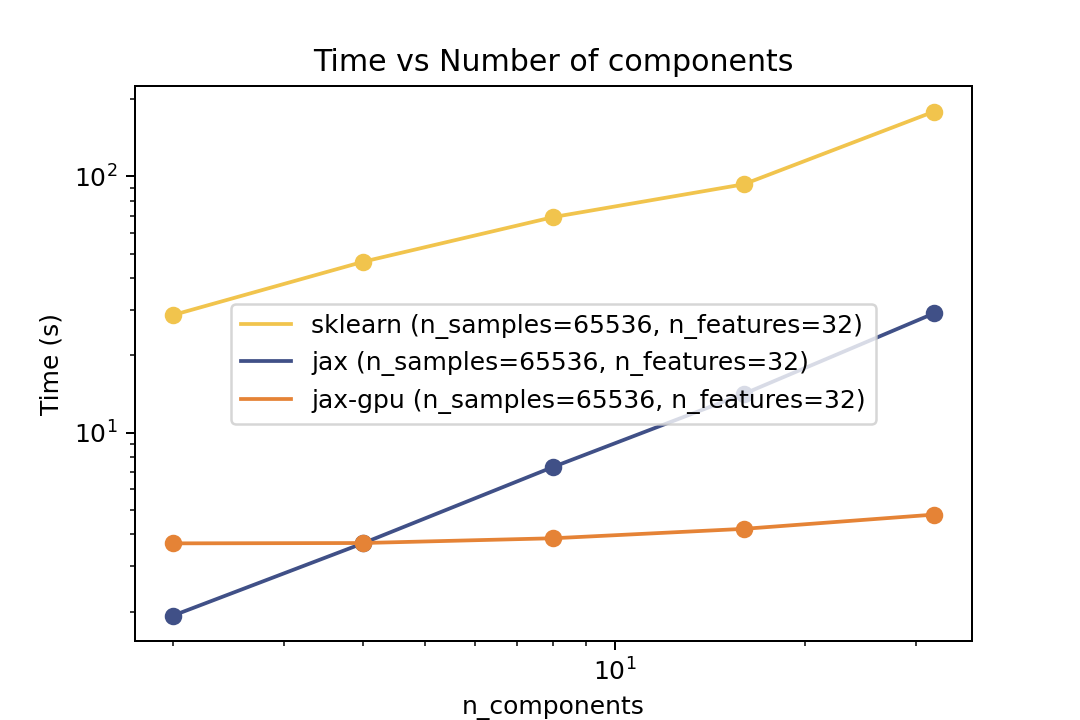

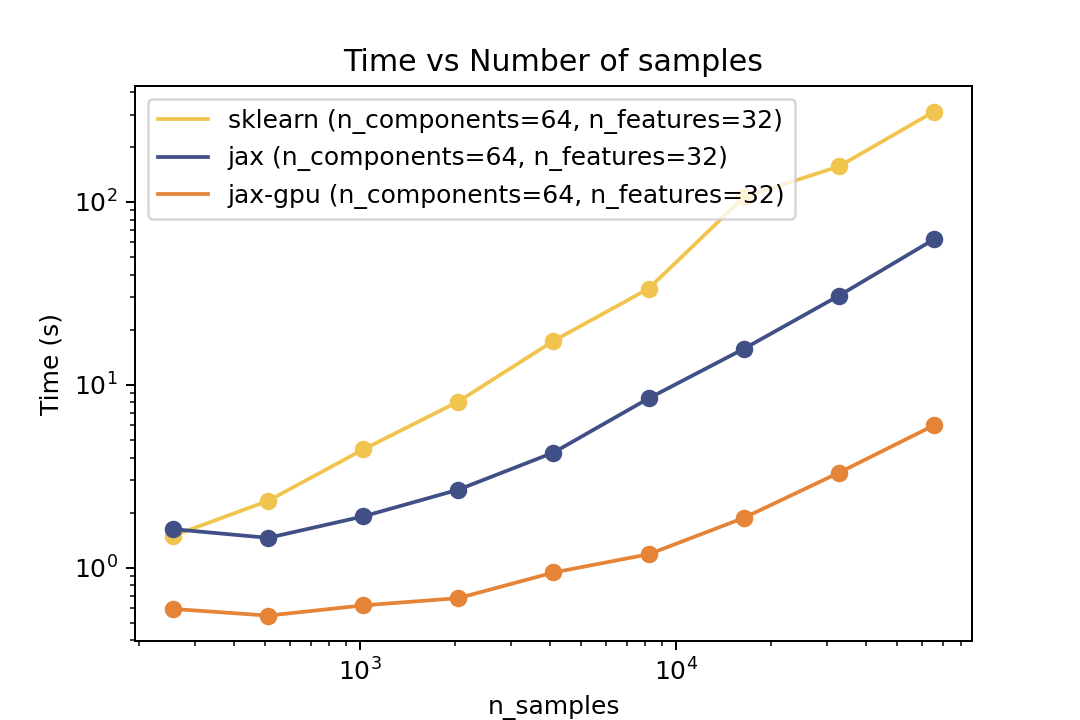

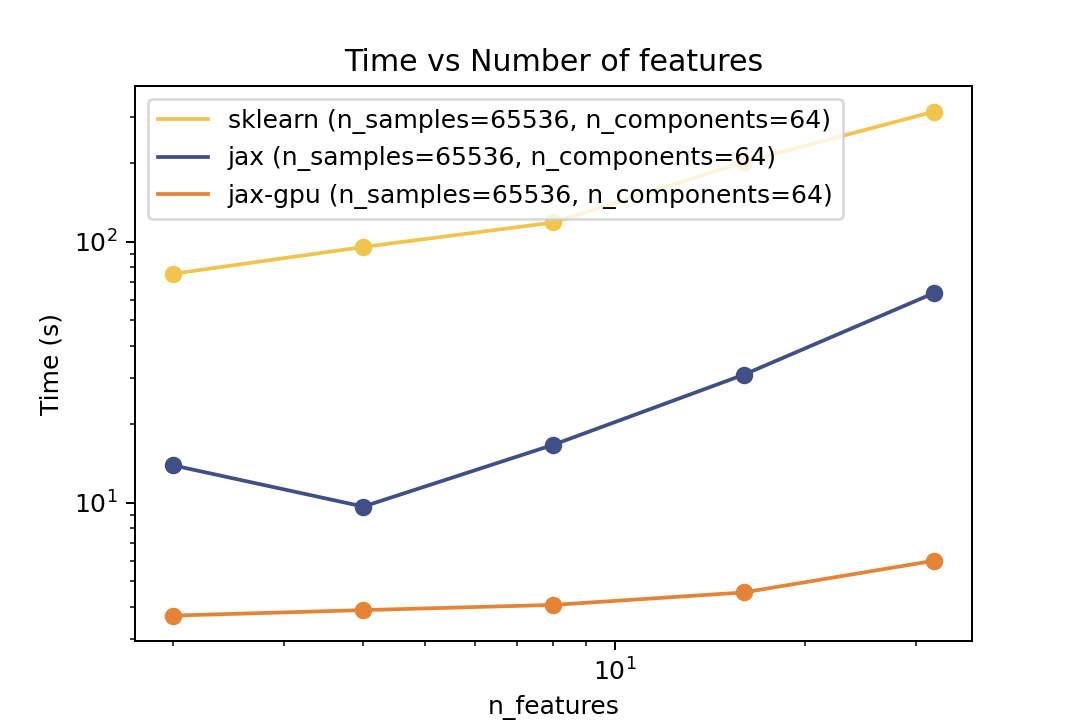

Here are some results from the benchmarks in the examples/benchmarks folder comparing against Scikit-Learn. The benchmarks were run on an "Intel(R) Xeon(R) Gold 6338" CPU and a single "NVIDIA L40S" GPU.

Prediction Time

Panels: Time vs. Number of Components, Time vs. Number of Samples, Time vs. Number of Features.

For prediction, the speedup is around 5-6x on the CPU for a varying number of components and features, and ~50x on the GPU. As a function of the number of samples, the cross-over point is around O(10^3) samples.
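When reproducing timings like these, two JAX details matter: the first call of a jitted function includes compilation, and results are computed asynchronously. The following is a minimal timing sketch, not the script from the examples/benchmarks folder; the helper name `time_jitted` and the `n_repeat` parameter are assumptions made for this example.

```python
import time

import jax
import jax.numpy as jnp


def time_jitted(fn, *args, n_repeat=10):
    """Average wall-clock seconds per call of a jitted function (hypothetical helper)."""
    jitted = jax.jit(fn)
    # Warm-up call: triggers compilation so it is excluded from the measurement
    jax.block_until_ready(jitted(*args))
    start = time.perf_counter()
    for _ in range(n_repeat):
        # Block until the device has finished, since JAX dispatches asynchronously
        jax.block_until_ready(jitted(*args))
    return (time.perf_counter() - start) / n_repeat


# Example: time a simple reduction on 1000 elements
seconds = time_jitted(lambda x: jnp.sum(x**2), jnp.ones(1000))
```

For small inputs the fixed per-call dispatch overhead can dominate the measured time, which is one plausible reason a cross-over point appears when varying the number of samples.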

Training Time

Panels: Time vs. Number of Components, Time vs. Number of Samples, Time vs. Number of Features.

For training, the speedup is around 5-6x on the same architecture and ~50x on the GPU. In the benchmark I forced both fitters to run exactly the same number of iterations. In general, however, there is no guarantee that GMMX converges to the same solution as Scikit-Learn. The tests folder contains tests that compare the results of the two implementations, and they show good agreement.

Owner

- Name: Axel Donath

- Login: adonath

- Kind: user

- Location: Cambridge, MA

- Company: Center for Astrophysics | Harvard & Smithsonian

- Website: https://axeldonath.com

- Repositories: 68

- Profile: https://github.com/adonath

I'm a postdoctoral researcher at the Center for Astrophysics. I work on statistical methods for the analysis of low-count astronomical data.

Citation (CITATION.cff)

cff-version: 1.2.0

message: "If you use this software, please cite it as below."

authors:

- family-names: "Donath"

  given-names: "Axel"

  orcid: "https://orcid.org/0000-0003-4568-7005"

title: "GMMX: Gaussian Mixture Models in Jax"

version: v0.1

doi: 10.5281/zenodo.14515326

date-released: 2024-12-18

url: "https://github.com/adonath/gmmx"

GitHub Events

Total

- Create event: 11

- Issues event: 8

- Release event: 6

- Watch event: 24

- Delete event: 1

- Issue comment event: 10

- Push event: 80

- Pull request event: 5

- Fork event: 4

Last Year

- Create event: 11

- Issues event: 8

- Release event: 6

- Watch event: 24

- Delete event: 1

- Issue comment event: 10

- Push event: 80

- Pull request event: 5

- Fork event: 4

Committers

Last synced: about 1 year ago

Top Committers

| Name | Email | Commits |
|---|---|---|
| Axel Donath | a****h@c****u | 113 |

Committer Domains (Top 20 + Academic)

Issues and Pull Requests

Last synced: 9 months ago

All Time

- Total issues: 6

- Total pull requests: 9

- Average time to close issues: 7 days

- Average time to close pull requests: about 1 hour

- Total issue authors: 3

- Total pull request authors: 2

- Average comments per issue: 1.33

- Average comments per pull request: 0.67

- Merged pull requests: 6

- Bot issues: 0

- Bot pull requests: 0

Past Year

- Issues: 6

- Pull requests: 9

- Average time to close issues: 7 days

- Average time to close pull requests: about 1 hour

- Issue authors: 3

- Pull request authors: 2

- Average comments per issue: 1.33

- Average comments per pull request: 0.67

- Merged pull requests: 6

- Bot issues: 0

- Bot pull requests: 0

Top Authors

Issue Authors

- adonath (3)

- matthewfeickert (2)

- Qazalbash (1)

Pull Request Authors

- adonath (6)

- JohannesBuchner (2)


Packages

- Total packages: 1

- Total downloads:
  - pypi: 333 last-month

- Total dependent packages: 0

- Total dependent repositories: 0

- Total versions: 7

- Total maintainers: 1

pypi.org: gmmx

A minimal implementation of Gaussian Mixture Models in Jax

- Homepage: https://adonath.github.io/software#gmmx

- Documentation: https://adonath.github.io/gmmx/

- License: bsd-3-clause

- Latest release: 0.7 (published 11 months ago)

Rankings

Maintainers (1)

Dependencies

- actions/setup-python v5 composite

- astral-sh/setup-uv v2 composite

- ./.github/actions/setup-python-env * composite

- actions/cache v4 composite

- actions/checkout v4 composite

- codecov/codecov-action v4 composite

- ./.github/actions/setup-python-env * composite

- actions/checkout v4 composite

- actions/download-artifact v4 composite

- actions/upload-artifact v4 composite

- actions/checkout v4 composite

- jax >=0.4.30

- numpy >=2.0.2

- actions/checkout v4 composite

- hynek/build-and-inspect-python-package v2 composite