torchxrayvision

TorchXRayVision: A library of chest X-ray datasets and models. Classifiers, segmentation, and autoencoders.

Science Score: 67.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ✓ CITATION.cff file: Found CITATION.cff file
- ✓ codemeta.json file: Found codemeta.json file
- ✓ .zenodo.json file: Found .zenodo.json file
- ✓ DOI references: Found 2 DOI reference(s) in README
- ✓ Academic publication links: Links to: arxiv.org, ncbi.nlm.nih.gov
- ○ Committers with academic emails
- ○ Institutional organization owner
- ○ JOSS paper metadata
- ○ Scientific vocabulary similarity: Low similarity (13.9%) to scientific vocabulary

Repository

Basic Info

- Host: GitHub

- Owner: mlmed

- License: apache-2.0

- Language: Jupyter Notebook

- Default Branch: master

- Homepage: https://mlmed.org/torchxrayvision

- Size: 45.7 MB

Statistics

- Stars: 1,026

- Watchers: 18

- Forks: 233

- Open Issues: 27

- Releases: 19


Metadata Files

README.md

🚨 Paper now online! https://arxiv.org/abs/2111.00595

🚨 Documentation now online! https://mlmed.org/torchxrayvision/

TorchXRayVision


What is it?

A library for chest X-ray datasets and models, including pre-trained models.

TorchXRayVision is an open source software library for working with chest X-ray datasets and deep learning models. It provides a common interface and common pre-processing chain for a wide set of publicly available chest X-ray datasets. In addition, a number of classification and representation learning models with different architectures, trained on different data combinations, are available through the library to serve as baselines or feature extractors.

- For researchers addressing clinical questions, training models from scratch is a waste of time. To address this, TorchXRayVision provides pre-trained models, trained on large cohorts of data, that enable 1) rapid analysis of large datasets and 2) feature reuse for few-shot learning.

- For researchers developing algorithms, it is important to evaluate models robustly on multiple external datasets. Metadata associated with each dataset can vary greatly, which makes it difficult to apply methods across datasets. TorchXRayVision provides access to many datasets in a uniform way, so they can be swapped out with a single line of code. These datasets can also be merged and filtered to construct specific distribution shifts for studying generalization.

Twitter: @torchxrayvision

Getting started

$ pip install torchxrayvision

```python3
import torchxrayvision as xrv
import skimage.io, torch, torchvision

# Prepare the image:
img = skimage.io.imread("1674731.jpg")
img = xrv.datasets.normalize(img, 255)  # convert 8-bit image to [-1024, 1024] range
img = img.mean(2)[None, ...]  # Make single color channel (for an RGB input)

transform = torchvision.transforms.Compose([xrv.datasets.XRayCenterCrop(), xrv.datasets.XRayResizer(224)])

img = transform(img)
img = torch.from_numpy(img)

# Load model and process image
model = xrv.models.DenseNet(weights="densenet121-res224-all")
outputs = model(img[None, ...])  # or model.features(img[None, ...])

# Print results
print(dict(zip(model.pathologies, outputs[0].detach().numpy())))

# {'Atelectasis': 0.32797316, 'Consolidation': 0.42933336, 'Infiltration': 0.5316924,
#  'Pneumothorax': 0.28849724, 'Edema': 0.024142697, 'Emphysema': 0.5011832,
#  'Fibrosis': 0.51887786, 'Effusion': 0.27805611, 'Pneumonia': 0.18569896,
#  'Pleural_Thickening': 0.24489835, 'Cardiomegaly': 0.3645515, 'Nodule': 0.68982,
#  'Mass': 0.6392845, 'Hernia': 0.00993878, 'Lung Lesion': 0.011150705,
#  'Fracture': 0.51916164, 'Lung Opacity': 0.59073937, 'Enlarged Cardiomediastinum': 0.27218717}
```
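To make the pre-processing chain above more concrete, here is a minimal NumPy sketch of two of its steps: rescaling an 8-bit image to the [-1024, 1024] range and cropping the central square. It assumes a linear rescaling and a crop to the shorter side; `normalize_sketch` and `center_crop_sketch` are hypothetical helpers written for illustration, so in practice use `xrv.datasets.normalize` and `xrv.datasets.XRayCenterCrop`.

```python
import numpy as np

def normalize_sketch(img, maxval):
    """Linearly map [0, maxval] to [-1024, 1024] (assumed behaviour, for illustration only)."""
    img = img.astype(np.float32)
    return (2.0 * (img / maxval) - 1.0) * 1024.0

def center_crop_sketch(img):
    """Crop a (channels, height, width) array to a centered square of side min(height, width)."""
    _, h, w = img.shape
    side = min(h, w)
    top = (h - side) // 2
    left = (w - side) // 2
    return img[:, top:top + side, left:left + side]

# A fake 8-bit RGB image that is wider than it is tall
rgb = np.random.default_rng(0).integers(0, 256, size=(300, 400, 3), dtype=np.uint8)
img = normalize_sketch(rgb, 255)
img = img.mean(2)[None, ...]   # average the color channels -> shape (1, 300, 400)
img = center_crop_sketch(img)  # keep the central square -> shape (1, 300, 300)
```

The crop keeps the full height here and trims 50 pixels from each side of the width; resizing to 224x224 would follow as a separate step.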

A sample script to process images using pretrained models is process_image.py:

```
$ python3 process_image.py ../tests/00000001000.png -resize
{'preds': {'Atelectasis': 0.50577986, 'Cardiomegaly': 0.62151504, 'Consolidation': 0.3124331, 'Edema': 0.21286564, 'Effusion': 0.39427388, 'Emphysema': 0.503361, 'Enlarged Cardiomediastinum': 0.4313866, 'Fibrosis': 0.5401596, 'Fracture': 0.28907478, 'Hernia': 0.012677962, 'Infiltration': 0.5220189, 'Lung Lesion': 0.21828467, 'Lung Opacity': 0.36826086, 'Mass': 0.4104132, 'Nodule': 0.5091791, 'Pleural_Thickening': 0.5104176, 'Pneumonia': 0.18006423, 'Pneumothorax': 0.30677897}}
```

Models (demo notebook)

Specify weights for pretrained models (the 224x224 classifiers are DenseNet121; the 512x512 classifier is ResNet50)

Note: Each pretrained model has 18 outputs. The `all` model has every output trained. However, for the other weights some targets are not trained and will predict randomly because they do not exist in the training dataset. The only valid outputs are those listed in the `{dataset}.pathologies` field of the dataset that corresponds to the weights.
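For example, to keep only the trained outputs of a model whose weights come from a single dataset, one could filter the prediction dictionary by that dataset's pathology list. This is a plain-Python sketch; `valid_outputs` and the short lists below are hypothetical stand-ins for `model.pathologies`, the model's scores, and `{dataset}.pathologies`.

```python
def valid_outputs(model_pathologies, scores, dataset_pathologies):
    """Keep only outputs whose pathology appears in the training dataset's pathology list."""
    valid = set(dataset_pathologies)
    return {p: s for p, s in zip(model_pathologies, scores) if p in valid}

# Hypothetical example values
model_pathologies = ["Atelectasis", "Consolidation", "Pneumonia", "Fracture"]
scores = [0.33, 0.43, 0.19, 0.52]
dataset_pathologies = ["Atelectasis", "Consolidation", "Pneumonia"]

result = valid_outputs(model_pathologies, scores, dataset_pathologies)
# "Fracture" is dropped because the (hypothetical) training dataset has no such label
```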

```python3
# 224x224 models
model = xrv.models.DenseNet(weights="densenet121-res224-all")
model = xrv.models.DenseNet(weights="densenet121-res224-rsna")  # RSNA Pneumonia Challenge
model = xrv.models.DenseNet(weights="densenet121-res224-nih")  # NIH chest X-ray8
model = xrv.models.DenseNet(weights="densenet121-res224-pc")  # PadChest (University of Alicante)
model = xrv.models.DenseNet(weights="densenet121-res224-chex")  # CheXpert (Stanford)
model = xrv.models.DenseNet(weights="densenet121-res224-mimic_nb")  # MIMIC-CXR (MIT)
model = xrv.models.DenseNet(weights="densenet121-res224-mimic_ch")  # MIMIC-CXR (MIT)

# 512x512 models
model = xrv.models.ResNet(weights="resnet50-res512-all")

# DenseNet121 from JF Healthcare for the CheXpert competition
model = xrv.baseline_models.jfhealthcare.DenseNet()

# Official Stanford CheXpert model
model = xrv.baseline_models.chexpert.DenseNet(weights_zip="chexpert_weights.zip")

# Emory HITI lab race prediction model
model = xrv.baseline_models.emory_hiti.RaceModel()
model.targets  # ["Asian", "Black", "White"]

# Riken age prediction model
model = xrv.baseline_models.riken.AgeModel()
```

Benchmarks of the models are in BENCHMARKS.md, and the performance of some of the models can be seen in this paper: arxiv.org/abs/2002.02497.

Autoencoders

You can also load a pre-trained autoencoder that is trained on the PadChest, NIH, CheXpert, and MIMIC datasets.

```python3
ae = xrv.autoencoders.ResNetAE(weights="101-elastic")
z = ae.encode(image)
image2 = ae.decode(z)
```

Segmentation

You can load pretrained anatomical segmentation models (demo notebook).

```python3
seg_model = xrv.baseline_models.chestx_det.PSPNet()
output = seg_model(image)
output.shape  # [1, 14, 512, 512]
seg_model.targets  # ['Left Clavicle', 'Right Clavicle', 'Left Scapula', 'Right Scapula',
                   #  'Left Lung', 'Right Lung', 'Left Hilus Pulmonis', 'Right Hilus Pulmonis',
                   #  'Heart', 'Aorta', 'Facies Diaphragmatica', 'Mediastinum', 'Weasand', 'Spine']
```
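The segmentation output has one channel per anatomical target. Assuming each channel holds per-pixel logits that are scored independently (structures such as the heart and mediastinum can overlap), a sigmoid plus a 0.5 threshold turns the output into one boolean mask per target. This NumPy sketch uses random stand-in logits; `masks_from_logits` is a hypothetical helper, and a real PyTorch output would first need `.detach().numpy()`.

```python
import numpy as np

def masks_from_logits(output, targets, threshold=0.5):
    """Turn a (1, C, H, W) array of per-structure logits into a dict of boolean masks."""
    probs = 1.0 / (1.0 + np.exp(-output[0]))  # sigmoid, applied per pixel and per channel
    return {name: probs[i] > threshold for i, name in enumerate(targets)}

targets = ['Left Clavicle', 'Right Clavicle', 'Left Scapula', 'Right Scapula',
           'Left Lung', 'Right Lung', 'Left Hilus Pulmonis', 'Right Hilus Pulmonis',
           'Heart', 'Aorta', 'Facies Diaphragmatica', 'Mediastinum', 'Weasand', 'Spine']

# Stand-in for seg_model(image), at a smaller resolution than the real 512x512
fake_output = np.random.default_rng(0).normal(size=(1, len(targets), 64, 64))
masks = masks_from_logits(fake_output, targets)
areas = {name: int(m.sum()) for name, m in masks.items()}  # pixel count per structure
```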

Datasets

View the docstrings for more detail on each dataset, as well as the demo notebook and the example loading script.

```python3
transform = torchvision.transforms.Compose([xrv.datasets.XRayCenterCrop(),
                                            xrv.datasets.XRayResizer(224)])

# RSNA Pneumonia Detection Challenge. https://pubs.rsna.org/doi/full/10.1148/ryai.2019180041
d_kaggle = xrv.datasets.RSNA_Pneumonia_Dataset(imgpath="path to stage_2_train_images_jpg",
                                               transform=transform)

# CheXpert: A Large Chest Radiograph Dataset with Uncertainty Labels and Expert Comparison. https://arxiv.org/abs/1901.07031
d_chex = xrv.datasets.CheX_Dataset(imgpath="path to CheXpert-v1.0-small",
                                   csvpath="path to CheXpert-v1.0-small/train.csv",
                                   transform=transform)

# National Institutes of Health ChestX-ray8 dataset. https://arxiv.org/abs/1705.02315
d_nih = xrv.datasets.NIH_Dataset(imgpath="path to NIH images")

# A relabelling of a subset of NIH images from: https://pubs.rsna.org/doi/10.1148/radiol.2019191293
d_nih2 = xrv.datasets.NIH_Google_Dataset(imgpath="path to NIH images")

# PadChest: A large chest x-ray image dataset with multi-label annotated reports. https://arxiv.org/abs/1901.07441
d_pc = xrv.datasets.PC_Dataset(imgpath="path to image folder")

# COVID-19 Image Data Collection. https://arxiv.org/abs/2006.11988
d_covid19 = xrv.datasets.COVID19_Dataset()  # specify imgpath and csvpath for the dataset

# SIIM Pneumothorax Dataset. https://www.kaggle.com/c/siim-acr-pneumothorax-segmentation
d_siim = xrv.datasets.SIIM_Pneumothorax_Dataset(imgpath="dicom-images-train/",
                                                csvpath="train-rle.csv")

# VinDr-CXR: An open dataset of chest X-rays with radiologist's annotations. https://arxiv.org/abs/2012.15029
d_vin = xrv.datasets.VinBrain_Dataset(imgpath=".../train", csvpath=".../train.csv")

# National Library of Medicine Tuberculosis Datasets. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4256233/
d_nlmtb = xrv.datasets.NLMTB_Dataset(imgpath="path to MontgomerySet or ChinaSet_AllFiles")
```

Dataset fields

Each dataset contains a number of fields. These fields are maintained when xrv.datasets.SubsetDataset and xrv.datasets.MergeDataset are used.

- `.pathologies`: This field is a list of the pathologies contained in this dataset that will be contained in the `.labels` field.
- `.labels`: This field contains a 1, 0, or NaN for each label defined in `.pathologies`.
- `.csv`: This field is a pandas DataFrame of the metadata csv file that comes with the data. Each row aligns with the elements of the dataset, so indexing using `.iloc` will work.

If possible, each dataset's `.csv` will have some common fields, aligned across datasets. The list is as follows:

- `csv.patientid`: A unique id that will uniquely identify samples in this dataset.
- `csv.offset_day_int`: An integer time offset for the image in units of days. This is expected to be for relative times and has no absolute meaning, although for some datasets it is the epoch time.
- `csv.age_years`: The age of the patient in years.
- `csv.sex_male`: If the patient is male.
- `csv.sex_female`: If the patient is female.

Dataset tools

`relabel_dataset` will align labels to have the same order as the pathologies argument.

```python3
xrv.datasets.relabel_dataset(xrv.datasets.default_pathologies, d_nih)  # has side effects
```
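Assuming that relabeling reorders the label columns to match the requested list, fills pathologies the dataset lacks with NaN (consistent with the NaN convention for `.labels`), and drops pathologies not requested, its effect on the label matrix can be sketched with NumPy. `relabel_sketch` is a hypothetical helper working on plain arrays; unlike the library function, it returns a new array instead of modifying a dataset in place.

```python
import numpy as np

def relabel_sketch(target_pathologies, pathologies, labels):
    """Reorder label columns to follow target_pathologies.

    Missing pathologies become all-NaN columns; extra pathologies are dropped.
    """
    columns = []
    for p in target_pathologies:
        if p in pathologies:
            columns.append(labels[:, pathologies.index(p)].astype(float))
        else:
            columns.append(np.full(labels.shape[0], np.nan))
    return np.stack(columns, axis=1)

pathologies = ["Effusion", "Atelectasis", "Hernia"]
labels = np.array([[1, 0, 0],
                   [0, 1, np.nan]])
new = relabel_sketch(["Atelectasis", "Effusion", "Pneumonia"], pathologies, labels)
# Column 0 is the old Atelectasis column, column 1 the old Effusion column,
# column 2 (Pneumonia) is all NaN, and Hernia is dropped.
```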

specify a subset of views (demo notebook)

```python3
d_kaggle = xrv.datasets.RSNA_Pneumonia_Dataset(imgpath="...",
                                               views=["PA","AP","AP Supine"])
```
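Conceptually, the `views` argument keeps only the images whose view label is in the requested list. A plain-Python sketch of that selection, assuming one view string per image; `filter_views` and the metadata column below are hypothetical:

```python
def filter_views(view_per_image, views):
    """Return the indices of images whose view is in the requested list."""
    wanted = set(views)
    return [i for i, v in enumerate(view_per_image) if v in wanted]

view_per_image = ["PA", "LATERAL", "AP", "AP Supine", "PA"]  # hypothetical metadata
idx = filter_views(view_per_image, ["PA", "AP", "AP Supine"])  # the lateral image is excluded
```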

specify only 1 image per patient

```python3
d_kaggle = xrv.datasets.RSNA_Pneumonia_Dataset(imgpath="...",
                                               unique_patients=True)
```
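Keeping one image per patient amounts to deduplicating on the patient id. This plain-Python sketch keeps the first image seen for each patient; that rule is an assumption for illustration, and the library may choose which image to keep differently. `one_per_patient` and the ids are hypothetical.

```python
def one_per_patient(patient_ids):
    """Return the index of the first image seen for each patient id."""
    seen = set()
    keep = []
    for i, pid in enumerate(patient_ids):
        if pid not in seen:
            seen.add(pid)
            keep.append(i)
    return keep

patient_ids = ["p1", "p2", "p1", "p3", "p2"]  # hypothetical csv.patientid values
keep = one_per_patient(patient_ids)
```

Deduplicating this way avoids over-weighting patients with many follow-up images and prevents the same patient from appearing in both training and evaluation splits.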

obtain summary statistics per dataset

```python3
d_chex = xrv.datasets.CheX_Dataset(imgpath="CheXpert-v1.0-small",
                                   csvpath="CheXpert-v1.0-small/train.csv",
                                   views=["PA","AP"], unique_patients=False)
d_chex  # displaying the dataset in an interactive session shows its summary:
# CheX_Dataset num_samples=191010 views=['PA', 'AP']
# {'Atelectasis': {0.0: 17621, 1.0: 29718},
#  'Cardiomegaly': {0.0: 22645, 1.0: 23384},
#  'Consolidation': {0.0: 30463, 1.0: 12982},
#  'Edema': {0.0: 29449, 1.0: 49674},
#  'Effusion': {0.0: 34376, 1.0: 76894},
#  'Enlarged Cardiomediastinum': {0.0: 26527, 1.0: 9186},
#  'Fracture': {0.0: 18111, 1.0: 7434},
#  'Lung Lesion': {0.0: 17523, 1.0: 7040},
#  'Lung Opacity': {0.0: 20165, 1.0: 94207},
#  'Pleural Other': {0.0: 17166, 1.0: 2503},
#  'Pneumonia': {0.0: 18105, 1.0: 4674},
#  'Pneumothorax': {0.0: 54165, 1.0: 17693},
#  'Support Devices': {0.0: 21757, 1.0: 99747}}
```
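The per-pathology counts in the summary tally the 0 and 1 entries of `.labels`, leaving out NaN (unknown) entries. A minimal NumPy sketch of that tally, assuming a labels matrix with one column per pathology; `label_counts` is a hypothetical helper, not part of the library:

```python
import numpy as np

def label_counts(pathologies, labels):
    """Count 0 and 1 labels per pathology, ignoring NaN (unknown) entries."""
    counts = {}
    for j, p in enumerate(pathologies):
        col = labels[:, j]
        col = col[~np.isnan(col)]  # drop unknown labels
        values, n = np.unique(col, return_counts=True)
        counts[p] = {float(v): int(c) for v, c in zip(values, n)}
    return counts

pathologies = ["Atelectasis", "Edema"]
labels = np.array([[1, 0],
                   [0, np.nan],
                   [1, 1],
                   [np.nan, 0]])
counts = label_counts(pathologies, labels)
# {'Atelectasis': {0.0: 1, 1.0: 2}, 'Edema': {0.0: 2, 1.0: 1}}
```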

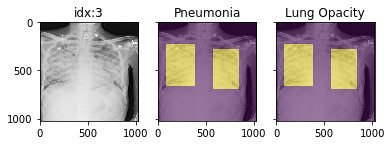

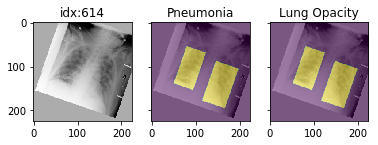

Pathology masks (demo notebook)

Masks are available in the following datasets:

```python3
xrv.datasets.RSNA_Pneumonia_Dataset()  # for Lung Opacity
xrv.datasets.SIIM_Pneumothorax_Dataset()  # for Pneumothorax
xrv.datasets.NIH_Dataset()  # for Cardiomegaly, Mass, Effusion, ...
```

Example usage:

```python3
d_rsna = xrv.datasets.RSNA_Pneumonia_Dataset(imgpath="stage_2_train_images_jpg",
                                            views=["PA","AP"],
                                            pathology_masks=True)

# The has_masks column will let you know if any masks exist for that sample
d_rsna.csv.has_masks.value_counts()
# False    20672
# True      6012

# Each sample will have a pathology_masks dictionary where the index
# of each pathology will correspond to a mask of that pathology (if it exists).
# There may be more than one mask per sample, but only one per pathology.
idx = d_rsna.csv.has_masks.values.argmax()  # position of the first sample that has a mask
sample = d_rsna[idx]
sample["pathology_masks"][d_rsna.pathologies.index("Lung Opacity")]
```

It also works with data augmentation if you pass `data_aug=data_transforms` to the dataset. The random seed is matched so that the image and the mask receive the same random transformation.
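The alignment works because the same random transformation is drawn twice from the same seed, once for the image and once for the mask. This NumPy sketch shows the idea with a hypothetical `random_shift` helper; it is an illustration of seed matching, not the library's augmentation code, which uses its own transforms and seeding.

```python
import numpy as np

def random_shift(arr, seed, max_shift=3):
    """Shift a 2D array by a random (dy, dx) drawn from a generator seeded with `seed`."""
    rng = np.random.default_rng(seed)
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    return np.roll(arr, shift=(int(dy), int(dx)), axis=(0, 1))

image = np.zeros((16, 16))
image[5:9, 6:10] = 1.0        # a bright 4x4 "lesion"
mask = image > 0              # the mask marks exactly that region

seed = 42                     # one seed per sample, reused for image and mask
aug_image = random_shift(image, seed)
aug_mask = random_shift(mask, seed)
# Both arrays were shifted by the same (dy, dx), so the mask still covers the lesion.
```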

Distribution shift tools (demo notebook)

The class `xrv.datasets.CovariateDataset` takes two datasets and two arrays representing the labels. The samples will be returned with the desired ratio of images from each site. The goal here is to simulate a covariate shift to make a model focus on an incorrect feature. Then the shift can be reversed in the validation data, causing a catastrophic failure in generalization performance.

- `ratio=0.0` means images from d1 will have a positive label.
- `ratio=0.5` means images from d1 will have half of the positive labels.
- `ratio=1.0` means images from d1 will have no positive label.

With any ratio the number of samples returned will be the same.

```python3
# d1, d2: two datasets, each with a specific condition
# d1_target, d2_target: arrays with the target label to predict for each dataset
d = xrv.datasets.CovariateDataset(d1=d1,
                                  d1_target=d1_target,
                                  d2=d2,
                                  d2_target=d2_target,
                                  mode="train",  # "train", "valid", or "test"
                                  ratio=0.9)
```
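The ratio semantics above can be made concrete by computing how many positives each site contributes. This sketch follows the stated rule for positives (ratio 0.0 puts all of them in d1, 1.0 puts none there); splitting the negatives the opposite way, so that site and label become correlated, is our own assumption. `covariate_split` is a hypothetical helper and not the library's implementation.

```python
def covariate_split(n_pos, n_neg, ratio):
    """Split positive and negative counts between sites d1 and d2 for a given ratio.

    Positives: a fraction (1 - ratio) comes from d1, as described above.
    Negatives (assumption): a fraction `ratio` comes from d1.
    The total number of samples is the same for every ratio.
    """
    d1_pos = round((1 - ratio) * n_pos)
    d1_neg = round(ratio * n_neg)
    return {"d1_pos": d1_pos, "d2_pos": n_pos - d1_pos,
            "d1_neg": d1_neg, "d2_neg": n_neg - d1_neg}

train = covariate_split(100, 100, 0.9)  # training: d1 holds few positives
valid = covariate_split(100, 100, 0.1)  # validation: the shift is reversed
```

Because site membership predicts the label in the training split, a model can learn site-specific cues; reversing the ratio in validation exposes that shortcut.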

Citation

Primary TorchXRayVision paper: https://arxiv.org/abs/2111.00595

```
Joseph Paul Cohen, Joseph D. Viviano, Paul Bertin, Paul Morrison, Parsa Torabian, Matteo Guarrera, Matthew P Lungren, Akshay Chaudhari, Rupert Brooks, Mohammad Hashir, Hadrien Bertrand
TorchXRayVision: A library of chest X-ray datasets and models.
Medical Imaging with Deep Learning
https://github.com/mlmed/torchxrayvision, 2020

@inproceedings{Cohen2022xrv,
title = {{TorchXRayVision: A library of chest X-ray datasets and models}},
author = {Cohen, Joseph Paul and Viviano, Joseph D. and Bertin, Paul and Morrison, Paul and Torabian, Parsa and Guarrera, Matteo and Lungren, Matthew P and Chaudhari, Akshay and Brooks, Rupert and Hashir, Mohammad and Bertrand, Hadrien},
booktitle = {Medical Imaging with Deep Learning},
url = {https://github.com/mlmed/torchxrayvision},
arxivId = {2111.00595},
year = {2022}
}
```

and this paper which initiated development of the library: [https://arxiv.org/abs/2002.02497](https://arxiv.org/abs/2002.02497)

```
Joseph Paul Cohen and Mohammad Hashir and Rupert Brooks and Hadrien Bertrand
On the limits of cross-domain generalization in automated X-ray prediction.
Medical Imaging with Deep Learning 2020 (Online: https://arxiv.org/abs/2002.02497)

@inproceedings{cohen2020limits,
title={On the limits of cross-domain generalization in automated X-ray prediction},
author={Cohen, Joseph Paul and Hashir, Mohammad and Brooks, Rupert and Bertrand, Hadrien},
booktitle={Medical Imaging with Deep Learning},
year={2020},
url={https://arxiv.org/abs/2002.02497}
}
```

Supporters/Sponsors

- CIFAR (Canadian Institute for Advanced Research)
- Mila, Quebec AI Institute, University of Montreal
- Stanford University's Center for Artificial Intelligence in Medicine & Imaging
- Carestream Health

Owner

- Name: Machine Learning and Medicine Lab

- Login: mlmed

- Kind: organization

- Website: https://mlmed.org/

- Repositories: 3

- Profile: https://github.com/mlmed


GitHub Events

Total

- Create event: 8

- Release event: 3

- Issues event: 13

- Watch event: 141

- Delete event: 4

- Issue comment event: 27

- Push event: 22

- Pull request review event: 6

- Pull request event: 6

- Fork event: 19

Last Year

- Create event: 8

- Release event: 3

- Issues event: 13

- Watch event: 141

- Delete event: 4

- Issue comment event: 27

- Push event: 22

- Pull request review event: 6

- Pull request event: 6

- Fork event: 19

Committers

Last synced: about 3 years ago

All Time

- Total Commits: 350

- Total Committers: 10

- Avg Commits per committer: 35.0

- Development Distribution Score (DDS): 0.097

Top Committers

| Name | Email | Commits |
|---|---|---|
| Joseph Paul Cohen | j****h@j****m | 316 |
| Rupert Brooks | r****s@n****m | 15 |
| Matteo Guarrera | 3****a@u****m | 5 |
| Rupert Brooks | r****s@g****m | 3 |
| Janos Tolgyesi | j****i@n****m | 3 |
| Jean-Remi King | j****i@f****m | 2 |
| Evan Czyzycki | e****6@g****m | 2 |
| Joseph Viviano | j****h@v****a | 2 |
| Parsa Torabian | d****a@g****m | 1 |
| Abdolkarim Saeedi | p****3@g****m | 1 |


Issues and Pull Requests

Last synced: 9 months ago

All Time

- Total issues: 70

- Total pull requests: 62

- Average time to close issues: 4 months

- Average time to close pull requests: 6 days

- Total issue authors: 58

- Total pull request authors: 9

- Average comments per issue: 3.86

- Average comments per pull request: 0.39

- Merged pull requests: 54

- Bot issues: 0

- Bot pull requests: 0

Past Year

- Issues: 7

- Pull requests: 5

- Average time to close issues: 7 days

- Average time to close pull requests: 14 minutes

- Issue authors: 7

- Pull request authors: 1

- Average comments per issue: 2.43

- Average comments per pull request: 0.0

- Merged pull requests: 5

- Bot issues: 0

- Bot pull requests: 0

Top Authors

Issue Authors

- josephdviviano (4)

- catfish132 (4)

- pat-rig (2)

- ieee8023 (2)

- PabloMessina (2)

- Htermotto (2)

- lkourti (2)

- danbider (2)

- Eldo-rado (1)

- antonie-z (1)

- omarespejel (1)

- oplatek (1)

- Liqq1 (1)

- dgmato (1)

- chiragnagpal (1)

Pull Request Authors

- ieee8023 (53)

- matteoguarrera (2)

- RupertBrooks (2)

- etetteh (1)

- animesh (1)

- josephdviviano (1)

- Htermotto (1)

- a-parida12 (1)

- KiLJ4EdeN (1)


Packages

- Total packages: 1


Total downloads:

- pypi 9,591 last-month

- Total docker downloads: 347

- Total dependent packages: 1

- Total dependent repositories: 31

- Total versions: 50

- Total maintainers: 2

pypi.org: torchxrayvision

TorchXRayVision: A library of chest X-ray datasets and models

- Homepage: https://github.com/mlmed/torchxrayvision

- Documentation: https://torchxrayvision.readthedocs.io/

- License: Apache Software License


Latest release: 1.3.5

published 12 months ago


Maintainers (2)

Dependencies

- actions/checkout v2 composite

- actions/setup-python v2 composite

- numpy >=1

- pandas >=1

- pillow >=5.3.0

- requests >=1

- scikit-image >=0.16

- torch >=1

- torchvision >=0.5

- tqdm >=4

- sphinx-rtd-theme *

- pydicom >=2.3.1 development

- pytest * development