anomalib

An anomaly detection library comprising state-of-the-art algorithms and features such as experiment management, hyper-parameter optimization, and edge inference.

Science Score: 54.0%

This score indicates how likely this project is to be science-related based on various indicators:

✓CITATION.cff file

Found CITATION.cff file

✓codemeta.json file

Found codemeta.json file

✓.zenodo.json file

Found .zenodo.json file

○DOI references

○Academic publication links

✓Committers with academic emails

1 of 86 committers (1.2%) from academic institutions

○Institutional organization owner

○JOSS paper metadata

○Scientific vocabulary similarity

Low similarity (14.0%) to scientific vocabulary

Repository

An anomaly detection library comprising state-of-the-art algorithms and features such as experiment management, hyper-parameter optimization, and edge inference.

Basic Info

- Host: GitHub

- Owner: open-edge-platform

- License: apache-2.0

- Language: Python

- Default Branch: main

- Homepage: https://anomalib.readthedocs.io/en/latest/

- Size: 61.5 MB

Statistics

- Stars: 4,895

- Watchers: 48

- Forks: 799

- Open Issues: 109

- Releases: 40

Metadata Files

README.md

**A library for benchmarking, developing and deploying deep learning anomaly detection algorithms**

---

[Key Features](#key-features) •

[Docs](https://anomalib.readthedocs.io/en/latest/) •

[Notebooks](examples/notebooks) •

[License](LICENSE)

🌟 Announcing v2.1.0 Release! 🌟

We're excited to announce the release of Anomalib v2.1.0! This version brings several state-of-the-art models and anomaly detection datasets. Key features include:

New models:

- 🖼️ UniNet (CVPR 2025): A contrastive learning-guided unified framework with feature selection for anomaly detection.

- 🖼️ Dinomaly (CVPR 2025): An encoder-decoder model built on a "less is more" philosophy that leverages pre-trained foundation models.

- 🎥 Fuvas (ICASSP 2025): Few-shot unsupervised video anomaly segmentation via low-rank factorization of spatio-temporal features.

New datasets:

- MVTec AD 2: A new version of the MVTec AD dataset with 8 categories of industrial anomaly detection.

- MVTec LOCO AD: MVTec logical constraints anomaly detection dataset that includes both structural and logical anomalies.

- Real-IAD: A real-world multi-view dataset for benchmarking versatile industrial anomaly detection.

- VAD dataset: Valeo Anomaly Dataset (VAD) showcasing a diverse range of defects, from highly obvious to extremely subtle.

We value your input! Please share feedback via GitHub Issues or our Discussions.

👋 Introduction

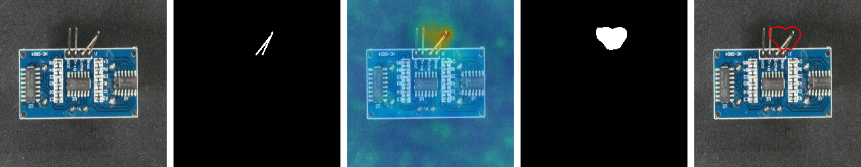

Anomalib is a deep learning library that aims to collect state-of-the-art anomaly detection algorithms for benchmarking on both public and private datasets. Anomalib provides several ready-to-use implementations of anomaly detection algorithms described in the recent literature, as well as a set of tools that facilitate the development and implementation of custom models. The library has a strong focus on visual anomaly detection, where the goal of the algorithm is to detect and/or localize anomalies within images or videos in a dataset. Anomalib is constantly updated with new algorithms and training/inference extensions, so keep checking!

Key features

- Simple and modular API and CLI for training, inference, benchmarking, and hyperparameter optimization.

- The largest public collection of ready-to-use deep learning anomaly detection algorithms and benchmark datasets.

- Lightning-based model implementations that reduce boilerplate code and limit implementation effort to the bare essentials.

- The majority of models can be exported to OpenVINO Intermediate Representation (IR) for accelerated inference on Intel hardware.

- A set of inference tools for quick and easy deployment of standard or custom anomaly detection models.

📦 Installation

Anomalib can be installed from PyPI. We recommend using a virtual environment and a modern package installer like uv or pip.

🚀 Quick Install

For a standard installation, you can use uv or pip. This will install the latest version of Anomalib with its core dependencies. PyTorch will be installed based on its default behavior, which usually works for CPU and standard CUDA setups.

```bash
# With uv
uv pip install anomalib

# Or with pip
pip install anomalib
```
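After either command finishes, it can be useful to confirm that the package is visible in the active environment. The following is a minimal sketch that uses only the Python standard library; the helper name `installed_version` is hypothetical and not part of Anomalib.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str = "anomalib") -> Optional[str]:
    """Return the installed version of `package`, or None if it is not installed.

    `installed_version` is an illustrative helper, not an Anomalib function.
    """
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version()
    if version is None:
        print("anomalib is not installed in this environment")
    else:
        print(f"anomalib {version} is installed")
```

Running the check inside the virtual environment you installed into avoids confusing a system-wide Python with the one that holds Anomalib.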

For more control over the installation, such as specifying the PyTorch backend (e.g., XPU, CUDA and ROCm) or installing extra dependencies for specific models, see the advanced options below.

💡 Advanced Installation: Specify Hardware Backend

To ensure compatibility with your hardware, you can specify a backend during installation. This is the recommended approach for production environments and for hardware other than CPU or standard CUDA.

**Using `uv`:**

```bash
# CPU support (default, works on all platforms)
uv pip install "anomalib[cpu]"

# CUDA 12.4 support (Linux/Windows with NVIDIA GPU)
uv pip install "anomalib[cu124]"

# CUDA 12.1 support (Linux/Windows with NVIDIA GPU)
uv pip install "anomalib[cu121]"

# CUDA 11.8 support (Linux/Windows with NVIDIA GPU)
uv pip install "anomalib[cu118]"

# ROCm support (Linux with AMD GPU)
uv pip install "anomalib[rocm]"

# Intel XPU support (Linux with Intel GPU)
uv pip install "anomalib[xpu]"
```

**Using `pip`:**

The same extras can be used with `pip`:

```bash
pip install "anomalib[cu124]"
```

🧩 Advanced Installation: Additional Dependencies

Anomalib includes most dependencies by default. For specialized features, you may need additional optional dependencies. Remember to include your hardware-specific extra.

```bash
# Example: Install with OpenVINO support and CUDA 12.4
uv pip install "anomalib[openvino,cu124]"

# Example: Install all optional dependencies for a CPU-only setup
uv pip install "anomalib[full,cpu]"
```

Here is a list of available optional dependency groups:

| Extra         | Description                              | Purpose                                     |
| :------------ | :--------------------------------------- | :------------------------------------------ |
| `[openvino]`  | Intel OpenVINO optimization              | For accelerated inference on Intel hardware |
| `[clip]`      | Vision-language models                   | `winclip`                                   |
| `[vlm]`       | Vision-language model backends           | Advanced VLM features                       |
| `[loggers]`   | Experiment tracking (wandb, comet, etc.) | For experiment management                   |
| `[notebooks]` | Jupyter notebook support                 | For running example notebooks               |
| `[full]`      | All optional dependencies                | All optional features                       |

🔧 Advanced Installation: Install from Source

For contributing to `anomalib` or using a development version, you can install from source.

**Using `uv`:**

This is the recommended method for developers as it uses the project's lock file for reproducible environments.

```bash
git clone https://github.com/open-edge-platform/anomalib.git
cd anomalib

# Create the virtual environment
uv venv

# Sync with the lockfile for a specific backend (e.g., CPU)
uv sync --extra cpu

# Or for a different backend like CUDA 12.4
uv sync --extra cu124

# To set up a full development environment
uv sync --extra dev --extra cpu
```

**Using `pip`:**

```bash
git clone https://github.com/open-edge-platform/anomalib.git
cd anomalib

# Install in editable mode with a specific backend
pip install -e ".[cpu]"

# Install with development dependencies
pip install -e ".[dev,cpu]"
```

🧠 Training

Anomalib supports both API and CLI-based training approaches:

🔌 Python API

```python
from anomalib.data import MVTecAD
from anomalib.models import Patchcore
from anomalib.engine import Engine

# Initialize components
datamodule = MVTecAD()
model = Patchcore()
engine = Engine()

# Train the model
engine.fit(datamodule=datamodule, model=model)
```
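As a variation on the snippet above, the sketch below wraps training in a function, selects a single MVTec AD category, and evaluates the model afterwards. It assumes that `MVTecAD` accepts a `category` argument (mirroring the `--data.category` CLI option) and that `Engine.test` accepts the same `datamodule` and `model` keywords as `Engine.fit`; the function name `train_and_test` is hypothetical. Check the API reference for your installed version before relying on it.

```python
def train_and_test(category: str = "transistor"):
    """Train Patchcore on one MVTec AD category, then evaluate it.

    Illustrative helper, not part of Anomalib. Calling it requires
    anomalib to be installed and may download the dataset on first use.
    """
    # Local imports keep this module importable without anomalib installed.
    from anomalib.data import MVTecAD
    from anomalib.engine import Engine
    from anomalib.models import Patchcore

    # Assumption: `category` is the constructor argument behind --data.category.
    datamodule = MVTecAD(category=category)
    model = Patchcore()
    engine = Engine()

    # Fit on the training split, then report metrics on the test split.
    engine.fit(datamodule=datamodule, model=model)
    return engine.test(datamodule=datamodule, model=model)
```

Keeping the three components (datamodule, model, engine) as separate objects is what makes it easy to swap the model or dataset without touching the rest of the script.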

⌨️ Command Line

```bash
# Train with default settings
anomalib train --model Patchcore --data anomalib.data.MVTecAD

# Train with custom category
anomalib train --model Patchcore --data anomalib.data.MVTecAD --data.category transistor

# Train with config file
anomalib train --config path/to/config.yaml
```
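When training has to be launched from a script (for example, looping over several categories), the same commands can be assembled in Python and passed to `subprocess`. This is a minimal sketch: the helper names `build_train_command` and `run_training` are hypothetical, and only the flags shown above are used.

```python
import shlex
import shutil
import subprocess
from typing import List, Optional


def build_train_command(
    model: str = "Patchcore",
    data: str = "anomalib.data.MVTecAD",
    category: Optional[str] = None,
    config: Optional[str] = None,
) -> List[str]:
    """Build an `anomalib train` argument list (illustrative helper)."""
    if config is not None:
        # A config file replaces the individual --model/--data flags.
        return ["anomalib", "train", "--config", config]
    command = ["anomalib", "train", "--model", model, "--data", data]
    if category is not None:
        command += ["--data.category", category]
    return command


def run_training(command: List[str]) -> int:
    """Run the command, failing early if the `anomalib` CLI is not on PATH."""
    if shutil.which(command[0]) is None:
        raise RuntimeError("the 'anomalib' executable was not found on PATH")
    return subprocess.run(command, check=True).returncode


if __name__ == "__main__":
    # Print the command instead of running it, so the sketch has no side effects.
    print(shlex.join(build_train_command(category="transistor")))
```

Passing the arguments as a list rather than a single shell string avoids quoting problems with paths that contain spaces.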

🤖 Inference

Anomalib provides multiple inference options including Torch, Lightning, Gradio, and OpenVINO. Here's how to get started:

🔌 Python API

```python
# Load model and make predictions
predictions = engine.predict(
    datamodule=datamodule,
    model=model,
    ckpt_path="path/to/checkpoint.ckpt",
)
```
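Once predictions are available, a common next step is to list the images with the highest anomaly scores. The sketch below assumes per-image records that expose `image_path` and `pred_score` attributes; this is an assumption about the output format, and depending on the installed version `engine.predict` may return batches that need to be flattened into per-image items first. The helper `rank_by_score` is hypothetical, and the demo uses stand-in objects rather than real predictions.

```python
from types import SimpleNamespace
from typing import Iterable, List, Tuple


def rank_by_score(predictions: Iterable, top_k: int = 5) -> List[Tuple[str, float]]:
    """Return the `top_k` (image_path, score) pairs, most anomalous first.

    Assumes each record has `image_path` and `pred_score` attributes
    (an assumption about the prediction format, not a documented contract).
    """
    rows = [(str(p.image_path), float(p.pred_score)) for p in predictions]
    rows.sort(key=lambda row: row[1], reverse=True)
    return rows[:top_k]


if __name__ == "__main__":
    # Stand-in records used only to demonstrate the helper.
    fake_predictions = [
        SimpleNamespace(image_path="a.png", pred_score=0.2),
        SimpleNamespace(image_path="b.png", pred_score=0.9),
        SimpleNamespace(image_path="c.png", pred_score=0.5),
    ]
    for path, score in rank_by_score(fake_predictions, top_k=2):
        print(f"{path}: {score:.2f}")
```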

⌨️ Command Line

```bash
# Basic prediction
anomalib predict --model anomalib.models.Patchcore \
                 --data anomalib.data.MVTecAD \
                 --ckpt_path path/to/model.ckpt

# Prediction with results
anomalib predict --model anomalib.models.Patchcore \
                 --data anomalib.data.MVTecAD \
                 --ckpt_path path/to/model.ckpt \
                 --return_predictions
```

📘 Note: For advanced inference options including Gradio and OpenVINO, check our Inference Documentation.

Training on Intel GPUs

[!Note] Currently, only single GPU training is supported on Intel GPUs. These commands were tested on Arc 750 and Arc 770.

Ensure that you have PyTorch with XPU support installed. For more information, please refer to the PyTorch XPU documentation.

🔌 API

```python
from anomalib.data import MVTecAD
from anomalib.engine import Engine, SingleXPUStrategy, XPUAccelerator
from anomalib.models import Stfpm

engine = Engine(
    strategy=SingleXPUStrategy(),
    accelerator=XPUAccelerator(),
)
engine.train(Stfpm(), datamodule=MVTecAD())
```

⌨️ CLI

```bash
anomalib train --model Padim --data MVTecAD --trainer.accelerator xpu --trainer.strategy xpu_single
```
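Before launching an XPU run, it may help to confirm that the installed PyTorch build can actually see an Intel GPU. The following is a minimal sketch, assuming a PyTorch version that exposes the `torch.xpu` module; on builds without it, or when PyTorch is not installed at all, the check simply reports `False`. The helper name `xpu_available` is hypothetical.

```python
def xpu_available() -> bool:
    """Return True if PyTorch is installed and reports a usable Intel XPU.

    Illustrative helper; returns False instead of raising when PyTorch
    or its `torch.xpu` module is missing.
    """
    try:
        import torch
    except ImportError:
        return False
    xpu = getattr(torch, "xpu", None)
    return bool(xpu is not None and xpu.is_available())


if __name__ == "__main__":
    if xpu_available():
        print("Intel XPU detected: the xpu accelerator options can be used")
    else:
        print("No Intel XPU detected: install PyTorch with XPU support first")
```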

⚙️ Hyperparameter Optimization

Anomalib supports hyperparameter optimization (HPO) using Weights & Biases and Comet.ml.

```bash
# Run HPO with Weights & Biases
anomalib hpo --backend WANDB --sweep_config tools/hpo/configs/wandb.yaml
```

📘 Note: For detailed HPO configuration, check our HPO Documentation.

🧪 Experiment Management

Track your experiments with popular logging platforms through PyTorch Lightning loggers:

- 📊 Weights & Biases

- 📈 Comet.ml

- 📉 TensorBoard

Enable logging in your config file to track:

- Hyperparameters

- Metrics

- Model graphs

- Test predictions

📘 Note: For logging setup, see our Logging Documentation.

📊 Benchmarking

Evaluate and compare model performance across different datasets:

```bash
# Run benchmarking with default configuration
anomalib benchmark --config tools/experimental/benchmarking/sample.yaml
```

💡 Tip: Check individual model performance in their respective README files.

✍️ Reference

If you find Anomalib useful in your research or work, please cite:

```tex
@inproceedings{akcay2022anomalib,
  title={Anomalib: A deep learning library for anomaly detection},
  author={Akcay, Samet and Ameln, Dick and Vaidya, Ashwin and Lakshmanan, Barath and Ahuja, Nilesh and Genc, Utku},
  booktitle={2022 IEEE International Conference on Image Processing (ICIP)},
  pages={1706--1710},
  year={2022},
  organization={IEEE}
}
```

👥 Contributing

We welcome contributions! Check out our Contributing Guide to get started.

Thank you to all our contributors!

Owner

- Name: Open Edge Platform

- Login: open-edge-platform

- Kind: organization

- Email: webadmin@linux.intel.com

- Location: United States of America

- Repositories: 1

- Profile: https://github.com/open-edge-platform

Citation (CITATION.cff)

# This CITATION.cff file was generated with cffinit.
# Visit https://bit.ly/cffinit to generate yours today!

cff-version: 1.2.0
title: "Anomalib: A Deep Learning Library for Anomaly Detection"
message: "If you use this library and love it, cite the software and the paper \U0001F917"
authors:
  - given-names: Samet
    family-names: Akcay
    email: samet.akcay@intel.com
    affiliation: Intel
  - given-names: Dick
    family-names: Ameln
    email: dick.ameln@intel.com
    affiliation: Intel
  - given-names: Ashwin
    family-names: Vaidya
    email: ashwin.vaidya@intel.com
    affiliation: Intel
  - given-names: Barath
    family-names: Lakshmanan
    email: barath.lakshmanan@intel.com
    affiliation: Intel
  - given-names: Nilesh
    family-names: Ahuja
    email: nilesh.ahuja@intel.com
    affiliation: Intel
  - given-names: Utku
    family-names: Genc
    email: utku.genc@intel.com
    affiliation: Intel
version: 0.2.6
doi: https://doi.org/10.48550/arXiv.2202.08341
date-released: 2022-02-18
references:
  - type: article
    authors:
      - given-names: Samet
        family-names: Akcay
        email: samet.akcay@intel.com
        affiliation: Intel
      - given-names: Dick
        family-names: Ameln
        email: dick.ameln@intel.com
        affiliation: Intel
      - given-names: Ashwin
        family-names: Vaidya
        email: ashwin.vaidya@intel.com
        affiliation: Intel
      - given-names: Barath
        family-names: Lakshmanan
        email: barath.lakshmanan@intel.com
        affiliation: Intel
      - given-names: Nilesh
        family-names: Ahuja
        email: nilesh.ahuja@intel.com
        affiliation: Intel
      - given-names: Utku
        family-names: Genc
        email: utku.genc@intel.com
        affiliation: Intel
    title: "Anomalib: A Deep Learning Library for Anomaly Detection"
    year: 2022
    journal: ArXiv
    doi: https://doi.org/10.48550/arXiv.2202.08341
    url: https://arxiv.org/abs/2202.08341
    abstract: >-
      This paper introduces anomalib, a novel library for
      unsupervised anomaly detection and localization.
      With reproducibility and modularity in mind, this
      open-source library provides algorithms from the
      literature and a set of tools to design custom
      anomaly detection algorithms via a plug-and-play
      approach. Anomalib comprises state-of-the-art
      anomaly detection algorithms that achieve top
      performance on the benchmarks and that can be used
      off-the-shelf. In addition, the library provides
      components to design custom algorithms that could
      be tailored towards specific needs. Additional
      tools, including experiment trackers, visualizers,
      and hyper-parameter optimizers, make it simple to
      design and implement anomaly detection models. The
      library also supports OpenVINO model optimization
      and quantization for real-time deployment. Overall,
      anomalib is an extensive library for the design,
      implementation, and deployment of unsupervised
      anomaly detection models from data to the edge.
keywords:
  - Unsupervised Anomaly detection
  - Unsupervised Anomaly localization
license: Apache-2.0

GitHub Events

Total

- Create event: 12

- Commit comment event: 1

- Release event: 1

- Issues event: 186

- Watch event: 506

- Delete event: 6

- Issue comment event: 409

- Push event: 45

- Pull request event: 131

- Pull request review event: 163

- Pull request review comment event: 128

- Fork event: 72

Last Year

- Create event: 12

- Commit comment event: 1

- Release event: 1

- Issues event: 186

- Watch event: 506

- Delete event: 6

- Issue comment event: 409

- Push event: 45

- Pull request event: 131

- Pull request review event: 163

- Pull request review comment event: 128

- Fork event: 72

Committers

Last synced: about 1 year ago

Top Committers

| Name | Email | Commits |
|---|---|---|
| Samet Akcay | s****y@i****m | 250 |
| Ashwin Vaidya | a****a@i****m | 155 |
| Dick Ameln | d****n@i****m | 83 |
| Ashwin Vaidya | a****a@g****m | 28 |
| dependabot[bot] | 4****] | 24 |
| Alexander Riedel | 5****1 | 17 |
| Blaž Rolih | 6****r | 15 |
| Joao P C Bertoldo | 2****o | 13 |
| abc-125 | 6****5 | 10 |
| Adrian Boguszewski | a****i@i****m | 10 |
| ORippler | o****l@g****e | 8 |
| Yunchu Lee | y****e@i****m | 7 |
| Barath Lakshmanan | b****n@i****m | 6 |
| Harim Kang | h****g@i****m | 6 |
| Willy Fitra Hendria | w****a@g****m | 5 |
| Weilin Xu | w****u@i****m | 5 |
| Ashish | a****a@i****m | 4 |
| Wenjing Kang | w****g@i****m | 4 |
| Philippe Carvalho | 3****l | 4 |
| Alexander Barabanov | 9****v | 3 |
| Alexander Dokuchaev | a****v@i****m | 3 |
| David Muhr | d****n | 3 |
| Nilesh Ahuja | 9****l | 3 |
| Sid Mehta | s****0@g****m | 3 |
| TRAN Triet | 5****2 | 3 |
| Tom Gambone | s****x | 3 |
| Paula Ramos | p****s@i****m | 3 |
| Danylo Boiko | 5****o | 2 |
| Isaac Ng | 4****z | 2 |
| Leonid Beynenson | l****n@i****m | 2 |
| and 56 more... | | |

Issues and Pull Requests

Last synced: 9 months ago

All Time

- Total issues: 122

- Total pull requests: 158

- Average time to close issues: 5 months

- Average time to close pull requests: about 2 months

- Total issue authors: 88

- Total pull request authors: 26

- Average comments per issue: 1.43

- Average comments per pull request: 0.52

- Merged pull requests: 92

- Bot issues: 0

- Bot pull requests: 11

Past Year

- Issues: 105

- Pull requests: 133

- Average time to close issues: about 1 month

- Average time to close pull requests: 4 days

- Issue authors: 73

- Pull request authors: 22

- Average comments per issue: 0.92

- Average comments per pull request: 0.41

- Merged pull requests: 79

- Bot issues: 0

- Bot pull requests: 11

Top Authors

Issue Authors

- monkeycc (11)

- wenwu2021 (5)

- samet-akcay (4)

- FedericoDeBona (3)

- Cua1103 (3)

- abc-125 (3)

- Narc17 (2)

- C1uckcluck (2)

- 1713mz (2)

- MarToonLi (2)

- 840691168 (2)

- lucianchauvin (2)

- huanghaiqiang (2)

- blackdevil112 (2)

- nghiakthp2401 (2)

Pull Request Authors

- samet-akcay (70)

- abc-125 (10)

- AlexanderBarabanov (10)

- rajeshgangireddy (9)

- dependabot[bot] (9)

- ashwinvaidya17 (7)

- alfieroddan (5)

- blaz-r (4)

- grannycola (3)

- eugene123tw (3)

- davnn (3)

- WenjingKangIntel (3)

- manuelkonrad (2)

- IceboxDev (2)

- yujiepan-work (2)

Packages

- Total packages: 1

- Total downloads: 26,213 last month (pypi)

- Total docker downloads: 21

- Total dependent packages: 3

- Total dependent repositories: 3

- Total versions: 32

- Total maintainers: 3

pypi.org: anomalib

anomalib - Anomaly Detection Library

- Documentation: https://anomalib.readthedocs.io/

- License: Apache License, Version 2.0 (http://www.apache.org/licenses/LICENSE-2.0); Copyright (C) 2020-2021 Intel Corporation

- Latest release: 2.1.0 (published 10 months ago)

Dependencies

- actions/checkout v3 composite

- actions/setup-python v4 composite

- actions/upload-artifact v3 composite

- actions/checkout v3 composite

- actions/configure-pages v2 composite

- actions/deploy-pages v1 composite

- actions/setup-python v4 composite

- actions/upload-pages-artifact v1 composite

- actions/labeler v4 composite

- actions/checkout v2 composite

- actions/upload-artifact v2 composite

- actions/checkout v2 composite

- actions/checkout v3 composite

- actions/setup-python v4 composite

- actions/checkout v2 composite

- actions/setup-python v2 composite

- actions/download-artifact v3 composite

- nvidia/cuda 11.4.3-devel-ubuntu20.04 build

- python_base_cuda11.4 latest build

- albumentations >=1.1.0

- av >=10.0.0

- einops >=0.3.2

- freia >=0.2

- imgaug ==0.4.0

- jsonargparse >=4.3

- kornia >=0.6.6,<0.6.10

- matplotlib >=3.4.3

- omegaconf >=2.1.1

- opencv-python >=4.5.3.56

- pandas >=1.1.0

- pytorch-lightning >=1.7.0,<1.10.0

- setuptools >=41.0.0

- timm >=0.5.4,<=0.6.12

- torchmetrics ==0.10.3

- coverage * development

- pre-commit * development

- pytest * development

- pytest-cov * development

- pytest-order * development

- pytest-sugar * development

- pytest-xdist * development

- tox * development

- furo ==2022.9.29

- ipykernel *

- myst-parser *

- nbsphinx >=0.8.9

- pandoc *

- sphinx >=4.1.2

- sphinx-autoapi *

- sphinxemoji ==0.1.8

- GitPython *

- comet-ml >=3.31.7

- gradio >=2.9.4

- ipykernel *

- tensorboard *

- wandb ==0.12.17

- gitpython *

- ipykernel *

- ipywidgets *

- notebook *

- defusedxml ==0.7.1

- networkx *

- nncf >=2.1.0

- onnx >=1.10.1

- openvino-dev >=2022.3.0

- requests >=2.26.0