cluster-prompt

Repository

Basic Info

- Host: GitHub

- Owner: Etrama

- License: apache-2.0

- Language: Jupyter Notebook

- Default Branch: main

- Size: 10.4 MB

Statistics

- Stars: 0

- Watchers: 1

- Forks: 0

- Open Issues: 0

- Releases: 0

Metadata Files

README.md

Introduction

In this repo, we (Kaushik, Pulkit and Vitaly) attempt to develop a new prompting approach called cluster prompt. This is part of our coursework for the IFT 6165 class at MILA, taught by Dr. Irina Rish. A report detailing our findings is available at this link.

The rest of this README.md is forked from PAL by Gao et al. We build on top of their work, standing on the shoulders of giants.

Set up your OpenAI key as an environment variable named OPENAI_API_KEY; the variable's value is your API key.

Installation

Clone this repo and install with pip.

git clone https://github.com/Etrama/PAL_v2.git

pip install -e ./pal

Before running the scripts, set the OpenAI key:

export OPENAI_API_KEY='sk-...'
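A missing key tends to surface only once a script makes its first API request, so an early check can save a confusing failure. The following is a minimal sketch, assuming only that the key is read from the OPENAI_API_KEY environment variable as described above. The helper name `require_openai_key` is hypothetical and is not part of the pal package.

```python
import os


def require_openai_key(env=None):
    """Return the OpenAI key from the environment, or raise a clear error.

    `env` defaults to os.environ; passing a plain dict makes the check easy to test.
    This helper is illustrative only and is not part of the pal package.
    """
    env = os.environ if env is None else env
    key = env.get("OPENAI_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "OPENAI_API_KEY is not set; export it before running the scripts."
        )
    return key
```

Calling `require_openai_key()` at the top of a script would stop it immediately with a readable message instead of failing partway through a run.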

Interactive Usage

The core components of the pal package are the Interface classes. Specifically, ProgramInterface connects the LLM backend, a Python backend and user prompts.

```
import pal
from pal.prompt import math_prompts

interface = pal.interface.ProgramInterface(
    model='code-davinci-002',
    stop='\n\n\n',  # stop generation str for Codex API
    get_answer_expr='solution()'  # python expression evaluated after generated code to obtain answer
)

question = 'xxxxx'
prompt = math_prompts.MATH_PROMPT.format(question=question)
answer = interface.run(prompt)
```

Here, the `interface`'s `run` method will run generation with the OpenAI API, run the generated snippet and then evaluate `get_answer_expr` (here `solution()`) to obtain the final answer.

Users should set get_answer_expr based on the prompt.
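To make the role of get_answer_expr concrete, here is a rough sketch of the "run the generated snippet, then evaluate an expression" idea using only Python built-ins. It is an illustration under stated assumptions, not the pal package's actual implementation: the function `run_generated_program` and the sample generated code are hypothetical, and no API call is made.

```python
def run_generated_program(code, answer_expr="solution()"):
    """Execute generated code, then evaluate `answer_expr` in the same namespace.

    Illustrative only: real model output should be sandboxed before being executed.
    """
    namespace = {}
    exec(code, namespace)  # defines whatever the generated code declares
    return eval(answer_expr, namespace)  # e.g. calls solution()


# Stand-in for model output; a prompt that asks for a `solution()` function
# pairs with answer_expr="solution()".
generated = '''
def solution():
    apples_start = 23
    apples_used = 20
    apples_bought = 6
    return apples_start - apples_used + apples_bought
'''

answer = run_generated_program(generated)
print(answer)  # 9
```

If a prompt instead asks the model to store its result in a variable, say `answer`, the matching expression would be `'answer'` rather than `'solution()'`. This is why the expression has to follow the prompt.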

Inference Loop

We provide simple inference loops in the scripts/ folder.

mkdir eval_results

python scripts/{colored_objects|gsm|date_understanding|penguin}_eval.py

We have a beta release of a ChatGPT-dedicated script for math reasoning.

python scripts/gsm_chatgpt.py

For running bulk inference, we used the generic prompting library prompt-lib and recommend it for running CoT inference on all tasks used in our work.
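The scripts themselves are not reproduced here. As a rough sketch of what an inference loop of this kind typically does, the example below runs a solver over a list of examples and reports accuracy. Everything in it is an assumption made for illustration: the `evaluate` function, the `input` and `target` field names, and the stub solver that stands in for a call such as `interface.run(prompt)`.

```python
def evaluate(examples, solve, tol=1e-3):
    """Return the fraction of examples whose numeric prediction matches the target.

    `solve` maps a question string to an answer; any exception it raises
    (for instance from failed generation or execution) counts as a wrong answer.
    """
    correct = 0
    total = 0
    for example in examples:
        total += 1
        try:
            prediction = float(solve(example["input"]))
        except Exception:
            continue  # failed runs are scored as incorrect
        if abs(prediction - float(example["target"])) < tol:
            correct += 1
    return correct / total if total else 0.0


# Stub solver standing in for the LLM-backed interface (hypothetical data).
known_answers = {"2 + 3": 5, "10 / 4": 2.5}
examples = [
    {"input": "2 + 3", "target": 5},
    {"input": "10 / 4", "target": 2.5},
    {"input": "unanswerable", "target": 1},  # the stub raises KeyError here
]

accuracy = evaluate(examples, lambda question: known_answers[question])
print(f"accuracy: {accuracy:.3f}")
```

Scoring exceptions as wrong answers keeps one bad generation from aborting a long run, at the cost of hiding the error. Logging the exception would be a reasonable addition.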

Results

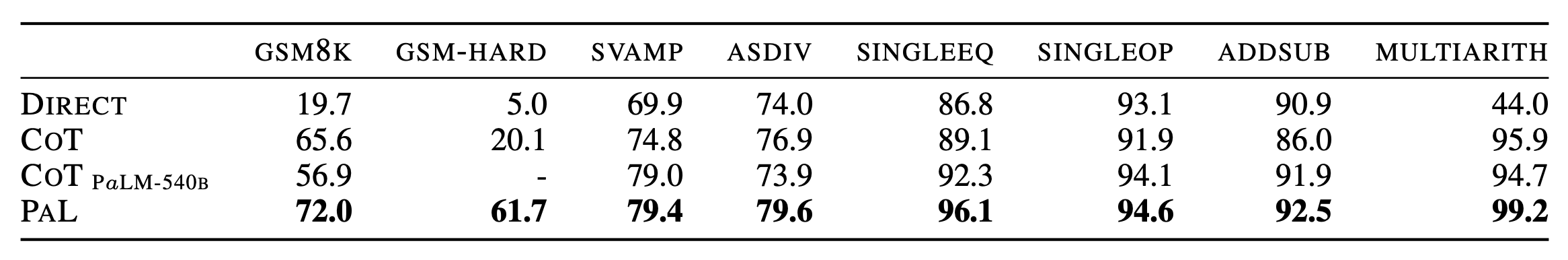

For the complete details of the results, see the paper.

Citation

@article{gao2022pal,

title={PAL: Program-aided Language Models},

author={Gao, Luyu and Madaan, Aman and Zhou, Shuyan and Alon, Uri and Liu, Pengfei and Yang, Yiming and Callan, Jamie and Neubig, Graham},

journal={arXiv preprint arXiv:2211.10435},

year={2022}

}

PAL_v2

Owner

- Name: Etrama

- Login: Etrama

- Kind: user

- Website: etrama.github.io

- Repositories: 2

- Profile: https://github.com/Etrama

Banana_Leopard
