https://github.com/hpcaitech/colossalai

Making large AI models cheaper, faster and more accessible

Science Score: 59.0%

This score indicates how likely this project is to be science-related based on various indicators:

- ○ CITATION.cff file

- ✓ codemeta.json file: Found codemeta.json file

- ✓ .zenodo.json file: Found .zenodo.json file

- ✓ DOI references: Found 4 DOI reference(s) in README

- ✓ Academic publication links: Links to arxiv.org

- ✓ Committers with academic emails: 9 of 196 committers (4.6%) from academic institutions

- ○ Institutional organization owner

- ○ JOSS paper metadata

- ○ Scientific vocabulary similarity: Low similarity (12.2%) to scientific vocabulary


Repository

Making large AI models cheaper, faster and more accessible

Basic Info

- Host: GitHub

- Owner: hpcaitech

- License: apache-2.0

- Language: Python

- Default Branch: main

- Homepage: https://www.colossalai.org

- Size: 63.3 MB

Statistics

- Stars: 41,118

- Watchers: 390

- Forks: 4,525

- Open Issues: 475

- Releases: 50


Metadata Files

README.md

Colossal-AI

Paper | Documentation | Examples | Forum | GPU Cloud Playground | Blog

Get Started with Colossal-AI Without Setup

Access high-end, on-demand compute for your research instantly—no setup needed.

Sign up now and get $10 in credits!

Limited Academic Bonuses:

- Top up $1,000 and receive 300 credits

- Top up $500 and receive 100 credits

Latest News

- [2025/02] DeepSeek 671B Fine-Tuning Guide Revealed—Unlock the Upgraded DeepSeek Suite with One Click, AI Players Ecstatic!

- [2024/12] The development cost of video generation models has been cut by 50%! Open-source solutions are now available with H200 GPU vouchers [code] [vouchers]

- [2024/10] How to build a low-cost Sora-like app? Solutions for you

- [2024/09] Singapore Startup HPC-AI Tech Secures 50 Million USD in Series A Funding to Build the Video Generation AI Model and GPU Platform

- [2024/09] Reducing AI Large Model Training Costs by 30% Requires Just a Single Line of Code From FP8 Mixed Precision Training Upgrades

- [2024/06] Open-Sora Continues Open Source: Generate Any 16-Second 720p HD Video with One Click, Model Weights Ready to Use

- [2024/05] Large AI Models Inference Speed Doubled, Colossal-Inference Open Source Release

- [2024/04] Open-Sora Unveils Major Upgrade: Embracing Open Source with Single-Shot 16-Second Video Generation and 720p Resolution

- [2024/04] Most cost-effective solutions for inference, fine-tuning and pretraining, tailored to LLaMA3 series

Table of Contents

- Why Colossal-AI

- Features

- Colossal-AI for Real World Applications

  - Open-Sora: Revealing Complete Model Parameters, Training Details, and Everything for Sora-like Video Generation Models

  - Colossal-LLaMA-2: One Half-Day of Training Using a Few Hundred Dollars Yields Similar Results to Mainstream Large Models, Open-Source and Commercial-Free Domain-Specific LLM Solution

  - ColossalChat: An Open-Source Solution for Cloning ChatGPT With a Complete RLHF Pipeline

  - AIGC: Acceleration of Stable Diffusion

  - Biomedicine: Acceleration of AlphaFold Protein Structure

- Parallel Training Demo

- Single GPU Training Demo

- Inference

- Installation

- Use Docker

- Community

- Contributing

- Cite Us

Why Colossal-AI

Prof. James Demmel (UC Berkeley): Colossal-AI makes training AI models efficient, easy, and scalable.


Features

Colossal-AI provides a collection of parallel components. We aim to let you write distributed deep learning models just as you would write a model on your laptop, and we provide user-friendly tools to kickstart distributed training and inference in a few lines of code.

Parallelism strategies

- Data Parallelism

- Pipeline Parallelism

- 1D, 2D, 2.5D, 3D Tensor Parallelism

- Sequence Parallelism

- Zero Redundancy Optimizer (ZeRO)

- Auto-Parallelism
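As a rough illustration of why the Zero Redundancy Optimizer (ZeRO) saves memory, the sketch below applies the per-parameter accounting from the original ZeRO paper (mixed-precision Adam: 2 bytes of fp16 weights, 2 bytes of fp16 gradients and 12 bytes of fp32 optimizer states per parameter) to estimate model-state memory per GPU at each ZeRO stage. It ignores activations, buffers and fragmentation, it is not a description of Colossal-AI's own implementation, and the function name `zero_model_state_bytes` is hypothetical.

```python
# Hypothetical helper, not part of Colossal-AI. Per-parameter byte costs for
# mixed-precision Adam as accounted in the ZeRO paper: fp16 parameters (2 B),
# fp16 gradients (2 B), fp32 master weights + momentum + variance (12 B).
PARAM_BYTES = 2
GRAD_BYTES = 2
OPTIM_BYTES = 12


def zero_model_state_bytes(num_params: float, num_gpus: int, stage: int) -> float:
    """Estimate model-state memory per GPU (bytes) for ZeRO stages 0-3.

    Stage 0 replicates everything (plain data parallelism); stage 1 partitions
    optimizer states, stage 2 also partitions gradients, and stage 3 also
    partitions the parameters themselves across `num_gpus` ranks.
    """
    if stage not in (0, 1, 2, 3):
        raise ValueError("stage must be 0, 1, 2 or 3")
    params = PARAM_BYTES * num_params
    grads = GRAD_BYTES * num_params
    optim = OPTIM_BYTES * num_params
    if stage >= 1:
        optim /= num_gpus
    if stage >= 2:
        grads /= num_gpus
    if stage >= 3:
        params /= num_gpus
    return params + grads + optim


if __name__ == "__main__":
    # A 7.5B-parameter model on 64 GPUs (the example size used in the ZeRO paper).
    for s in range(4):
        gb = zero_model_state_bytes(7.5e9, 64, s) / 1e9
        print(f"ZeRO stage {s}: {gb:.2f} GB per GPU")
```

Each stage trades extra communication for a smaller per-GPU footprint; stage 3 divides all model states by the number of GPUs.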

Heterogeneous Memory Management

Friendly Usage

- Parallelism based on the configuration file
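Configuration-driven parallelism usually reduces to a few integers. The sketch below assumes a hypothetical config with `tensor_parallel_size` and `pipeline_parallel_size` keys (not Colossal-AI's actual config schema) and derives the data-parallel size from the world size, following the common hybrid-parallelism convention that world size = data × pipeline × tensor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParallelLayout:
    """Resolved process-group sizes for hybrid (3D) parallelism."""
    data: int
    pipeline: int
    tensor: int


def resolve_layout(config: dict, world_size: int) -> ParallelLayout:
    """Derive the data-parallel size from a hypothetical parallelism config.

    GPUs not consumed by tensor and pipeline parallelism are used for data
    parallelism, so world_size must be divisible by tp * pp.
    """
    tp = int(config.get("tensor_parallel_size", 1))     # hypothetical key
    pp = int(config.get("pipeline_parallel_size", 1))   # hypothetical key
    if tp < 1 or pp < 1:
        raise ValueError("parallel sizes must be positive")
    if world_size % (tp * pp) != 0:
        raise ValueError(f"world_size={world_size} is not divisible by tp*pp={tp * pp}")
    return ParallelLayout(data=world_size // (tp * pp), pipeline=pp, tensor=tp)


if __name__ == "__main__":
    # 32 GPUs with 4-way tensor and 2-way pipeline parallelism.
    cfg = {"tensor_parallel_size": 4, "pipeline_parallel_size": 2}
    print(resolve_layout(cfg, world_size=32))
```

Keeping the layout in one small config means the same training script can be rescaled by editing numbers rather than code.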

Colossal-AI in the Real World

Open-Sora

Open-Sora: Revealing Complete Model Parameters, Training Details, and Everything for Sora-like Video Generation Models [code] [blog] [Model weights] [Demo] [GPU Cloud Playground] [OpenSora Image]

Colossal-LLaMA-2

[GPU Cloud Playground] [LLaMA3 Image]

7B: One half-day of training using a few hundred dollars yields similar results to mainstream large models, open-source and commercial-free domain-specific LLM solution. [code] [blog] [HuggingFace model weights] [Modelscope model weights]

13B: Construct a refined 13B private model with just 5,000 USD. [code] [blog] [HuggingFace model weights] [Modelscope model weights]

| Model | Backbone | Tokens Consumed | MMLU (5-shot) | CMMLU (5-shot) | AGIEval (5-shot) | GAOKAO (0-shot) | CEval (5-shot) |
| :-----------------------------: | :--------: | :-------------: | :------------------: | :-----------: | :--------------: | :-------------: | :-------------: |
| Baichuan-7B | - | 1.2T | 42.32 (42.30) | 44.53 (44.02) | 38.72 | 36.74 | 42.80 |
| Baichuan-13B-Base | - | 1.4T | 50.51 (51.60) | 55.73 (55.30) | 47.20 | 51.41 | 53.60 |
| Baichuan2-7B-Base | - | 2.6T | 46.97 (54.16) | 57.67 (57.07) | 45.76 | 52.60 | 54.00 |
| Baichuan2-13B-Base | - | 2.6T | 54.84 (59.17) | 62.62 (61.97) | 52.08 | 58.25 | 58.10 |
| ChatGLM-6B | - | 1.0T | 39.67 (40.63) | 41.17 (-) | 40.10 | 36.53 | 38.90 |
| ChatGLM2-6B | - | 1.4T | 44.74 (45.46) | 49.40 (-) | 46.36 | 45.49 | 51.70 |
| InternLM-7B | - | 1.6T | 46.70 (51.00) | 52.00 (-) | 44.77 | 61.64 | 52.80 |
| Qwen-7B | - | 2.2T | 54.29 (56.70) | 56.03 (58.80) | 52.47 | 56.42 | 59.60 |
| Llama-2-7B | - | 2.0T | 44.47 (45.30) | 32.97 (-) | 32.60 | 25.46 | - |
| Linly-AI/Chinese-LLaMA-2-7B-hf | Llama-2-7B | 1.0T | 37.43 | 29.92 | 32.00 | 27.57 | - |
| wenge-research/yayi-7b-llama2 | Llama-2-7B | - | 38.56 | 31.52 | 30.99 | 25.95 | - |
| ziqingyang/chinese-llama-2-7b | Llama-2-7B | - | 33.86 | 34.69 | 34.52 | 25.18 | 34.2 |
| TigerResearch/tigerbot-7b-base | Llama-2-7B | 0.3T | 43.73 | 42.04 | 37.64 | 30.61 | - |
| LinkSoul/Chinese-Llama-2-7b | Llama-2-7B | - | 48.41 | 38.31 | 38.45 | 27.72 | - |
| FlagAlpha/Atom-7B | Llama-2-7B | 0.1T | 49.96 | 41.10 | 39.83 | 33.00 | - |
| IDEA-CCNL/Ziya-LLaMA-13B-v1.1 | Llama-13B | 0.11T | 50.25 | 40.99 | 40.04 | 30.54 | - |
| Colossal-LLaMA-2-7b-base | Llama-2-7B | 0.0085T | 53.06 | 49.89 | 51.48 | 58.82 | 50.2 |
| Colossal-LLaMA-2-13b-base | Llama-2-13B | 0.025T | 56.42 | 61.80 | 54.69 | 69.53 | 60.3 |

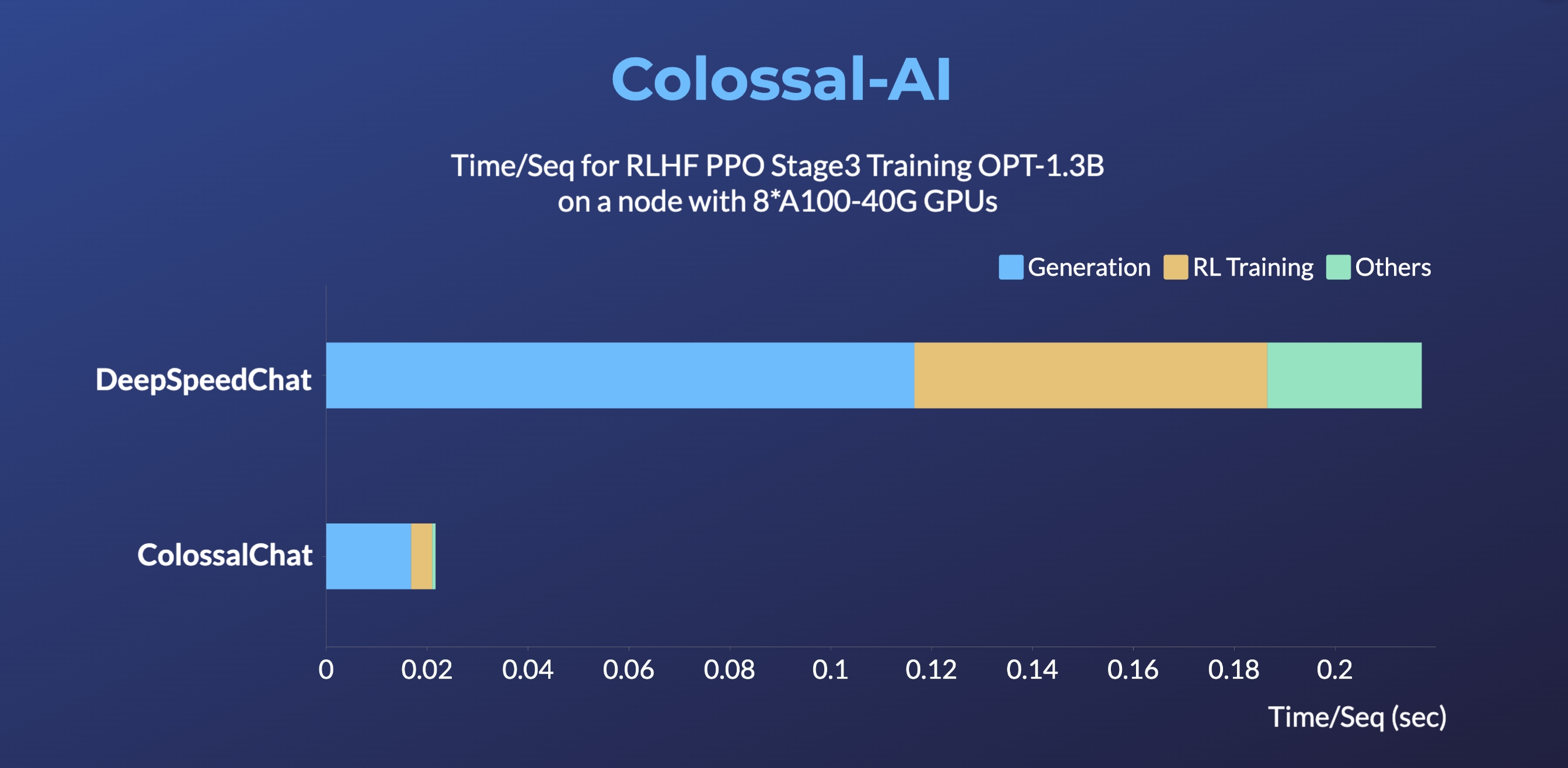

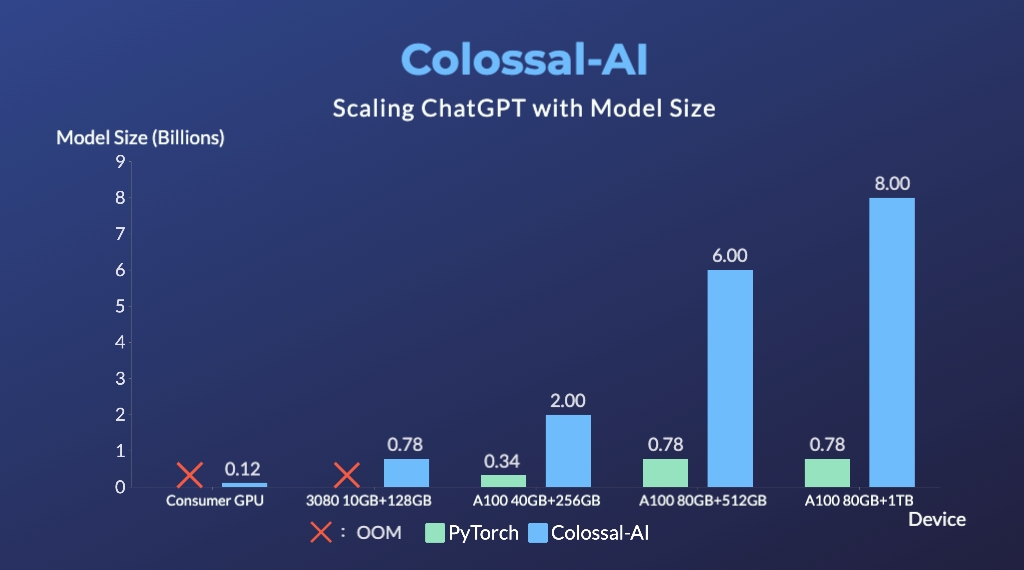

ColossalChat

ColossalChat: An open-source solution for cloning ChatGPT with a complete RLHF pipeline. [code] [blog] [demo] [tutorial]

- Up to 10 times faster for RLHF PPO Stage3 Training

- Up to 7.73 times faster for single server training and 1.42 times faster for single-GPU inference

- Up to 10.3x growth in model capacity on one GPU

- A mini demo training process requires only 1.62GB of GPU memory (any consumer-grade GPU)

- Increase the capacity of the fine-tuning model by up to 3.7 times on a single GPU

- Maintain a sufficiently high running speed

AIGC

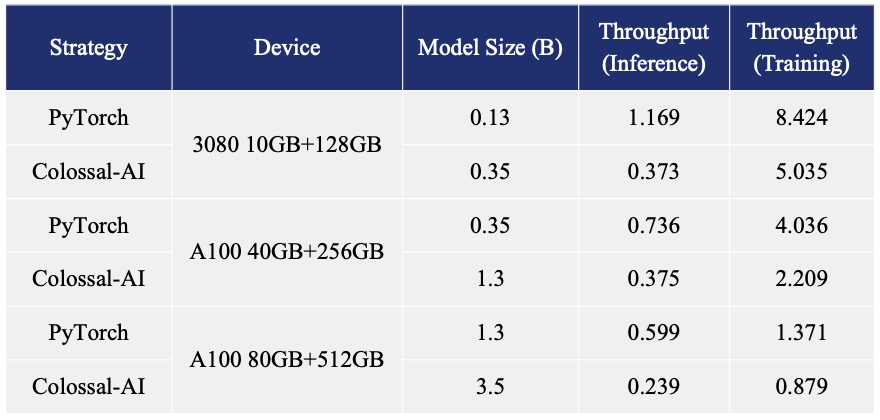

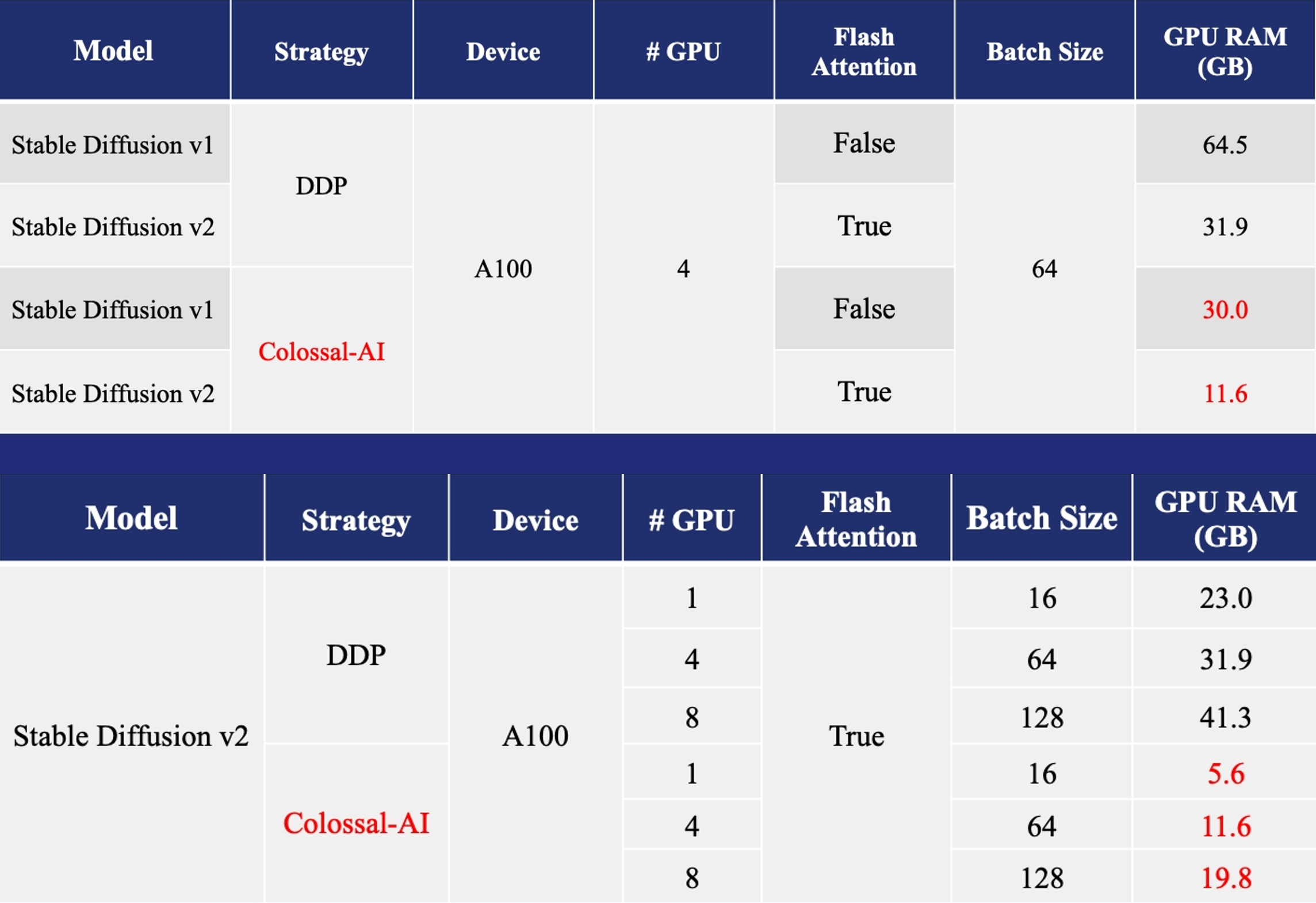

Acceleration of AIGC (AI-Generated Content) models such as Stable Diffusion v1 and Stable Diffusion v2.

- Training: Reduce Stable Diffusion memory consumption by up to 5.6x and hardware cost by up to 46x (from A100 to RTX3060).

- DreamBooth Fine-tuning: Personalize your model using just 3-5 images of the desired subject.

- Inference: Reduce inference GPU memory consumption by 2.5x.

Biomedicine

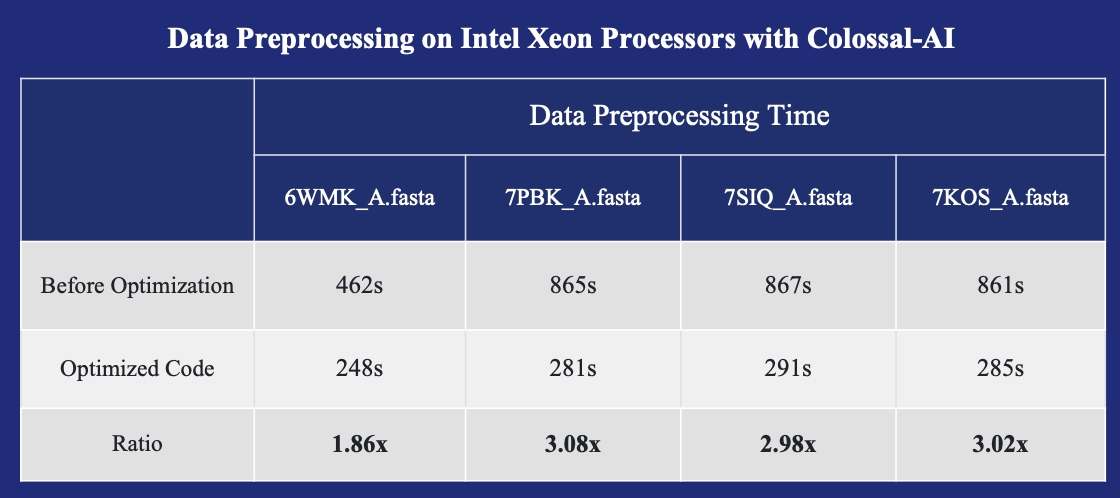

Acceleration of AlphaFold Protein Structure

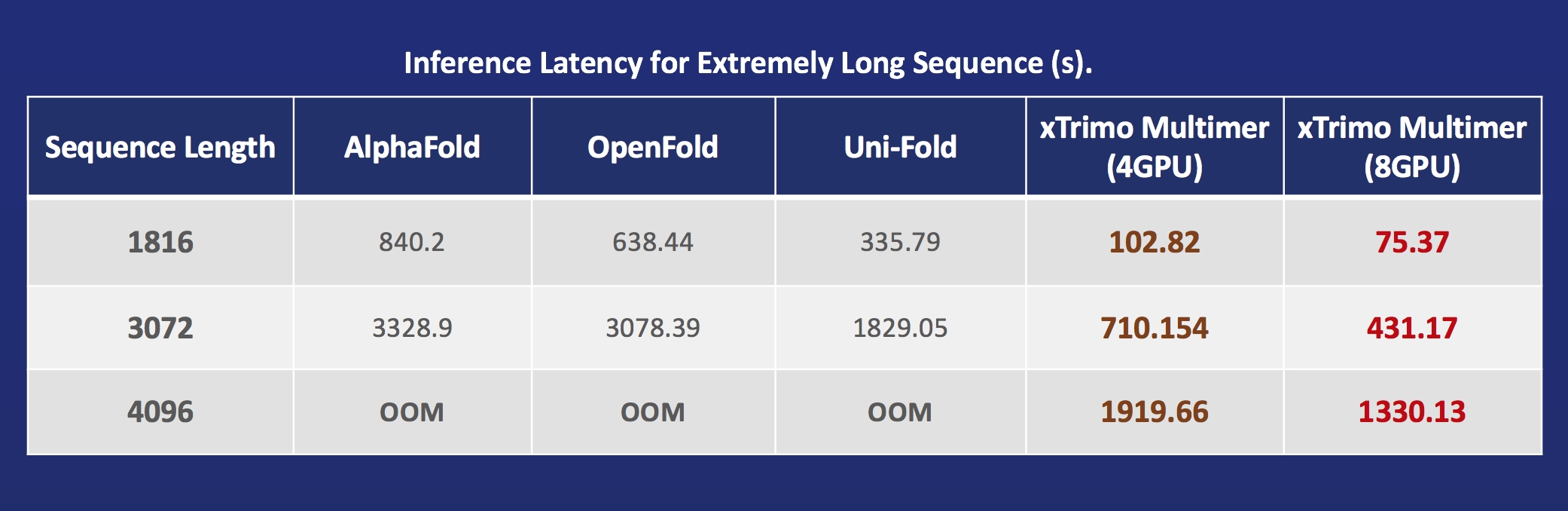

- FastFold: Accelerates training and inference on GPU clusters, speeds up data processing, and supports inference on sequences containing more than 10,000 residues.

- FastFold with Intel: 3x inference acceleration and 39% cost reduction.

- xTrimoMultimer: Accelerates structure prediction of protein monomers and multimers by 11x.

Parallel Training Demo

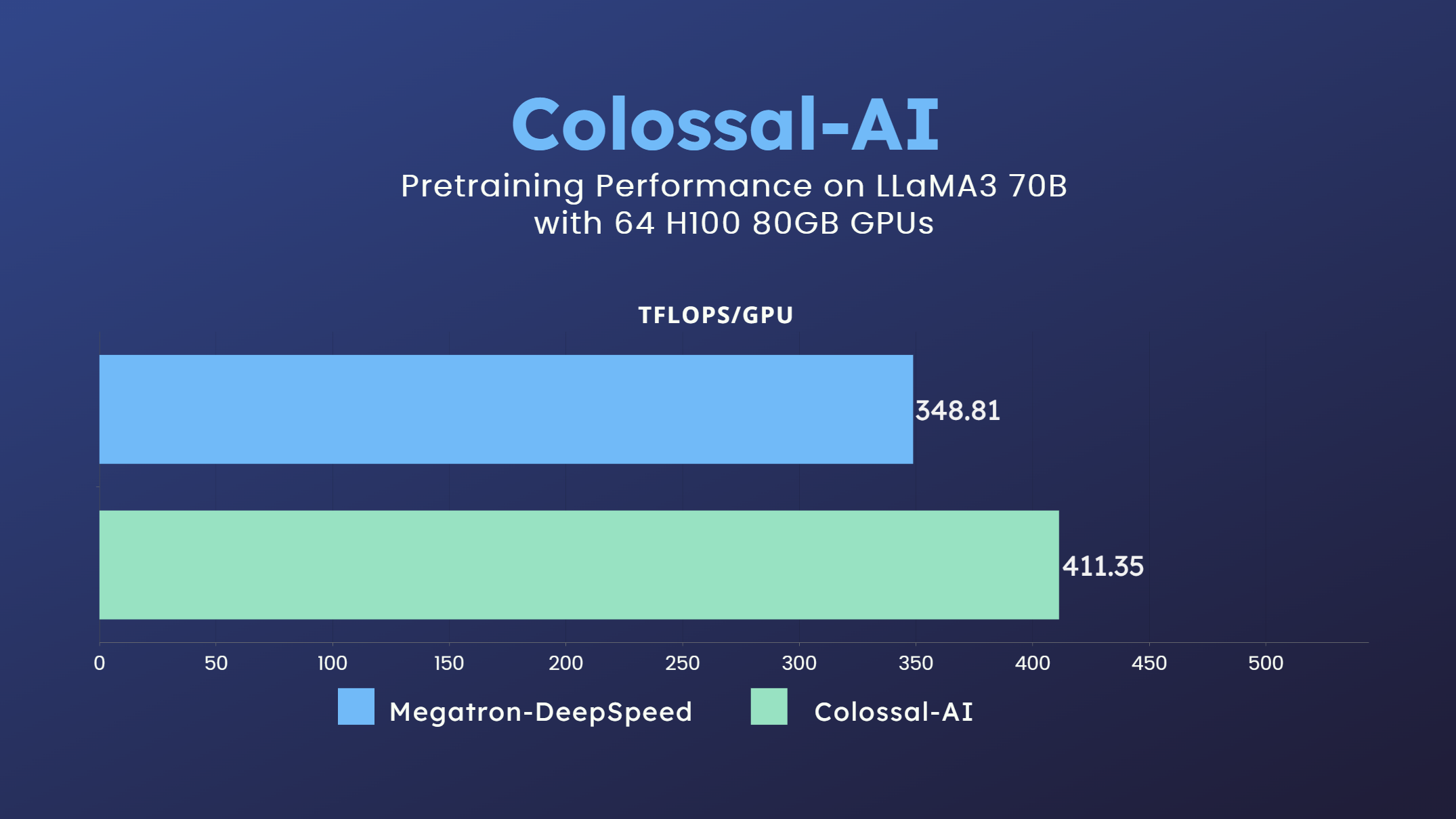

LLaMA3

- 70 billion parameter LLaMA3 model training accelerated by 18% [code] [GPU Cloud Playground] [LLaMA3 Image]

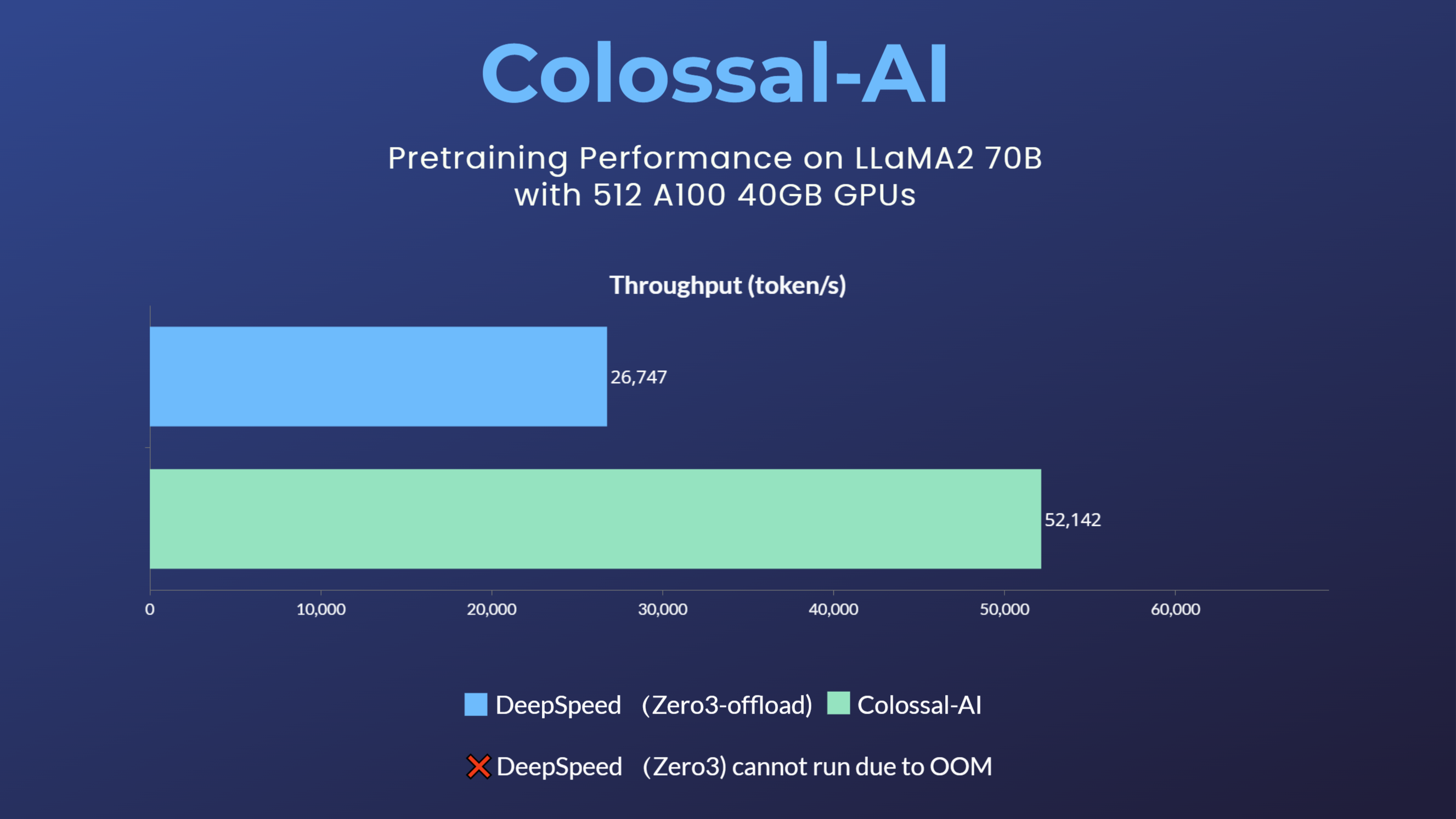

LLaMA2

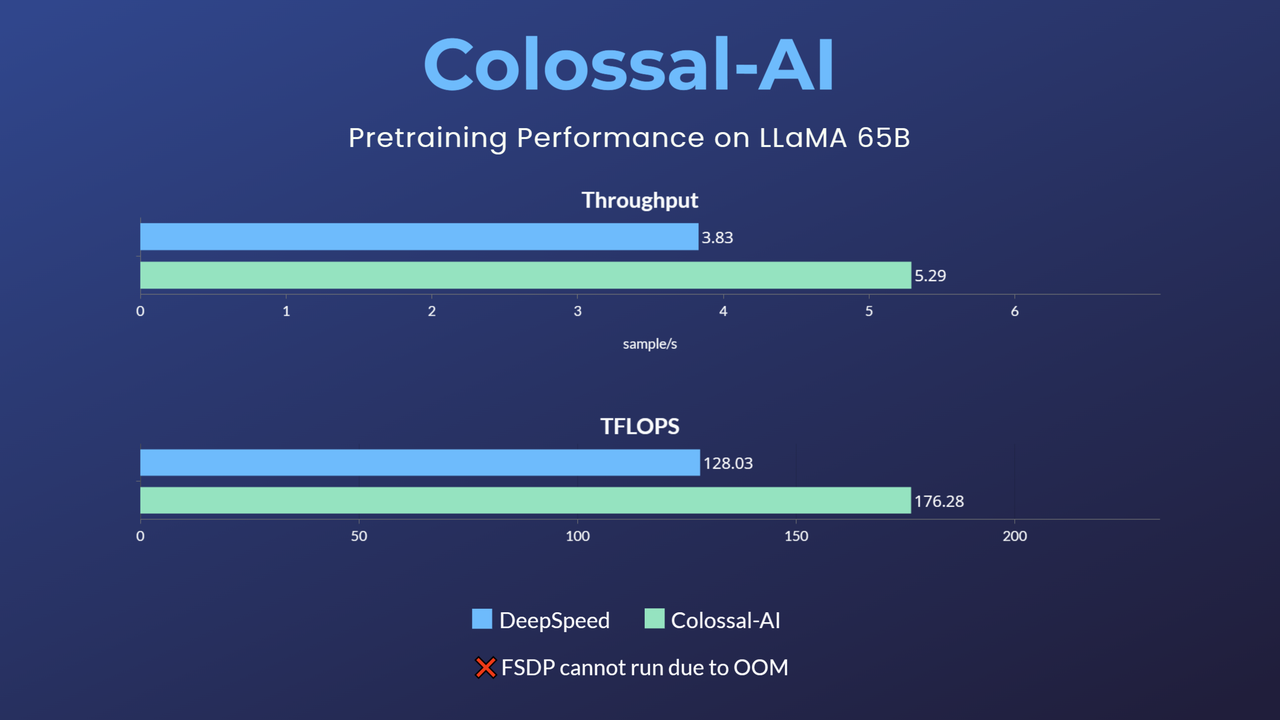

LLaMA1

MoE

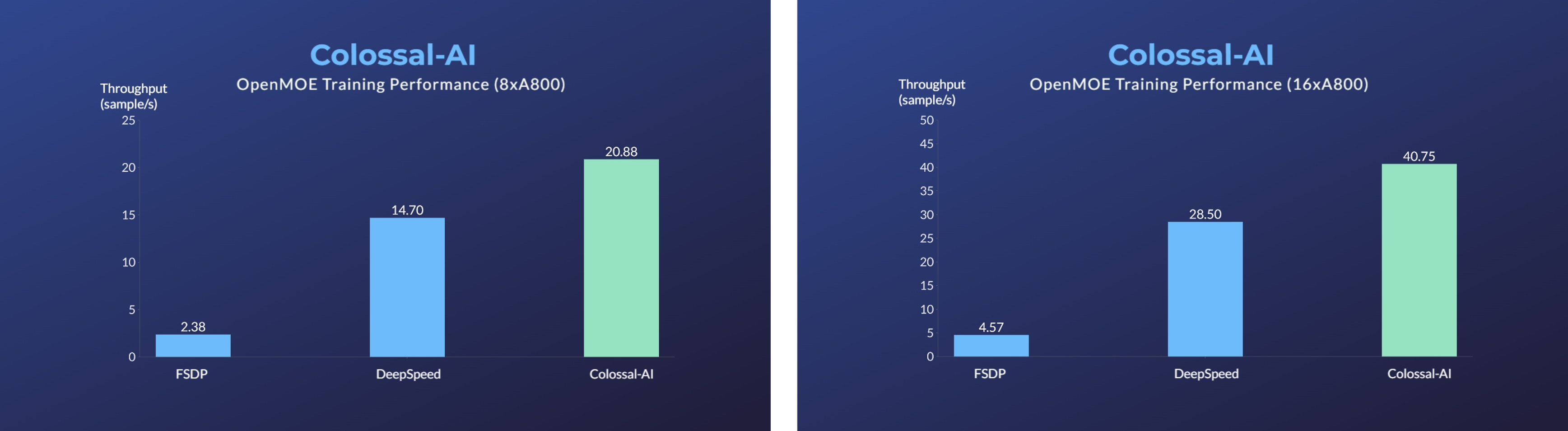

- Enhanced MoE parallelism: open-source MoE model training can be 9 times more efficient [code] [blog]

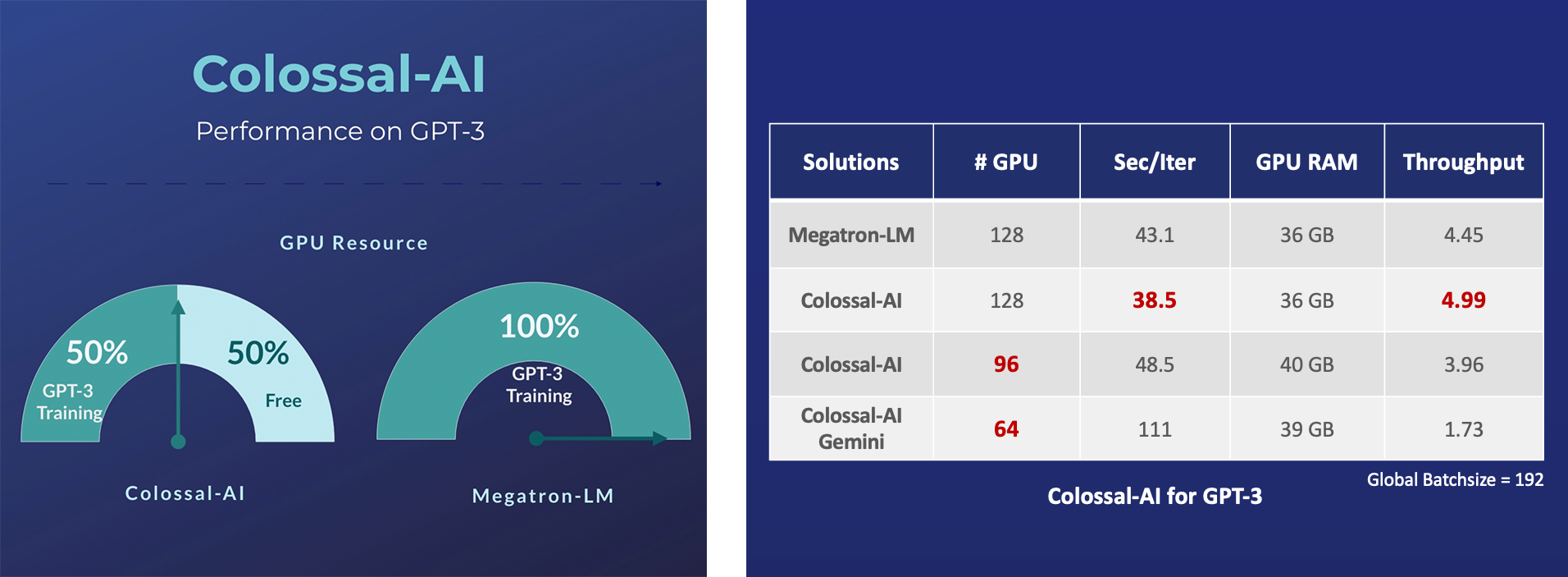

GPT-3

- Saves 50% of GPU resources with 10.7% acceleration

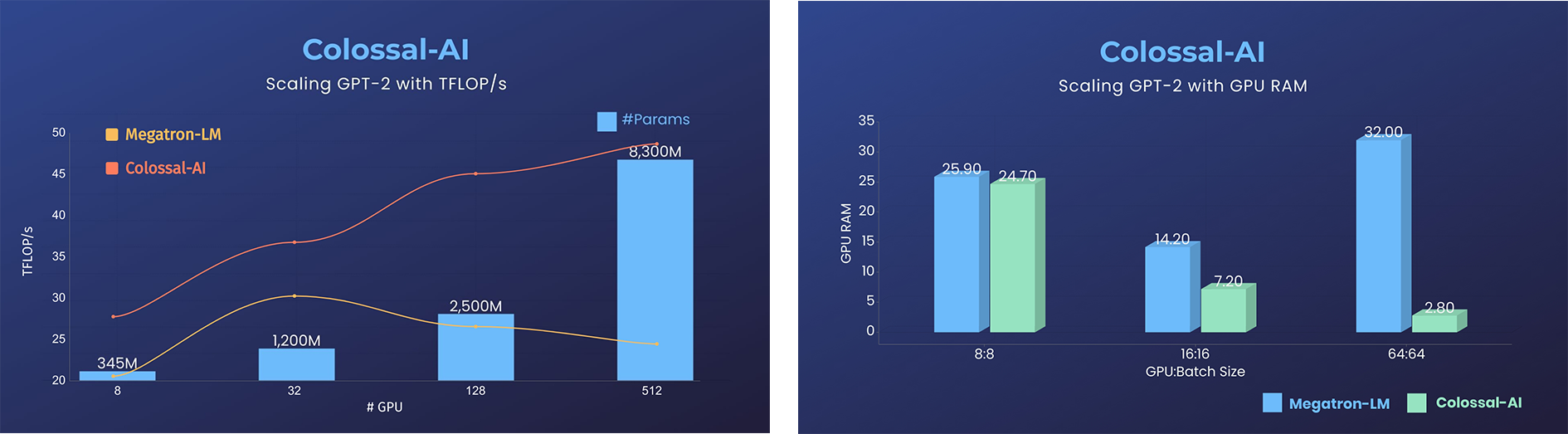

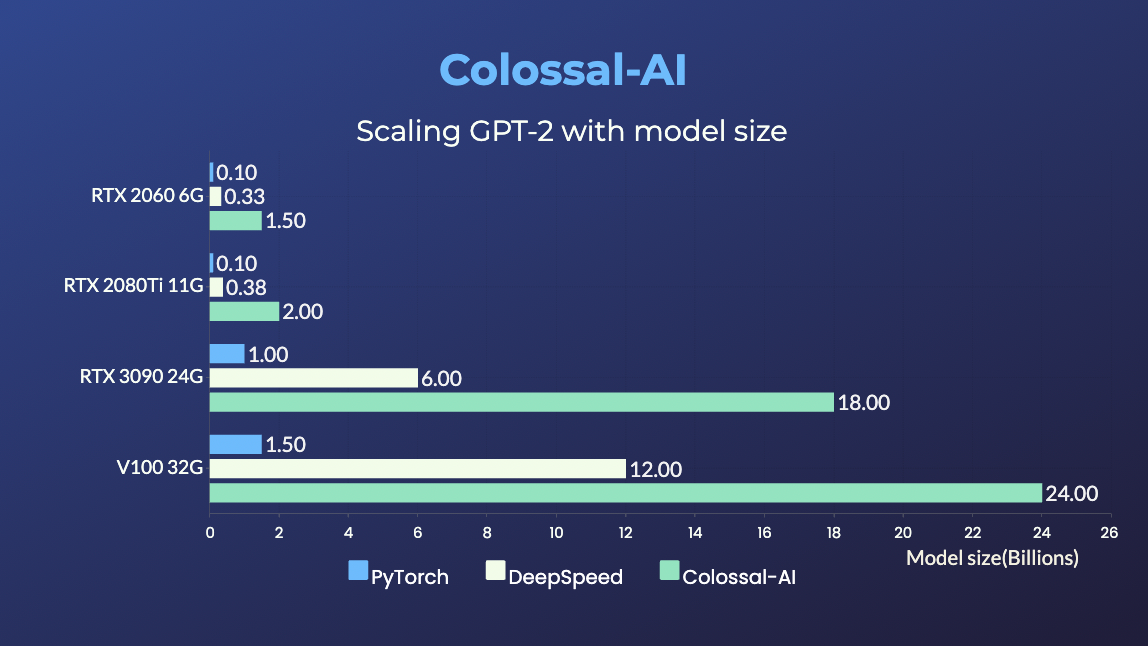

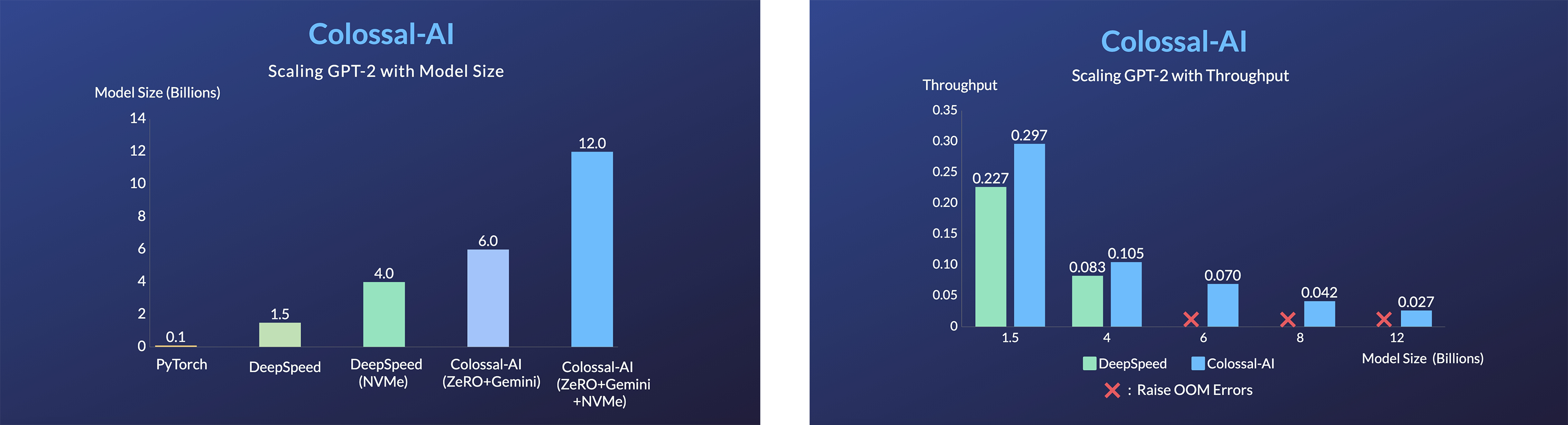

GPT-2

- 11x lower GPU memory consumption, and superlinear scaling efficiency with Tensor Parallelism

- 24x larger model size on the same hardware

- Over 3x acceleration
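The GPT-2 results cite Tensor Parallelism. To show the basic idea behind 1D (column-wise) tensor parallelism, the pure-Python sketch below splits a linear layer's weight matrix by columns across simulated devices, lets each shard compute its partial output independently, and concatenates the results, which match the unsplit matrix product. Real implementations shard tensors across GPUs and use collective communication; the helper names here are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product a @ b for lists of rows."""
    inner = len(b)
    assert all(len(row) == inner for row in a), "shape mismatch"
    cols = len(b[0])
    return [[sum(row[k] * b[k][j] for k in range(inner)) for j in range(cols)] for row in a]


def split_columns(w: Matrix, parts: int) -> List[Matrix]:
    """Split weight matrix w column-wise into `parts` equal shards (one per device)."""
    cols = len(w[0])
    if cols % parts != 0:
        raise ValueError("number of columns must be divisible by parts")
    step = cols // parts
    return [[row[i * step:(i + 1) * step] for row in w] for i in range(parts)]


def column_parallel_linear(x: Matrix, w: Matrix, parts: int) -> Matrix:
    """Simulate a column-parallel linear layer (hypothetical helper).

    Each 'device' holds one shard W_i and computes X @ W_i locally; the
    partial outputs are then concatenated along columns, which is the job an
    all-gather would do in a real multi-GPU setting.
    """
    partial_outputs = [matmul(x, shard) for shard in split_columns(w, parts)]
    # Concatenate each row's partial outputs in device order.
    return [sum((out[r] for out in partial_outputs), []) for r in range(len(x))]


if __name__ == "__main__":
    x = [[1.0, 2.0], [3.0, 4.0]]        # batch of 2, hidden size 2
    w = [[1.0, 0.0, 2.0, -1.0],         # 2 x 4 weight matrix
         [0.5, 1.0, 0.0, 3.0]]
    assert column_parallel_linear(x, w, parts=2) == matmul(x, w)
    print(column_parallel_linear(x, w, parts=2))
```

Because no device ever stores the full weight matrix, per-GPU parameter memory shrinks roughly in proportion to the number of shards.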

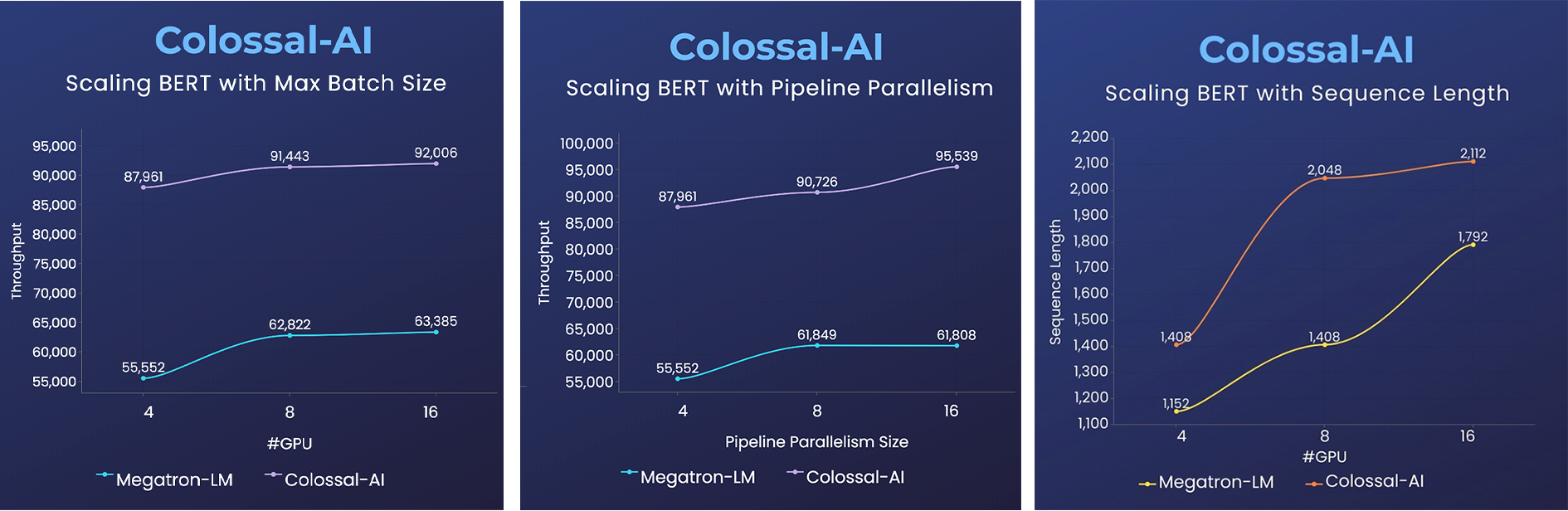

BERT

- 2x faster training, or 50% longer sequence length

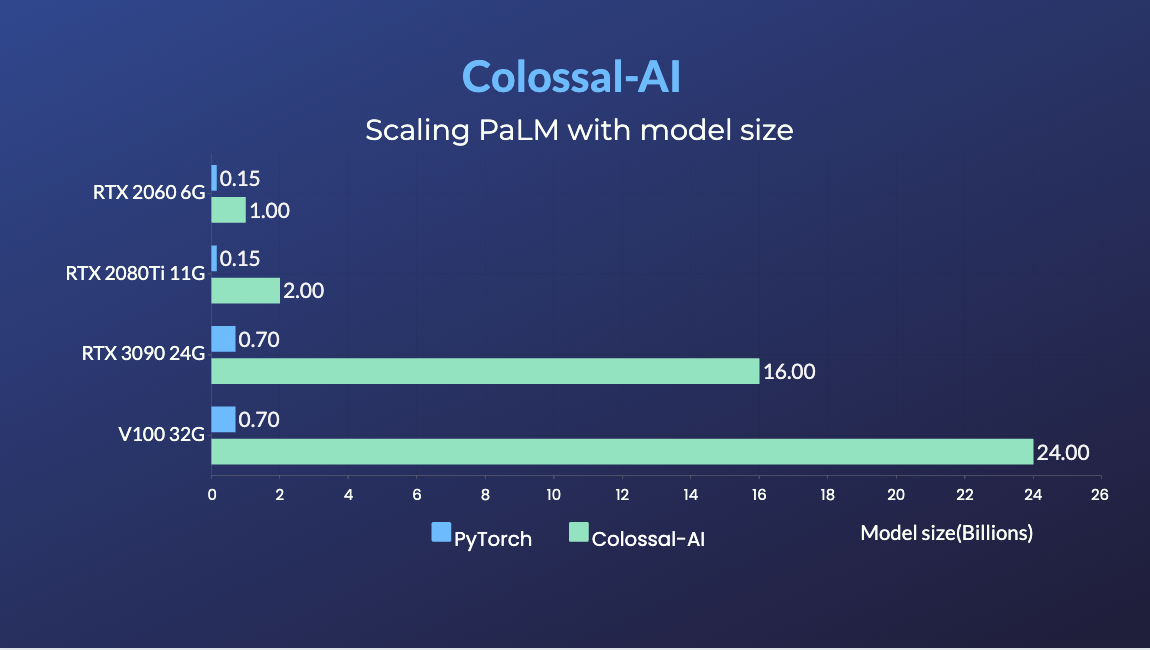

PaLM

- PaLM-colossalai: Scalable implementation of Google's Pathways Language Model (PaLM).

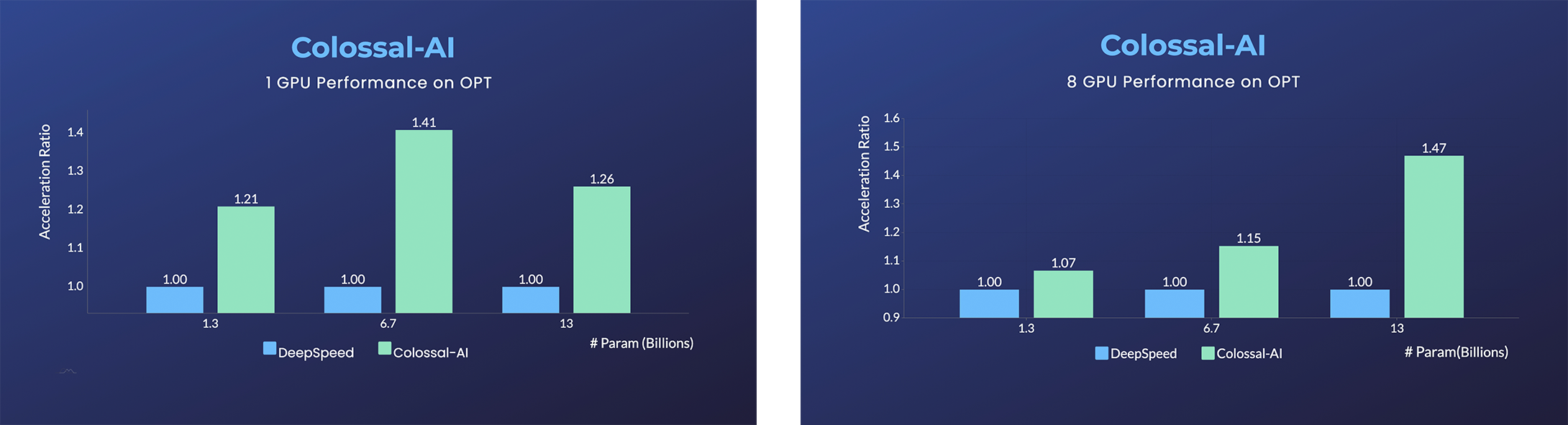

OPT

- Open Pretrained Transformer (OPT) is a 175-billion-parameter AI language model released by Meta. Because its pre-trained weights are public, it encourages AI programmers to build a wide range of downstream tasks and application deployments.

- 45% speedup when fine-tuning OPT at low cost, in just a few lines of code. [Example] [Online Serving]

Please visit our documentation and examples for more details.

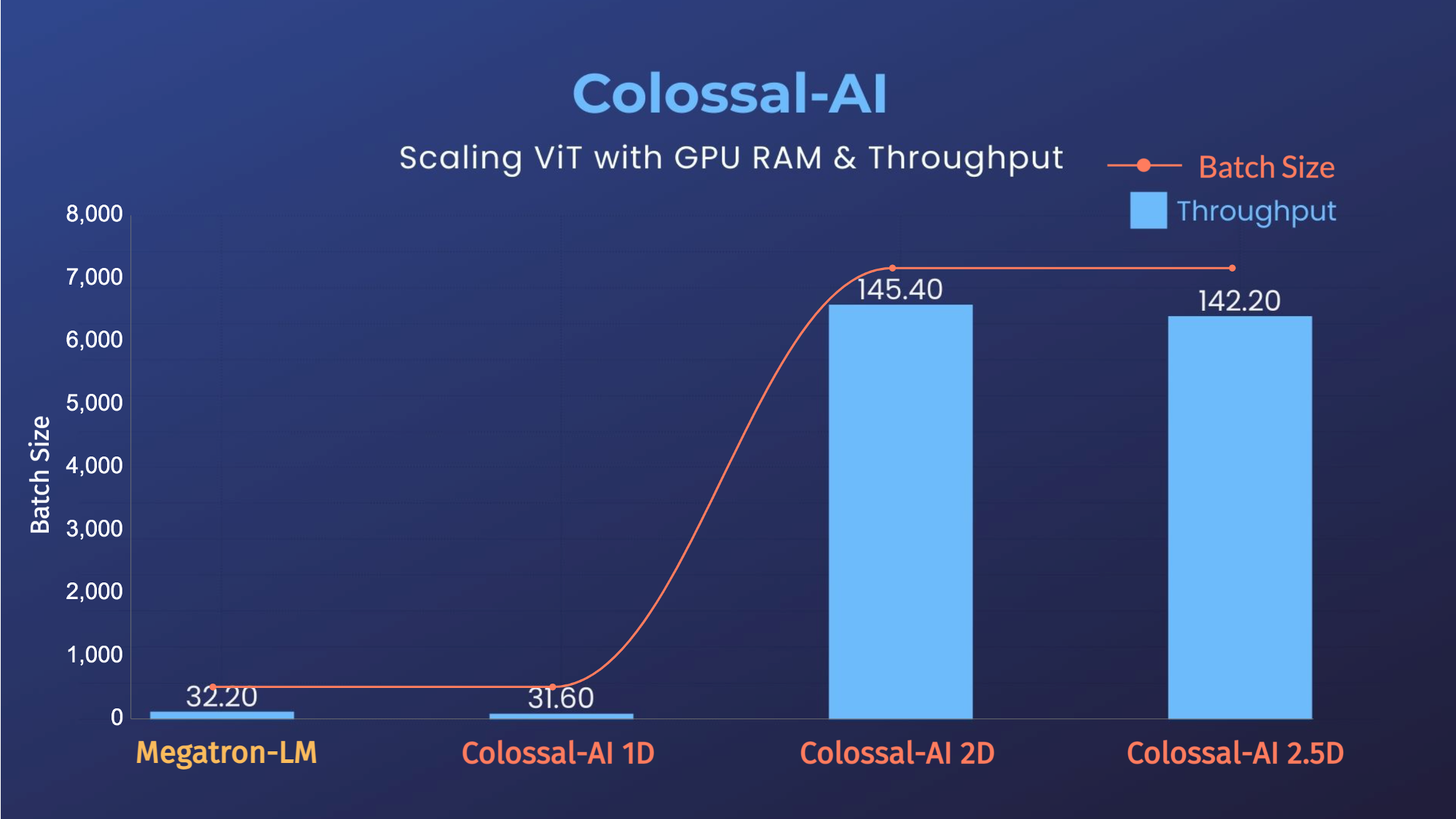

ViT

- 14x larger batch size, and 5x faster training for Tensor Parallelism = 64

Recommendation System Models

- Cached Embedding: uses a software cache to train larger embedding tables with a smaller GPU memory budget.
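The cached-embedding idea can be sketched as a bounded cache of embedding rows: the full table stays in (simulated) CPU memory, only a fixed number of rows live in (simulated) GPU memory, and rows are fetched on a miss. This is a simplified, hypothetical illustration that uses least-recently-used eviction; Colossal-AI's actual cache has its own data structures and its eviction policy may differ, and the class name `CachedEmbedding` is made up for this example.

```python
from collections import OrderedDict
from typing import Dict, List


class CachedEmbedding:
    """Toy (hypothetical) embedding table: a bounded 'GPU' cache in front of a full 'CPU' table."""

    def __init__(self, cpu_table: Dict[int, List[float]], gpu_slots: int):
        if gpu_slots < 1:
            raise ValueError("gpu_slots must be >= 1")
        self.cpu_table = cpu_table        # full table, assumed too large for the GPU
        self.gpu_slots = gpu_slots        # number of rows that fit in the GPU budget
        self.cache: "OrderedDict[int, List[float]]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def lookup(self, idx: int) -> List[float]:
        if idx in self.cache:
            self.hits += 1
            self.cache.move_to_end(idx)   # mark as most recently used
            return self.cache[idx]
        self.misses += 1
        row = list(self.cpu_table[idx])   # simulate a host-to-device copy
        if len(self.cache) >= self.gpu_slots:
            self.cache.popitem(last=False)  # evict the least recently used row
        self.cache[idx] = row
        return row

    def forward(self, indices: List[int]) -> List[List[float]]:
        return [self.lookup(i) for i in indices]


if __name__ == "__main__":
    table = {i: [float(i), float(i) * 0.5] for i in range(1000)}
    emb = CachedEmbedding(table, gpu_slots=3)
    emb.forward([1, 2, 1, 3, 4, 1])
    print(emb.hits, emb.misses, list(emb.cache))
```

Recommendation workloads tend to access a small set of popular IDs far more often than the rest, which is what makes a small cache effective.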

Single GPU Training Demo

GPT-2

- 20x larger model size on the same hardware

- 120x larger model size on the same hardware (RTX 3080)

PaLM

- 34x larger model size on the same hardware

Inference

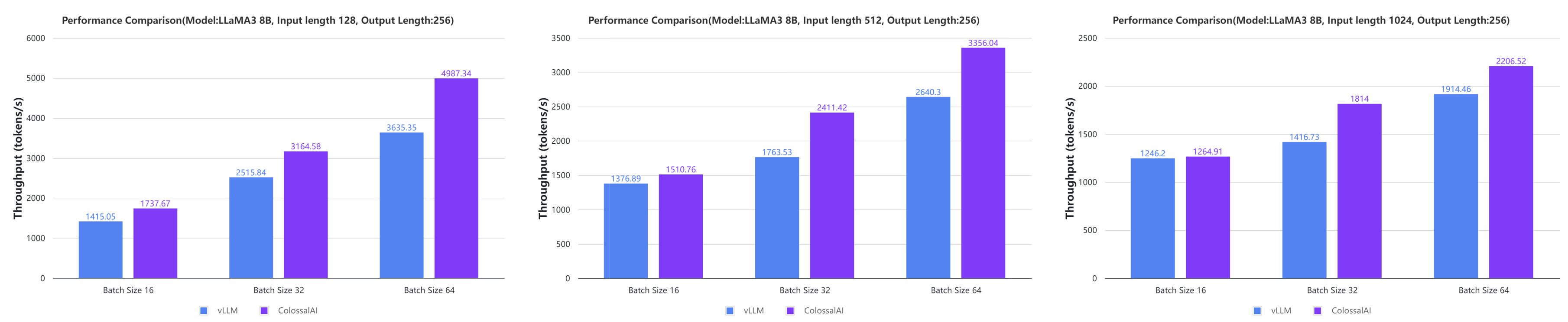

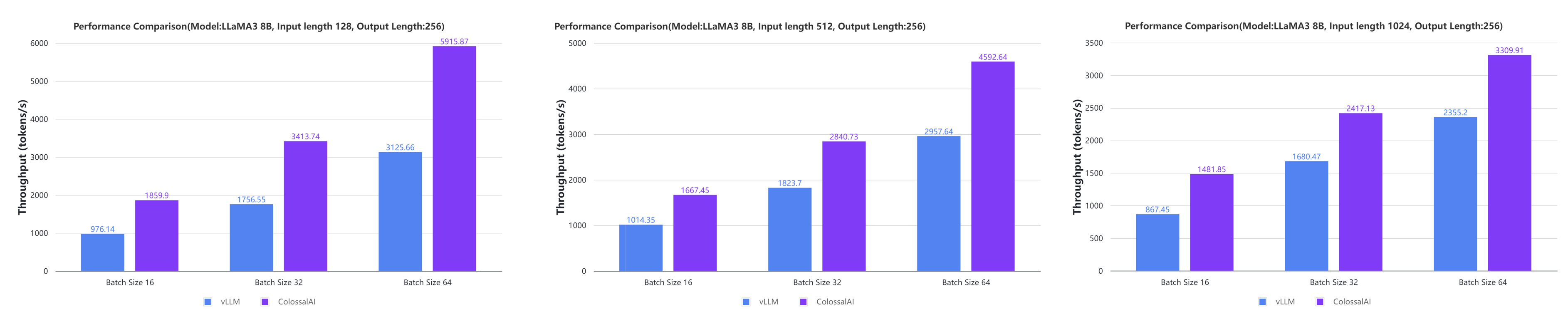

Colossal-Inference

- Large AI model inference speed doubled compared to the offline inference performance of vLLM in some cases. [code] [blog] [GPU Cloud Playground] [LLaMA3 Image]

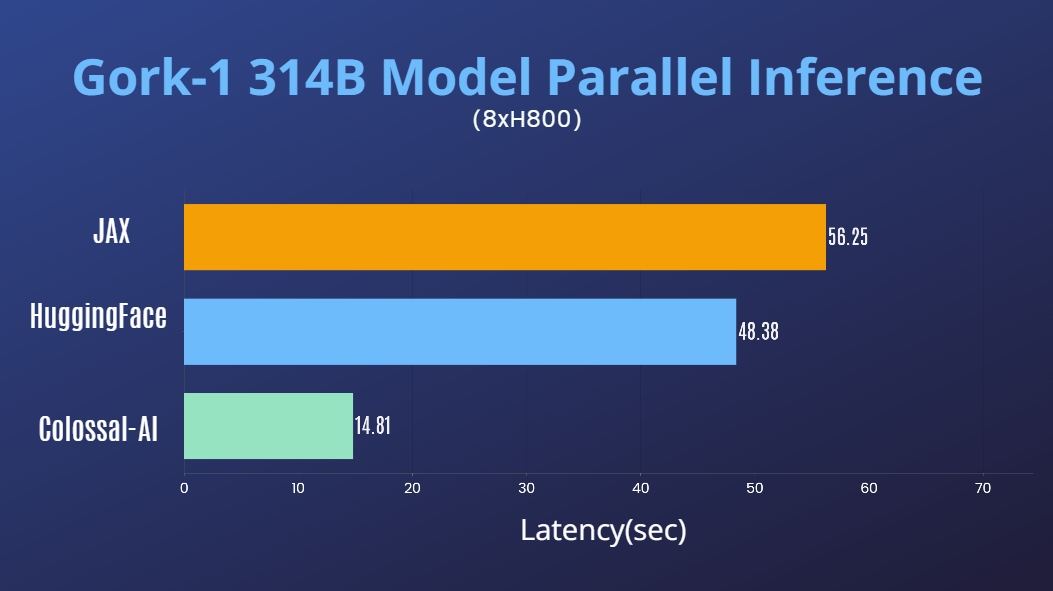

Grok-1

- 314-billion-parameter Grok-1 inference accelerated by 3.8x, with an easy-to-use Python + PyTorch + HuggingFace version for inference.

[code] [blog] [HuggingFace Grok-1 PyTorch model weights] [ModelScope Grok-1 PyTorch model weights]

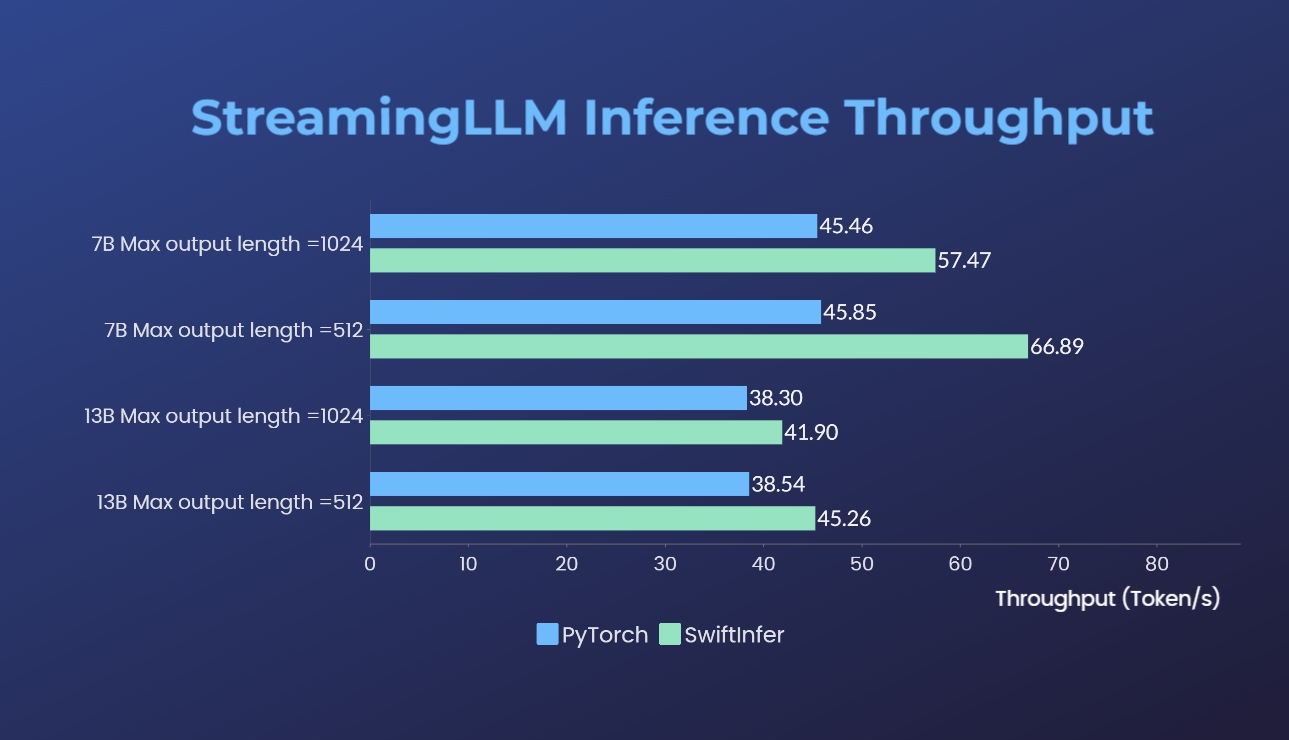

SwiftInfer

- SwiftInfer: Inference performance improved by 46%, open source solution breaks the length limit of LLM for multi-round conversations
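SwiftInfer builds on the StreamingLLM approach (an assumption worth checking against the SwiftInfer repository), which keeps a few initial "attention sink" tokens plus a sliding window of recent tokens in the KV cache so a conversation can continue past the model's context length. Under that assumption, the sketch below only computes which token positions such a cache would retain; it performs no attention, and the function name and parameters are hypothetical.

```python
from typing import List


def streaming_kv_positions(seq_len: int, num_sink: int, window: int) -> List[int]:
    """Return token positions kept in a StreamingLLM-style KV cache (hypothetical helper).

    The first `num_sink` positions (attention sinks) are always kept, plus the
    most recent `window` positions; everything in between is evicted, so the
    cache never holds more than num_sink + window entries.
    """
    if num_sink < 0 or window < 0:
        raise ValueError("num_sink and window must be non-negative")
    if seq_len <= num_sink + window:
        return list(range(seq_len))              # nothing to evict yet
    sinks = list(range(num_sink))
    recent = list(range(seq_len - window, seq_len))
    return sinks + recent


if __name__ == "__main__":
    print(streaming_kv_positions(seq_len=10, num_sink=2, window=4))
```

The cache size stays constant no matter how many rounds the conversation runs, which is what removes the length limit at the cost of forgetting the evicted middle tokens.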

Installation

Requirements:

- PyTorch >= 2.2

- Python >= 3.7

- CUDA >= 11.0

- NVIDIA GPU Compute Capability >= 7.0 (V100/RTX20 and higher)

- Linux OS
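The requirements can be checked before installing. The sketch below is a hypothetical helper (not part of Colossal-AI) that compares version strings against the listed minimums with plain numeric tuple comparison. The detected values are passed in as strings; in practice you could read them from `sys.version_info`, `torch.__version__`, `torch.version.cuda` and `torch.cuda.get_device_capability()`.

```python
import platform
import re
import sys
from typing import Dict, List, Tuple

# Minimum versions taken from the requirements list above.
MINIMUMS: Dict[str, Tuple[int, ...]] = {
    "python": (3, 7),
    "torch": (2, 2),
    "cuda": (11, 0),
    "compute_capability": (7, 0),
}


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '2.3.1+cu121' or '11.8' into a tuple of leading integers, e.g. (2, 3, 1)."""
    match = re.match(r"\d+(?:\.\d+)*", text)
    if match is None:
        raise ValueError(f"cannot parse version: {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def check_requirements(detected: Dict[str, str], os_name: str) -> List[str]:
    """Return human-readable problems (an empty list means every check passed)."""
    problems: List[str] = []
    if os_name != "Linux":
        problems.append(f"unsupported OS: {os_name} (Linux required)")
    for name, minimum in MINIMUMS.items():
        if name not in detected:
            problems.append(f"{name}: not detected")
        elif parse_version(detected[name]) < minimum:
            problems.append(f"{name}: {detected[name]} < {'.'.join(map(str, minimum))}")
    return problems


if __name__ == "__main__":
    detected = {
        "python": "{}.{}".format(*sys.version_info[:2]),
        "torch": "2.1.0+cu118",       # example values; read these from torch in practice
        "cuda": "11.8",
        "compute_capability": "8.0",
    }
    for problem in check_requirements(detected, platform.system()):
        print(problem)
```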

If you encounter any problems with installation, you may want to raise an issue in this repository.

Install from PyPI

You can easily install Colossal-AI with the following command. By default, we do not build PyTorch extensions during installation.

```bash
pip install colossalai
```

Note: only Linux is supported for now.

However, if you want to build the PyTorch extensions during installation, you can set BUILD_EXT=1.

```bash
BUILD_EXT=1 pip install colossalai
```

Otherwise, CUDA kernels will be built during runtime when you actually need them.
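Building kernels on demand follows a common lazy-initialisation pattern: the expensive compile step runs the first time a kernel is requested, and its result is cached for later calls. The sketch below illustrates only that pattern with a stand-in "build" function; `load_kernel`, `_compile` and the kernel names are hypothetical, and no real CUDA compilation happens here.

```python
import functools

BUILD_LOG = []  # records which (hypothetical) kernels were actually compiled


def _compile(name: str) -> str:
    """Stand-in for an expensive JIT build such as compiling a CUDA extension."""
    BUILD_LOG.append(name)
    return f"<compiled {name}>"


@functools.lru_cache(maxsize=None)
def load_kernel(name: str) -> str:
    """Build the named kernel on first use; later calls reuse the cached result."""
    return _compile(name)


if __name__ == "__main__":
    load_kernel("fused_adam")
    load_kernel("fused_adam")   # served from the cache, no rebuild
    load_kernel("layer_norm")
    print(BUILD_LOG)
```

The trade-off is a slower first call in exchange for a faster install; building at install time moves that cost up front.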

We also release a nightly version to PyPI every week, which gives you access to unreleased features and bug fixes from the main branch. You can install it with:

```bash
pip install colossalai-nightly
```

Download From Source

The version of Colossal-AI will be in line with the main branch of the repository. Feel free to raise an issue if you encounter any problems. :)

```shell
git clone https://github.com/hpcaitech/ColossalAI.git
cd ColossalAI

# install colossalai
pip install .
```

By default, we do not compile CUDA/C++ kernels; ColossalAI builds them at runtime. If you want to install and enable CUDA kernel fusion (required when using the fused optimizer):

```shell
BUILD_EXT=1 pip install .
```

For users with CUDA 10.2, you can still build ColossalAI from source. However, you need to manually download the cub library and copy it to the corresponding directory.

```bash
# clone the repository
git clone https://github.com/hpcaitech/ColossalAI.git
cd ColossalAI

# download the cub library
wget https://github.com/NVIDIA/cub/archive/refs/tags/1.8.0.zip
unzip 1.8.0.zip
cp -r cub-1.8.0/cub/ colossalai/kernel/cuda_native/csrc/kernels/include/

# install
BUILD_EXT=1 pip install .
```

Use Docker

Pull from DockerHub

You can directly pull the docker image from our DockerHub page. The image is automatically uploaded upon release.

Build On Your Own

Run the following command to build a Docker image from the provided Dockerfile.

Building Colossal-AI from scratch requires GPU support, so you need to set the NVIDIA Docker Runtime as the default runtime when running docker build. More details can be found here. We recommend installing Colossal-AI from our project page directly.

```bash
cd ColossalAI
docker build -t colossalai ./docker
```

Run the following command to start the docker container in interactive mode.

```bash
docker run -ti --gpus all --rm --ipc=host colossalai bash
```

Community

Join the Colossal-AI community on Forum, Slack, and WeChat(微信) to share your suggestions, feedback, and questions with our engineering team.

Contributing

Inspired by the successful efforts of BLOOM and Stable Diffusion, we welcome any and all developers and partners with computing power, datasets, or models to join and build the Colossal-AI community, working together toward the era of big AI models!

You may contact us or participate in the following ways:

1. Leave a Star ⭐ to show your support. Thanks!
2. Post an issue, or submit a PR on GitHub following the guideline in Contributing.
3. Send your official proposal to contact@hpcaitech.com.

Thanks so much to all of our amazing contributors!

CI/CD

We leverage the power of GitHub Actions to automate our development, release and deployment workflows. Please check out this documentation on how the automated workflows are operated.

Cite Us

This project is inspired by some related projects (some by our team and some by other organizations). We would like to credit these amazing projects as listed in the Reference List.

To cite this project, you can use the following BibTeX citation.

@inproceedings{10.1145/3605573.3605613,
  author = {Li, Shenggui and Liu, Hongxin and Bian, Zhengda and Fang, Jiarui and Huang, Haichen and Liu, Yuliang and Wang, Boxiang and You, Yang},
  title = {Colossal-AI: A Unified Deep Learning System For Large-Scale Parallel Training},
  year = {2023},
  isbn = {9798400708435},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  url = {https://doi.org/10.1145/3605573.3605613},
  doi = {10.1145/3605573.3605613},
  abstract = {The success of Transformer models has pushed the deep learning model scale to billions of parameters, but the memory limitation of a single GPU has led to an urgent need for training on multi-GPU clusters. However, the best practice for choosing the optimal parallel strategy is still lacking, as it requires domain expertise in both deep learning and parallel computing. The Colossal-AI system addressed the above challenge by introducing a unified interface to scale your sequential code of model training to distributed environments. It supports parallel training methods such as data, pipeline, tensor, and sequence parallelism and is integrated with heterogeneous training and zero redundancy optimizer. Compared to the baseline system, Colossal-AI can achieve up to 2.76 times training speedup on large-scale models.},
  booktitle = {Proceedings of the 52nd International Conference on Parallel Processing},
  pages = {766–775},
  numpages = {10},
  keywords = {datasets, gaze detection, text tagging, neural networks},
  location = {Salt Lake City, UT, USA},
  series = {ICPP '23}
}

Colossal-AI has been accepted as an official tutorial at top conferences and events, including NeurIPS, SC, AAAI, PPoPP, CVPR, ISC, NVIDIA GTC, etc.

Owner

- Name: HPC-AI Tech

- Login: hpcaitech

- Kind: organization

- Email: contact@hpcaitech.com

- Website: https://www.hpc-ai.tech/

- Repositories: 14

- Profile: https://github.com/hpcaitech

We are a global team to help you train and deploy your AI models

Committers

Last synced: about 1 year ago

Top Committers

| Name | Email (masked) | Commits |
|---|---|---|
| Frank Lee | s****9@g****m | 454 |
| ver217 | l****7@g****m | 426 |
| Jiarui Fang | f****3@g****m | 335 |
| YuliangLiu0306 | 7****6 | 183 |
| oahzxl | x****o@g****m | 157 |
| HELSON | c****8@g****m | 137 |
| binmakeswell | b****l@g****m | 133 |
| flybird11111 | 1****2@q****m | 108 |
| github-actions[bot] | 4****] | 89 |
| wangbluo | 2****5@q****m | 71 |
| hxwang | w****0@e****g | 68 |
| digger yu | d****u@o****m | 67 |
| Jianghai | 7****1 | 61 |
| Boyuan Yao | 7****0 | 58 |
| Ziyue Jiang | z****7@g****m | 55 |
| YeAnbang | a****2@o****m | 54 |
| Yuanheng Zhao | 5****o | 52 |
| Baizhou Zhang | e****g@p****n | 50 |
| Super Daniel | 7****u | 49 |
| yuehuayingxueluo | 8****9@q****m | 46 |
| FoolPlayer | 4****r | 46 |
| アマデウス | k****g | 45 |
| LuGY | 7****u | 37 |
| Fazzie-Maqianli | 5****y | 36 |
| Haze188 | h****8@q****m | 32 |
| Edenzzzz | w****n@w****u | 32 |
| GuangyaoZhang | x****1@q****m | 31 |
| Maruyama_Aya | c****1@1****m | 29 |
| Tong Li | t****8@g****m | 29 |
| pre-commit-ci[bot] | 6****] | 26 |
| and 166 more... | | |


Issues and Pull Requests

Last synced: 9 months ago

All Time

- Total issues: 85

- Total pull requests: 364

- Average time to close issues: 2 months

- Average time to close pull requests: 10 days

- Total issue authors: 70

- Total pull request authors: 38

- Average comments per issue: 1.73

- Average comments per pull request: 0.4

- Merged pull requests: 235

- Bot issues: 0

- Bot pull requests: 3

Past Year

- Issues: 62

- Pull requests: 269

- Average time to close issues: 18 days

- Average time to close pull requests: 8 days

- Issue authors: 52

- Pull request authors: 22

- Average comments per issue: 0.79

- Average comments per pull request: 0.33

- Merged pull requests: 164

- Bot issues: 0

- Bot pull requests: 3

Top Authors

Issue Authors

- ericxsun (16)

- GuangyaoZhang (13)

- wangbluo (8)

- CjhHa1 (6)

- BurkeHulk (6)

- insujang (5)

- wxthu (5)

- SeekPoint (5)

- ver217 (5)

- flybird11111 (5)

- happynaruto (4)

- duanjunwen (4)

- Edenzzzz (4)

- hiprince (3)

- 447428054 (3)

Pull Request Authors

- ver217 (171)

- flybird11111 (125)

- wangbluo (81)

- YeAnbang (69)

- TongLi3701 (60)

- yuanheng-zhao (47)

- FrankLeeeee (45)

- duanjunwen (44)

- Edenzzzz (43)

- botbw (38)

- yuehuayingxueluo (36)

- binmakeswell (28)

- Hz188 (27)

- Courtesy-Xs (26)

- CjhHa1 (22)


Packages

- Total packages: 5

- Total downloads:

  - pypi: 24,735 last month

- Total docker downloads: 1,431,248

- Total dependent packages: 9 (may contain duplicates)

- Total dependent repositories: 63 (may contain duplicates)

- Total versions: 263

- Total maintainers: 2

pypi.org: colossalai

An integrated large-scale model training system with efficient parallelization techniques

- Homepage: https://www.colossalai.org

- Documentation: http://colossalai.readthedocs.io

- License: Apache Software License 2.0

- Latest release: 0.5.0 (published 12 months ago)

Maintainers (1)

proxy.golang.org: github.com/hpcaitech/colossalai

- Documentation: https://pkg.go.dev/github.com/hpcaitech/colossalai#section-documentation

- License: apache-2.0

- Latest release: v0.5.1 (published 12 months ago)

proxy.golang.org: github.com/hpcaitech/ColossalAI

- Documentation: https://pkg.go.dev/github.com/hpcaitech/ColossalAI#section-documentation

- License: apache-2.0

- Latest release: v0.5.1 (published 12 months ago)

pypi.org: colossalai-nightly

An integrated large-scale model training system with efficient parallelization techniques

- Homepage: https://www.colossalai.org

- Documentation: http://colossalai.readthedocs.io

- License: Apache Software License 2.0

- Latest release: 2025.7.12 (published 11 months ago)

Maintainers (1)

pypi.org: custom-colossalai

An integrated large-scale model training system with efficient parallelization techniques

- Homepage: https://www.colossalai.org

- Documentation: http://colossalai.readthedocs.io

- License: Apache Software License 2.0

- Latest release: 0.4.5 (published over 1 year ago)

Maintainers (1)

Dependencies

- actions/stale v3 composite

- actions/github-script v6 composite

- irongut/CodeCoverageSummary v1.3.0 composite

- actions/checkout v2 composite

- peter-evans/create-pull-request v3 composite

- usthe/issues-translate-action v2.7 composite

- hpcaitech/cuda-conda 11.3 build

- hpcaitech/pytorch-cuda 1.12.0-11.3.0 build

- albumentations ==1.3.0

- colossalai *

- datasets *

- einops ==0.3.0

- gradio ==3.11

- imageio ==2.9.0

- imageio-ffmpeg ==0.4.2

- omegaconf ==2.1.1

- open-clip-torch ==2.7.0

- opencv-python ==4.6.0

- prefetch_generator *

- pudb ==2019.2

- streamlit >=0.73.1

- test-tube >=0.7.5

- torchmetrics ==0.6

- transformers ==4.19.2

- webdataset ==0.2.5

- numpy *

- torch *

- tqdm *

- accelerate *

- colossalai *

- diffusers >==0.5.0

- ftfy *

- modelcards *

- tensorboard *

- torchvision *

- transformers >=4.21.0

- colossalai >=0.1.12

- numpy >=1.24.1

- timm >=0.6.12

- titans >=0.0.7

- torch >=1.8.1

- tqdm >=4.61.2

- transformers >=4.25.1

- PuLP >=2.7.0

- colossalai >=0.1.12

- torch >=1.8.1

- transformers >=4.231

- colossalai >=0.1.12

- torch >=1.8.1

- colossalai >=0.1.12

- torch >=1.8.1

- colossalai *

- transformers >=4.23

- colossalai ==0.2.0

- titans ==0.0.7

- torch ==1.12.1

- colossalai >=0.1.12

- torch >=1.8.1

- colossalai >=0.1.12

- torch >=1.8.1

- colossalai *

- datasets *

- matplotlib *

- pulp *

- titans *

- torch *

- transformers *

- numpy *

- torch *

- tqdm *

- colossalai *

- titans *

- torch *

- colossalai *

- titans *

- torch *

- colossalai *

- fastapi ==0.85.1

- locust ==2.11.0

- pydantic ==1.10.2

- sanic ==22.9.0

- sanic_ext ==22.9.0

- torch >=1.10.0

- transformers ==4.23.1

- uvicorn ==0.19.0

- accelerate ==0.13.2

- colossalai *

- datasets >=1.8.0

- protobuf *

- sentencepiece *

- torch >=1.8.1

- colossalai >=0.1.12

- torch >=1.8.1

- colossalai *

- torch *

- contexttimer * test

- einops * test

- fbgemm-gpu ==0.2.0 test

- flash_attn c422fee3776eb3ea24e011ef641fd5fbeb212623 test

- pytest * test

- pytest-cov * test

- timm * test

- titans * test

- torchaudio * test

- torchrec ==0.2.0 test

- torchvision * test

- transformers * test

- triton ==2.0.0.dev20221011 test

- click *

- contexttimer *

- fabric *

- ninja *

- numpy *

- packaging *

- pre-commit *

- psutil *

- rich *

- tqdm *

- actions/checkout v2 composite

- actions/upload-artifact v3 composite

- tj-actions/changed-files v35 composite

- actions/checkout v2 composite

- actions/checkout v2 composite

- actions/checkout v3 composite

- actions/checkout v2 composite

- actions/checkout v3 composite

- actions/checkout v2 composite

- actions/checkout v3 composite

- actions/checkout v2 composite

- actions/checkout v2 composite

- actions/setup-python v2 composite

- actions/checkout v3 composite

- actions/checkout v2 composite

- tj-actions/changed-files v35 composite

- actions/checkout v2 composite

- actions/checkout v3 composite

- actions/checkout v2 composite

- actions/create-release v1 composite

- actions/setup-python v2 composite

- actions/checkout v3 composite

- actions/checkout v3 composite

- tj-actions/changed-files v35 composite

- actions/checkout v3 composite

- actions/cache v3 composite

- actions/checkout v2 composite

- actions/setup-python v3 composite

- peter-evans/create-pull-request v4 composite

- peter-evans/enable-pull-request-automerge v2 composite

- tj-actions/changed-files v35 composite

- actions/checkout v2 composite

- actions/setup-python v2 composite

- docker/login-action f054a8b539a109f9f41c372932f1ae047eff08c9 composite

- actions/checkout v2 composite

- actions/setup-python v2 composite

- pypa/gh-action-pypi-publish release/v1 composite

- actions/checkout v2 composite

- actions/setup-python v2 composite

- pypa/gh-action-pypi-publish release/v1 composite

- actions/checkout v2 composite

- actions/setup-python v2 composite

- pypa/gh-action-pypi-publish release/v1 composite

- actions/checkout v2 composite

- actions/setup-python v2 composite

- actions/checkout v2 composite

- actions/checkout v2 composite

- ray *

- colossalai >=0.3.1

- pandas >=1.4.1

- sentencepiece *

- accelerate *

- bitsandbytes *

- fastapi *

- jieba *

- locust *

- numpy *

- pydantic *

- safetensors *

- slowapi *

- sse_starlette *

- torch *

- uvicorn *

- colossalai >=0.3.1 test

- pytest * test

- colossalai >=0.3.1

- datasets *

- fastapi *

- gpustat *

- langchain *

- loralib *

- sentencepiece *

- sse_starlette *

- tensorboard *

- tokenizers *

- torch <2.0.0,>=1.12.1

- tqdm *

- transformers >=4.20.1

- wandb *

- colossalai * test

- packaging * test

- psutil * test

- pytest * test

- tensornvme * test

- torch * test

- transformers * test

- colossalai >=0.1.12

- h5py *

- numpy *

- tensorboard *

- torch >=1.8.1

- tqdm *

- wandb *

- colossalai *

- pytest *

- torch *

- torchvision *

- tqdm *

- colossalai *

- datasets *

- evaluate *

- ptflops *

- scikit-learn *

- scipy *

- torch *

- tqdm *

- transformers *

- colossalai >=0.1.12

- torch >=1.8.1

- SentencePiece ==0.1.99

- colossalai >=0.3.2

- datasets *

- flash-attn >=2.0.0,<=2.0.5

- numpy *

- tensorboard ==2.14.0

- torch >=1.12.0,<=2.0.0

- tqdm *

- transformers *

- colossalai *

- torch *

- torchvision *

- tqdm *

- colossalai *

- timm *

- torch *

- torchvision *

- tqdm *

- colossalai *

- datasets *

- scikit-learn *

- scipy *

- torch *

- tqdm *

- transformers *

- actions/checkout v2 composite

- hpcaitech/pytorch-cuda 1.13.0-11.6.0 build

- autoflake ==2.2.1

- black ==23.9.1

- colossalai ==0.3.2

- datasets *

- flash-attn >=2.0.0,<=2.0.5

- ninja ==1.11.1

- packaging ==23.1

- protobuf <=3.20.0

- sentencepiece ==0.1.99

- six ==1.16.0

- tensorboard ==2.14.0

- torch <2.0.0,>=1.12.1

- tqdm *

- transformers *

- colossalai >=0.3.4

- fuzzywuzzy *

- jieba *

- matplotlib *

- openai *

- pandas *

- peft *

- rouge *

- scikit-learn *

- seaborn *

- tabulate *

- transformers >=4.32.0

- fastapi ==0.99.1

- pydantic ==1.10.13

- uvicorn >=0.24.0

- Requests ==2.31.0

- chromadb ==0.4.9

- datasets ==2.13.0

- gpustat ==1.1.1

- gradio ==3.44.4

- jq ==1.6.0

- langchain ==0.0.330

- langchain-experimental ==0.0.37

- modelscope ==1.9.0

- openai ==0.28.0

- pypdf ==3.16.0

- pytest ==7.4.2

- sentence-transformers ==2.2.2

- sentencepiece ==0.1.99

- sqlalchemy ==2.0.20

- tiktoken ==0.5.1

- tokenizers ==0.13.3

- torch <2.0.0,>=1.12.1

- tqdm ==4.66.1

- transformers >=4.20.1

- unstructured ==0.10.14

- colossalai >=0.3.3

- datasets *

- sentencepiece *

- torch >=1.8.1

- transformers >=4.20.0,<=4.34.0

- auto-gptq ==0.5.0

- transformers ==4.34.0

- cudatoolkit 11.3.*

- numpy 1.23.1.*

- pip 20.3.*

- python 3.9.12.*

- pytorch 1.12.1.*

- torchvision 0.13.1.*

- colossalai >=0.3.3

- datasets *

- sentencepiece *

- torch >=1.8.1

- transformers ==4.36.0